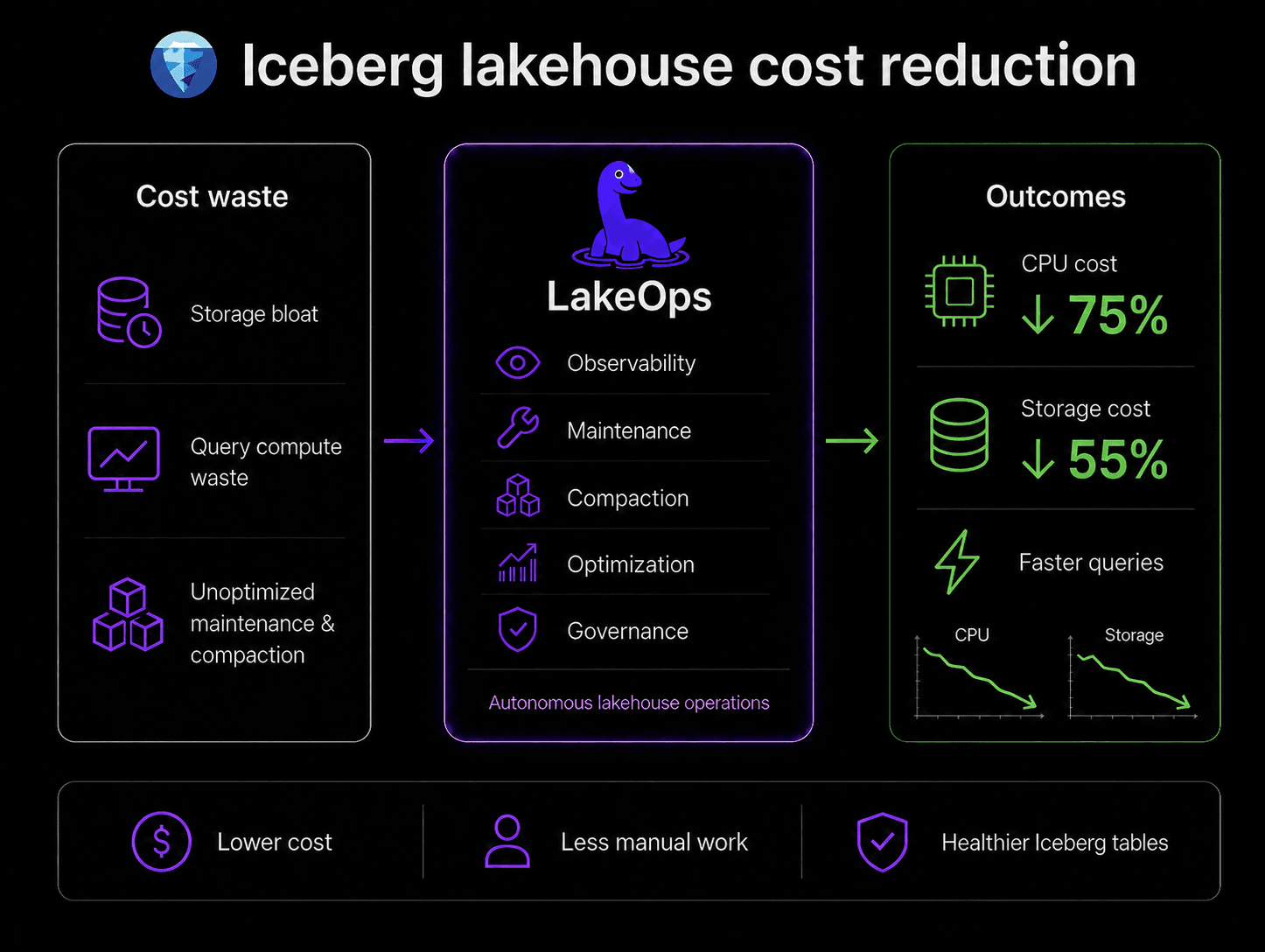

Apache Iceberg gives you a production-grade table format. It does not give you a production-grade operations layer. If you have adopted Iceberg and your tables are healthy, your queries are fast, and your storage bill is lean — you are in a small minority. Most teams discover that the gap between having Iceberg tables and having well-maintained Iceberg tables is where most of the cost and pain lives.

This article is a practical walkthrough of how LakeOps optimizes an Iceberg lakehouse end to end — from connecting your catalogs through diagnosing problems, fixing them, and keeping them fixed autonomously. Every capability described here is in production today. Every number comes from real deployments.

Step 1: Connect your catalogs and storage

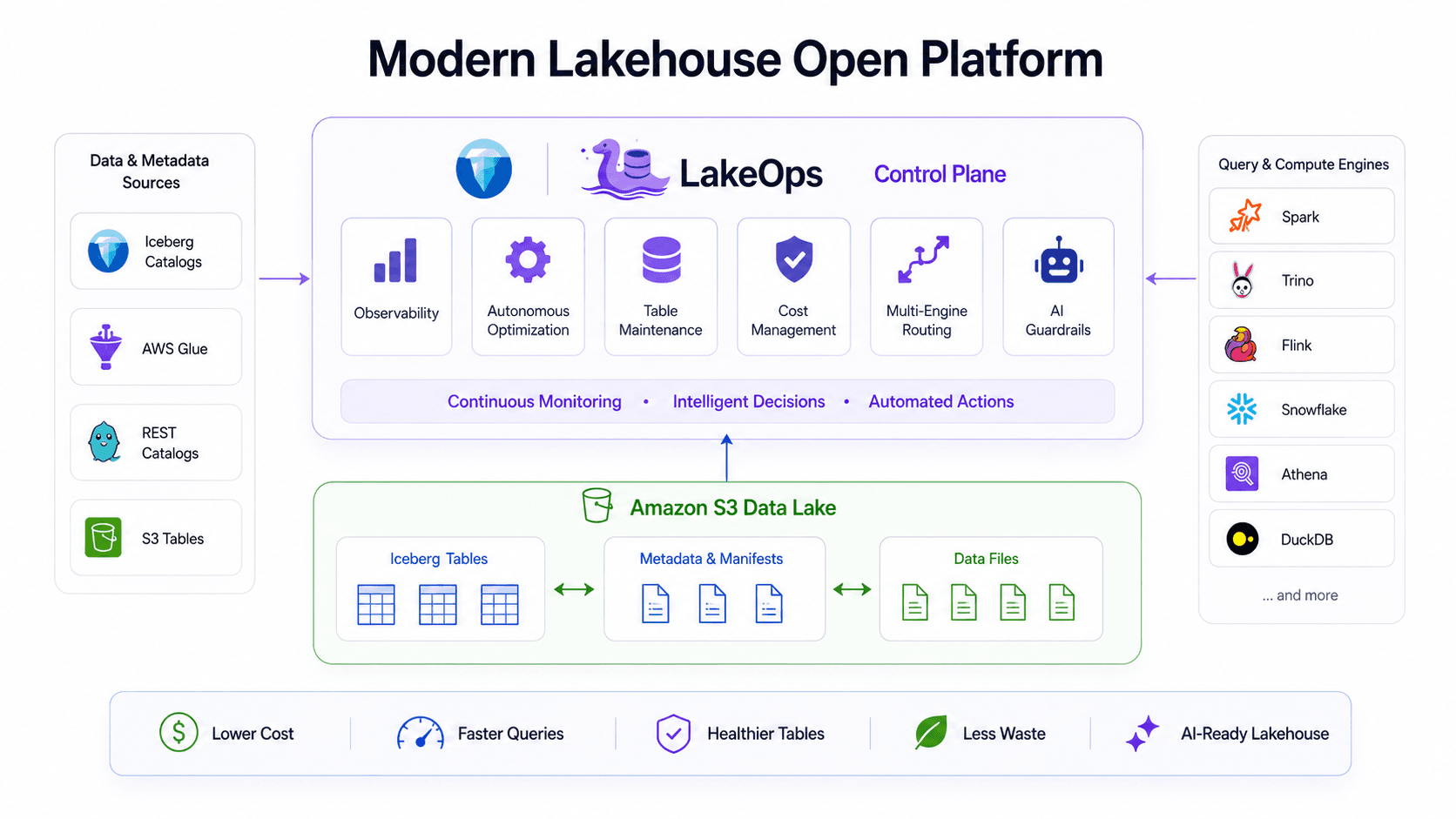

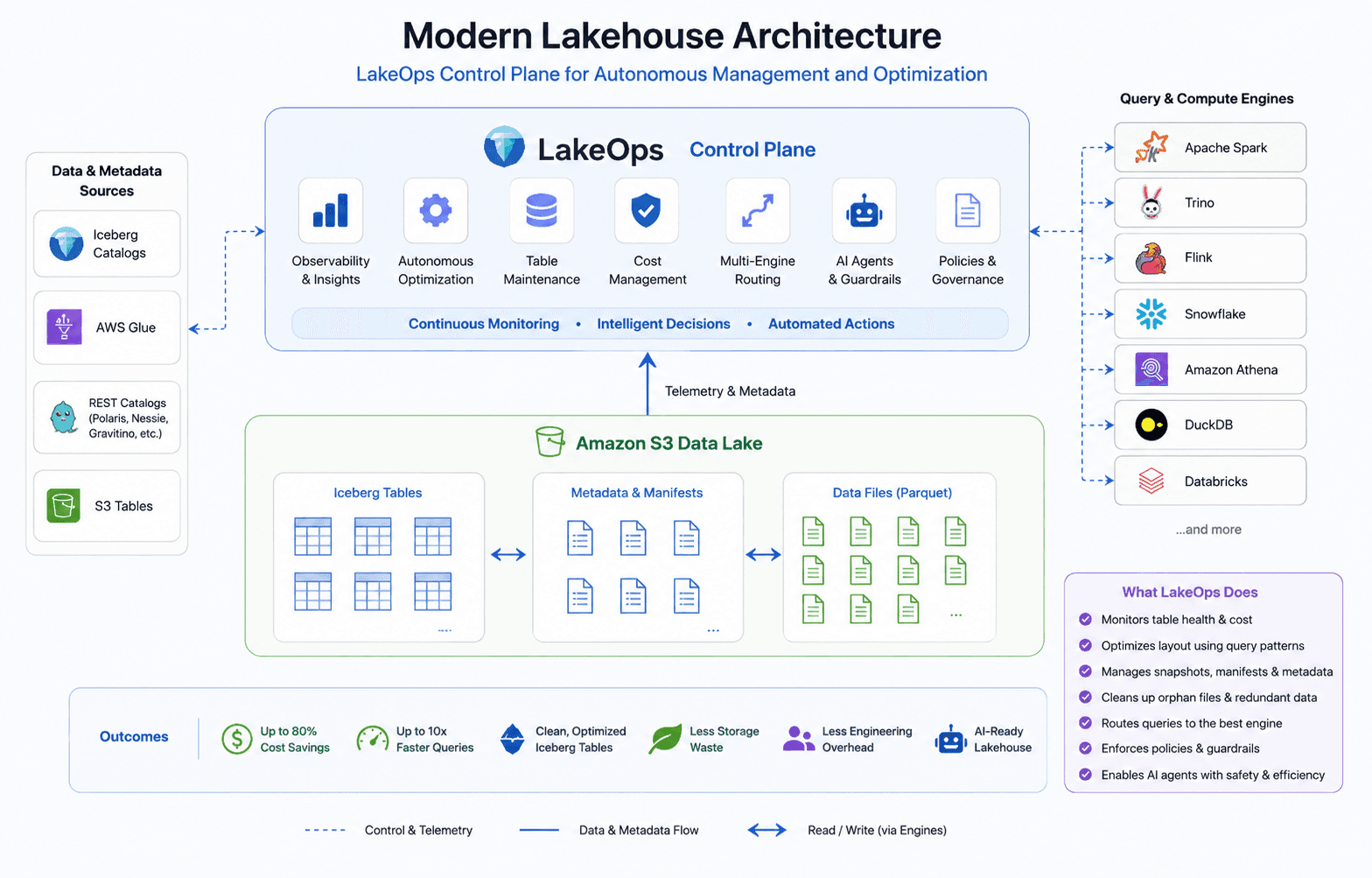

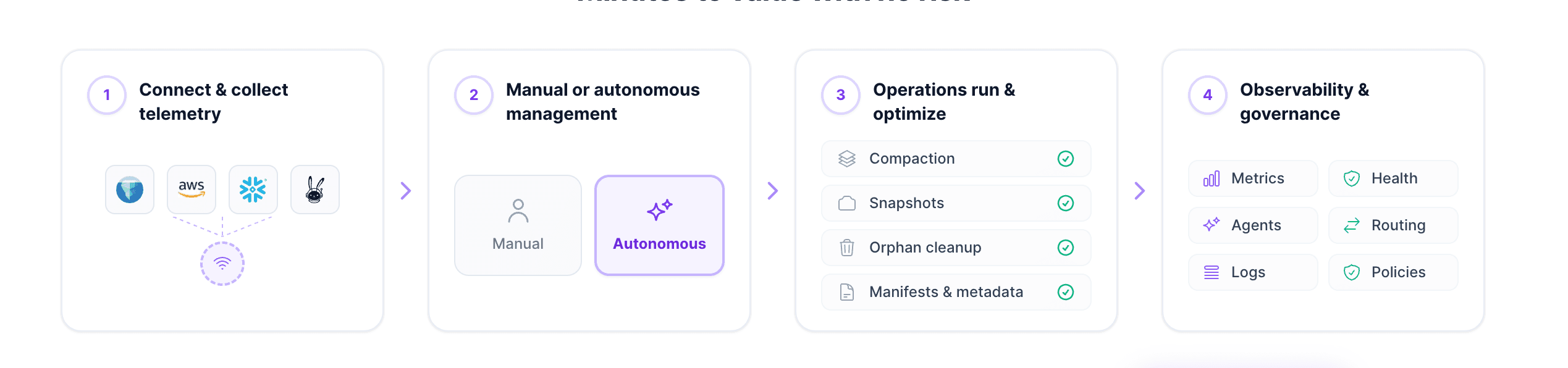

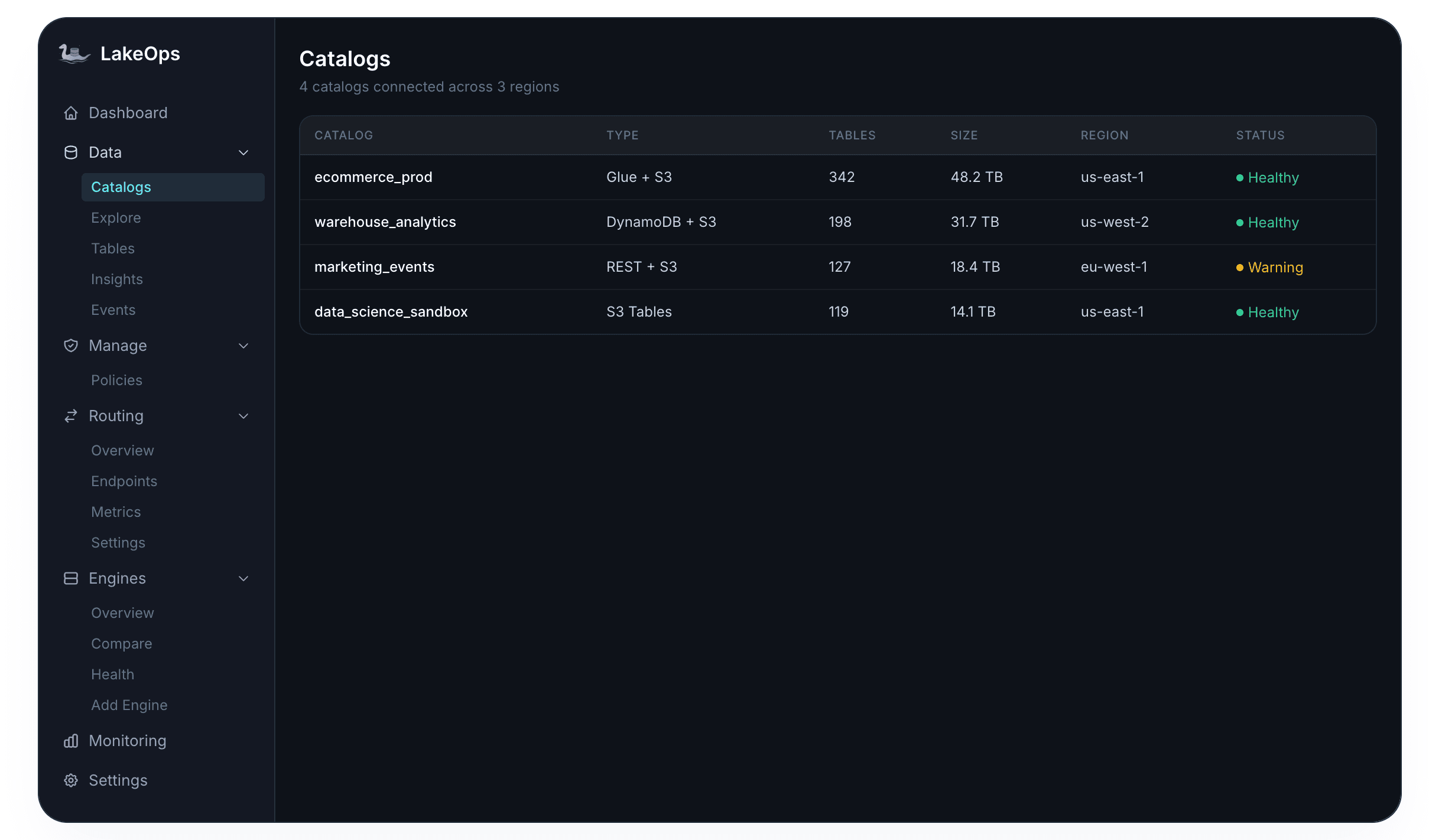

LakeOps connects to the catalogs and object storage you already run. The setup takes roughly ten minutes and requires no agents, no data movement, and no pipeline changes. Supported catalog types include AWS Glue, DynamoDB-backed catalogs, REST catalogs (Polaris, Gravitino, Nessie, Lakekeeper), and S3 Tables. Your data stays in your account — LakeOps reads metadata and telemetry, nothing else.

After connecting, the platform discovers every table across your catalogs, reads their Iceberg metadata (snapshots, manifests, file structure, partition layout), and begins collecting telemetry from your query engines — Trino, Spark, Snowflake, Athena, DuckDB, Flink, and others.

Step 2: Diagnose table health across the lake

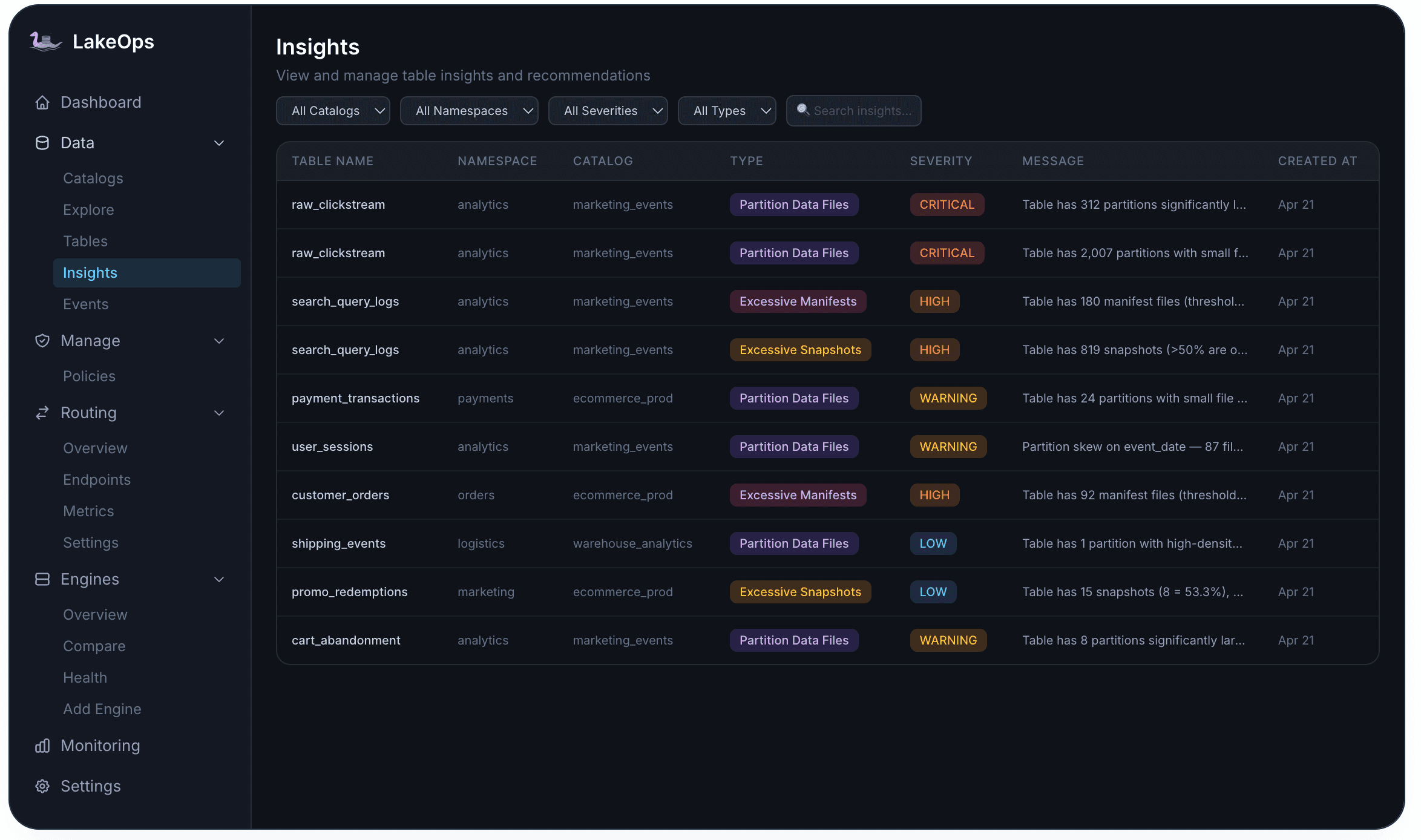

Once catalogs are connected, LakeOps classifies every table into three health tiers — Critical, Warning, or Healthy — based on structural signals: file count relative to target, manifest fragmentation, snapshot depth, orphan file volume, sort-order staleness, and query latency trends. This classification happens automatically and continuously.

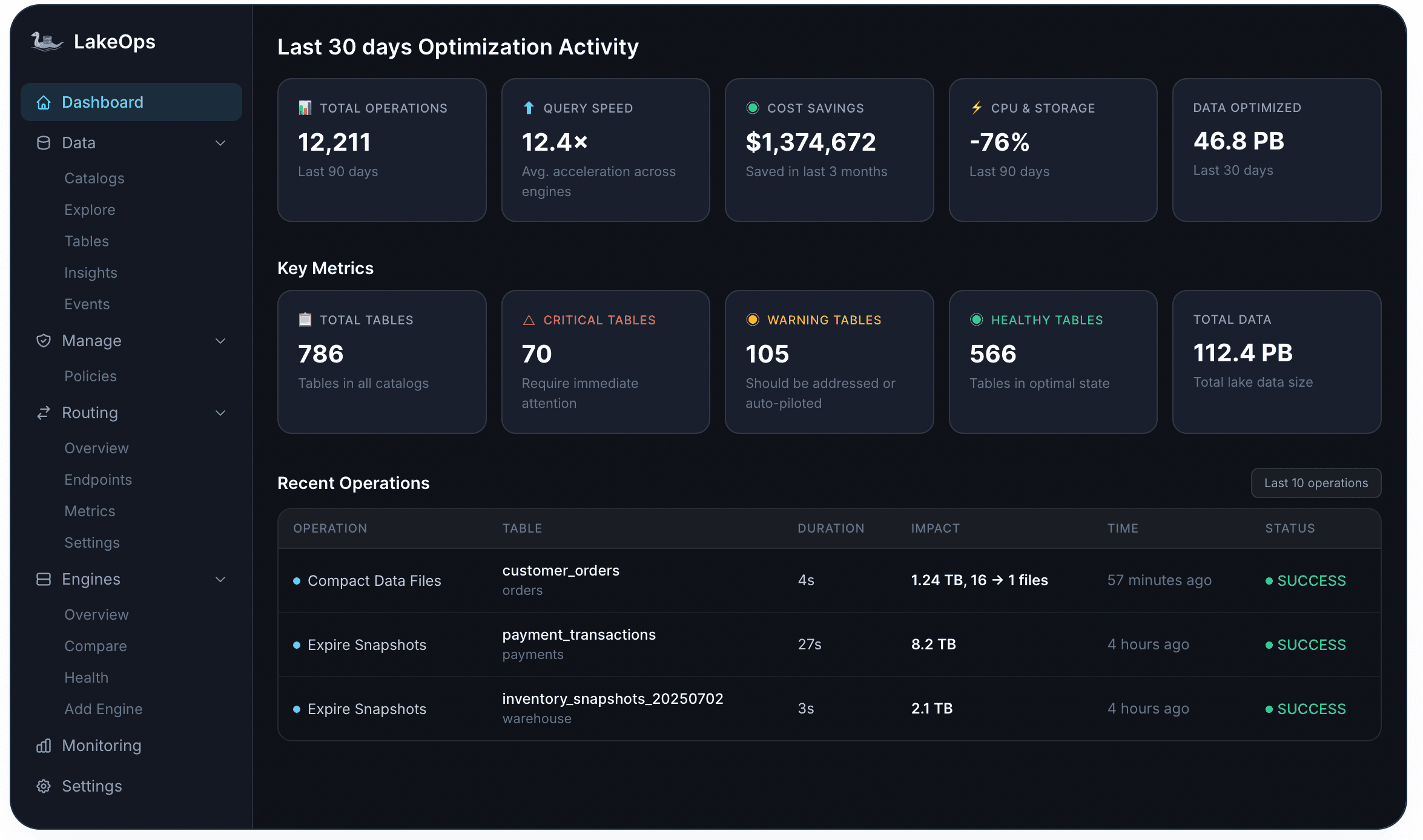

The executive dashboard shows the aggregate picture: total tables under management, how many need immediate attention, optimization activity over the last 30 days, and cumulative impact metrics. One production deployment showed 12,211 total operations, 12.4x average query acceleration, $1,374,672 in cost savings, and -76% CPU and storage reduction across 786 tables.

Drilling deeper, the Insights engine surfaces specific problems at four severity levels before users notice them. CRITICAL alerts flag severe fragmentation — tables with hundreds of partitions exceeding file-count thresholds. HIGH alerts catch excessive manifest counts (e.g., 92 manifests where 50 is the threshold) or runaway snapshot accumulation. WARNING alerts flag partition skew and emerging small-file patterns. LOW alerts note early-stage issues that will compound if left unaddressed.

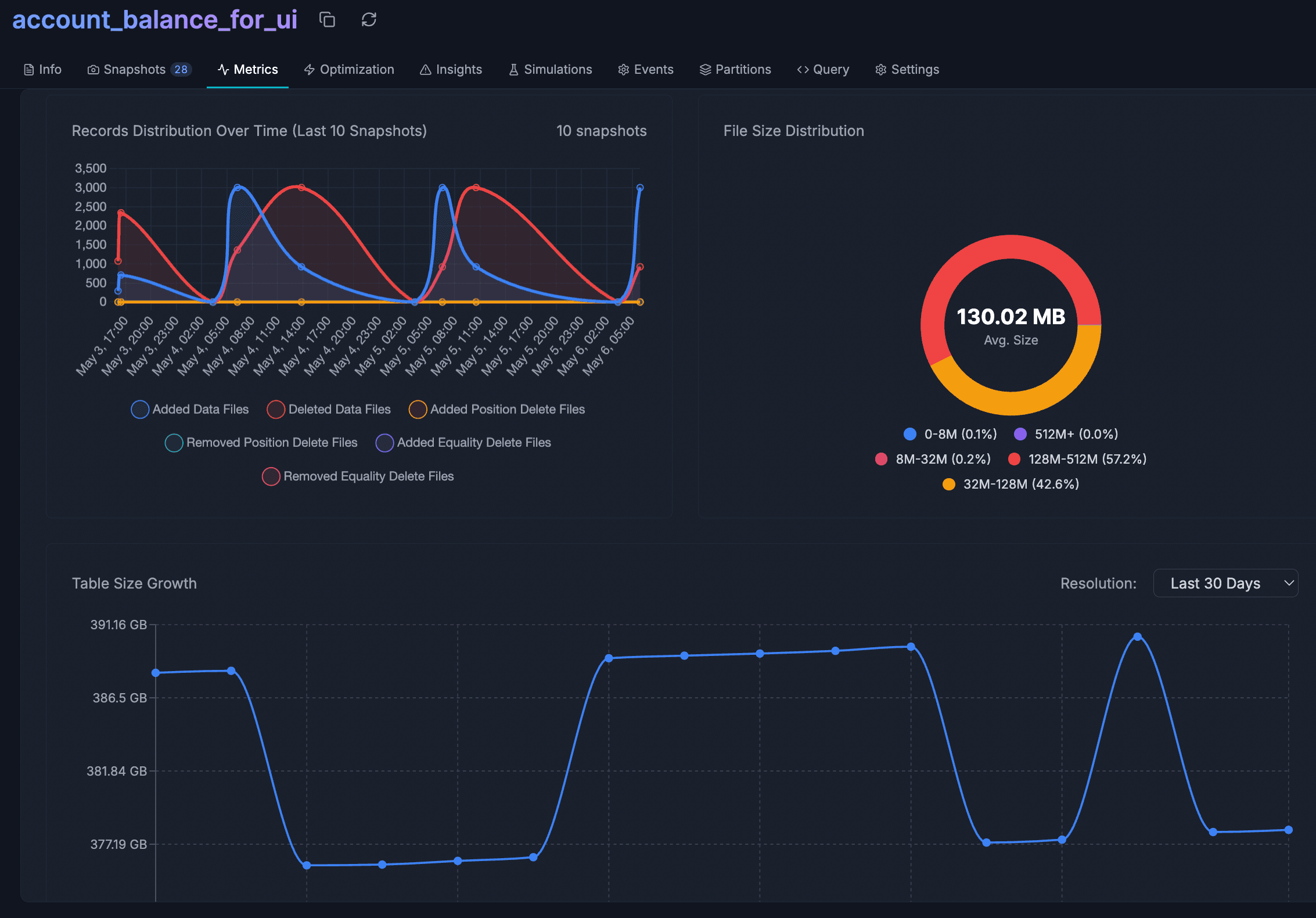

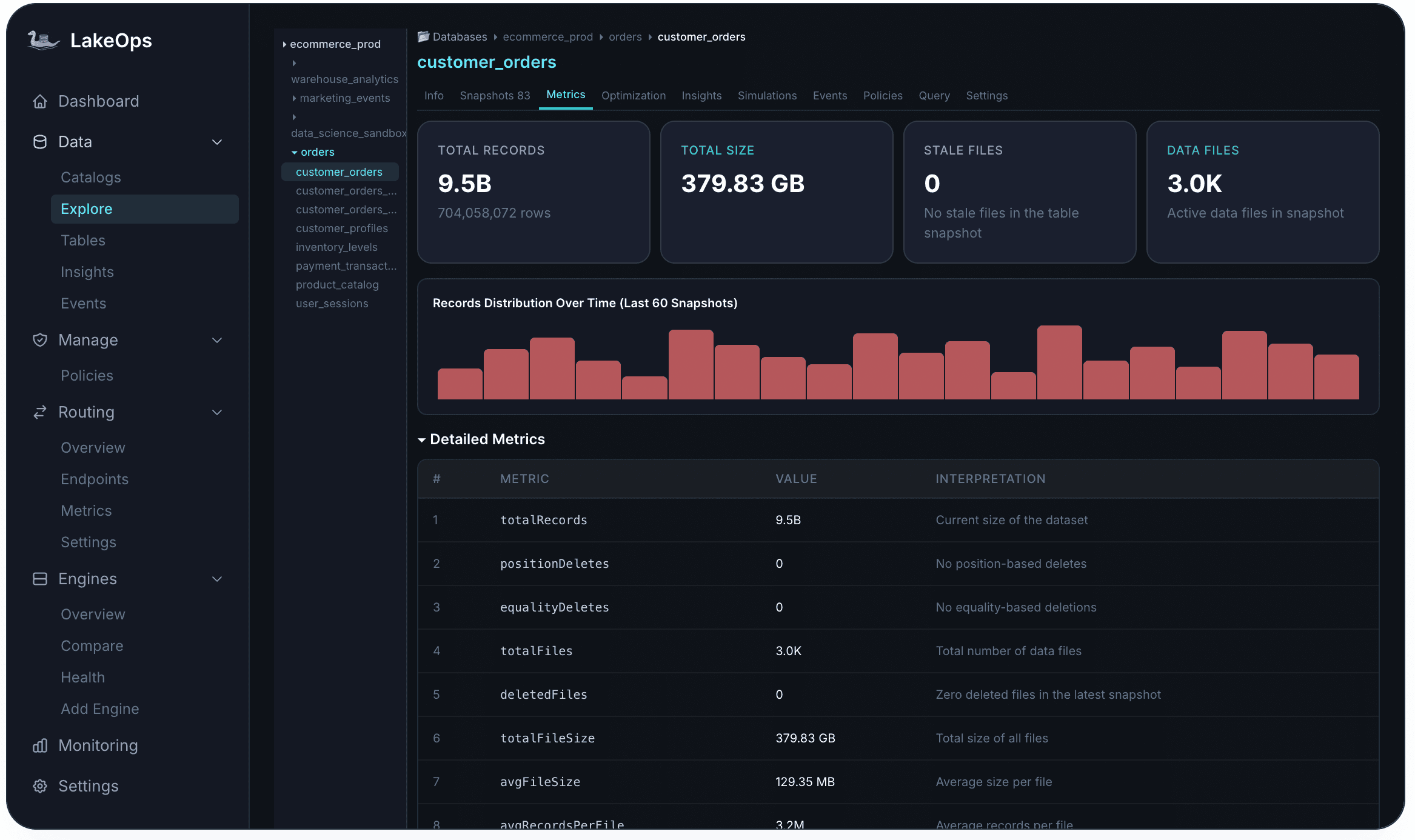

For any individual table, the Metrics tab exposes the structural detail: total records, total size, active data files, average file size versus the optimal 128–512 MB range, records distribution over the last 60 snapshots, and delete file counts. These metrics are the inputs that drive every optimization decision downstream.

Step 3: Compact and optimize file layout

Compaction is the single highest-impact optimization in an Iceberg lakehouse. Small files — the inevitable output of streaming pipelines, high-frequency appends, and micro-batch writes — are the root cause of most performance and cost problems. Each small file means an additional S3 GET request, an additional metadata entry for the planner, and less opportunity for columnar predicate pushdown. For a deep dive into why this matters, see Efficient Lakehouse Compaction at Scale.

LakeOps goes beyond simple file merging. The compaction engine observes actual query patterns — which columns appear in WHERE clauses, which partitions are accessed most frequently, which tables serve latency-sensitive workloads — and uses that telemetry to determine how data should be physically organized. When queries filter on `event_date` and `region`, the data is sorted on those columns so engines can skip entire row groups using min/max statistics. Every query against these tables — across Trino, Spark, Snowflake, Athena, DuckDB — runs faster and consumes less compute afterward.

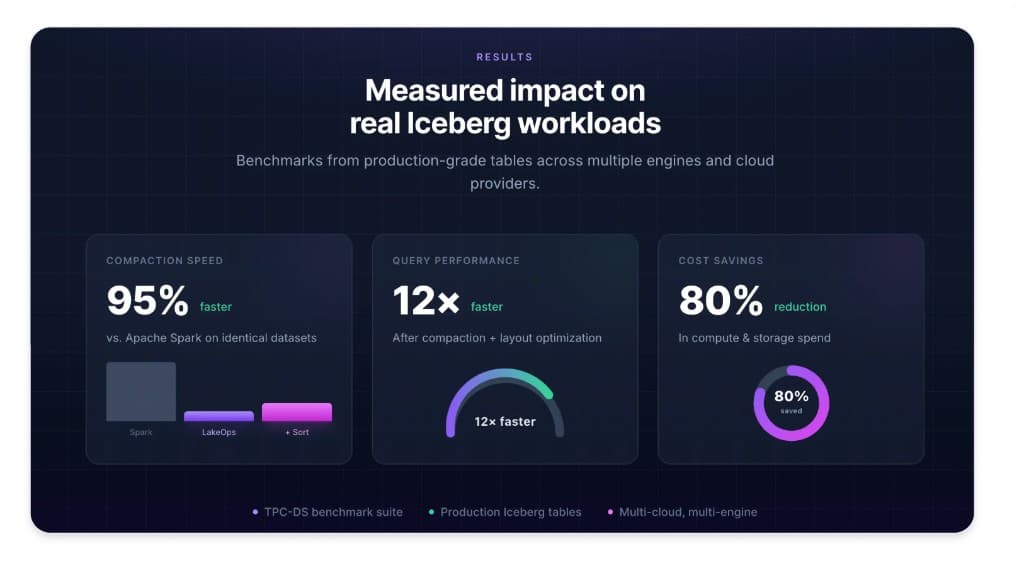

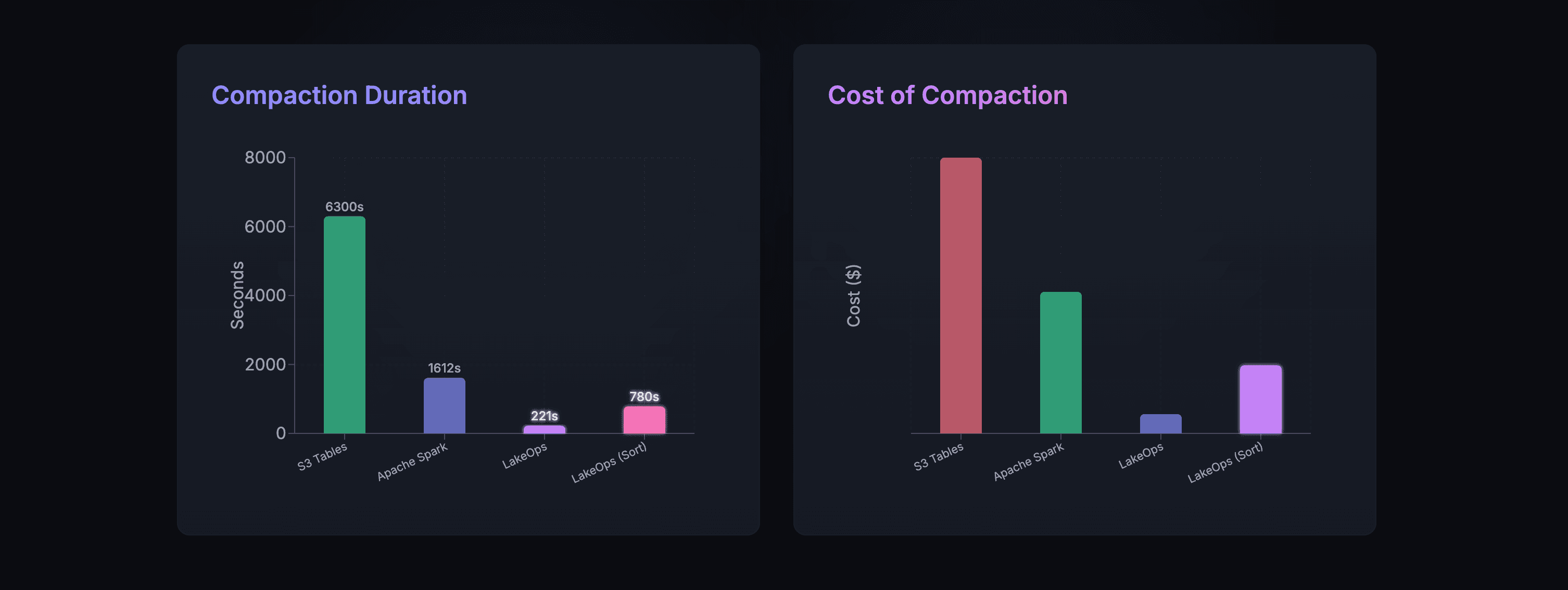

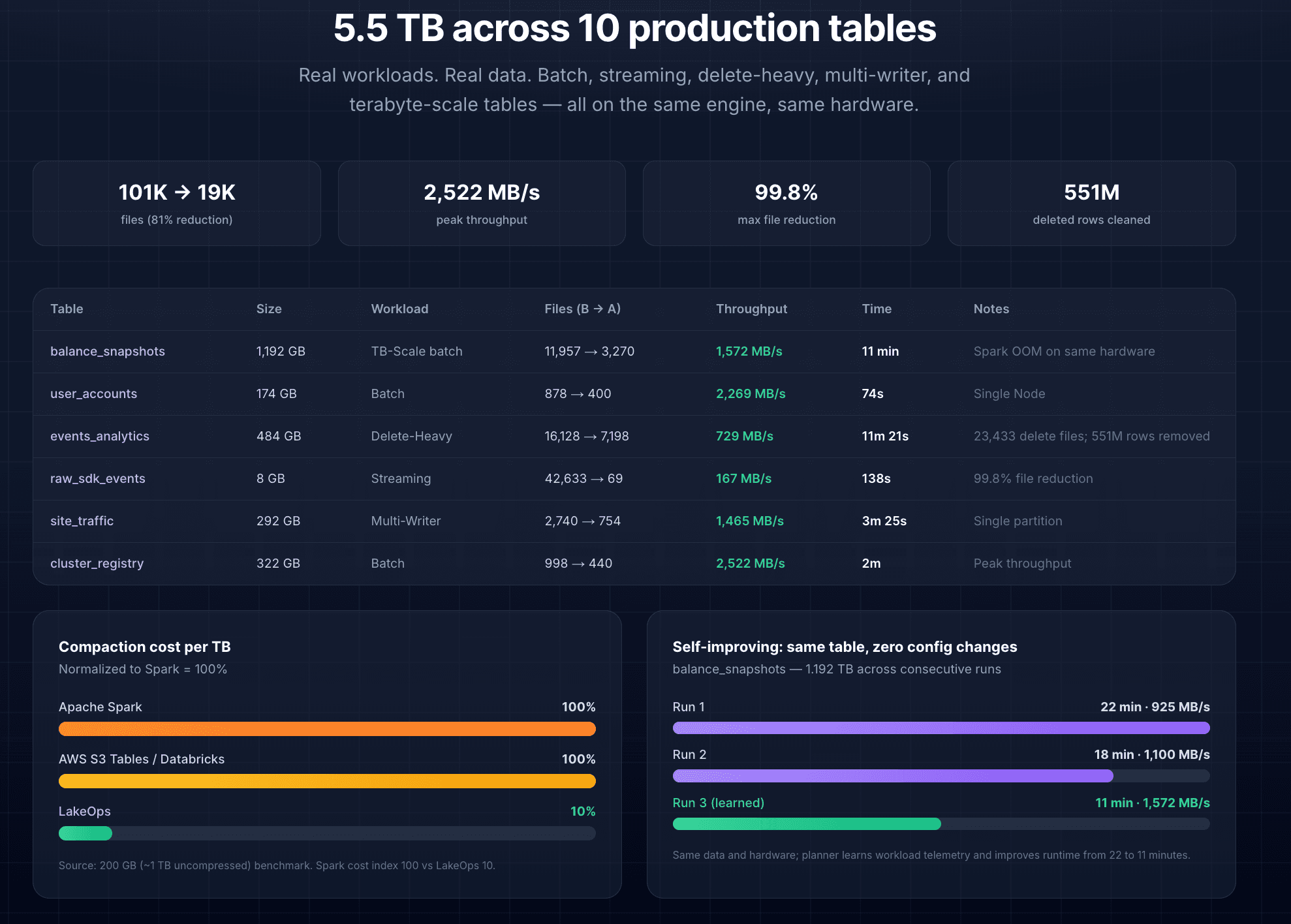

The compaction engine is built in Rust with Apache DataFusion, not JVM-based Spark. No garbage collection pauses, no executor provisioning, no idle cluster costs. In production benchmarks across 10 tables totaling 5.5 TB: binpack completed in 221 seconds versus 1,612 for Spark and 6,300 for S3 Tables — up to 28x faster at a fraction of the cost. On a 1.2 TB table that caused Spark to fail with an out-of-memory error, LakeOps completed compaction in 11 minutes on comparable hardware.

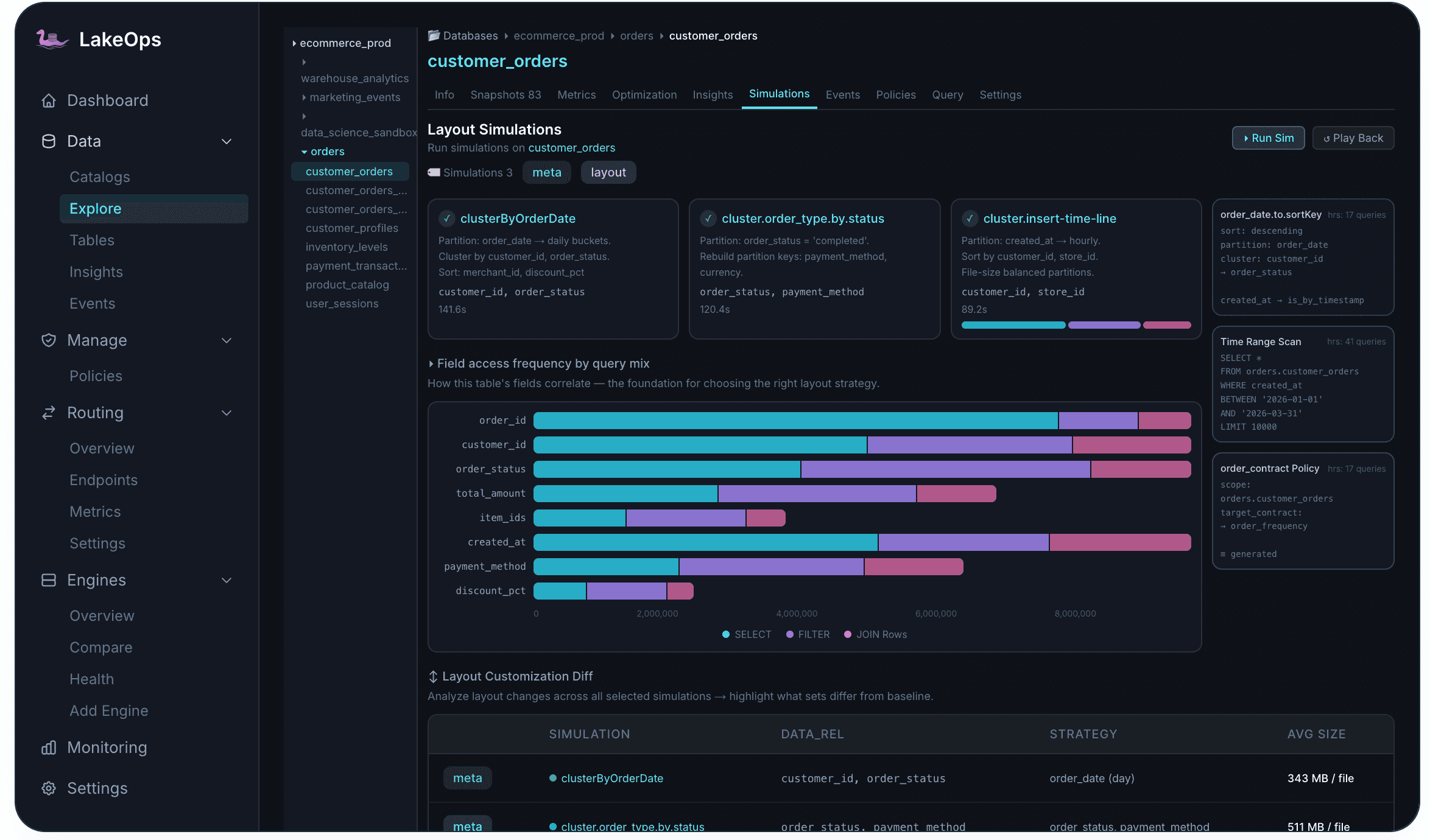

Before committing to a sort-order change on a large table, Layout Simulations let you test the impact safely. Simulations run on a real Iceberg branch created from the latest snapshot — the proposed layout is applied, actual query patterns are replayed, and results are compared against the current baseline. The branch is discarded afterward; no production data is modified.

Step 4: Manage the snapshot and metadata lifecycle

Every write operation in Iceberg creates a new snapshot. Without active expiration, snapshots accumulate indefinitely — each one retaining references to data files that cannot be garbage-collected, and deepening the metadata tree that query planners must traverse. At thousands of snapshots on a single table, planning overhead dominates query execution time. For more on how this interacts with overall cost, snapshot bloat is typically one of the four largest cost drivers.

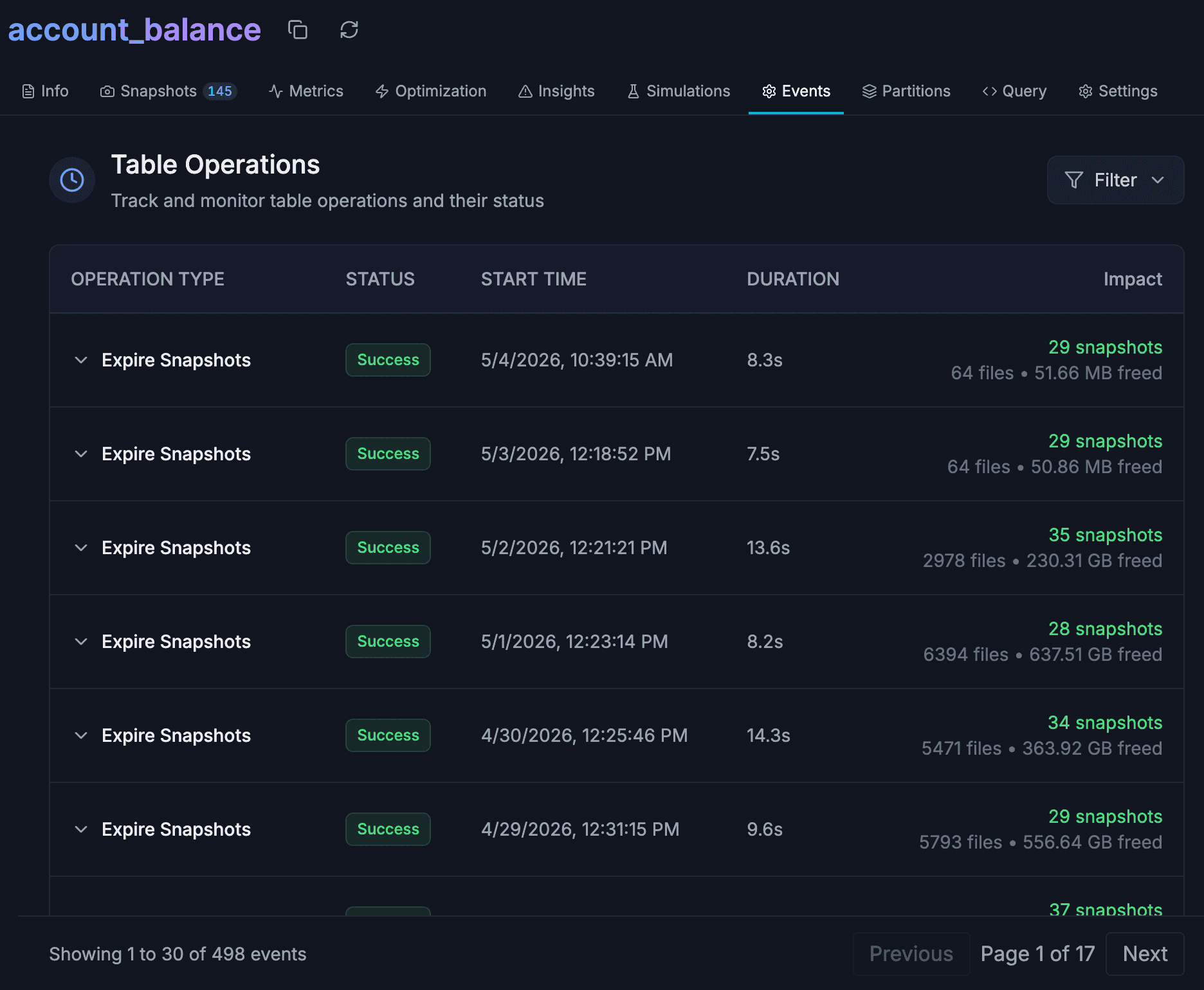

LakeOps manages snapshot lifecycle automatically. The Snapshots panel lists every snapshot with its ID, timestamp, and operation type. Retention policies run on configurable schedules, respecting both a time window (typically 7–30 days) and a minimum snapshot count for time-travel capability. Expiration is conflict-aware — it never removes a snapshot that an active reader depends on. You can also tag snapshots, create branches, or roll back to any previous version directly from the UI.

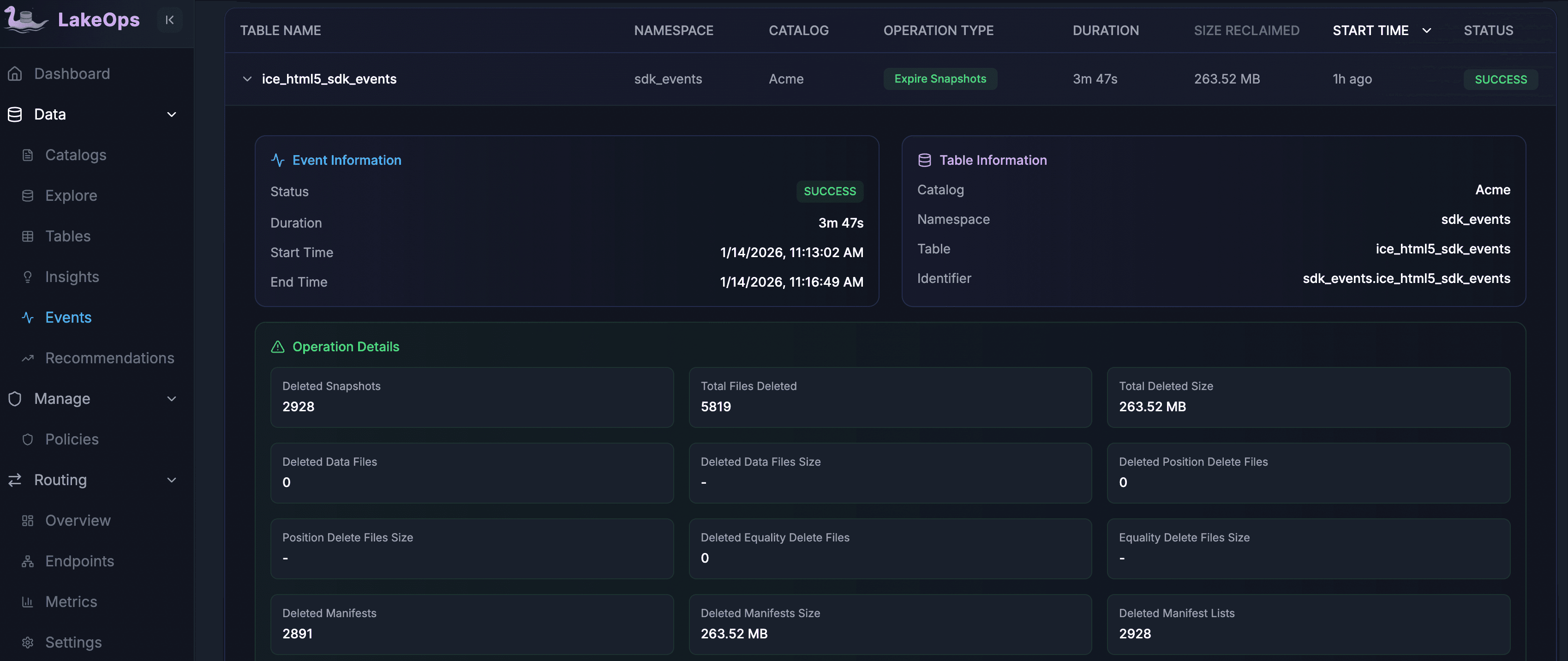

The impact at scale is dramatic. A single expiration run on one production table deleted 2,928 snapshots and 5,819 files, reclaiming 263.52 MB of manifest data in under 4 minutes. On another table, 22,034 snapshots and 675,510 files were expired, reclaiming 179.49 GB of storage in a single pass.

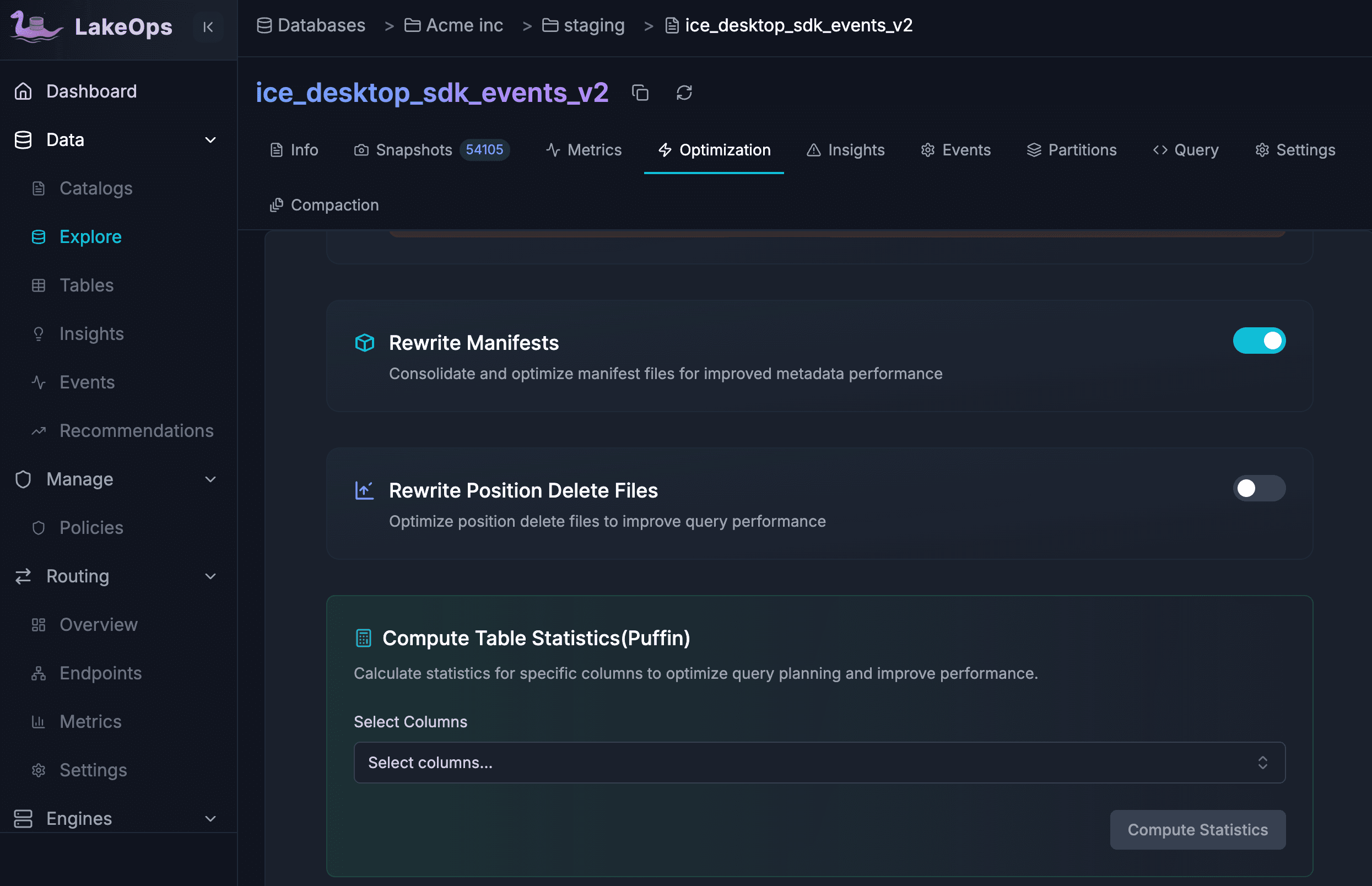

Manifest optimization runs alongside snapshot management. After many append and compaction cycles, a table might carry 200 manifest files where 30 would suffice — and every query planner opens all of them. LakeOps consolidates manifests automatically, rewrites position delete files to reduce read amplification, and computes Puffin column statistics so engines can prune row groups more aggressively. Each operation has its own toggle and schedule.

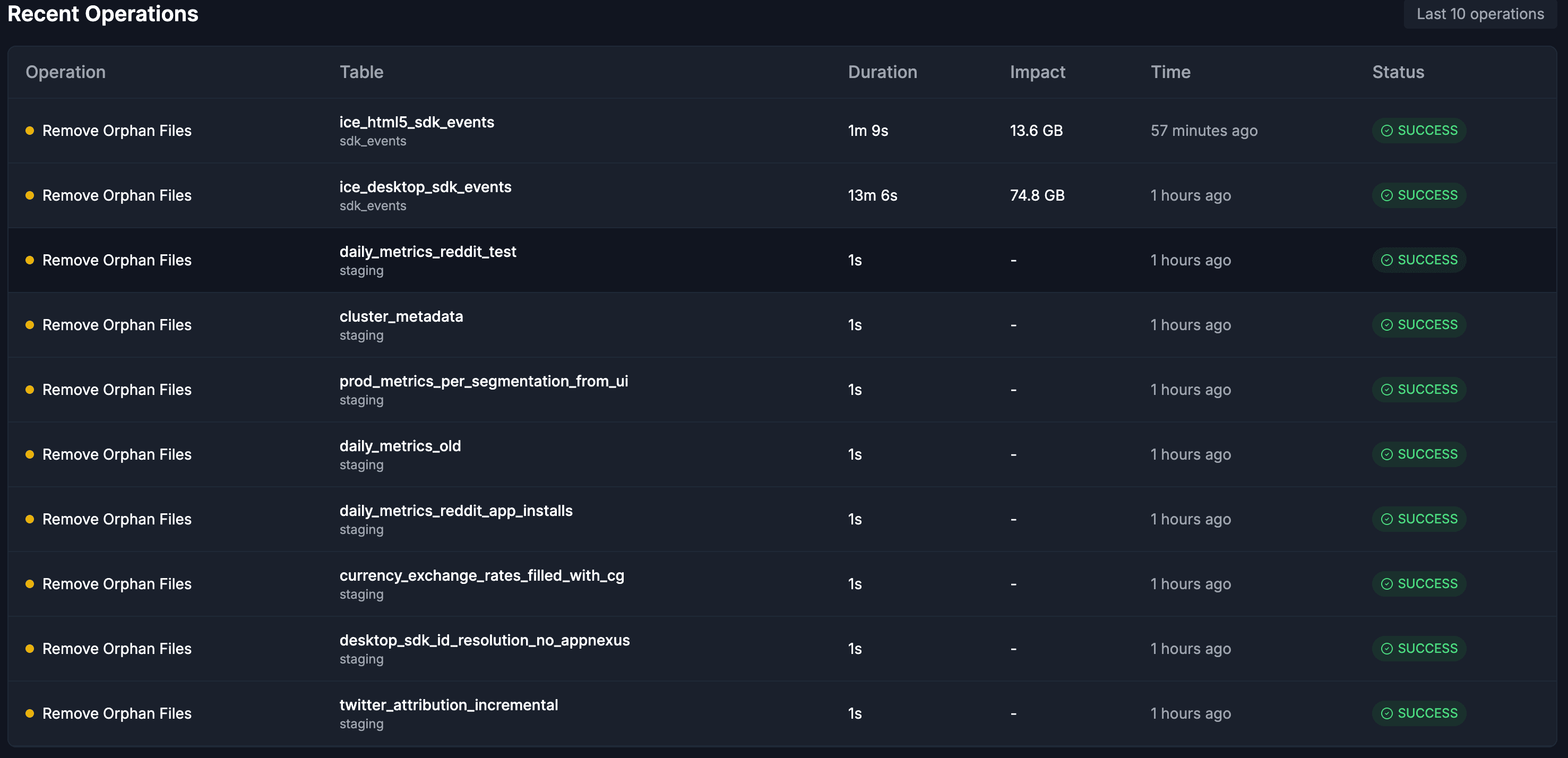

Orphan file cleanup completes the lifecycle. Orphan files — data objects that no live snapshot references, left behind by aborted writes, failed jobs, and interrupted compaction — accumulate silently on object storage. An age threshold (default 7 days) ensures only genuinely unreferenced files are removed. Cleanup runs after snapshot expiration by design, so that newly unreferenced files are captured in the same sweep. One fleet cleanup removed 59,831 files (74.8 GB) from a single table in 13 minutes.

The sequencing of these operations is critical and is covered in depth in Autonomous Iceberg Table Maintenance. Snapshot expiration runs first, releasing stale data references. Orphan cleanup follows, sweeping up the newly unreferenced files. Compaction then operates on the clean, current dataset. Manifest optimization runs last, consolidating metadata against the final compacted layout. Each operation's output becomes the next one's clean input — eliminating the redundant work that independently-scheduled scripts inevitably perform.

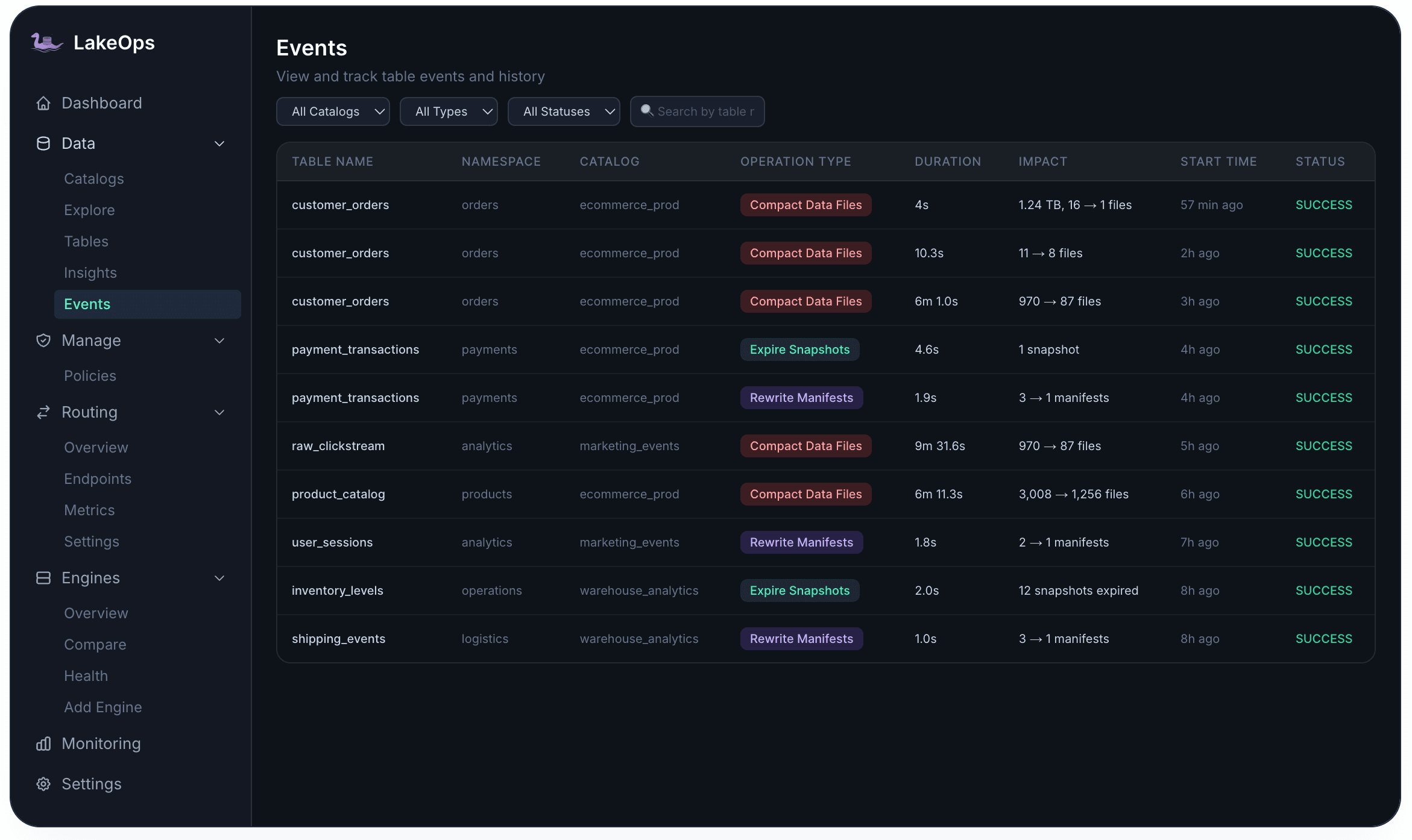

Every operation is tracked with full auditability. The Events tab provides a complete history per table — every compaction, snapshot expiration, orphan removal, and manifest rewrite with its duration, impact, and status.

Step 5: Route queries to the right engine

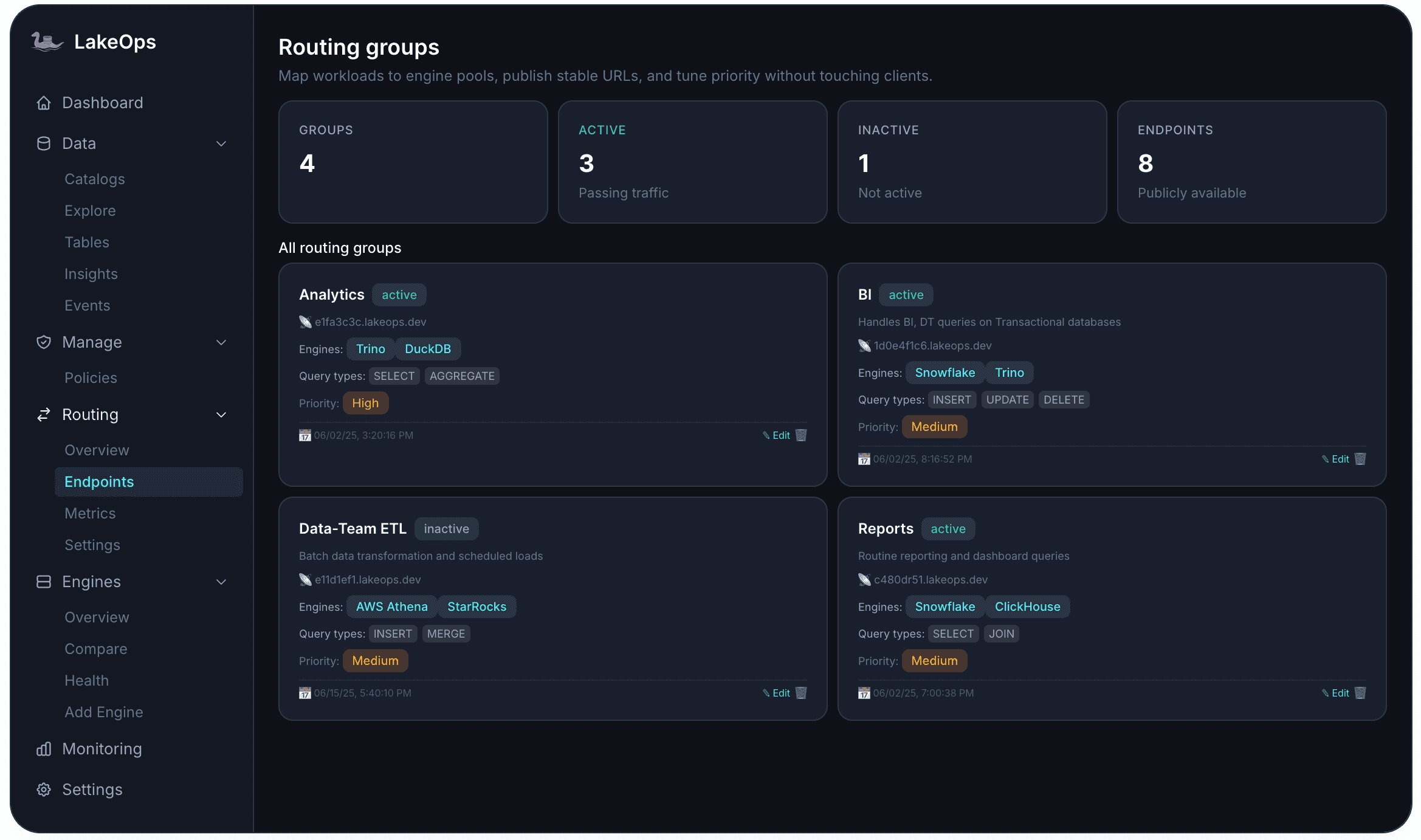

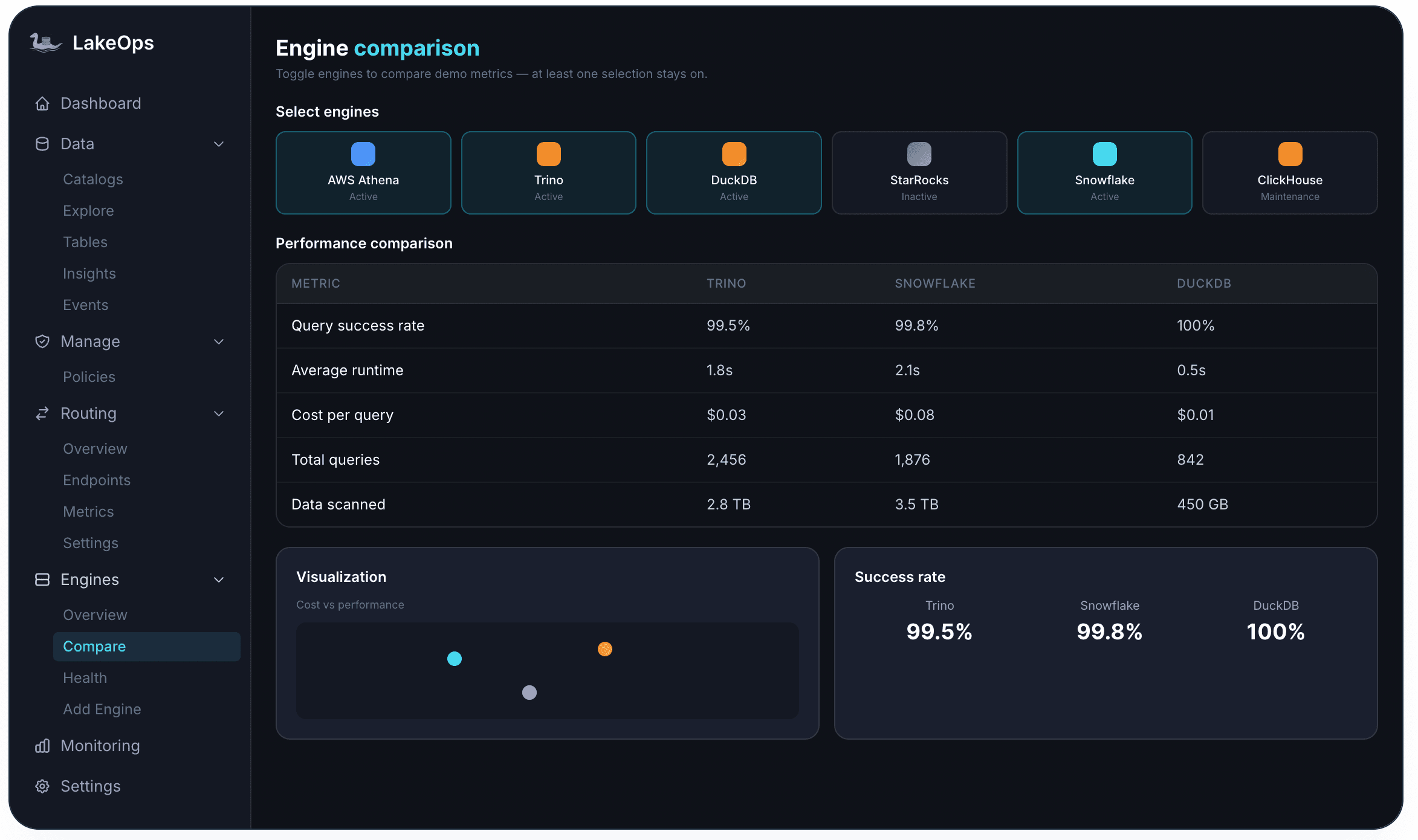

Production lakehouses rarely use a single engine. A typical deployment has Trino for interactive analytics, Snowflake for BI dashboards, Athena for ad-hoc exploration, DuckDB for local development, and Spark for batch ETL. Without a routing layer, each team picks the engine they know — regardless of whether it is the cheapest or fastest for that query shape.

The cost difference is not marginal. A simple point lookup that costs $0.01 on DuckDB costs $0.08 on Snowflake. A complex analytical join that costs $0.03 on Trino costs $0.08 on Snowflake. Across thousands of queries per day, the wrong routing decisions compound into significant overspend.

LakeOps connects all engines to a unified routing layer that makes per-query decisions based on cost, latency, table health, and historical performance. Routing Endpoints give each application or agent a stable URL with a defined engine pool, query-type scope, and priority level. An Analytics endpoint routes SELECT and AGGREGATE queries to low-latency engines. An ETL endpoint routes INSERT and MERGE to cost-optimized compute.

Step 6: Enforce policies at fleet scale

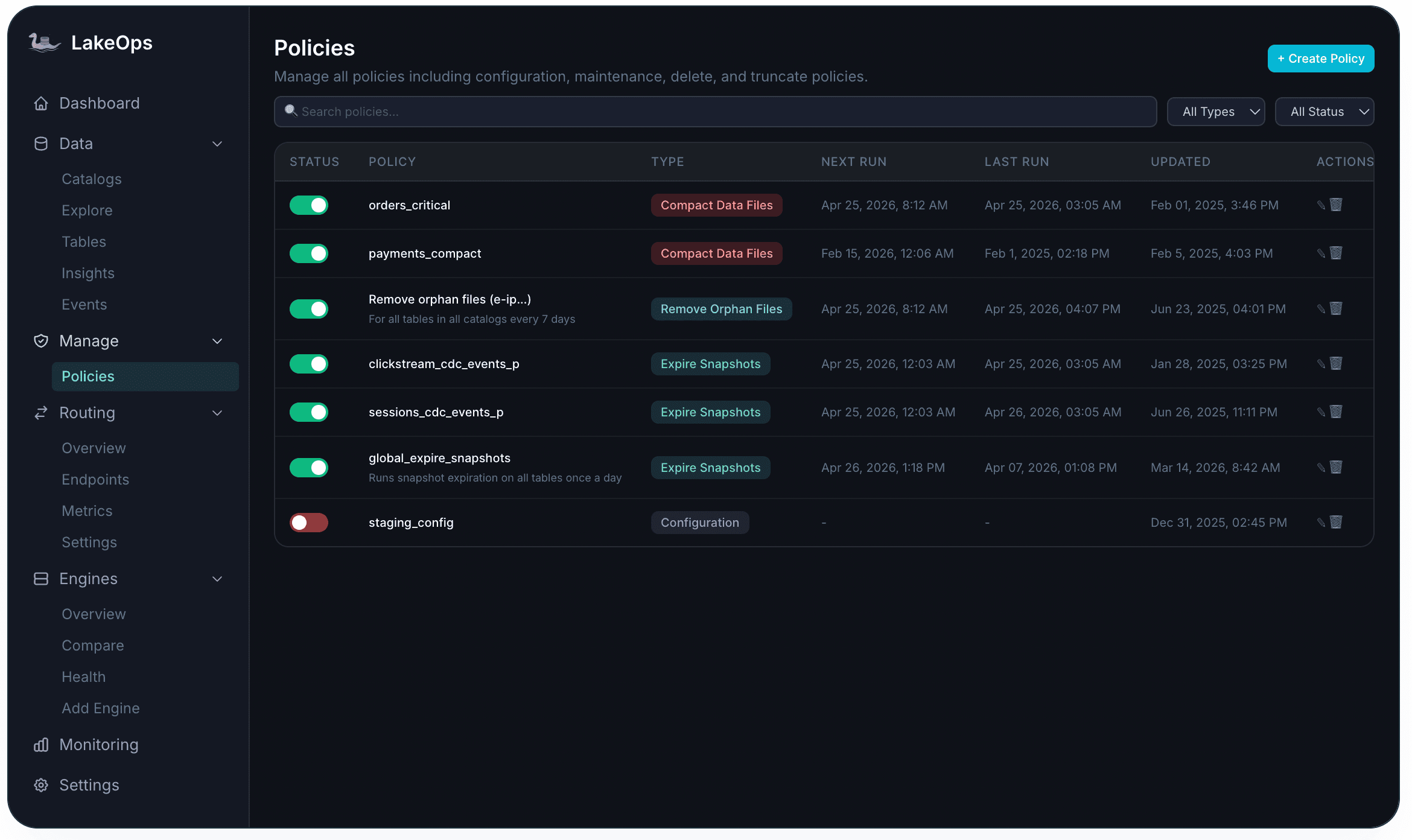

Optimizing tables individually does not scale beyond a few dozen. At hundreds of tables across multiple catalogs, with different teams expecting different retention windows and compaction cadences, maintenance rules need to be declared once and enforced everywhere.

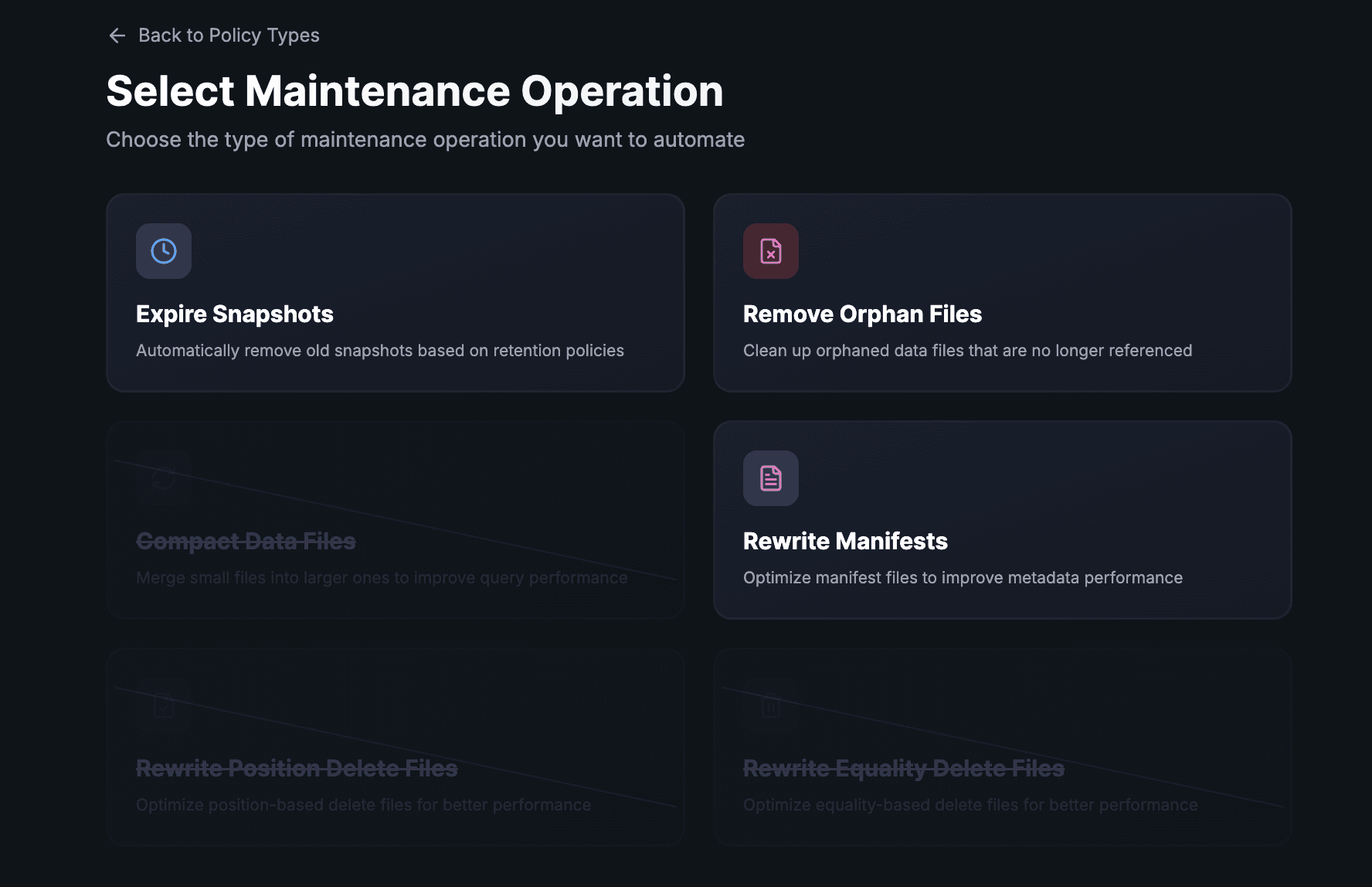

LakeOps provides a centralized policy engine with six maintenance operation types: Expire Snapshots, Remove Orphan Files, Compact Data Files, Rewrite Manifests, Rewrite Position Delete Files, and Rewrite Equality Delete Files. Policies are scoped to catalogs, namespaces, or individual tables, with a specificity hierarchy — table-level overrides namespace-level, which overrides catalog-wide baselines.

Scheduling follows best practices by default: expiration runs hourly, orphan cleanup daily after expiration, compaction during off-peak hours, manifest rewrites after compaction. Heavy operations are staggered to avoid resource contention. Every execution is logged with full before/after metrics — file counts, storage reclaimed, duration, and success/failure status.

Step 7: Prepare for agentic AI workloads

AI agents are becoming primary consumers of lakehouse data. They issue SQL iteratively inside tool-use loops, generate unpredictable query shapes, and require sub-second latency from tables designed for batch workloads. Without purpose-built infrastructure, agents hit uncompacted tables, scan excessive data, generate runaway costs, and potentially expose sensitive columns to LLM context windows.

LakeOps provides an agent-native MCP (Model Context Protocol) interface with schema discovery, async query support, and wire compatibility across PostgreSQL, MySQL, and Arrow Flight. Layered guardrails enforce safety at query level: ReadOnly blocks DDL and DML from agent sessions, CostEstimate rejects queries exceeding configurable scan thresholds, PIIMask hashes or excludes sensitive columns before results reach the model, and HumanApproval pauses high-stakes operations for review.

The architecture forms a closed loop. The compaction engine sees agent query telemetry alongside human query telemetry and adjusts table layouts accordingly. As agent usage grows, the tables they access most are compacted first, with sort orders aligned to the predicates agents actually use. The lake self-optimizes as AI adoption scales.

The compound effect: where the 80% comes from

The up to 80% reduction in CPU and storage costs is not from any single optimization. It is the compound effect of every layer working together — and critically, working in the right sequence.

Storage costs drop because orphan files are continuously removed, expired snapshots release their referenced data, and compaction consolidates thousands of small files into optimally-sized ones. Compute costs drop from three directions: the Rust compaction engine replaces JVM-based Spark clusters (86% cheaper per compaction run), sort-order optimization reduces the data every query scans (51% less on sorted vs unsorted tables), and intelligent routing sends each query to the cheapest appropriate engine. Query latency improves up to 12x through the combination of compaction, sort-order optimization, manifest consolidation, and Puffin statistics.

Engineering hours shift from reactive maintenance — debugging scripts, triaging alerts, firefighting at 2 AM — to proactive data platform development. Policies are defined once and execute continuously. Every action is logged, auditable, and reversible.

Getting started

LakeOps connects to your existing catalogs and object storage in roughly ten minutes. No agents to install, no data to move, no pipelines to modify. Your data stays in your account.

The onboarding flow: connect your catalog and storage, let the platform analyze table health from metadata and query patterns, enable automated optimization (or start in manual mode and review before acting), and start monitoring. Three guarantees apply: no vendor lock-in, no infrastructure changes, and no data leaves your environment.

If you are running cron-based Spark compaction, manually expiring snapshots, or not maintaining your tables at all — the tooling has caught up with the problem. Apache Iceberg is production-ready. Your table maintenance should be too.

Further reading

For a broader architecture view beyond the optimization workflow in this article, read From Databricks and Snowflake to an Open Data Platform. The deep dives on managed Iceberg, cost optimization, compaction, autonomous maintenance, and agentic AI are already linked in the relevant sections above.