Why compaction is the highest-impact operation in your lakehouse

Every Apache Iceberg guide explains compaction the same way: small files slow down queries, so you merge them into bigger ones. Run `rewrite_data_files`, set a target size, schedule it nightly, move on.

For a single table with a predictable batch workload, that description is accurate enough. But in a production lakehouse — hundreds of tables, streaming and batch mixed, multiple engines, and teams with different SLAs — compaction is not a file-merging task. It is a systems problem. When should it trigger? What physical layout should it produce? How should it coordinate with snapshot expiration and orphan cleanup? What engine should execute it, and how much should that engine cost?

This article breaks down what efficient compaction looks like at scale — the specific components that separate a cron-scheduled Spark job from a system that continuously optimizes every table across an entire Iceberg lakehouse.

The problem: why compaction breaks at scale

Iceberg's maintenance documentation describes `rewrite_data_files` with a binpack strategy and a sort strategy, with z-order available as a variant of the sort order. The API is clean. The challenge is everything around it.

Small files accumulate faster than batch jobs can process them. A streaming pipeline that checkpoints every 60 seconds and writes one file per checkpoint into each of 100 active partitions creates 144,000 new files per day. A nightly Spark compaction job processes yesterday's output, but by morning the backlog has rebuilt itself. The table never reaches a healthy state.
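The 144,000 figure is plain arithmetic. The sketch below reproduces it, assuming every checkpoint writes exactly one file into each active partition; the function and parameter names are illustrative, and real writers may emit more or fewer files per checkpoint.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def files_per_day(checkpoint_interval_s: int,
                  active_partitions: int,
                  files_per_partition: int = 1) -> int:
    """Estimate data files written per day by a streaming job.

    Assumes every checkpoint writes `files_per_partition` files into
    each active partition (an illustrative simplification).
    """
    checkpoints_per_day = SECONDS_PER_DAY // checkpoint_interval_s
    return checkpoints_per_day * active_partitions * files_per_partition


daily_files = files_per_day(checkpoint_interval_s=60, active_partitions=100)
print(daily_files)  # 144000
```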

Delete files compound the problem. Merge-on-read operations generate position and equality delete files that accumulate alongside data files. Until those deletes are physically applied during compaction, every read must reconcile them — scanning both the data files and the delete files, then filtering at query time. On tables with frequent updates, delete files can outnumber data files and dominate query latency.

File sizing is table-specific, not universal. The standard 128–512 MB target works for scan-heavy analytics, but a feature-store table serving point lookups benefits from smaller files. A high-cardinality event stream needs different partitioning and sizing than a slowly-changing dimension table. One size does not fit all.

Sort strategy requires workload knowledge. Binpack reduces file count but leaves data physically unordered, so selective queries still scan more bytes than necessary. Sorting on the right columns enables row-group skipping through Parquet min/max statistics, but the 'right columns' depend on which queries actually hit the table. A sort order chosen at table creation may not reflect the queries running six months later.

Compaction conflicts with concurrent operations. A long-running compaction job can collide with writes, block snapshot expiration, or interfere with orphan cleanup. Without coordination, maintenance operations undermine each other.

JVM-based compaction is expensive. Running compaction through Spark means provisioning executor clusters, paying for JVM startup and garbage collection overhead, and keeping compute allocated even when actual processing takes a fraction of the reserved time. At hundreds of tables per day, the compaction compute bill becomes a significant line item on its own.

These are not edge cases. They are the default experience at production scale. The Iceberg ecosystem survey confirmed that most organizations still rely on custom scripts for compaction — and that operational complexity is the primary friction.

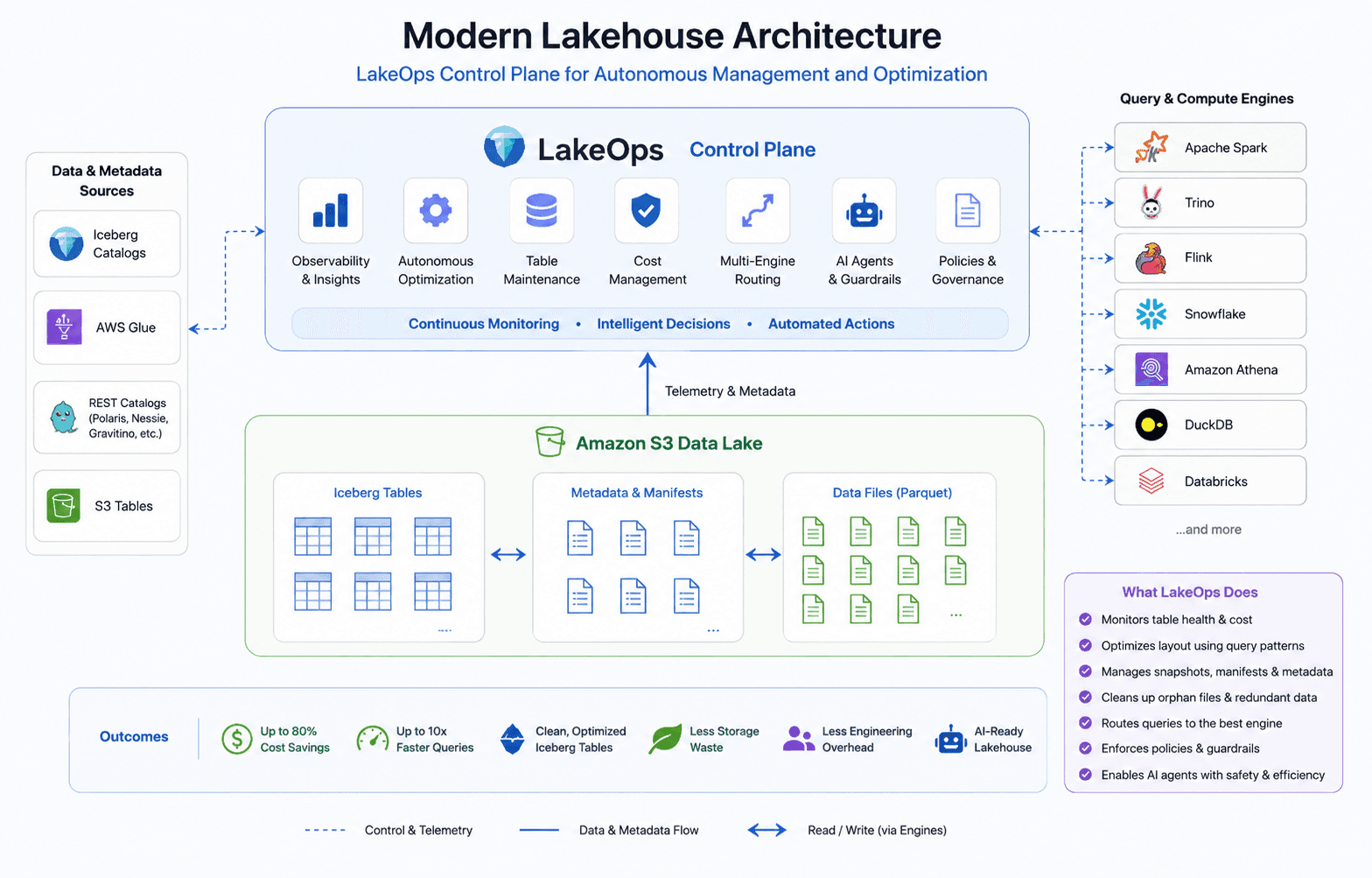

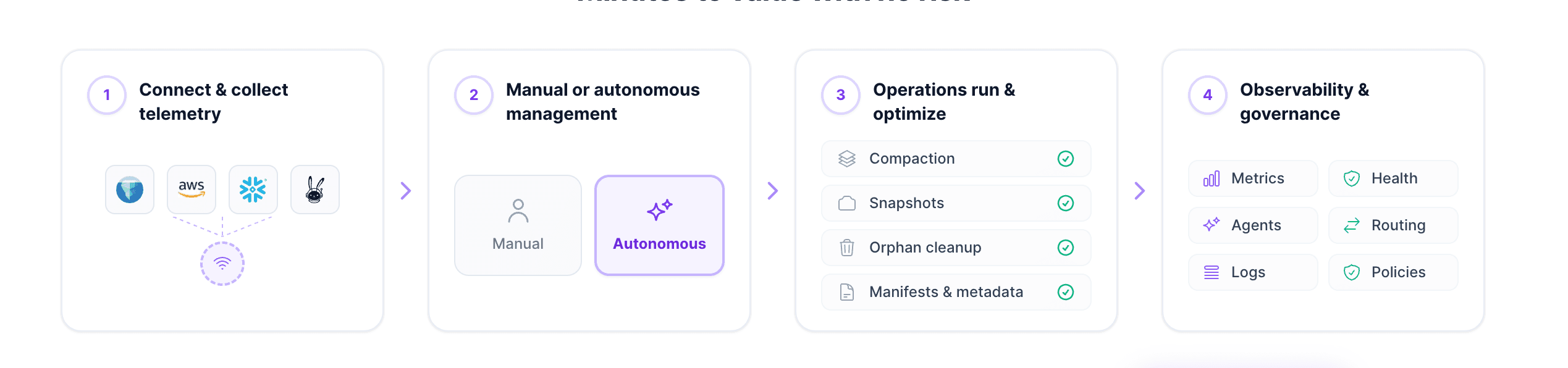

The solution: compaction as a control plane function

Solving compaction at scale means addressing each of these challenges as components of a single system — a lakehouse control plane that connects to your existing catalogs and storage, monitors table health, and runs compaction intelligently as part of a coordinated maintenance pipeline.

The sections below walk through each component of what efficient compaction requires.

1. Event-driven triggers instead of fixed schedules

Cron-scheduled compaction runs whether or not the table needs it. A nightly job might compact a table that received two writes, wasting compute, while a table that received ten thousand writes stays degraded until the next run.

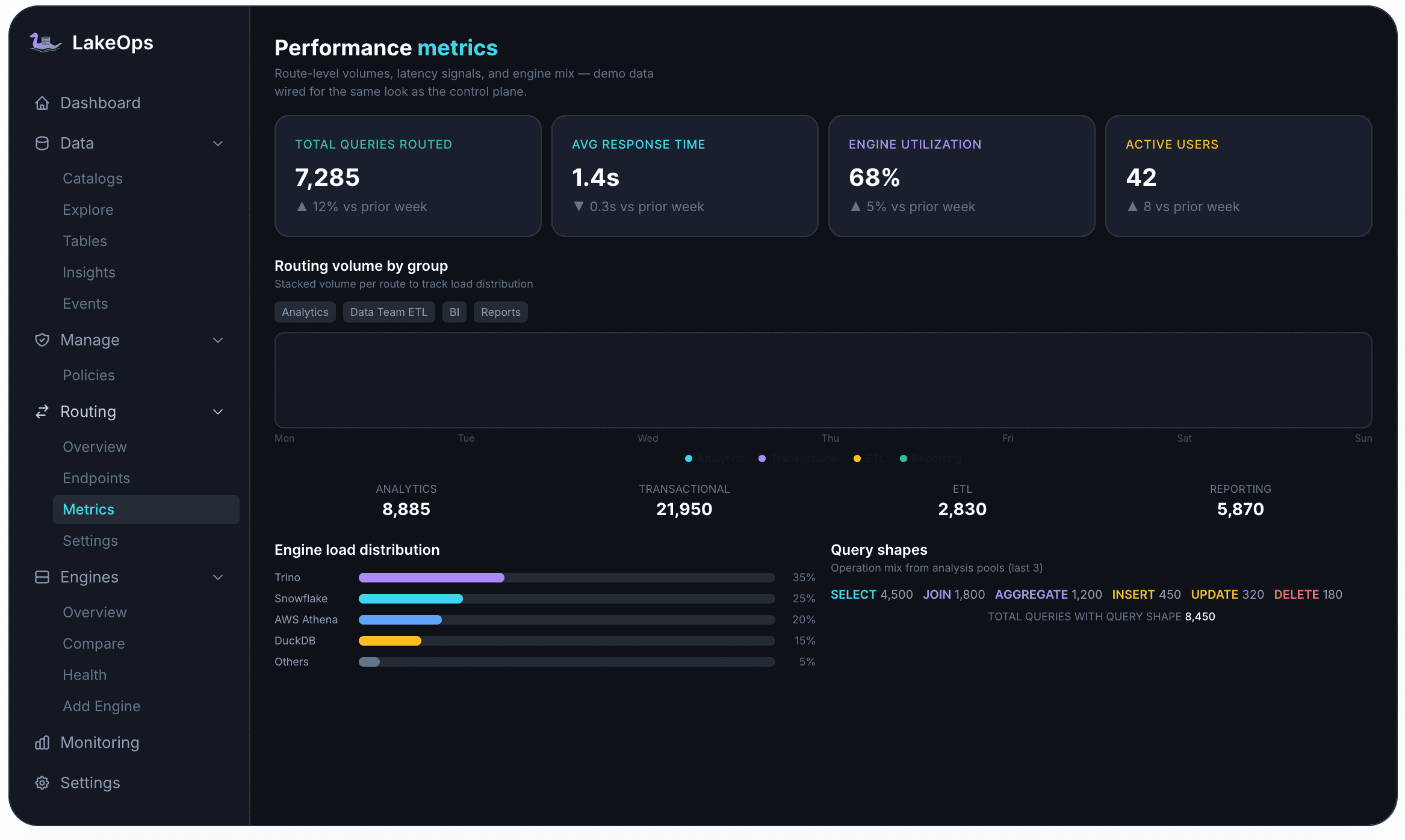

A control plane replaces cron with event-driven evaluation. The system continuously monitors each table's structural signals — file count per partition, average file size relative to the configured target, ratio of delete files to data files, and manifest depth. When any indicator crosses a configurable threshold, compaction is triggered for that specific table and partition.
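The threshold evaluation described above could be sketched roughly as follows. This is a minimal illustration, not the control plane's actual logic: the class names, the threshold defaults, and the use of a manifest count as a stand-in for "manifest depth" are all assumptions.

```python
from dataclasses import dataclass

MB = 1024 * 1024


@dataclass
class PartitionStats:
    data_file_count: int
    total_data_bytes: int
    delete_file_count: int
    manifest_count: int  # assumed stand-in for "manifest depth"


@dataclass
class Thresholds:
    # All values are hypothetical defaults, not recommendations.
    target_file_bytes: int = 512 * MB
    max_data_files: int = 200
    min_avg_size_ratio: float = 0.5   # average file size / target size
    max_delete_ratio: float = 0.2     # delete files / data files
    max_manifests: int = 50


def compaction_reasons(stats: PartitionStats, t: Thresholds) -> list[str]:
    """Return the signals that crossed a threshold (empty means healthy)."""
    if stats.data_file_count == 0:
        return []
    reasons = []
    avg_size = stats.total_data_bytes / stats.data_file_count
    if stats.data_file_count > t.max_data_files:
        reasons.append("file_count")
    if avg_size / t.target_file_bytes < t.min_avg_size_ratio:
        reasons.append("avg_file_size")
    if stats.delete_file_count / stats.data_file_count > t.max_delete_ratio:
        reasons.append("delete_ratio")
    if stats.manifest_count > t.max_manifests:
        reasons.append("manifest_count")
    return reasons


streaming = PartitionStats(1440, 1440 * 2 * MB, 400, 12)
healthy = PartitionStats(4, 4 * 480 * MB, 0, 3)
print(compaction_reasons(streaming, Thresholds()))
print(compaction_reasons(healthy, Thresholds()))
```

Returning the list of reasons rather than a boolean keeps the trigger explainable: the same values can be logged alongside the compaction event. A production version would also need to exempt partitions that are simply small, since a single undersized file would otherwise trip the average-size check indefinitely.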

Tables with heavy streaming ingest may compact multiple times per hour. Tables with weekly batch loads may compact once a week. The trigger matches the workload, not the clock. And because the control plane sees the full maintenance context, compaction also responds to other events — after a snapshot expiration frees data files, the system re-evaluates whether the remaining file set warrants a compaction pass. After a large batch write lands, newly created small files enter the evaluation queue immediately.

2. Query-aware sort strategies

Binpack compaction — merging small files into larger ones without reordering — solves the file-count problem. But the larger performance opportunity is in how data is physically arranged inside those files.

When Parquet files are sorted on columns that appear frequently in query predicates, engines use columnar min/max statistics to skip entire row groups without reading them. The scan volume drops, and compute consumption drops with it. The difference is substantial: on tables where queries filter on specific columns, sorted layouts can cut cumulative scan volume in half compared to unsorted data.
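To make the skipping mechanism concrete, here is a toy model of min/max pruning. It does not read Parquet; it only groups integers into fixed-size "row groups" and counts how many groups have a min/max range that could contain a requested value. The function names and the data are invented for illustration.

```python
def row_group_stats(values: list[int], rows_per_group: int) -> list[tuple[int, int]]:
    """Split values into row groups and record each group's (min, max)."""
    groups = [values[i:i + rows_per_group]
              for i in range(0, len(values), rows_per_group)]
    return [(min(g), max(g)) for g in groups]


def groups_to_read(stats: list[tuple[int, int]], wanted: int) -> int:
    """Count row groups whose [min, max] range could contain `wanted`."""
    return sum(1 for lo, hi in stats if lo <= wanted <= hi)


# 1,000 distinct values; 37 is coprime with 1000, so this is a permutation
# that scatters neighbouring values across the whole file.
unsorted_values = [(i * 37) % 1000 for i in range(1000)]
sorted_values = sorted(unsorted_values)

sorted_reads = groups_to_read(row_group_stats(sorted_values, 100), wanted=42)
unsorted_reads = groups_to_read(row_group_stats(unsorted_values, 100), wanted=42)

# With sorted data exactly one row group can contain the value;
# with scattered data the ranges overlap and more groups must be read.
print(sorted_reads, unsorted_reads)
```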

The challenge is knowing which columns to sort on. In a lakehouse with multiple engines and dozens of consuming teams, the access patterns for a given table are diverse and evolving.

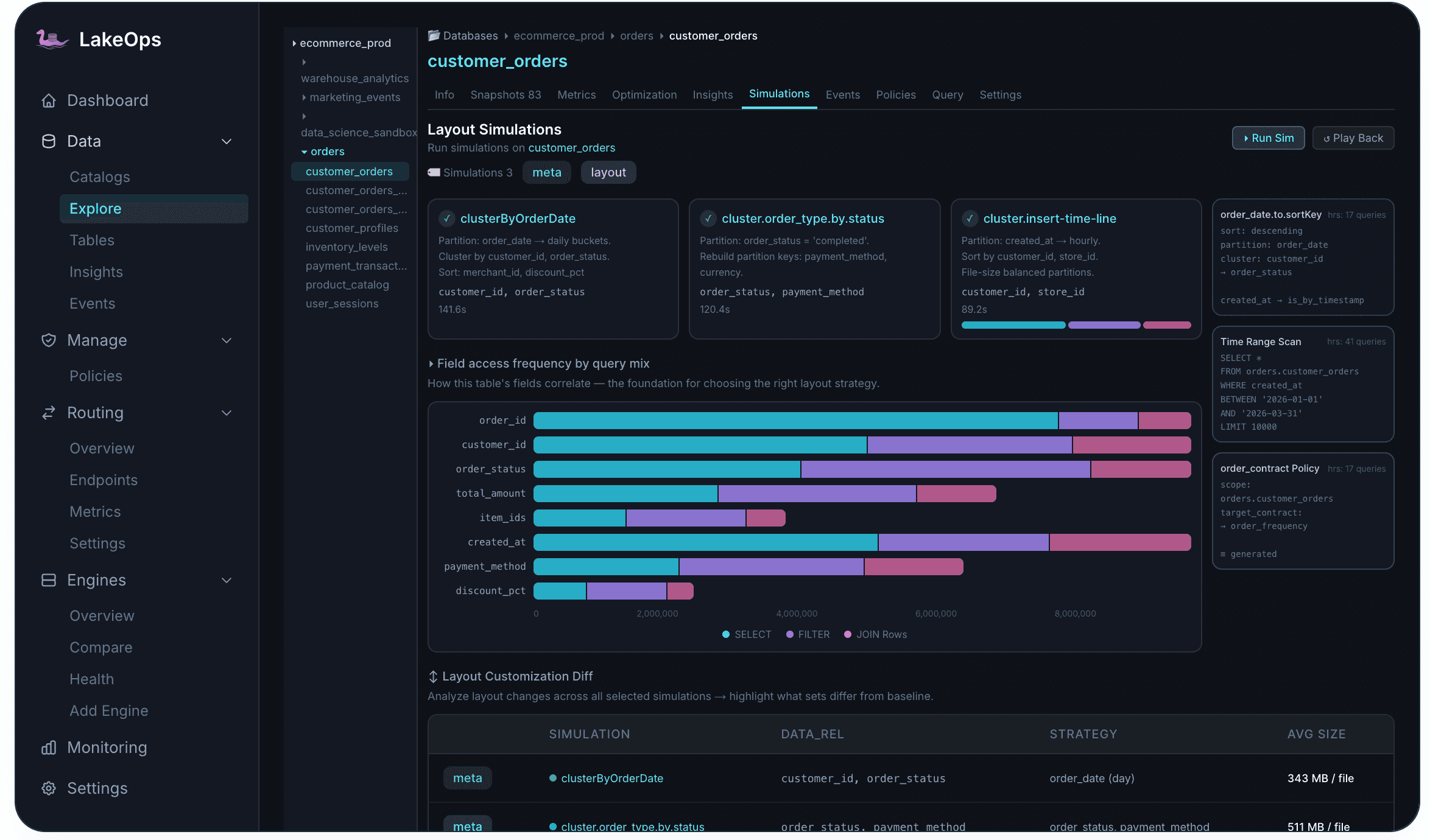

The control plane solves this by collecting query telemetry across all connected engines — Trino, Spark, Snowflake, Athena, DuckDB, Flink — and identifying which columns appear most frequently in WHERE clauses, join keys, and GROUP BY expressions for each table. The compaction engine then applies a sort order derived from actual usage rather than a static declaration. If dashboard queries increasingly filter on `customer_segment` alongside `event_date`, the sort order adapts to include both columns — and the row-group statistics tighten accordingly.

The control plane evaluates multiple approaches — single-dimension sort, multi-column sort, and z-order (which interleaves multiple dimensions for multi-predicate queries) — based on the actual query mix, and selects the strategy that produces the best data-skipping characteristics for each table independently.
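One simple way to derive sort columns from telemetry is to rank columns by the share of queries that filter on them. The sketch below assumes the telemetry has already been reduced to a list of predicate columns per query; the function name, the `min_share` cut-off, and the sample data are hypothetical, and a real selector would also weigh cardinality and query cost.

```python
from collections import Counter


def rank_sort_columns(query_columns: list[list[str]],
                      max_columns: int = 2,
                      min_share: float = 0.25) -> list[str]:
    """Pick sort columns by the share of queries that filter on them."""
    total = len(query_columns)
    if total == 0:
        return []
    # Count each column once per query, even if it appears several times.
    counts = Counter(col for cols in query_columns for col in set(cols))
    # Sort by descending count, then by name so ties are deterministic.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [col for col, n in ranked if n / total >= min_share][:max_columns]


telemetry = [
    ["event_date", "customer_segment"],
    ["event_date"],
    ["event_date", "customer_segment"],
    ["user_id"],
    ["event_date", "customer_segment"],
]
print(rank_sort_columns(telemetry))  # ['event_date', 'customer_segment']
```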

3. Delete file handling during compaction

In Iceberg's merge-on-read model, updates and deletes produce position delete files (which mark rows by data file path and row position) and equality delete files (which mark rows by matching column values) rather than rewriting data files immediately. This keeps write latency low, but the accumulated delete files degrade every subsequent read. Each query must reconcile data files with their associated delete files, effectively scanning more data than the logical table contains.

Efficient compaction must handle delete files as a first-class concern. When the control plane rewrites data files, it physically applies pending deletes in the same pass — merging delete file contents into the new data files and eliminating the reconciliation overhead for all future reads. For tables with heavy update traffic, this can be the single biggest performance improvement compaction delivers.
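As a rough illustration of applying deletes during a rewrite, the sketch below merges small in-memory "files" and drops rows named by position deletes, which identify a row by data file path and row position. The in-memory representation and function name are assumptions for the example; equality deletes and real Parquet I/O are left out.

```python
def apply_position_deletes(data_files: dict[str, list[dict]],
                           position_deletes: list[tuple[str, int]]) -> list[dict]:
    """Return the surviving rows after applying (file_path, pos) deletes."""
    deleted: dict[str, set[int]] = {}
    for file_path, pos in position_deletes:
        deleted.setdefault(file_path, set()).add(pos)

    surviving = []
    for file_path, rows in data_files.items():
        skip = deleted.get(file_path, set())
        surviving.extend(row for pos, row in enumerate(rows) if pos not in skip)
    return surviving


small_files = {
    "a.parquet": [{"id": 1}, {"id": 2}, {"id": 3}],
    "b.parquet": [{"id": 4}, {"id": 5}],
}
deletes = [("a.parquet", 1), ("b.parquet", 0)]
compacted = apply_position_deletes(small_files, deletes)
print([row["id"] for row in compacted])  # [1, 3, 5]
```

Once the surviving rows are written to a new data file and committed, the old data files and the delete files that referenced them are no longer needed by readers of the new snapshot.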

LakeOps also supports standalone position delete rewriting and equality delete rewriting as separate operations, allowing platform teams to address delete file accumulation independently of full data file compaction when needed.

4. A purpose-built Rust execution engine

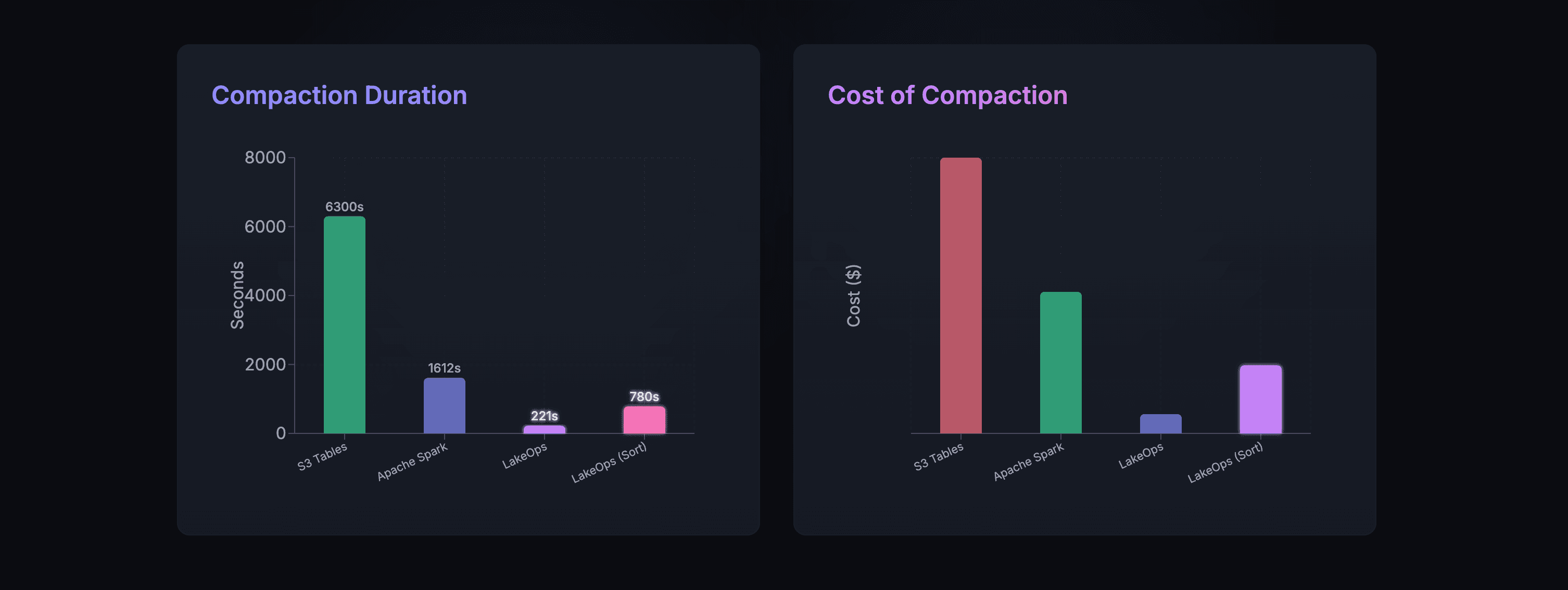

The execution engine matters as much as the strategy. Traditional compaction runs through Spark — provisioning JVM executors, distributing tasks across a cluster, and paying for the runtime overhead of garbage collection, serialization, and shuffle. For a single large table, Spark works. For hundreds of tables compacted daily, the aggregate JVM overhead dominates the cost structure.

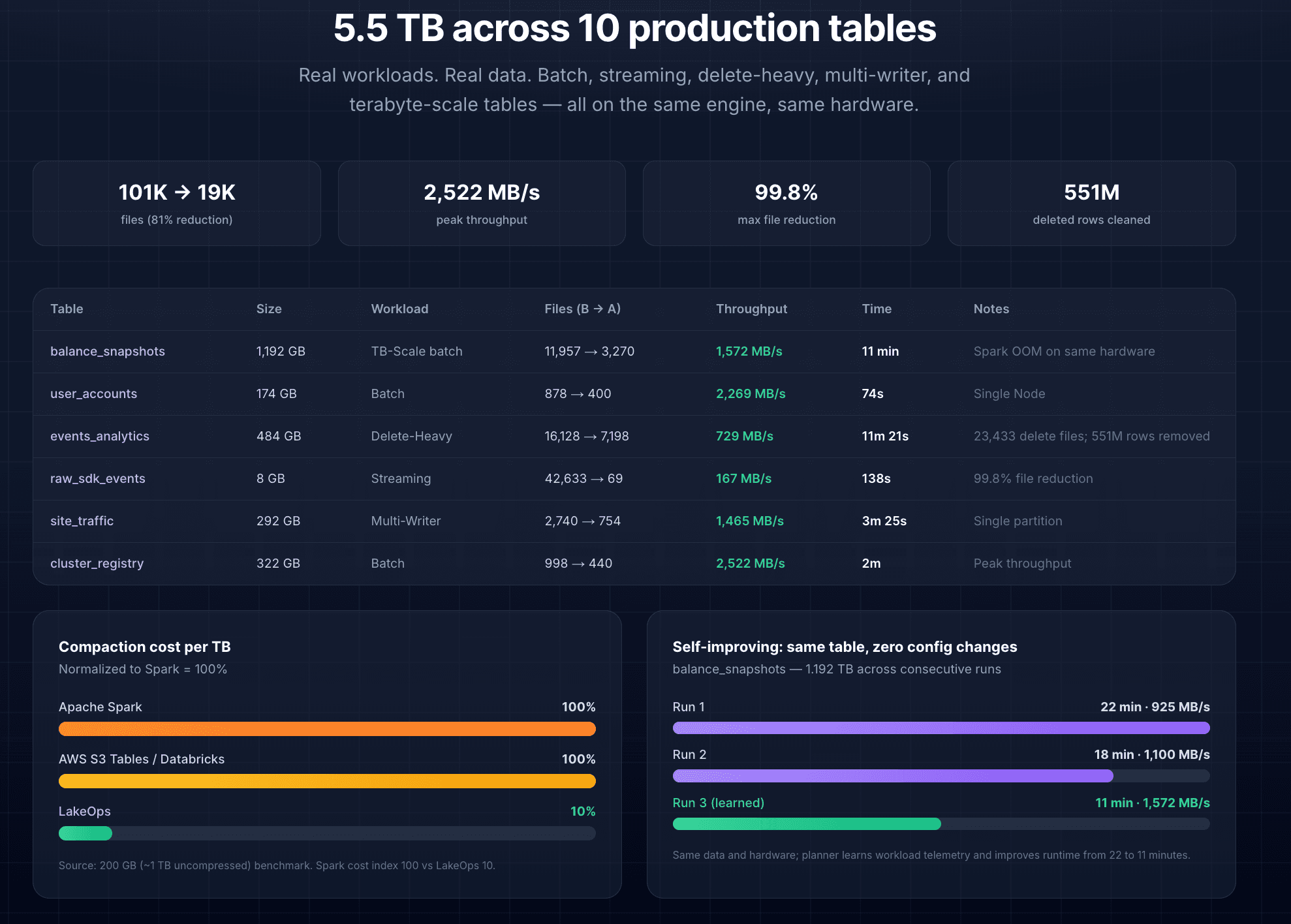

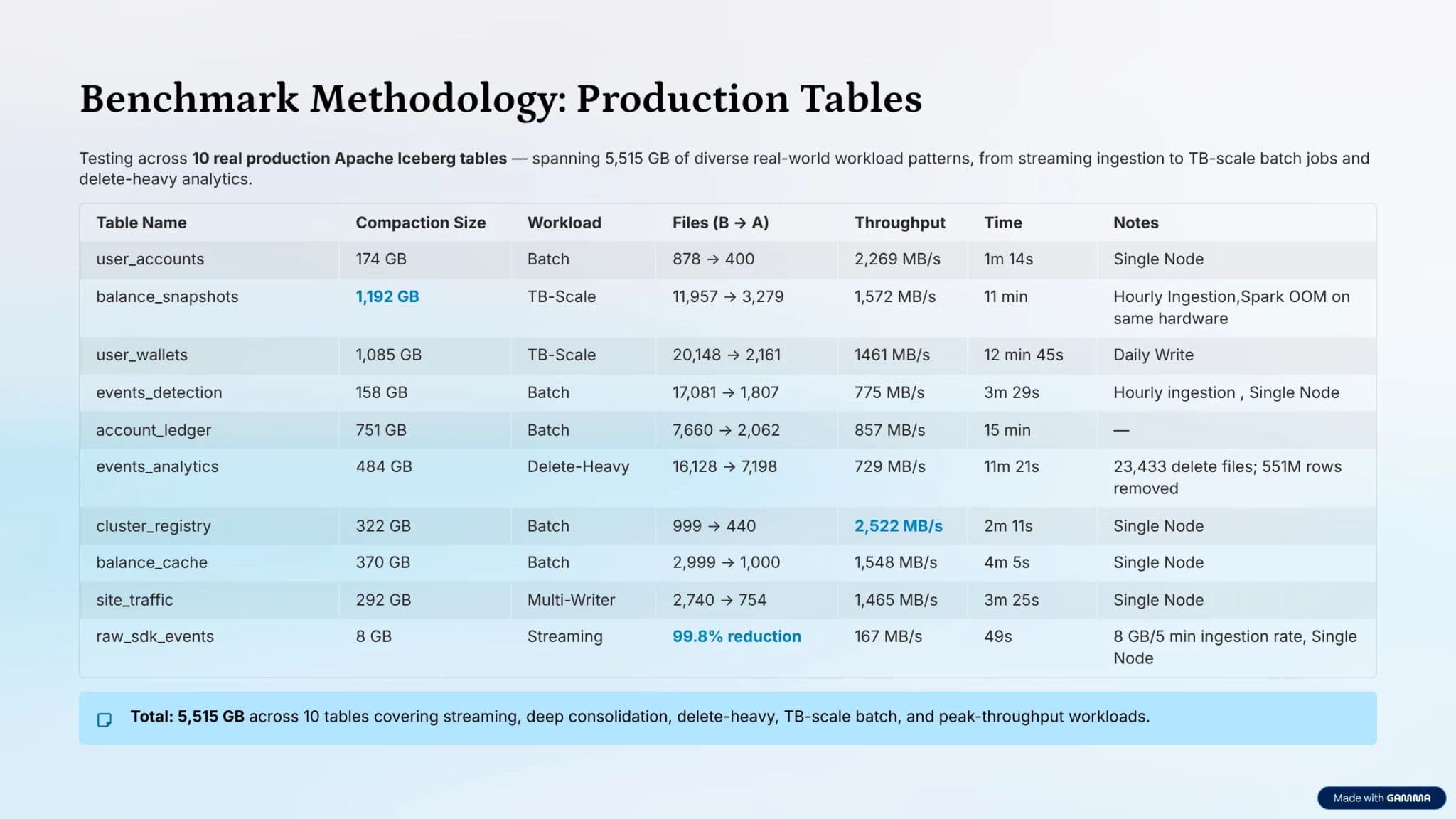

LakeOps runs compaction on an engine built in Rust on Apache DataFusion. The architecture processes Parquet data through Arrow columnar buffers with bounded memory, lock-free parallelism, no garbage collection, and no executor provisioning. The engine reads Iceberg metadata, identifies suboptimal partitions, rewrites files with the selected sort order, and commits atomically through Iceberg's optimistic concurrency control — all without a JVM in the path.

Every compaction commit is non-blocking: concurrent readers and writers are never interrupted. If a conflict is detected at commit time (because another process modified the same partition), the engine retries the affected partition on the next cycle — no data loss, no corruption, no blocked pipelines.
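The commit-or-defer behavior can be sketched with a toy table that accepts a commit only if it was planned against the current snapshot. This is a deliberate simplification: the classes and names are invented, and Iceberg's real conflict validation is finer-grained than a single snapshot comparison.

```python
class CommitConflict(Exception):
    """Raised when the table changed since the rewrite was planned."""


class InMemoryTable:
    """Toy table: a commit succeeds only against the current snapshot."""

    def __init__(self) -> None:
        self.snapshot_id = 0

    def commit(self, base_snapshot_id: int) -> int:
        if base_snapshot_id != self.snapshot_id:
            raise CommitConflict(
                f"expected {base_snapshot_id}, found {self.snapshot_id}")
        self.snapshot_id += 1
        return self.snapshot_id


def compact_cycle(table, partitions, concurrent_write=None):
    """Rewrite each partition; defer any partition whose commit conflicts."""
    committed, deferred = [], []
    for partition in partitions:
        base = table.snapshot_id                # snapshot the rewrite is planned against
        if concurrent_write is not None:
            concurrent_write(table, partition)  # simulate a writer racing the rewrite
        try:
            table.commit(base)
            committed.append(partition)
        except CommitConflict:
            deferred.append(partition)          # retried on the next cycle
    return committed, deferred


def racing_writer(table, partition):
    if partition == "p2":
        table.commit(table.snapshot_id)         # another process commits first


done, retry_later = compact_cycle(InMemoryTable(), ["p1", "p2", "p3"], racing_writer)
print(done, retry_later)  # ['p1', 'p3'] ['p2']
```

Because a rejected commit never touches the table, the worst case for a conflict is wasted rewrite work; readers and the competing writer are unaffected.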

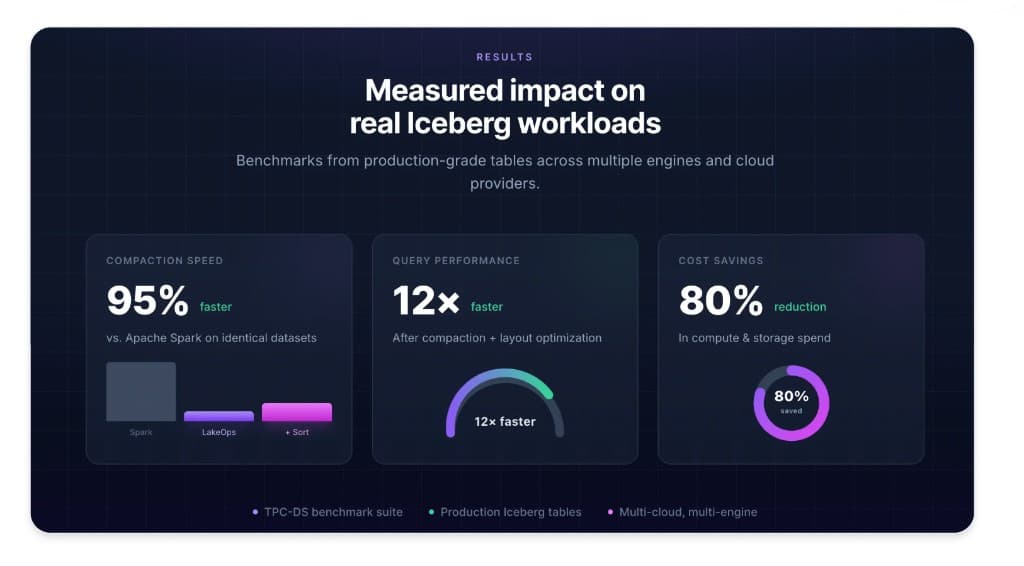

The practical difference is measured in both speed and cost. In production benchmarks across batch, streaming, delete-heavy, and multi-writer workloads, the Rust engine processes data at throughput levels that Spark cannot match on equivalent hardware. On one 1.2 TB table, Spark failed with an out-of-memory error while the Rust engine completed compaction successfully. The cost-per-terabyte drops to roughly a tenth of what JVM-based approaches require.

The engine also self-improves. Because it records per-table throughput, partition structure, and memory usage from each run, subsequent compaction passes on the same table execute faster — the planner has learned the workload characteristics and allocates resources more efficiently. In production, the same table has been observed compacting progressively faster across consecutive runs as the planner converges on optimal resource allocation.

5. Coordination with the maintenance stack

Compaction does not operate in a vacuum. Its efficiency depends on what happens before and after it — and the order matters.

If compaction runs before snapshot expiration, it may rewrite files that are about to be dereferenced, wasting compute on data that will be garbage-collected. If manifest optimization runs before compaction finishes, it targets an intermediate file layout that will change again at commit time. If orphan cleanup overlaps with an in-progress compaction, it risks deleting files the engine has written but not yet committed.

A control plane eliminates these conflicts by treating maintenance as a coordinated pipeline. The sequence: (1) snapshot expiration trims the metadata tree and releases stale data → (2) orphan cleanup removes the files expiration just dereferenced → (3) compaction runs against the clean, current dataset — every file it rewrites is a file the lake actually needs → (4) manifest optimization consolidates metadata against the final compacted layout.
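The ordering above can be expressed as a small dependency graph, so the sequence is derived rather than hard-coded. The step names are labels invented for this sketch; `graphlib` is part of the Python standard library from 3.9 onward.

```python
from graphlib import TopologicalSorter

# Each step maps to the set of steps that must finish before it starts.
MAINTENANCE_DEPENDENCIES = {
    "snapshot_expiration": set(),
    "orphan_cleanup": {"snapshot_expiration"},
    "compaction": {"orphan_cleanup"},
    "manifest_optimization": {"compaction"},
}


def maintenance_order(dependencies: dict[str, set[str]]) -> list[str]:
    """Return an execution order that respects every dependency."""
    return list(TopologicalSorter(dependencies).static_order())


print(maintenance_order(MAINTENANCE_DEPENDENCIES))
# ['snapshot_expiration', 'orphan_cleanup', 'compaction', 'manifest_optimization']
```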

This sequencing improves compaction quality. When compaction operates on a dataset already pruned of expired and orphaned data, the rewrite produces a tighter output — fewer files, cleaner partitions, leaner manifests downstream. The lakehouse optimization guide covers the full pipeline in detail.

6. Per-table file size and threshold tuning

The default 512 MB target file size is a reasonable starting point, but it is not optimal for every table. The right file size depends on the table's read pattern, write frequency, and partition cardinality:

- Tables serving point lookups (feature stores, real-time scoring): smaller files (64–128 MB) let engines locate the relevant row group faster without scanning through a large file.
- Tables serving full-partition scans (daily aggregation, BI dashboards): larger files (256–512 MB) reduce the number of S3 GET requests and planning overhead.
- High-frequency streaming tables: may need different targets across partitions depending on per-partition data volume.

The control plane evaluates file size distribution per table and adjusts thresholds based on observed access patterns and write behavior. It monitors compaction efficiency — if a table's files consistently fall below the target shortly after compaction (because of high-frequency appends), the system tightens the trigger threshold or increases compaction frequency for that table. The goal is that every table converges toward its own optimal file distribution rather than being forced into a universal default.
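A minimal sketch of per-table tuning might look like the following. The target sizes come from the ranges listed above; the 0.5 cut-offs, the halving rule, and both function names are hypothetical choices made for illustration.

```python
MB = 1024 * 1024


def target_file_bytes(point_lookup_share: float) -> int:
    """Pick a target size from the share of queries that are point lookups."""
    # Sizes follow the ranges in the text; the 0.5 cut-off is hypothetical.
    return 128 * MB if point_lookup_share >= 0.5 else 512 * MB


def next_trigger_file_count(current_trigger: int,
                            avg_file_bytes_after_compaction: float,
                            target_bytes: int,
                            floor: int = 10) -> int:
    """Tighten the file-count trigger when files fall well below target."""
    if avg_file_bytes_after_compaction < 0.5 * target_bytes:
        return max(floor, current_trigger // 2)
    return current_trigger


feature_store_target = target_file_bytes(point_lookup_share=0.9)
print(feature_store_target // MB)  # 128
print(next_trigger_file_count(200, 40 * MB, feature_store_target))  # 100
```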

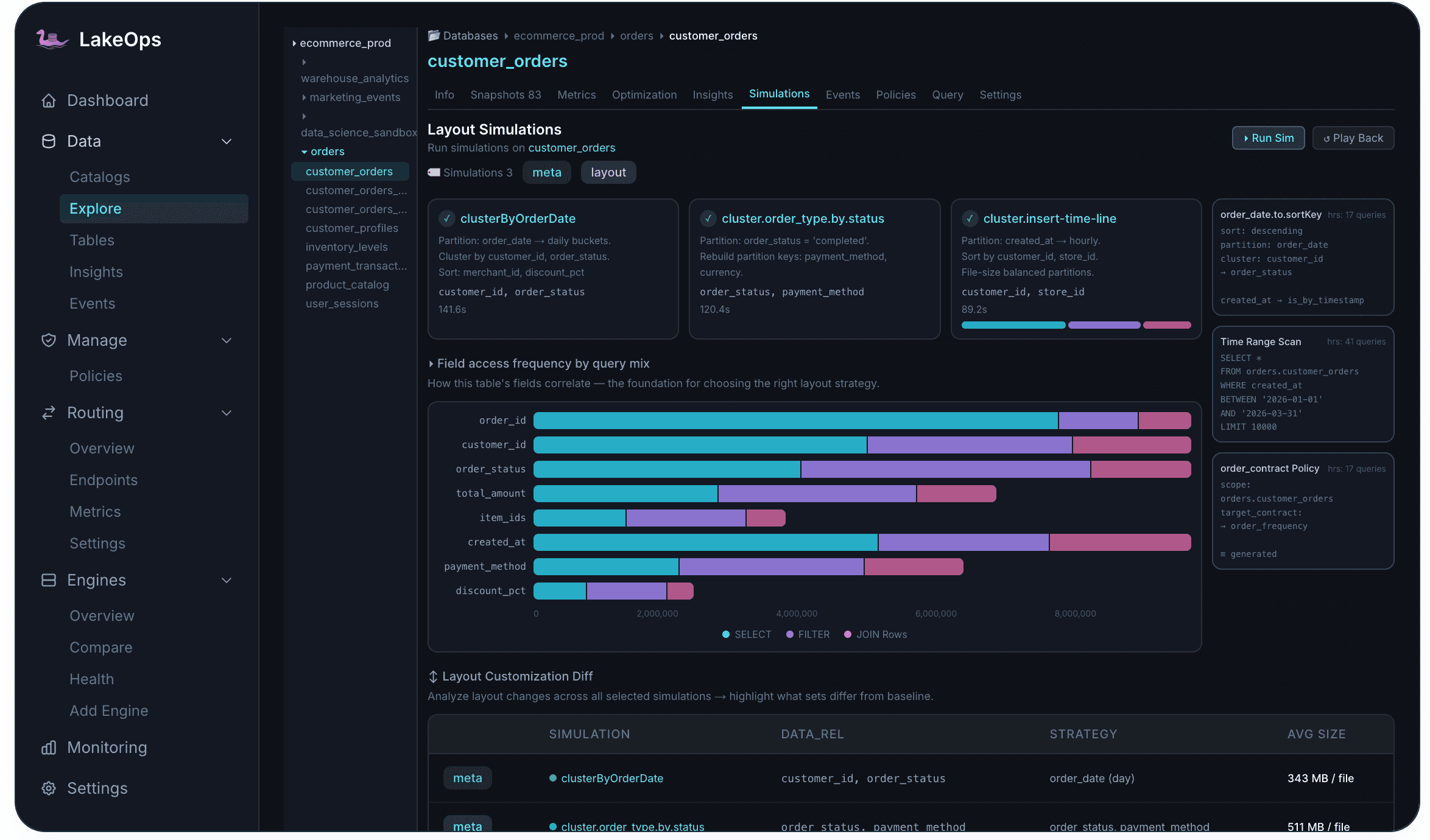

7. Simulating layout changes before committing

Changing a sort order or file size target rewrites every data file in the table. If the new layout turns out to be worse for the dominant query pattern, you have paid the full cost of a rewrite with negative returns.

Layout simulations solve this by testing changes on an Iceberg branch before applying them to production. The control plane creates a branch from the latest snapshot, applies the proposed layout change, replays the table's actual query patterns against both the baseline and the candidate layout, and compares scan volumes, file pruning rates, and estimated query costs. The branch is discarded afterward — no production data is modified.
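The comparison step of such a simulation can be approximated with file-level min/max statistics alone. The sketch below assumes a single integer sort key, range queries expressed as `(lo, hi)` pairs, and made-up file statistics; it illustrates only the scan-volume comparison, not branch creation or query replay against a real engine.

```python
from dataclasses import dataclass


@dataclass
class FileStats:
    min_value: int
    max_value: int
    size_bytes: int


def scanned_bytes(files: list[FileStats], queries: list[tuple[int, int]]) -> int:
    """Total bytes read when each query (lo, hi) skips non-overlapping files."""
    return sum(f.size_bytes
               for lo, hi in queries
               for f in files
               if f.min_value <= hi and f.max_value >= lo)


def compare_layouts(baseline, candidate, queries) -> dict:
    """Compare scan volume for two layouts under the same replayed queries."""
    base = scanned_bytes(baseline, queries)
    cand = scanned_bytes(candidate, queries)
    return {"baseline": base, "candidate": cand, "adopt": cand < base}


# Unsorted baseline: every file spans the full key range.
baseline = [FileStats(0, 999, 100) for _ in range(4)]
# Sorted candidate: each file covers a disjoint slice of the key range.
candidate = [FileStats(i * 250, i * 250 + 249, 100) for i in range(4)]
replayed_queries = [(10, 20), (300, 310), (240, 260)]

print(compare_layouts(baseline, candidate, replayed_queries))
# {'baseline': 1200, 'candidate': 400, 'adopt': True}
```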

This is particularly valuable for compaction strategy changes. Before switching a table from binpack to sorted compaction, or changing the sort columns, you can see the projected impact on real queries. Before adjusting the target file size, you can verify that the new distribution actually improves the read-to-write cost ratio.

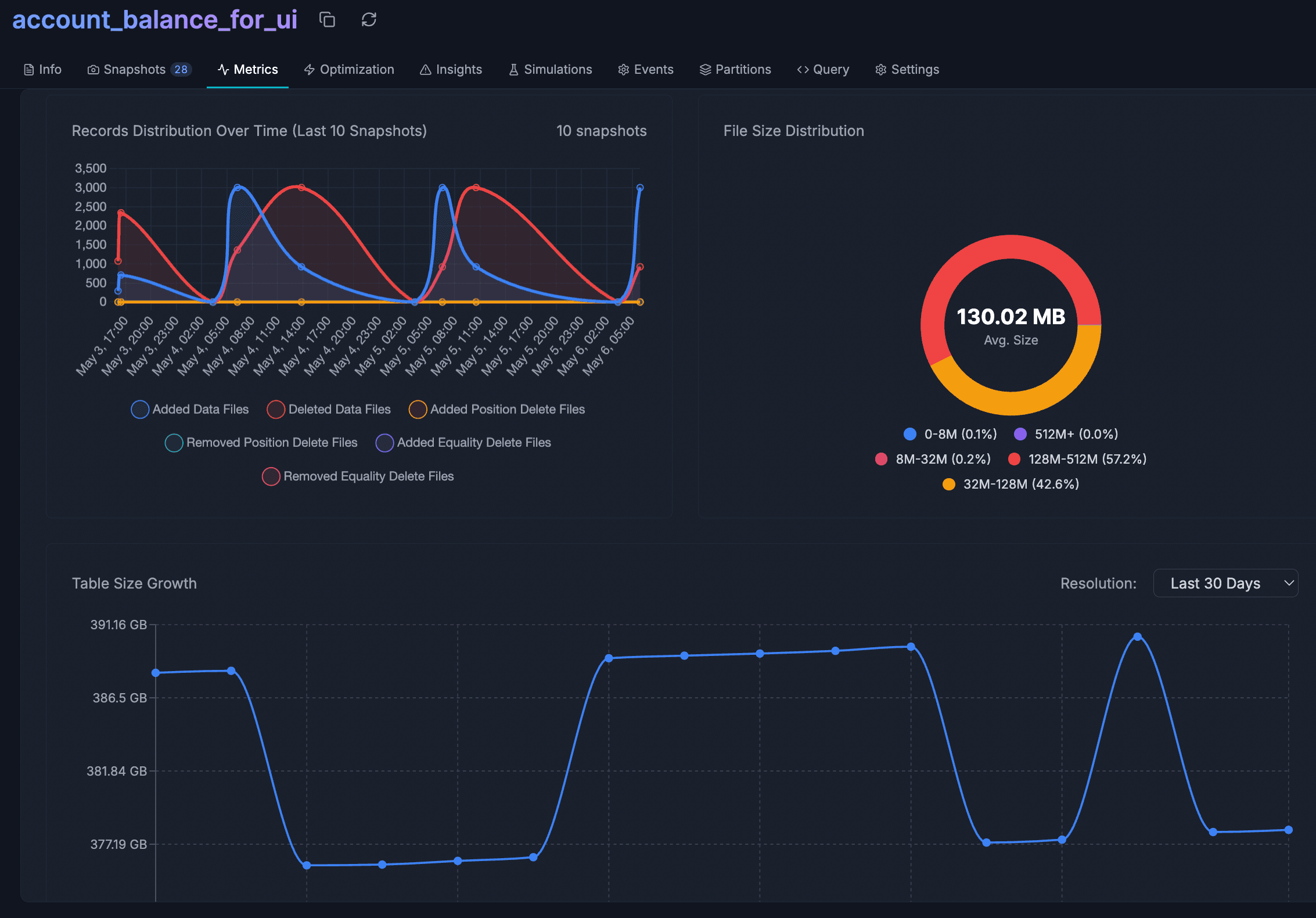

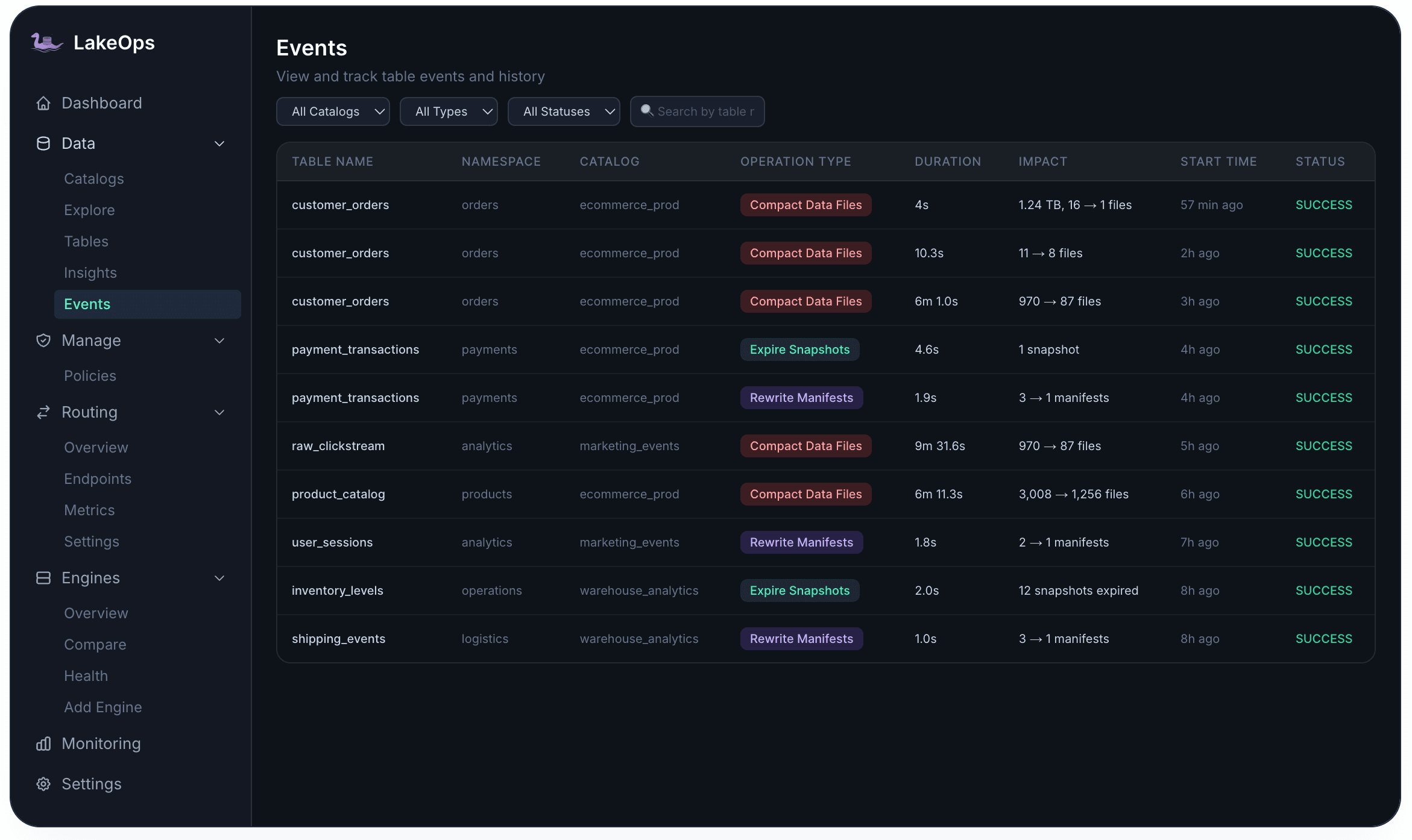

8. Full observability into compaction health

Compaction produces measurable outcomes — and those outcomes need to be visible. Without observability, platform teams cannot tell whether compaction is keeping up with write volume, whether the sort strategy is effective, or whether compaction costs are justified by query-side savings.

The control plane provides visibility at both the lake and table level. The dashboard shows aggregate compaction activity — total operations, files consolidated, data volume processed, and how query acceleration has changed over time. At the table level, every compaction operation is logged in the Events tab with its duration, before/after file counts, and data volume — providing a full audit trail for compliance and debugging.

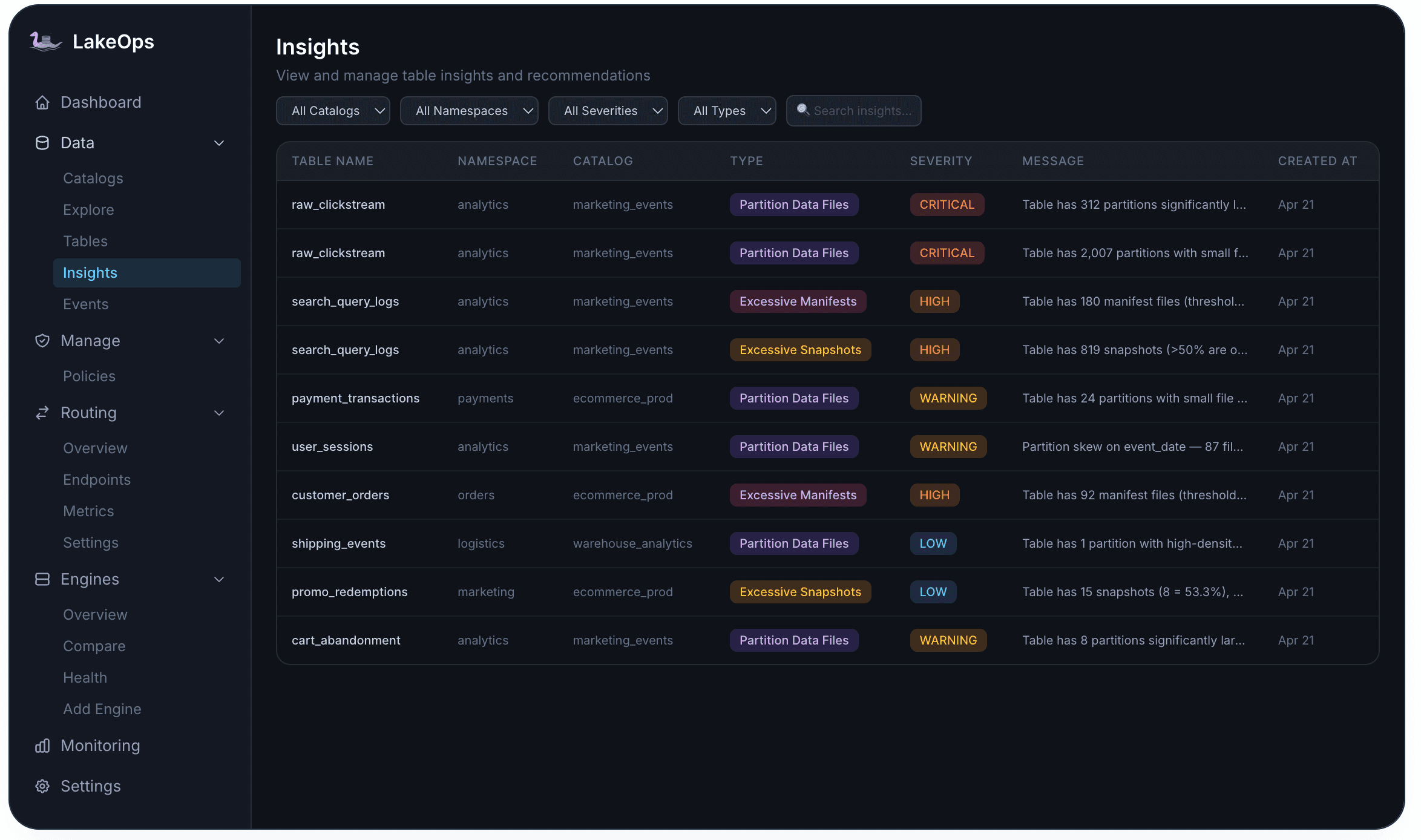

Proactive alerts complement the metrics. When a table's file count exceeds a healthy threshold, or when average file size drifts below the target range, the Insights engine raises an alert at the appropriate severity level — CRITICAL, HIGH, WARNING, or LOW — linked directly to the affected table. This turns compaction from a fire-and-forget cron job into a continuously monitored optimization surface.
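A severity mapping for the file-count alert could be sketched like this. Only the four severity names come from the text; the multipliers, the assumption that the names are listed from most to least severe, and the function name are illustrative.

```python
def file_count_severity(file_count: int, healthy_max: int):
    """Map a partition's file count to an alert severity, or None if healthy."""
    ratio = file_count / healthy_max
    # Multipliers are hypothetical; only the severity names come from the text.
    if ratio >= 10:
        return "CRITICAL"
    if ratio >= 5:
        return "HIGH"
    if ratio >= 2:
        return "WARNING"
    if ratio > 1:
        return "LOW"
    return None


for count in (150, 250, 600, 1200, 5000):
    print(count, file_count_severity(count, healthy_max=200))
```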

9. Fleet-wide compaction policies

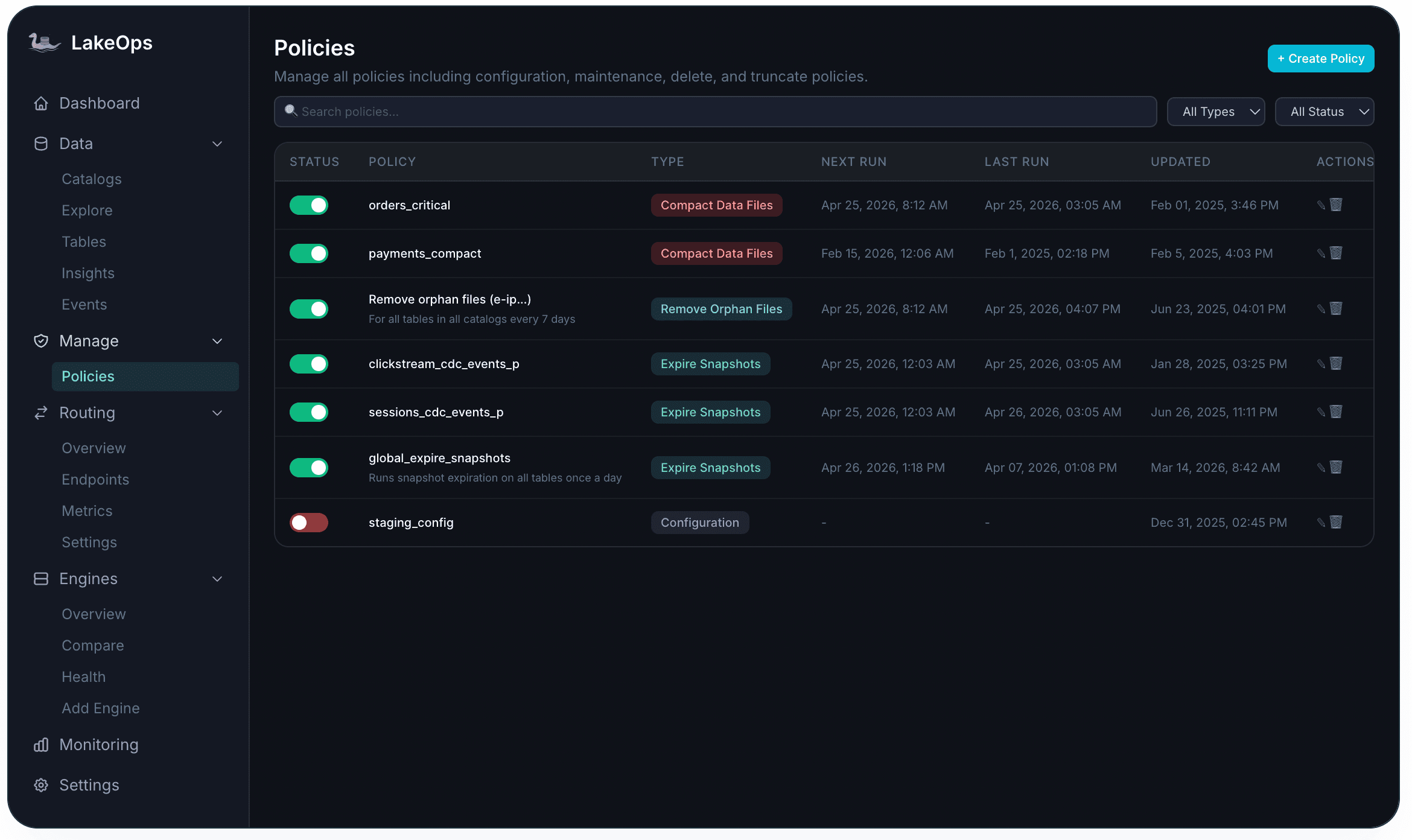

At scale, compaction rules need to be declared as policy — not configured per table through ad-hoc scripts. The control plane lets you define compaction policies that apply across catalogs, namespaces, or specific table patterns. Each policy specifies the trigger conditions, the strategy (binpack, sorted, or auto-select based on query telemetry), the schedule constraints (off-peak windows, concurrency limits), and the scope.

Scope resolution follows a specificity hierarchy: a table-level policy overrides a namespace default, which overrides a catalog-wide baseline. This lets platform teams set sensible defaults for the entire lake while allowing targeted overrides for tables with unusual access patterns or SLAs. Policies are versioned with a full audit trail — every change is tracked with who made it and when.
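The specificity hierarchy amounts to a lookup from most specific to least specific scope. The sketch below assumes a policy store keyed by `(catalog, namespace, table)` with `None` as a wildcard; the store layout, policy fields, and sample names are hypothetical, and pattern-based scopes are not covered.

```python
from typing import Optional

# Hypothetical policy store keyed by (catalog, namespace, table);
# None means "applies to everything at that level".
POLICIES = {
    ("prod", None, None): {"strategy": "binpack"},
    ("prod", "events", None): {"strategy": "auto"},
    ("prod", "events", "clicks"): {"strategy": "sort"},
}


def resolve_policy(catalog: str, namespace: str, table: str,
                   policies: dict = POLICIES) -> Optional[dict]:
    """Return the most specific policy: table, then namespace, then catalog."""
    for key in ((catalog, namespace, table),
                (catalog, namespace, None),
                (catalog, None, None)):
        if key in policies:
            return policies[key]
    return None


print(resolve_policy("prod", "events", "clicks")["strategy"])     # sort
print(resolve_policy("prod", "events", "sessions")["strategy"])   # auto
print(resolve_policy("prod", "billing", "invoices")["strategy"])  # binpack
```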

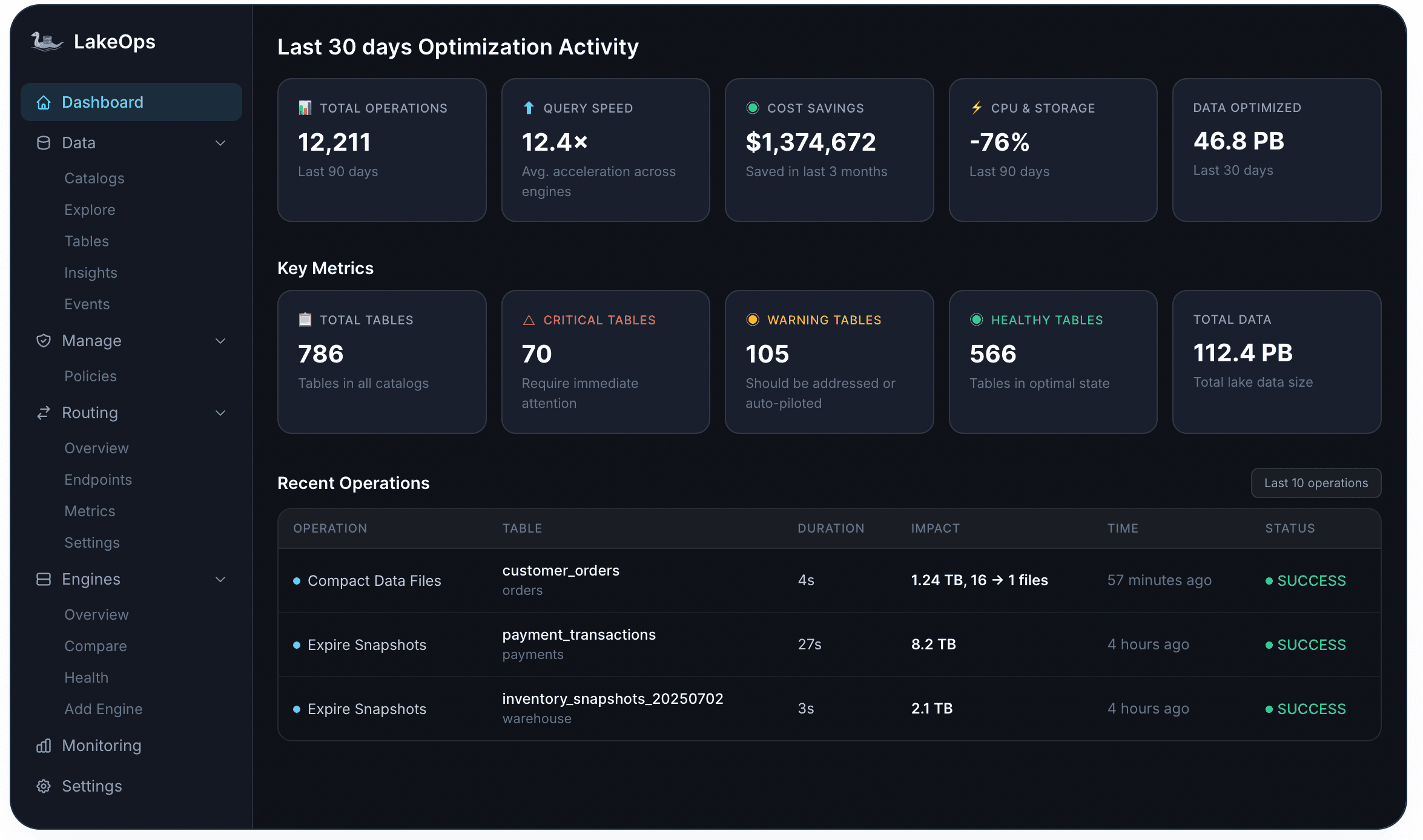

The outcome: compaction as a continuous optimization loop

When these components work together — event-driven triggers, query-aware sorting, delete file handling, a fast Rust engine, coordinated sequencing, per-table tuning, simulation-validated layout changes, full observability, and fleet-wide policies — compaction stops being a maintenance chore and becomes a continuous optimization loop.

Every write that lands on the lake is evaluated. Every table's file layout is compared against its current access patterns. Every compaction run produces a tighter, better-aligned dataset than the one before. And every subsequent query — across every engine, from every consumer — benefits from reduced scan volumes, faster planning, and lower compute costs.

The lake does not drift toward degradation between compaction runs. It converges toward an optimal state, continuously, table by table.

To see how compaction fits into the broader operational stack — observability, snapshot lifecycle, manifest health, orphan cleanup, routing, and AI readiness — read Iceberg Lakehouse Optimization — The Right Way or explore the LakeOps platform.