AI agents are becoming primary consumers of SQL infrastructure. They query data iteratively, issue the same query shapes repeatedly with different parameters, run SQL in tool-use loops without human review, and need richer context about what data exists before writing a query. The infrastructure they hit — Apache Iceberg tables sitting on object storage — was designed for scheduled ETL and dashboard refresh, not for autonomous agents issuing dozens of queries per reasoning step.

The gap shows up immediately. An agent answering a customer support ticket queries user history, recent orders, product metadata, and support logs. Each step generates new queries based on previous results. A single user interaction can produce 30–50 SQL statements. Multiply that across hundreds of concurrent agent sessions and the workload profile looks nothing like the BI traffic your lake was sized for.

This post covers what actually breaks, why, and the infrastructure required to make an Iceberg lake perform well under agentic workloads — drawing from the QueryFlux design document for the SQL middleware layer and from production deployment patterns for the storage optimization layer.

What breaks when agents query Iceberg tables

The failure modes are structural, not incidental. They compound each other and cannot be fixed by tuning a single parameter.

Query amplification. Traditional query planning assumes tens of concurrent queries per second. Agents produce orders of magnitude more. A research agent exploring a dataset generates schema discovery queries, sample queries, aggregation queries, and validation queries — all within a single tool-use loop. Infrastructure sized for 100 concurrent dashboard users suddenly sees 5,000 agent-generated queries per minute. The I/O subsystem, metadata catalog, and query engine concurrency limits were never designed for this fanout.

Latency compounds through reasoning chains. When an agent runs 12 sequential queries in a reasoning loop, each 3-second query delay adds up to 36 seconds of wall-clock time for a single interaction. Users perceive this as the agent being broken. The latency tolerance for agent SQL is fundamentally different from batch or dashboard workloads — sub-second p95 is the target, not a nice-to-have. On uncompacted Iceberg tables with thousands of small files and fragmented manifests, even a simple partition-pruned scan can take 5–10 seconds due to S3 GET amplification and manifest listing overhead.

Repetitive template execution. Research on production query workloads shows that roughly 80% of queries are repeated templates — the same SQL structure with different literal values. Agents amplify this pattern further because tool-use frameworks execute parameterized queries in loops. The same `SELECT * FROM orders WHERE customer_id = ? AND status = ?` runs thousands of times per day with different values. Without a system that recognizes and optimizes for template repetition, every execution pays full planning cost.

Unpredictable access patterns. BI dashboards have stable query shapes. Agents improvise. They generate novel SQL based on conversation context, explore unfamiliar tables, and access columns no one anticipated. Partition strategies and sort orders tuned for known dashboard filters may be irrelevant to agent access patterns — or worse, actively harmful if they cause full scans on agent-typical predicates.

Safety failures at machine speed. A human analyst who accidentally runs an expensive query learns not to do it again. An agent in a loop repeats the mistake indefinitely, compounding costs every iteration. Without enforcement at the SQL layer, a single misconfigured agent can scan petabytes of data, expose PII in query results passed back to an LLM context window, run DDL against production tables, or generate unbounded compute spend — all within minutes, with no human in the loop to notice or intervene.

The five-layer architecture

Fixing these problems requires a control plane between agents and your Iceberg tables. The architecture has five layers, each targeting a specific failure mode. They work independently but compound when deployed together.

1. MCP: Agent-native connectivity

The Model Context Protocol (MCP) is the emerging standard for how AI agents interact with external tools and data sources. It fills the same role for agent-to-infrastructure communication that REST catalogs fill for engine-to-catalog communication — a standardized, schema-aware interface that any compliant client can connect to without custom integration code.

A well-designed MCP interface for Iceberg access exposes discrete, purpose-built tools rather than a single "run anything" endpoint. This design constraint is intentional. Agents that get a generic `execute_sql` tool tend to skip schema discovery and hallucinate table names. Forcing discovery through separate tools improves query accuracy.

The tool decomposition that works in practice:

list_schemas returns all available schemas and databases across connected engines. Agents call this before constructing queries to avoid guessing at namespace structures.

describe_table returns column names, types, partition specs, sort orders, and optionally sample rows in a single call. This eliminates the multiple round-trips agents typically make to understand a table's shape — reducing both latency and token cost.

execute_query runs SQL through the full routing and optimization pipeline. It accepts an optional `engine_hint` for cases where the agent has preference, and enforces `max_rows` by default to prevent unbounded result sets from flooding the LLM context window.

explain_query returns estimated cost, row count, and execution plan without running the query. Agents use this for pre-flight cost checks and to decide whether to proceed or narrow their query.

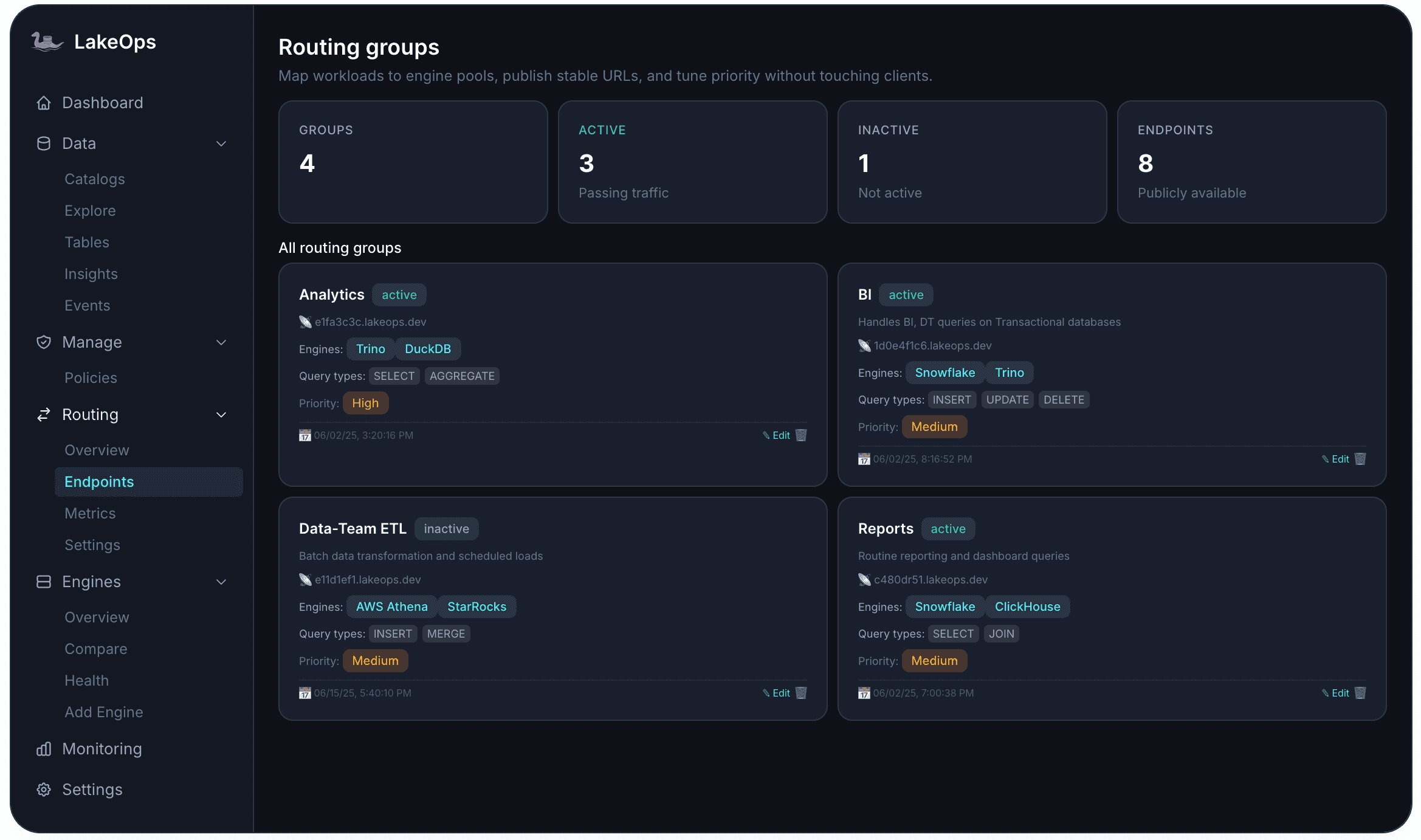

Each routing endpoint gets a stable URL that agents connect to directly — e.g., `storefront-analytics.lakeops.dev`. The endpoint defines which engines are available, what query types are allowed, and what priority level applies. Agents inherit the full policy stack of their endpoint (guardrails, routing rules, cost limits) without per-agent configuration.

Compatible agent frameworks include Claude, LangChain, LlamaIndex, and any custom MCP client. The key property is zero integration code — agents connect via standard MCP protocol, call `list_schemas` to discover what is available, and start querying.

An often-overlooked requirement: async query support. Agents run in tool-use loops. A synchronous execute call that blocks for 10 minutes on a large scan ties up the entire reasoning chain and risks gateway timeouts. The MCP layer needs submit/poll/cancel semantics with SSE streaming so agents can monitor progress and handle long-running queries gracefully.

2. Query guardrails

Giving agents SQL access to production data requires enforcement at the query level, not at the application level. The guard chain sits between routing and dispatch — every query passes through it regardless of which frontend or agent issued it.

Guards form an ordered chain evaluated sequentially. The first non-Allow result wins. The ordering matters: cheaper checks gate expensive ones.

ReadOnlyGuard blocks DDL and DML statements (INSERT, UPDATE, DELETE, DROP, CREATE, ALTER, TRUNCATE) for agent-originated sessions. Implementation uses SQL parsing (sqlparser-rs or equivalent) rather than string matching — catching CTEs that wrap mutations, function calls with side effects, and other circumvention patterns that naive blocklists miss.

RowLimitGuard injects `LIMIT N` when a query lacks one. Agents in reasoning loops often forget to limit results, leading to multi-gigabyte result sets that overflow LLM context and generate massive egress costs. The guard wraps the query: `SELECT * FROM ({original_sql}) __limited LIMIT {max_rows}`. Configurable per routing group.

CostEstimateGuard issues EXPLAIN before execution and rejects queries where estimated scanned bytes exceed a configurable threshold. This is the primary defense against the cartesian join and missing-WHERE patterns that agents in tool-use loops frequently generate — queries that pass syntax checks but would scan terabytes at runtime. The guard activates only for engines that return structured EXPLAIN output (Trino, DuckDB); for others it falls back to warn rather than hard-reject.

PIIMaskGuard rewrites queries to exclude or hash columns tagged as sensitive in the catalog configuration. Three strategies: Exclude (removes column from `SELECT *`), Hash (wraps in `SHA256(CAST(col AS VARCHAR))`), or NullOut (replaces with `NULL`). This prevents PII from ever entering the LLM context window — a compliance requirement for any agent with access to tables containing user data.

HumanApprovalGuard pauses high-stakes queries, sends a webhook notification (Slack, email, or custom), and waits for human approval or timeout. The trigger can be pattern-based, cost-threshold-based, or table-sensitivity-based. Timeout behavior is configurable: auto-reject, auto-approve, or queue indefinitely.

Guards are composable. A typical agent-facing routing group stacks: `read_only → row_limit → cost_estimate → pii_mask`. A data engineering agent gets: `cost_estimate → human_approval` for DDL operations. The configuration is per routing group, not per agent — the endpoint defines the trust boundary.

1groups:2 - name: agent-analytics3 guards:4 - type: read_only5 applies_to: agent_sessions6 - type: row_limit7 max_rows: 50008 inject_if_missing: true9 - type: cost_estimate10 max_scanned_bytes: 10_000_000_00011 - type: pii_mask12 sensitive_columns:13 "users.email": hash14 "users.ssn": exclude15 "payments.card_number": null_outEvery guard action is recorded in query history — what fired, what was rewritten, what was rejected — giving full auditability over what agents attempted and what the system allowed.

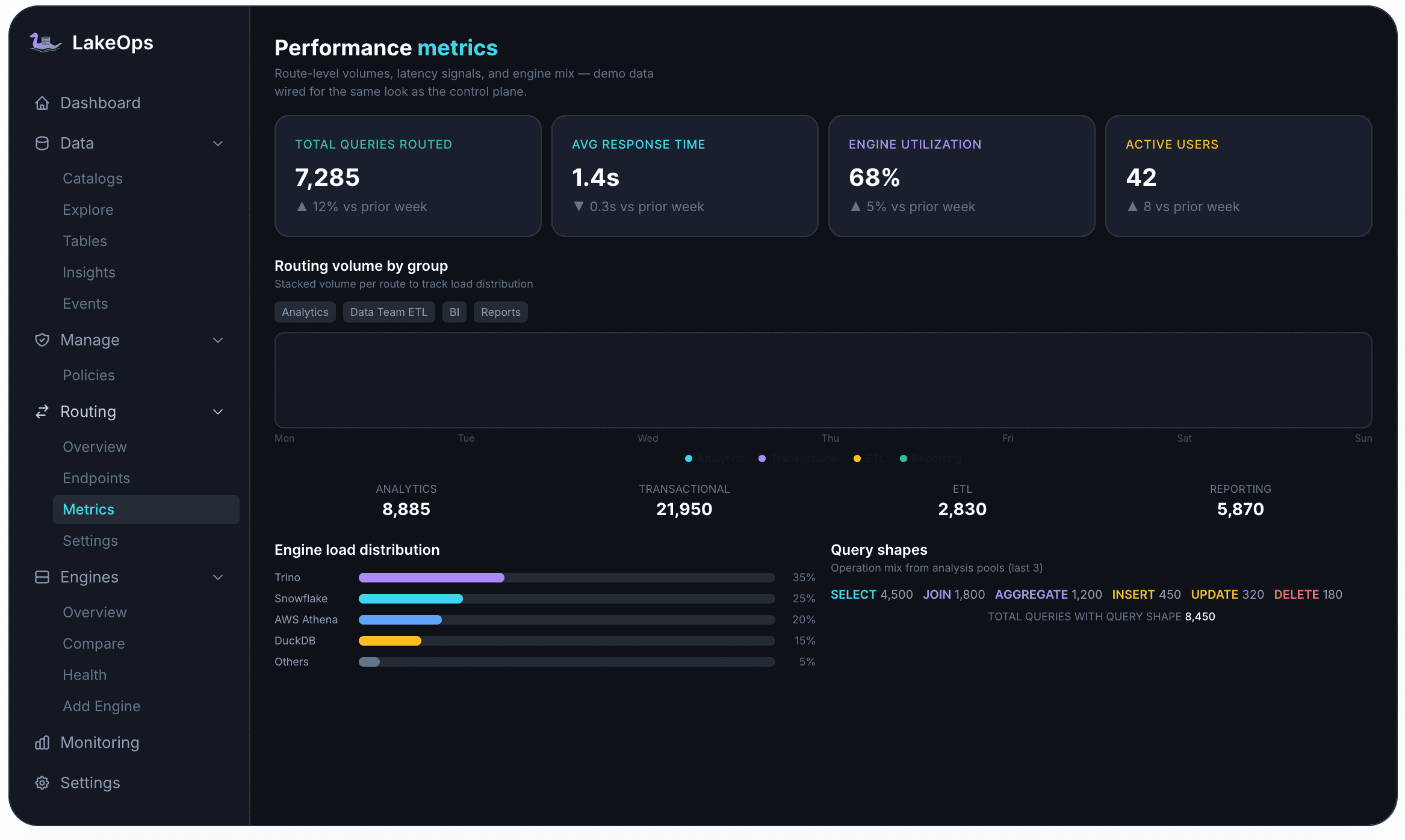

3. Three-router stack

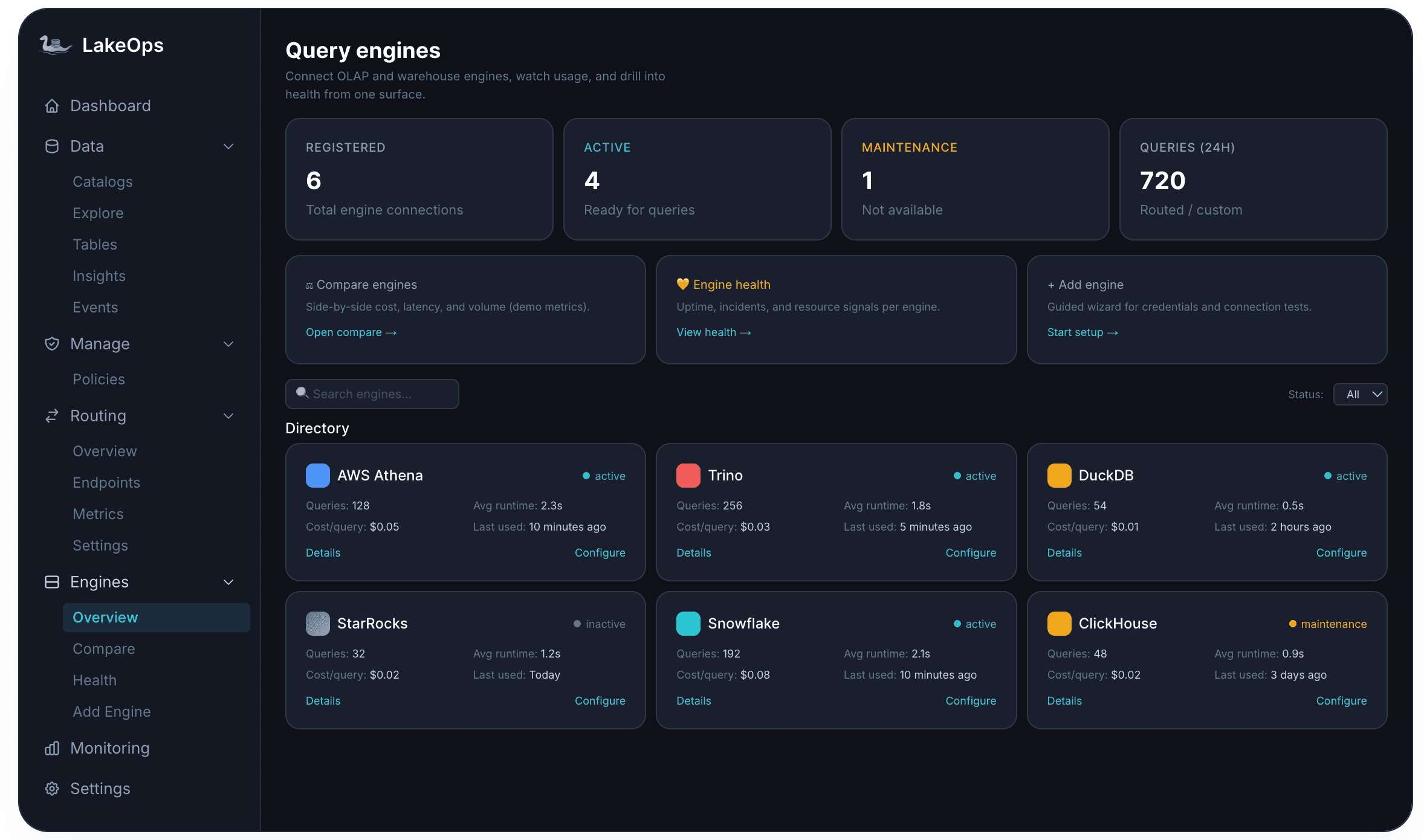

Production Iceberg deployments rarely use a single engine. A typical setup has Trino for interactive analytics, DuckDB for lightweight lookups, Snowflake for BI, Athena for ad-hoc exploration, and Spark for batch ETL. The wrong routing decision at agent scale is expensive: a simple metadata lookup that costs $0.001 on DuckDB costs $0.05 on Snowflake. Across thousands of agent interactions per day, that 50x difference in per-query cost determines whether the agent workload is economically viable.

The routing challenge for agents is that their query shapes are both repetitive (80% templates) and unpredictable (20% novel). A single router type cannot handle both efficiently. The solution is a three-router stack where each router handles what it is best at, and they fall through in sequence:

1. Adaptive router — pure statistics over query history. For any `parameterized_hash` with 20+ observations across multiple engines, it routes to the engine with the best p50 latency if the gap exceeds 25%. No LLM, no embeddings — just percentile math over execution history. Decision cost: 0ms (in-memory cache). Handles the 80% of traffic that consists of repeated templates.

2. LLM router — for novel query shapes that the adaptive router has never seen. Uses a language model (Claude Haiku or equivalent) with live table statistics and engine capabilities to reason about optimal placement. The LLM call is cached by `parameterized_hash`, so subsequent executions of the same template shape skip the LLM entirely. Handles novel patterns that need reasoning about query intent and engine strengths.

3. Semantic router — local embedding similarity against a library of known query shapes. When the LLM router's confidence is below threshold (< 0.60), the semantic router finds the most similar previously-routed query and uses its routing decision. Decision cost: ~1ms. Handles the long tail of rare query shapes.

4. Default group — fallback for queries that no router claims.

The critical property for agent workloads: the routing system improves automatically. As agents run queries and the feedback collector records execution metrics, the adaptive router builds confidence in routing decisions for repeated templates. New query shapes start at the LLM router, get cached, and eventually accumulate enough history to move to the adaptive router. The system converges toward optimal routing without manual configuration.

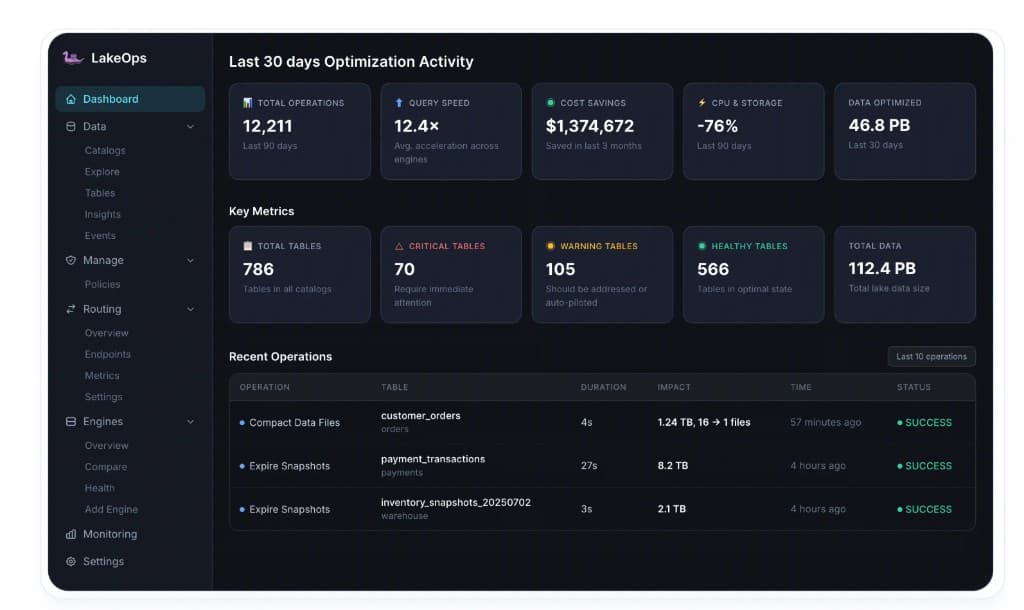

4. Self-optimizing storage

Routing gets queries to the right engine, but the engine still has to read data from object storage. If the underlying Iceberg table has thousands of small files (common after streaming ingestion), fragmented manifests (common after months of appends without maintenance), or sort orders that do not match agent filter patterns, even the best engine choice produces slow queries.

The storage optimization layer observes agent query patterns and continuously adjusts the physical layout:

Compaction with query-aware sort orders. Standard Iceberg compaction merges small files into larger ones. Query-aware compaction goes further — it analyzes field access frequency from actual queries (including agent queries) and adjusts sort order to match. If agents predominantly filter on `event_timestamp` and `user_id`, the compaction engine re-sorts data on those columns so predicate pushdown eliminates irrelevant row groups before any data is read from S3.

Manifest consolidation. Every Iceberg table accumulates manifest files over time. Each manifest must be read during query planning — a table with 500 manifests forces 500 S3 GET requests just to build the query plan, adding seconds of latency before any data is scanned. Manifest optimization consolidates these into a smaller set, keeping query planning fast regardless of table age.

Target file size adaptation. Agents doing point lookups benefit from smaller files (faster to open, less data read per file). Agents doing wide aggregations benefit from larger files (fewer S3 requests, better columnar compression). The optimization system observes query granularity and adjusts target file size per table based on actual access patterns.

Puffin statistics freshness. Column-level statistics (min/max values, null counts, distinct counts) allow query engines to skip entire files without reading them. Stale or missing statistics mean the engine reads more data than necessary. The system keeps statistics current as data arrives and compaction runs, ensuring data skipping works correctly for agent queries.

Priority scheduling. Tables receiving heavy agent traffic get compacted more frequently. The system monitors query latency and data freshness per table, and automatically elevates compaction priority for tables where agents are experiencing degraded performance.

The compound effect: as the storage layer optimizes for agent patterns, query latency drops, which makes the routing decisions more effective (faster engines become available for more query shapes), which generates more performance data, which further improves optimization decisions. The lake gets faster the more agents use it.

5. Agent context propagation and observability

The standard observability stack — query duration, bytes scanned, engine used — is not enough for agent workloads. You need to answer: which agent session produced this query? What step in its reasoning chain? Which tool call? What was the conversation context? Without this, debugging agent behavior and tuning guardrails is guesswork.

Agent context propagation carries structured metadata through the entire query pipeline:

1agent_context:2 agent_id: "customer-support-agent-v3"3 conversation_id: "conv_8f3a2b1c"4 step_index: 75 tool_call_id: "tc_9d4e5f6a"6 agent_framework: "langchain"This context attaches to every QueryRecord in the persistence layer, enabling three capabilities that do not exist without it:

Session replay. Given a `conversation_id`, reconstruct the entire sequence of SQL queries an agent issued during a single interaction, ordered by `step_index`. This turns query history into an agent reasoning debugger — you can see exactly why an agent reached a particular conclusion by inspecting the data it queried at each step.

Per-agent cost attribution. Aggregate compute cost by `agent_id` and `conversation_id`. Know exactly how much each agent (and each conversation) costs in query compute. Identify which agents are expensive, which conversations hit cost guardrails, and where optimization would have the highest ROI.

Feedback loop closure. The adaptive router uses execution metrics grouped by `parameterized_hash` to improve routing decisions. With agent context, you can also group by agent type — an agent that always runs aggregations routes differently from one that always does point lookups, even on the same table.

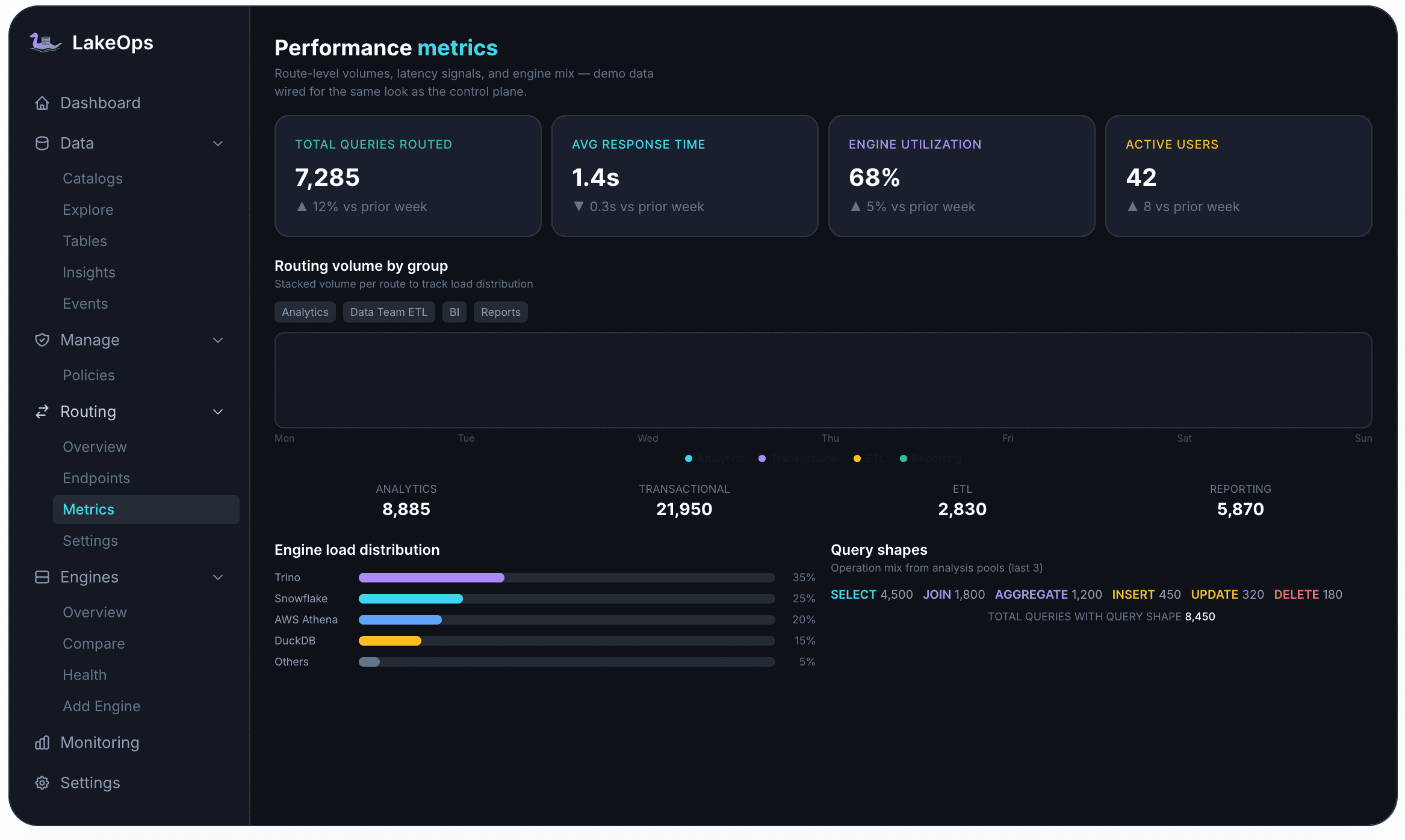

The observability layer surfaces this through four dimensions: routing metrics (query volume, latency distribution, engine utilization per agent), guardrail audit logs (what fired, what was rewritten, what was rejected), query shapes (parameterized template analysis showing what types of queries each agent produces), and cost tracking (per-agent, per-conversation, per-table spend attribution).

Agentic query patterns worth optimizing for

Beyond the infrastructure layers, certain query patterns unique to agent workloads deserve explicit attention because they recur across every deployment:

Temporal queries dominate. Agents frequently ask "what did I know about X as of time T?" rather than "what is the current state of X?" Iceberg's time-travel semantics support this natively, but the storage layer must be optimized for it — snapshot retention policies that balance time-travel depth against metadata overhead, and compaction strategies that preserve temporal access efficiency.

Cross-agent joins. Multi-agent systems produce queries like "What did Agent A know when it delegated to Agent B?" — temporal joins across two agents' activity records. These require sub-100ms latency because the calling agent waits on the result before taking its next action. Sort order on `(agent_id, timestamp)` and aggressive compaction of the activity log tables are prerequisites for acceptable performance.

Budget attribution queries. "How much did each agent spend during this task?" requires fast aggregation by `(agent_id, task_id, time_window)` over the cost records table. This is a well-defined, high-frequency template that the adaptive router should recognize and route to the cheapest analytical engine (typically DuckDB for this shape).

Discovery-heavy workloads. Agents spend significant query budget on schema exploration — listing tables, describing columns, sampling data. These queries are lightweight individually but occur at high frequency. The MCP layer should handle them from metadata cache without hitting the query engine at all, reducing both latency and cost to near-zero for this traffic.

Workload profiles and routing configuration

Different agent types produce fundamentally different workload shapes. Configuring routing groups, guardrails, and compaction priorities per workload type — rather than treating all agent traffic as homogeneous — is where the largest performance and cost gains materialize.

Interactive analytics agents (customer support, sales intelligence, conversational BI). These agents operate in human-facing loops where response time directly affects user experience. They issue 10–30 queries per interaction, mostly point lookups and narrow aggregations with tight filters. Latency target: sub-500ms p95. Routing strategy: DuckDB for single-table lookups, Trino for multi-table joins. Guard configuration: `read_only + row_limit(1000) + pii_mask`. Compaction priority: high, with sort orders aligned to common filter columns (customer_id, timestamp, status).

Data pipeline agents (ETL orchestration, data quality validation, schema evolution). These agents run in background loops without latency pressure but generate high query volume — schema introspection, row counts, distribution checks, and validation queries across many tables. Latency target: sub-5s p95. Routing strategy: Athena or Spark for wide scans, DuckDB for metadata queries. Guard configuration: `cost_estimate(50GB) + human_approval` for DDL. Compaction priority: medium, compaction scheduling aligned to pipeline cadence.

Feature store agents (ML feature retrieval, embedding generation, model training data). These agents run highly repetitive parameterized queries — the same template shape executed millions of times with different entity IDs. The adaptive router converges quickly on these patterns. Latency target: sub-200ms p95. Routing strategy: DuckDB or StarRocks for point lookups at scale. Guard configuration: `read_only + row_limit(500) + cost_estimate(1GB)`. Compaction priority: critical — these tables must be perfectly sorted on the entity key with small target file sizes for fast point reads.

Research and exploration agents (data scientists' assistants, report generation, ad-hoc analysis). These agents generate novel, diverse SQL shapes — joining unfamiliar tables, running aggregations at unusual granularity, exploring data distributions. The LLM and semantic routers handle most of this traffic since the adaptive router lacks history for novel shapes. Latency target: sub-10s p95. Routing strategy: Trino for complex joins, Snowflake for wide aggregations, DuckDB for exploratory sampling. Guard configuration: `read_only + row_limit(5000) + cost_estimate(100GB) + pii_mask`. Compaction priority: standard.

Multi-agent orchestration systems (agentic pipelines where agents delegate to other agents). These produce the temporal join and budget attribution patterns described above. The key requirement is fast writes (each agent step generates a record) and fast temporal lookups across agent activity logs. Latency target: sub-100ms for activity log queries. Routing strategy: StarRocks or DuckDB for time-series-optimized lookups. Guard configuration: `cost_estimate(10GB)` with write access to designated activity tables. Compaction priority: continuous — activity log tables need compaction every few minutes to prevent small-file accumulation from high-frequency writes.

Putting it together

The five layers interact as a closed system. MCP provides the connection interface. Guardrails enforce safety boundaries. Routing selects the optimal engine for each query shape. Storage optimization adapts to serve agent patterns faster. Observability closes the feedback loop — every metric feeds back into routing decisions, compaction priority, and guardrail tuning.

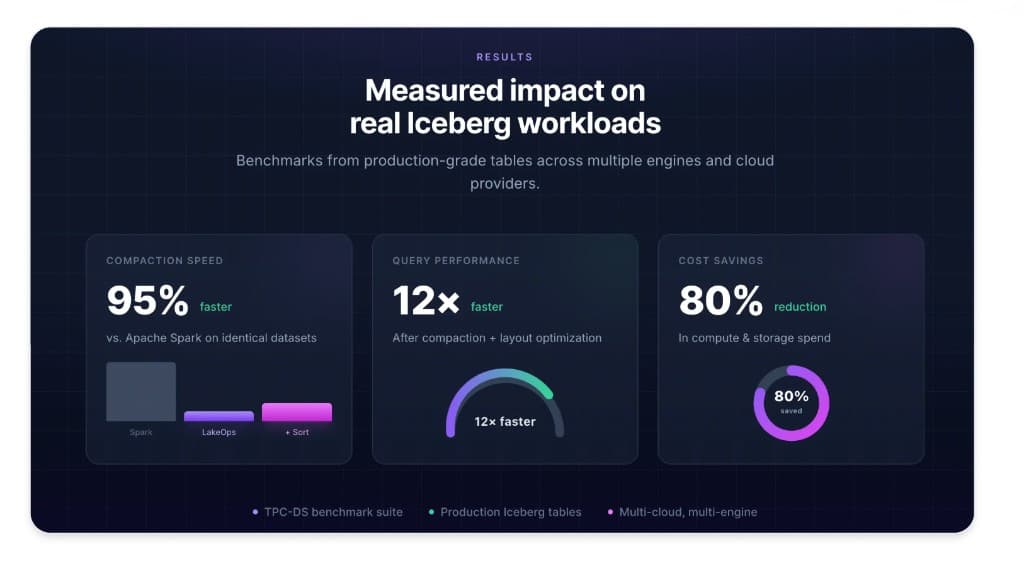

The measurable outcomes on production deployments: agent query p95 latency drops from 5–10 seconds to under 500ms as tables are compacted and sorted for observed access patterns. Per-query compute cost drops 65% through intelligent routing (DuckDB for lookups, Trino for analytics, Spark for batch). Guardrail interventions prevent an average of 3.2 petabyte-scale scans per day per deployment that agents would otherwise have executed in tool-use loops. And the system improves autonomously — no manual query log analysis, no sort-order tuning by hand, no routing rule maintenance.

The infrastructure investment is recoverable in weeks, not months. An agent issuing 50 queries per interaction at $0.05 per query on a mis-routed engine costs $2.50 per interaction. Routed optimally with compacted tables, the same interaction costs $0.15. At 1,000 interactions per day, that is $2,350 saved daily — more than covering the infrastructure in the first week.