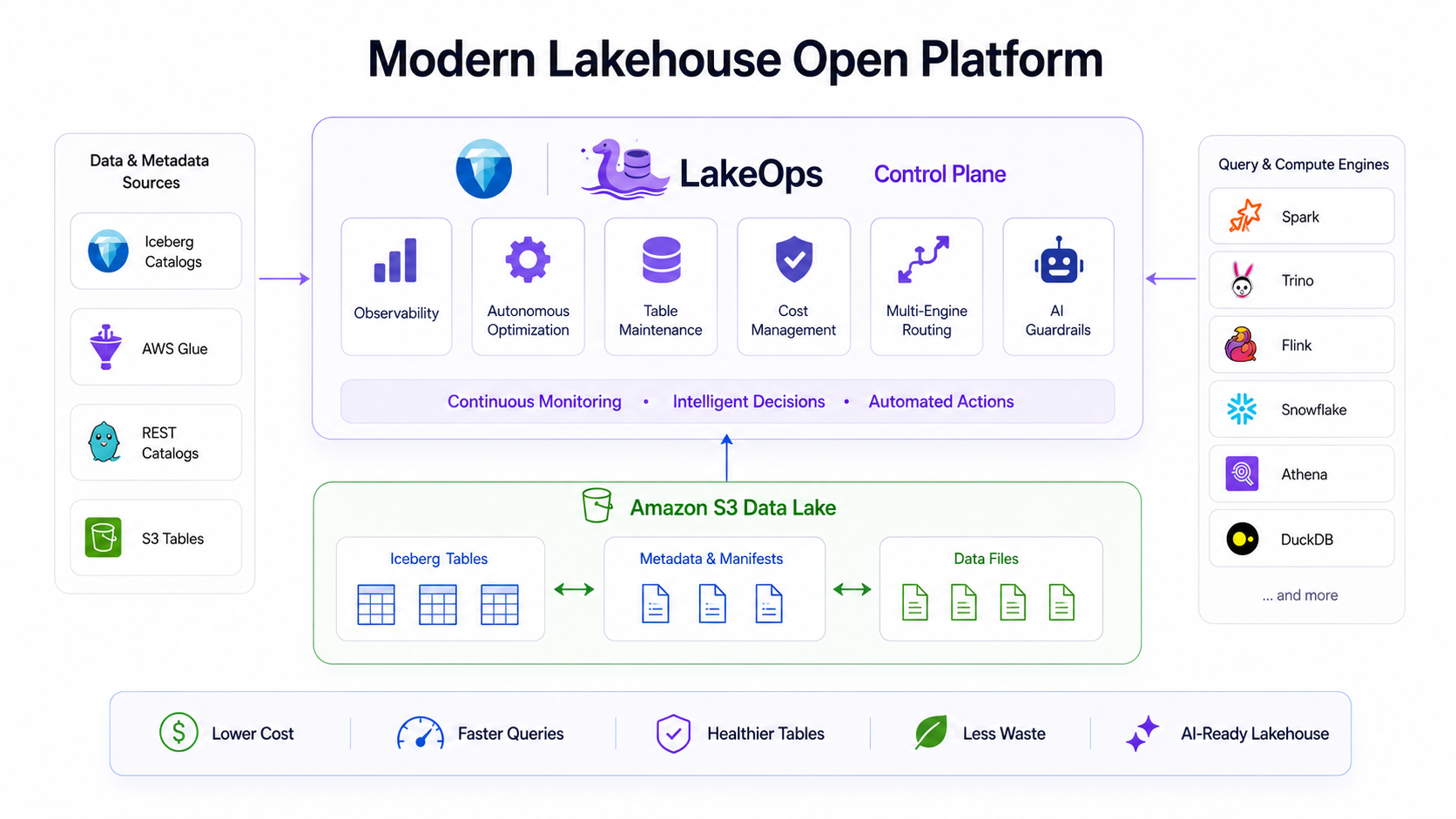

Autonomous Lakehouse Control Plane

Connect catalogs, metadata, storage, and query engines through one autonomous control layer for optimization, governance, and cost-efficient scale.

Runs on your stack

Full Iceberg benefits.

Snowflake-level ease.

Monitor health, run compaction and maintenance—across catalogs and engines—and manage policies from a single view.

[Charts: Optimization activity (last 30 days); Key metrics]

Recent Operations

| Operation | Table | Duration | Impact | Time | Status |
|---|---|---|---|---|---|
| Compact Data Files | orders.customer_orders | 4s | 1.24 TB, 16 → 1 files | 57 minutes ago | SUCCESS |
| Expire Snapshots | payments.payment_transactions | 27s | 8.2 TB | 4 hours ago | SUCCESS |
| Rewrite Manifests | analytics.raw_clickstream | 1.9s | 3 → 1 manifests | 5 hours ago | SUCCESS |
| Compact Data Files | products.product_catalog | 6m 11.3s | 3,008 → 1,256 files | 6 hours ago | SUCCESS |
| Remove Orphan Files | analytics.user_sessions | 13m 6.9s | 59,831 files, 74.81 GB freed | 7 hours ago | SUCCESS |

[Chart: Table status distribution]

Top 5 Tables Needing Optimization

| Table Name | Table Size | Status | Last Scan |
|---|---|---|---|
| analytics.raw_clickstream | 4.6 TB | CRITICAL | 2 hours ago |
| analytics.search_query_logs | 3.2 TB | CRITICAL | 3 hours ago |
| analytics.user_sessions | 1.9 TB | CRITICAL | 4 hours ago |
| orders.customer_orders | 1.24 TB | CRITICAL | 1 hour ago |
| payments.payment_transactions | 860 GB | CRITICAL | 2 hours ago |

Minutes to value with no risk

1. Connect & collect telemetry
2. Manual or autonomous management
3. Operations run & optimize
4. Observability & governance

Capabilities

Managed Data. Optimized Ops.

Agentic AI ready.

Every layer of your lakehouse — from compaction and metadata to engines, observability, and policy enforcement — managed from one control plane.

[Charts: Compaction duration (seconds); Cost of compaction ($)]

Compaction

Intelligent Compaction

A Rust-based compaction engine for Iceberg that analyzes query patterns and access frequency to optimize file layout at scale. Run more compactions in less time with a minimal resource footprint, so your lake stays performant without blocking writes or queries.

- 95% faster engine, built with Rust and AI
- Organize data by real query usage to cut I/O
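The page does not describe how the Rust engine decides which files to merge. As a generic illustration only, the classic bin-packing plan (the default `binpack` strategy of Iceberg's `rewrite_data_files` procedure) groups undersized files into rewrite tasks that approach a target size. The sketch below is in Python for readability, ignores query patterns and access frequency entirely, and uses hypothetical names (`DataFile`, `plan_bin_pack`, `target_bytes`); it is not LakeOps code.

```python
from dataclasses import dataclass

MiB = 1024 * 1024


@dataclass(frozen=True)
class DataFile:
    path: str
    size_bytes: int


def plan_bin_pack(files, target_bytes, min_input_files=2):
    """Group undersized files into rewrite groups no larger than target_bytes.

    First-fit decreasing: each file, largest first, goes into the first
    group that still has room; a new group is opened when none fits.
    """
    # Files already at or above the target size are left untouched.
    small = sorted(
        (f for f in files if f.size_bytes < target_bytes),
        key=lambda f: f.size_bytes,
        reverse=True,
    )
    groups = []  # list of lists of DataFile
    totals = []  # running byte total per group
    for f in small:
        for i, total in enumerate(totals):
            if total + f.size_bytes <= target_bytes:
                groups[i].append(f)
                totals[i] += f.size_bytes
                break
        else:
            groups.append([f])
            totals.append(f.size_bytes)
    # Rewriting a lone file would not reduce the file count, so drop those groups.
    return [g for g in groups if len(g) >= min_input_files]


# Four small files and one already-large file, with a 128 MiB target.
files = [DataFile(f"part-{i}.parquet", s * MiB) for i, s in enumerate([40, 30, 20, 10, 200])]
for group in plan_bin_pack(files, target_bytes=128 * MiB):
    print([f.path for f in group])  # one group: part-0 through part-3; part-4 is left alone
```

A query-aware planner would typically add a sort or clustering step on frequently filtered columns, which this sketch deliberately omits.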

Version History & Time Travel

Total snapshots: 154 · Retention policy: 30 days · Latest operation: Append

| Snapshot ID | Timestamp | Operation | Manifests | Added |
|---|---|---|---|---|
| 6847201938742 | Mar 15, 2026 12:18 PM | Append | 12 | +4 |
| 6847201938740 | Mar 15, 2026 11:45 AM | Append | 11 | +2 |
| 6847201938738 | Mar 15, 2026 10:30 AM | Append | 10 | +6 |
| 6847201938736 | Mar 14, 2026 08:00 PM | Append | 8 | +3 |

Management

Snapshot Lifecycle Management

Automated retention, expiration, and version history for every table. Set policies once, and LakeOps expires old snapshots safely with full awareness of concurrent readers. Time-travel to any retained snapshot, compare snapshots, and roll back without manual intervention.
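A minimal sketch of how an expiration pass might decide which snapshots are safe to drop, assuming three rules: keep the newest `retain_last` snapshots, keep anything younger than the retention window, and keep any snapshot the caller marks as protected (for example, one a long-running reader still needs). This loosely mirrors the `older_than` and `retain_last` options of Iceberg's `expire_snapshots` procedure, but `Snapshot`, `snapshots_to_expire`, and `protected_ids` are hypothetical names. It also assumes the newest snapshot is the table's current one, and a real expiration must additionally delete the files that only expired snapshots reference.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    committed_at: datetime


def snapshots_to_expire(snapshots, now, max_age, retain_last=1, protected_ids=frozenset()):
    """Return IDs of snapshots that may be expired, newest first.

    A snapshot is kept if it is among the newest `retain_last` snapshots
    (at least one, so the latest snapshot always survives), if it is
    younger than `max_age`, or if its ID is in `protected_ids`.
    """
    ordered = sorted(snapshots, key=lambda s: s.committed_at, reverse=True)
    newest = {s.snapshot_id for s in ordered[:max(retain_last, 1)]}
    cutoff = now - max_age
    return [
        s.snapshot_id
        for s in ordered
        if s.snapshot_id not in newest
        and s.snapshot_id not in protected_ids
        and s.committed_at < cutoff
    ]


now = datetime(2026, 3, 15, 12, 0)
history = [Snapshot(i, now - timedelta(days=d)) for i, d in [(1, 40), (2, 35), (3, 10), (4, 1)]]
print(snapshots_to_expire(history, now, max_age=timedelta(days=30)))  # [2, 1]
```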

- Rewrite Manifests: consolidate manifest files for faster query planning
- Rewrite Position Deletes: optimize position delete files to improve read performance
- Compute Statistics (Puffin): calculate column stats to optimize query planning and pruning

Manifest & Metadata Optimization

Consolidate and rewrite manifest files so query planning stays fast at any scale. Fewer, well-organized manifests mean faster planning and fewer metadata reads for Trino, Spark, Flink, and every engine that touches your lake. Also includes position delete file optimization and Puffin statistics computation.
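Planning speed depends largely on how many files an engine can rule out from metadata alone. Iceberg manifest entries record per-file column lower and upper bounds, and planners skip any file whose range cannot satisfy a predicate. The sketch below shows that check for a single integer column and a `lo <= col <= hi` filter; `FileStats` and `prune_files` are hypothetical names, and real engines evaluate arbitrary expressions over many columns and partition summaries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileStats:
    path: str
    lower: int  # smallest value of the column in this file
    upper: int  # largest value of the column in this file


def prune_files(files, lo, hi):
    """Return paths of files that may contain rows with lo <= col <= hi.

    A file is skipped only when its [lower, upper] range lies entirely
    outside the predicate range, so no matching row can be missed.
    """
    return [f.path for f in files if f.upper >= lo and f.lower <= hi]


files = [FileStats("a.parquet", 0, 9), FileStats("b.parquet", 10, 19), FileStats("c.parquet", 20, 29)]
print(prune_files(files, 12, 15))  # ['b.parquet']
```

Pruning is only as good as the bounds: files whose value ranges overlap heavily (for example, unsorted data) leave little to skip, which is why layout and statistics work go together.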

Remove Orphan Files Policy: clean up files no longer referenced by any table

- Basic Information: name and priority
- Target Scope: where this policy applies
- Execution Schedule: when the policy runs
- Orphan File Configuration: how orphans are identified

Orphan File Detection & Cleanup

Detect and safely remove files no longer referenced by any table. Eliminate storage drift from failed jobs, aborted commits, and legacy tables. Configurable retention thresholds, catalog-wide or per-table scope, and scheduled execution — reclaim capacity without risking data integrity.
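Conceptually, orphan detection is a set difference: list every file under the table location, subtract every path reachable from the table's metadata, and delete only what remains and is older than a safety threshold, so files from in-flight writes are not mistaken for orphans. The sketch assumes the storage listing and the referenced-path set are already available; `find_orphans` is a hypothetical helper, and its three-day default simply mirrors the default `older_than` of Iceberg's `remove_orphan_files` procedure.

```python
from datetime import datetime, timedelta


def find_orphans(listed_files, referenced_paths, now, older_than=timedelta(days=3)):
    """Return paths that no table metadata references and that are old enough to delete.

    listed_files: dict of path -> last-modified time, from a storage listing.
    referenced_paths: set of every path reachable from the table's metadata
    (data files, delete files, manifests, manifest lists, metadata files).
    """
    cutoff = now - older_than
    return sorted(
        path
        for path, modified in listed_files.items()
        if path not in referenced_paths and modified < cutoff
    )


now = datetime(2026, 3, 16)
listing = {
    "s3://bucket/tbl/data/a.parquet": now - timedelta(days=10),
    "s3://bucket/tbl/data/b.parquet": now - timedelta(days=10),  # e.g. left by an aborted commit
    "s3://bucket/tbl/data/c.parquet": now - timedelta(hours=1),  # possibly an in-flight write
}
print(find_orphans(listing, {"s3://bucket/tbl/data/a.parquet"}, now))
```

In practice both path sets must be normalized first (for example, `s3://` versus `s3a://` schemes), or live files can look orphaned.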

Engine Load Distribution

| Engine | Status | Queries | Avg latency |
|---|---|---|---|
| Trino | Active | 256 | 1.8s |
| Snowflake | Active | 192 | 2.1s |
| AWS Athena | Active | 128 | 2.3s |
| DuckDB | Active | 64 | 0.5s |

Engines and AI

Multi-Engine Query Routing

Connect Trino, Spark, Snowflake, Athena, DuckDB, and Flink to one routing layer. Intelligent query routing optimizes for cost, latency, or throughput automatically. Compare engine performance, monitor health, and add new engines — all without engine-specific scripts or duplicate tooling.
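How LakeOps scores engines is not described here. A minimal routing sketch, assuming each engine reports an average latency, a cost estimate, and a queue depth, picks the active engine that minimizes the chosen objective. The latency figures reuse the Engine Load Distribution panel; the cost and queue values, along with `EngineStats` and `route`, are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EngineStats:
    name: str
    active: bool
    avg_latency_s: float   # recent average query latency
    cost_per_query: float  # hypothetical blended cost estimate, in dollars
    queued: int            # queries currently waiting on this engine


OBJECTIVES = {
    "latency": lambda e: e.avg_latency_s,
    "cost": lambda e: e.cost_per_query,
    # Simplification: treat the least-queued engine as best for throughput.
    "throughput": lambda e: e.queued,
}


def route(engines, objective):
    """Return the name of the active engine that minimizes the objective."""
    if objective not in OBJECTIVES:
        raise ValueError(f"unknown objective: {objective!r}")
    candidates = [e for e in engines if e.active]
    if not candidates:
        raise RuntimeError("no active engine available")
    return min(candidates, key=OBJECTIVES[objective]).name


engines = [
    EngineStats("Trino", True, 1.8, 0.002, 12),
    EngineStats("Snowflake", True, 2.1, 0.010, 3),
    EngineStats("AWS Athena", True, 2.3, 0.005, 8),
    EngineStats("DuckDB", True, 0.5, 0.003, 20),
]
print(route(engines, "latency"))  # DuckDB
```

A production router would also weigh engine capabilities, data locality, and per-workload rules such as routing groups; this sketch only shows the objective-driven choice.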

| Routing metric | Value | Detail |
|---|---|---|
| Groups | 4 | Configured endpoints |
| Active | 3 | Passing traffic |
| Inactive | 1 | Paused groups |
| Endpoints | 8 | Publicly available |

All routing groups:

- Interactive customer behavior and funnel exploration workloads.
- Operational writes and near-real-time checkout event ingestion.
- Nightly catalog transformations and product availability sync jobs.
- Scheduled BI reports and financial dashboard refresh workloads.

Agentic AI Enablement

Built for AI and ML pipelines: optimized metadata, layout, and table structure for agents, feature stores, and autonomous data workflows. Run simulations on file layout changes before applying them. Fast, consistent access to table state and history means AI pipelines get the data they need without extra glue code.

| Metric | Value | Trend |
|---|---|---|
| Active engines | 4/6 | |
| Avg latency | 1.2s | ↓ 15% vs yesterday |
| Throughput | 2.4K queries/hour | ↑ 8% vs last week |
| Error rate | 0.02% | ↓ vs last week |

Recent Alerts

| Alert | Time |
|---|---|
| Table optimization completed for orders.customer_orders | 2 min ago |
| Snapshot expiration policy executed: 12 snapshots cleaned | 15 min ago |
| High query latency detected on Trino endpoint | 1 hr ago |
| Orphan file cleanup completed: 847 MB reclaimed | 3 hrs ago |

Observability

Full Lake Observability

Continuous analysis of table structure, file health, and optimization opportunities. Monitor active engines, query latency, throughput, and error rates. Cross-system telemetry from S3, GCS, ADLS, and every engine — view, alert, and act from one place.
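The CRITICAL labels in the table-status view imply some health scoring, but the page does not define it. One simple, hypothetical rule is the share of a table's data files that fall below a small-file threshold. The function name, the 32 MiB threshold, the ratio cut-offs, and the WARNING/HEALTHY levels below are all assumptions, not LakeOps's actual criteria.

```python
MiB = 1024 * 1024


def table_health(file_sizes, small_file_bytes=32 * MiB, warn_ratio=0.2, critical_ratio=0.5):
    """Classify a table by the fraction of its data files smaller than small_file_bytes.

    All thresholds are illustrative defaults, not recommended values.
    """
    if not file_sizes:
        return "HEALTHY"
    small = sum(1 for size in file_sizes if size < small_file_bytes)
    ratio = small / len(file_sizes)
    if ratio >= critical_ratio:
        return "CRITICAL"
    if ratio >= warn_ratio:
        return "WARNING"
    return "HEALTHY"


print(table_health([10 * MiB] * 6 + [128 * MiB] * 4))  # CRITICAL (60% small files)
```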

Policies

Manage all policies, including configuration, maintenance, delete, and truncate policies.

| Policy | Type | Next run |
|---|---|---|
| Orders compaction | Manifests | Mar 16, 02:00 |
| Catalog manifest rewrite | Manifests | None |
| Payments orphan cleanup | Orphan Files | Mar 16, 03:00 |
| Warehouse snapshot expiry | Snapshots | Mar 16, 01:00 |
| Loyalty stats refresh | Config | None |

Governance

Governance and Policies

Define and enforce compaction, retention, orphan cleanup, and maintenance policies across catalogs and tables. Set schedules, priorities, and target scopes — then let LakeOps execute continuously. Every policy is auditable, versioned, and controllable with one toggle.
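A minimal sketch of the scheduling side of policy enforcement, assuming each policy carries an enabled toggle, a priority (lower runs first), and a next-run time: pick the enabled policies that are due and order them by priority. The `Policy` fields and `due_policies` are hypothetical; the sample data reuses names, types, and times from the Policies table, while the year, scopes, and priorities are invented.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Policy:
    name: str
    kind: str                     # e.g. "Snapshots", "Orphan Files", "Manifests", "Config"
    scope: str                    # catalog, namespace, or table the policy targets
    priority: int                 # lower number runs first (assumption)
    enabled: bool                 # the on/off toggle
    next_run: Optional[datetime]  # None when nothing is scheduled


def due_policies(policies, now):
    """Names of enabled policies whose next run is at or before `now`, highest priority first."""
    due = [
        p for p in policies
        if p.enabled and p.next_run is not None and p.next_run <= now
    ]
    # Break priority ties by running the longest-overdue policy first.
    return [p.name for p in sorted(due, key=lambda p: (p.priority, p.next_run))]


POLICIES = [
    Policy("Orders compaction", "Manifests", "orders.customer_orders", 1, True, datetime(2026, 3, 16, 2, 0)),
    Policy("Catalog manifest rewrite", "Manifests", "catalog", 3, True, None),
    Policy("Payments orphan cleanup", "Orphan Files", "payments", 2, True, datetime(2026, 3, 16, 3, 0)),
    Policy("Warehouse snapshot expiry", "Snapshots", "warehouse", 2, True, datetime(2026, 3, 16, 1, 0)),
    Policy("Loyalty stats refresh", "Config", "loyalty", 4, False, None),
]

print(due_policies(POLICIES, datetime(2026, 3, 16, 2, 30)))
# ['Orders compaction', 'Warehouse snapshot expiry']
```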

Why LakeOps

The control plane for your lakehouse

From cost and performance to AI readiness — one platform that covers every dimension of lake operations.

- Managed Iceberg: Autonomous compaction, snapshots, manifests, and orphan cleanup for every table.
- Agentic AI readiness: Agent-native MCP interface, guardrails, and a self-optimizing lake for AI workloads.
- Cost reduction: Eliminate small files, orphans, and over-provisioned compute automatically.
- Query performance: Adaptive data layout, lean manifests, and optimized file sizes for faster reads.
- Multi-engine routing: Route queries across Trino, Spark, Snowflake, and more, optimized per workload.
- Lakehouse observability: Table health, engine metrics, and cross-system telemetry from one control plane.

Results

Measured impact on real Iceberg workloads

Benchmarks from production-grade tables across multiple engines and cloud providers.

Compaction speed

vs. Apache Spark on identical datasets

Query performance

After compaction + layout optimization

Cost savings

In compute & storage spend

Get in touch

See LakeOps in action

Get a personalized walkthrough of the LakeOps platform with your data. Short call, your architecture.

No commitment · Typically 30 min