Every data platform team that adopts Iceberg eventually discovers the same thing: the table format is excellent, but keeping tables healthy at scale is a full-time job that nobody signed up for.

Iceberg provides the primitives — compaction, snapshot expiration, manifest rewriting, orphan removal — but none of them run themselves. Somebody has to decide when to compact, what sort order to use, which snapshots to expire, how long to retain orphan candidates, and how to sequence all of it safely across hundreds of tables. At 50 tables, scripts and Airflow DAGs work. At 500 tables across multiple catalogs and engines, they become the bottleneck.

This article explains what autonomous table maintenance actually means — from telemetry collection through each core operation, fleet-wide policies, observability, and continuous self-improvement — and how a control plane approach eliminates maintenance toil while keeping every table in your lake healthy.

Why manual maintenance breaks

The first Spark compaction job is easy to write. You specify a target file size, point it at a table, schedule it in Airflow, and move on. The problem is everything that comes after.

You need to handle concurrency — a compaction job that conflicts with an active writer will fail, and without OCC-aware retry logic it simply drops the run. You need to handle failures gracefully — a Spark executor that OOMs mid-compaction leaves partial files on storage that become orphans. You need to handle heterogeneous workloads — the sort order that accelerates your analytics queries might degrade your ETL pipelines. And you need to handle scale — the engineering time spent maintaining these scripts grows linearly with table count, while the team does not.

Most teams end up with a fragile patchwork: compaction runs nightly on the hottest tables, snapshot expiration exists for maybe half the lake, orphan cleanup was written once and nobody is sure it still works correctly, and manifest optimization is something everyone agrees they should do but nobody has prioritized. Nobody has real observability into what these operations actually cost or whether they are improving anything. Nobody routes queries to the cheapest engine because there is no layer to do it.

The result is a lake where maintenance is partial, reactive, and expensive. You discover degradation after users complain, not before. Costs compound silently. And the engineers who should be building data products are instead debugging cron jobs at 2 AM.

What autonomous means in practice

Autonomous table maintenance is not a single feature — it is a closed-loop system with five components: telemetry collection, health classification, coordinated operations, policy governance, and continuous improvement.

The system must continuously observe the state of every table without requiring manual instrumentation. It must classify which tables need attention and what kind of attention they need. It must execute the right operations in the right sequence with safety guarantees. It must do all of this according to policies that the platform team defines once. And it must learn from its own execution history to get better over time.

Telemetry: the foundation of automation

You cannot automate what you cannot measure. The first requirement of autonomous maintenance is continuous telemetry collection from three sources: Iceberg metadata, object storage, and query engines.

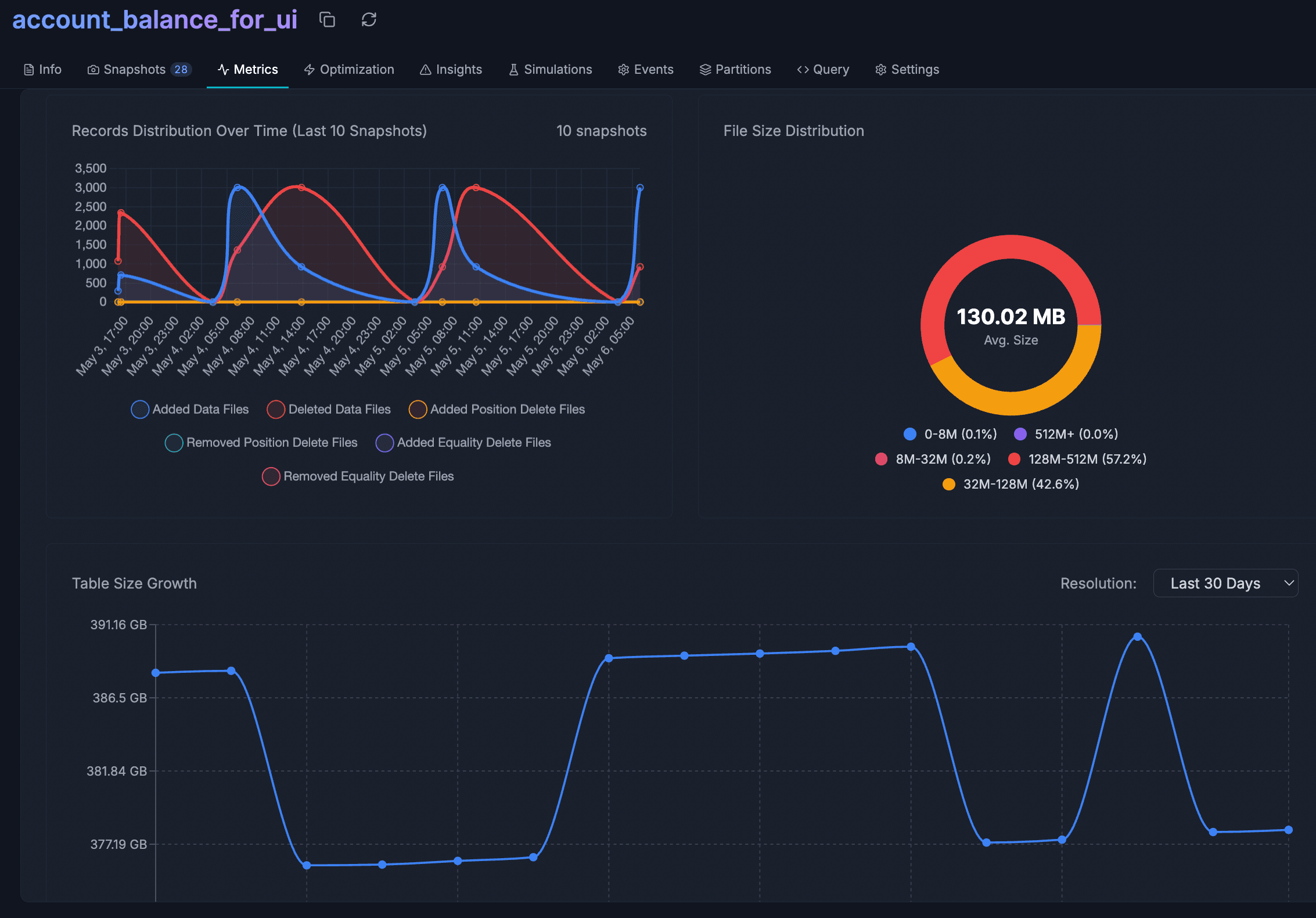

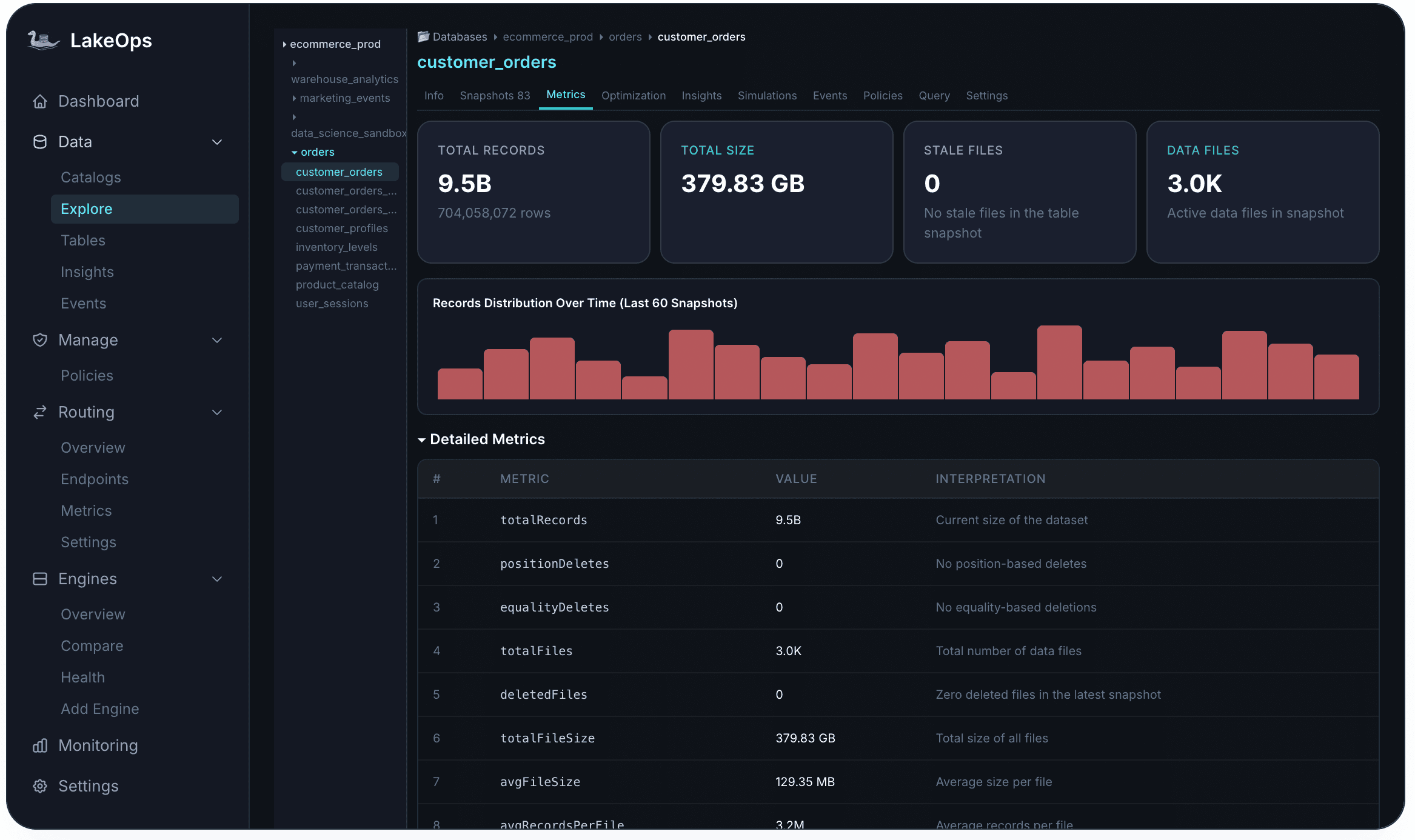

From Iceberg metadata, the system reads snapshot history, manifest structure, partition layout, file counts, file sizes, and column statistics freshness. This tells you the structural health of each table — how many small files exist, how fragmented manifests are, how many snapshots are accumulating, whether sort orders are stale.

From object storage (S3, GCS, ADLS), it tracks file counts per prefix, access patterns, and total size. This reveals orphan files that no snapshot references and identifies storage growth trends that indicate maintenance is falling behind.

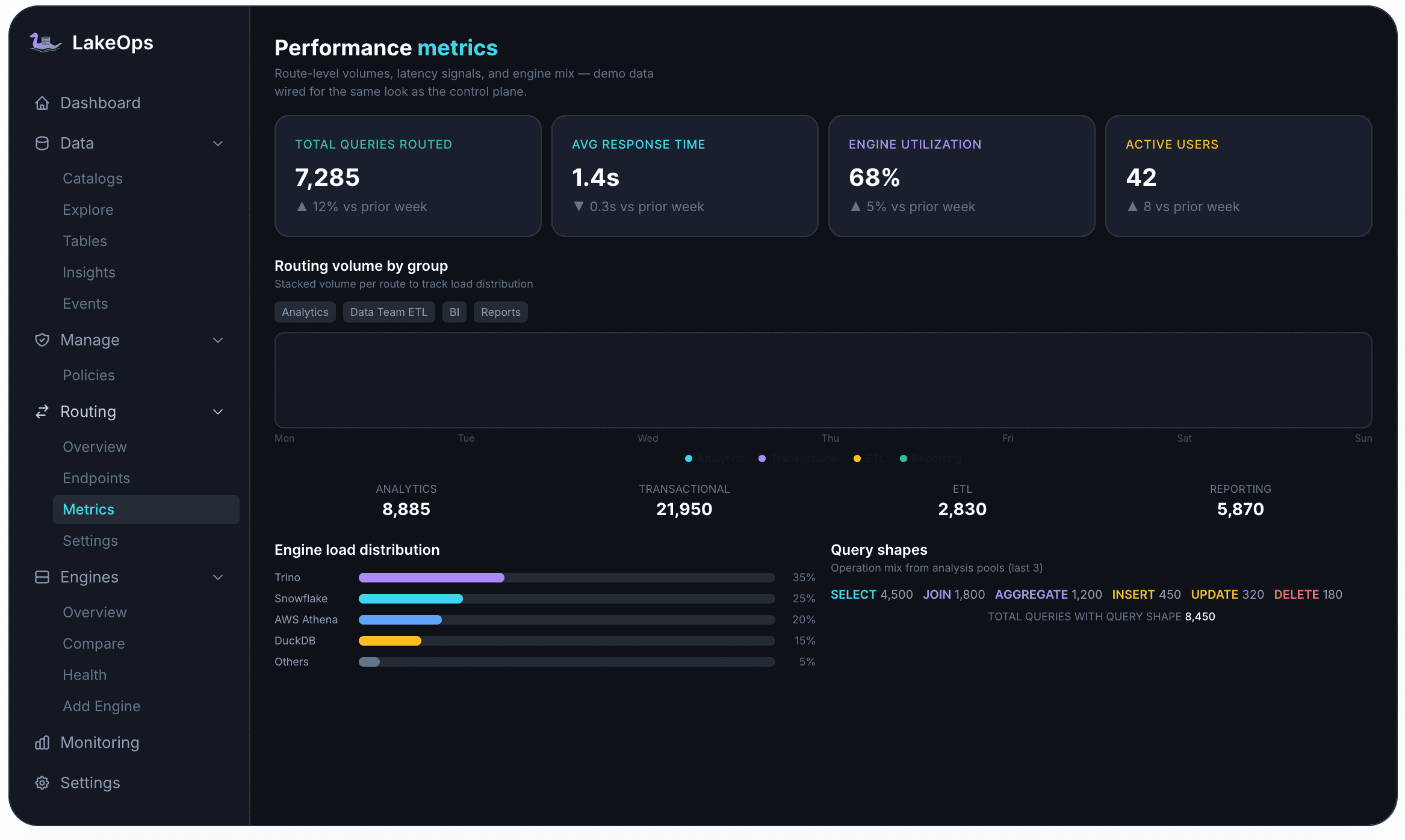

From query engines — Trino, Spark, Athena, Snowflake, DuckDB, Flink — it collects query shapes, filter columns, join columns, scan volumes, and latency. This is what transforms maintenance from blind scheduling into intelligent optimization. When you know which columns queries actually filter on, you can sort data to match. When you know which tables are queried most heavily, you can prioritize their maintenance.

Health classification and prioritization

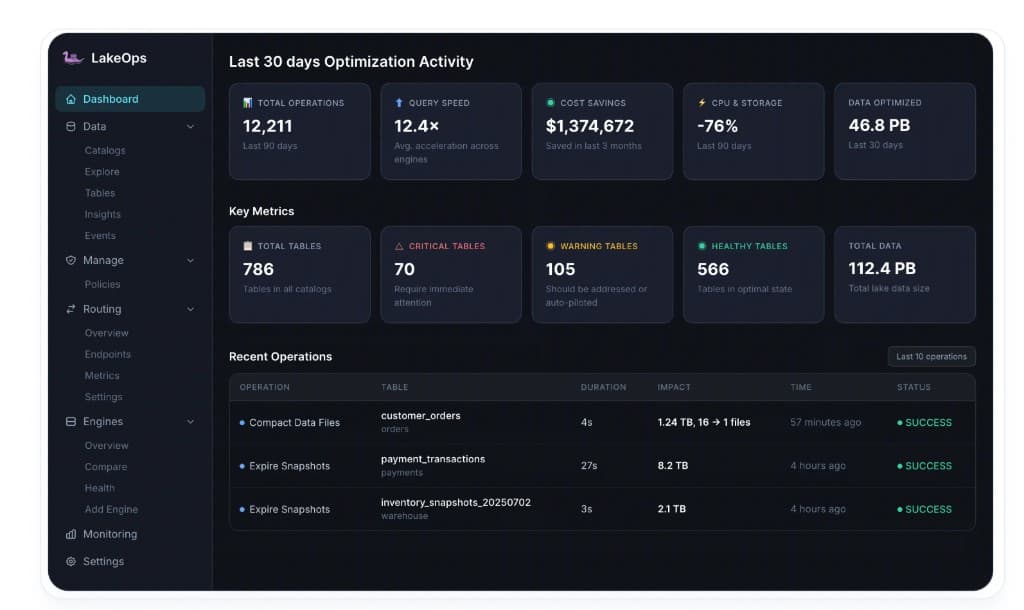

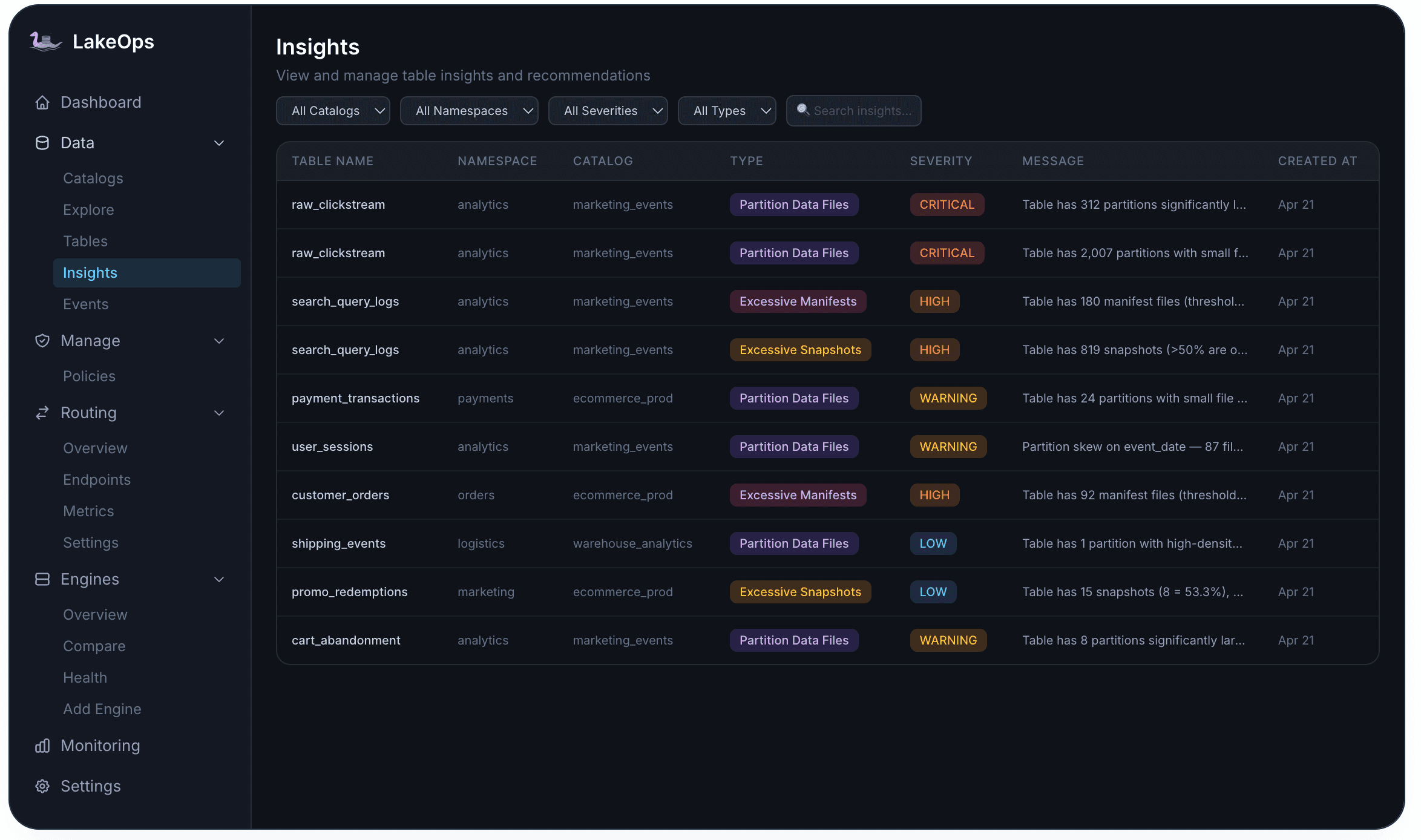

With telemetry flowing, the system classifies every table into health tiers: critical, warning, or healthy. Classification is based on multiple signals — file count relative to target, manifest fragmentation, snapshot accumulation rate, orphan file presence, sort order staleness, and query latency trends.

Critical tables have severe structural problems — extreme file fragmentation, manifests well beyond threshold (for example, 92 manifests where 50 is the threshold), or orphan counts consuming significant storage. These get immediate attention. Warning tables show early degradation — file sizes trending below target, manifests approaching threshold, snapshots accumulating faster than expiration can keep up. Healthy tables are within all thresholds and require only periodic maintenance to stay that way.

This classification drives prioritization. A control plane with finite concurrency slots processes critical tables first, then warnings, then healthy tables on their regular schedule. This ensures that the tables causing the most pain get fixed fastest.

Operation sequencing: why order matters

The core operations are compaction, snapshot expiration, manifest optimization, and orphan cleanup. What makes autonomous maintenance different from manual scripts is that these operations run in a coordinated sequence with awareness of each other.

Snapshot expiration runs first. Expiring old snapshots dereferences data files that are no longer needed. If orphan cleanup ran before expiration, it would miss these newly unreferenced files and require another pass later.

Orphan cleanup runs after expiration. With snapshots expired and their file references released, orphan detection can accurately identify files that no live snapshot references. An age threshold ensures files from in-flight writes are never accidentally deleted.

Compaction runs on the cleaned state. There is no point compacting files that will be garbage-collected after expiration. By running compaction after the cleanup passes, every file rewrite produces final, long-lived output.

Manifest optimization runs after compaction. Compaction changes the file set, so manifests rewritten before compaction would be immediately stale. Running manifest consolidation on the post-compaction file set produces the cleanest metadata state.

Teams that schedule each operation independently — compaction at 2 AM, expiration at 3 AM, orphans on weekends — end up doing wasted work: compacting files that will be expired, expiring snapshots after orphan cleanup already ran, or rewriting manifests that compaction immediately invalidates.

Compaction: query-aware file optimization

Compaction in an autonomous system goes far beyond merging small files to a target size. It uses query telemetry to make layout decisions that directly reduce compute cost on every subsequent read.

Binpack compaction merges small files toward a target size (typically 512 MB) without reordering data. This addresses the small-file problem directly: fewer files means fewer API calls to open, less metadata for planners to parse, and less scan overhead. It is fast, safe, and the right default for tables where access patterns are diverse or not yet observed.

Sort-order compaction physically reorders data by the columns your queries actually filter and join on. The system continuously tracks field access frequency — which columns appear in WHERE clauses, JOINs, and projections — and uses that telemetry to determine optimal sort order. When data is sorted by these columns, query engines can skip entire row groups using column statistics. Queries that used to scan gigabytes now scan megabytes, and compute cost drops proportionally.

The impact on both storage and compute is significant. Sorted data compresses better — a 1 TB table compresses 9% smaller when sorted. Fewer files means fewer LIST/GET API calls. And every query against a sorted, properly-sized table uses less CPU because engines scan less data and open fewer file handles.

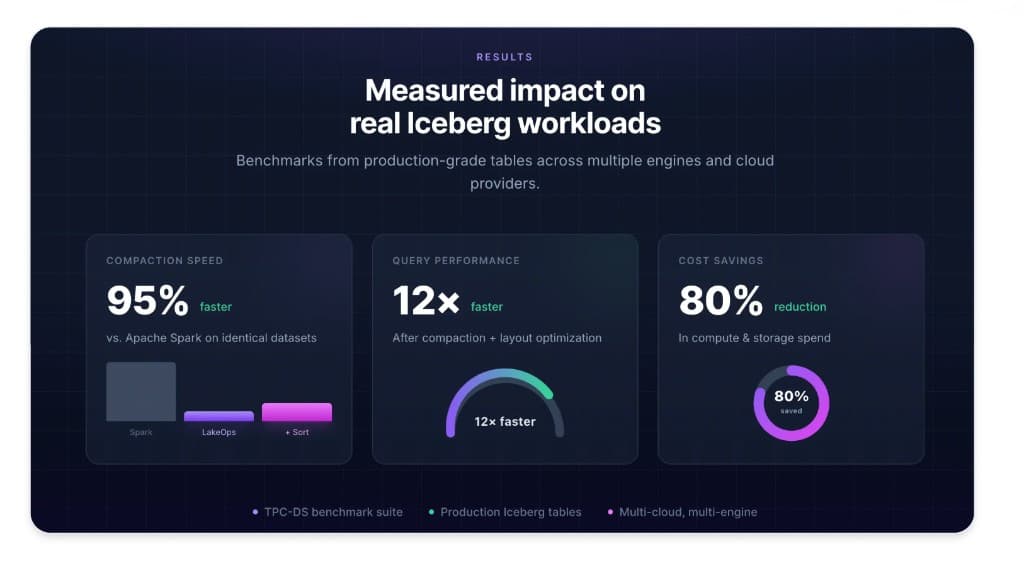

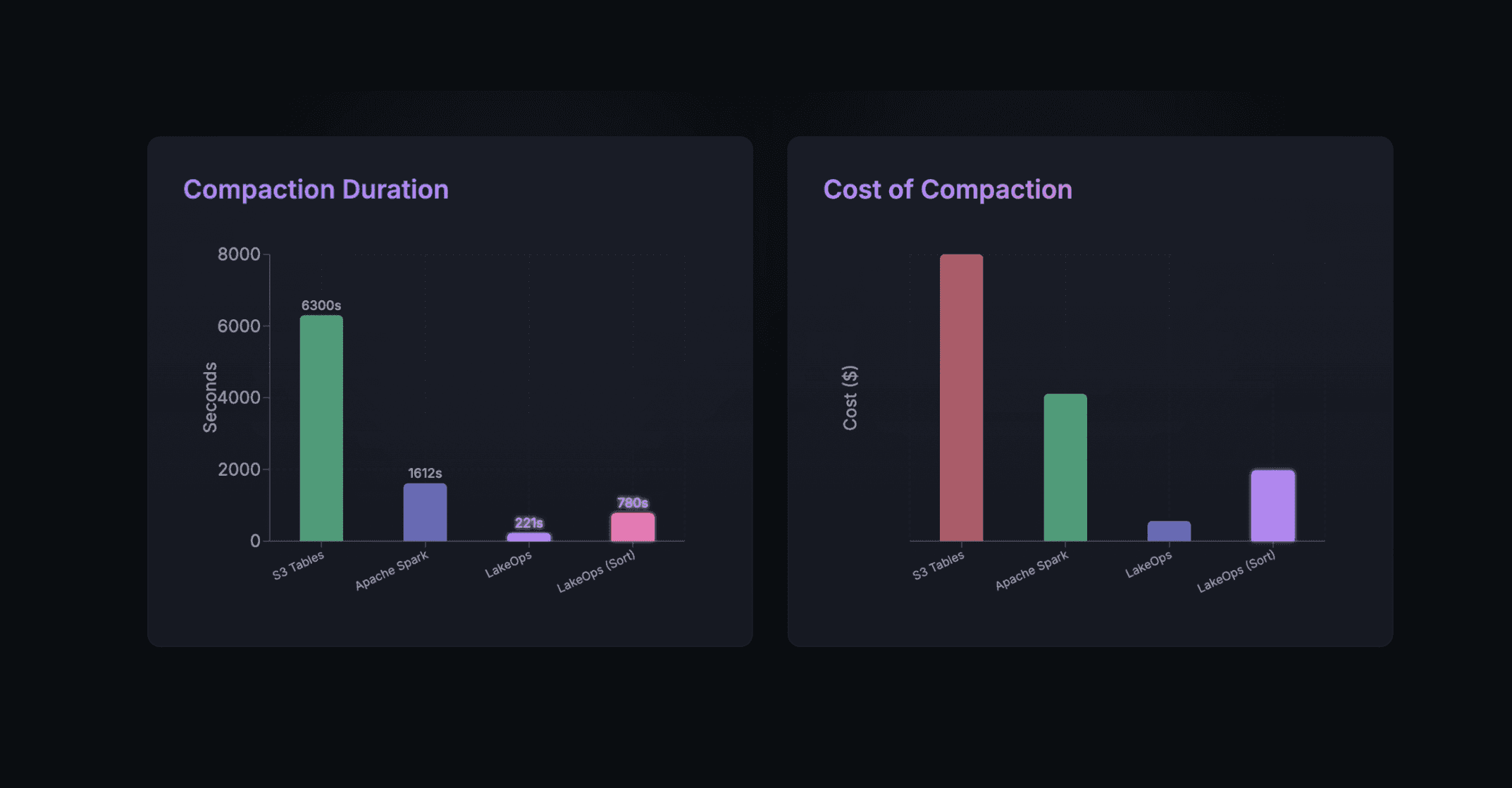

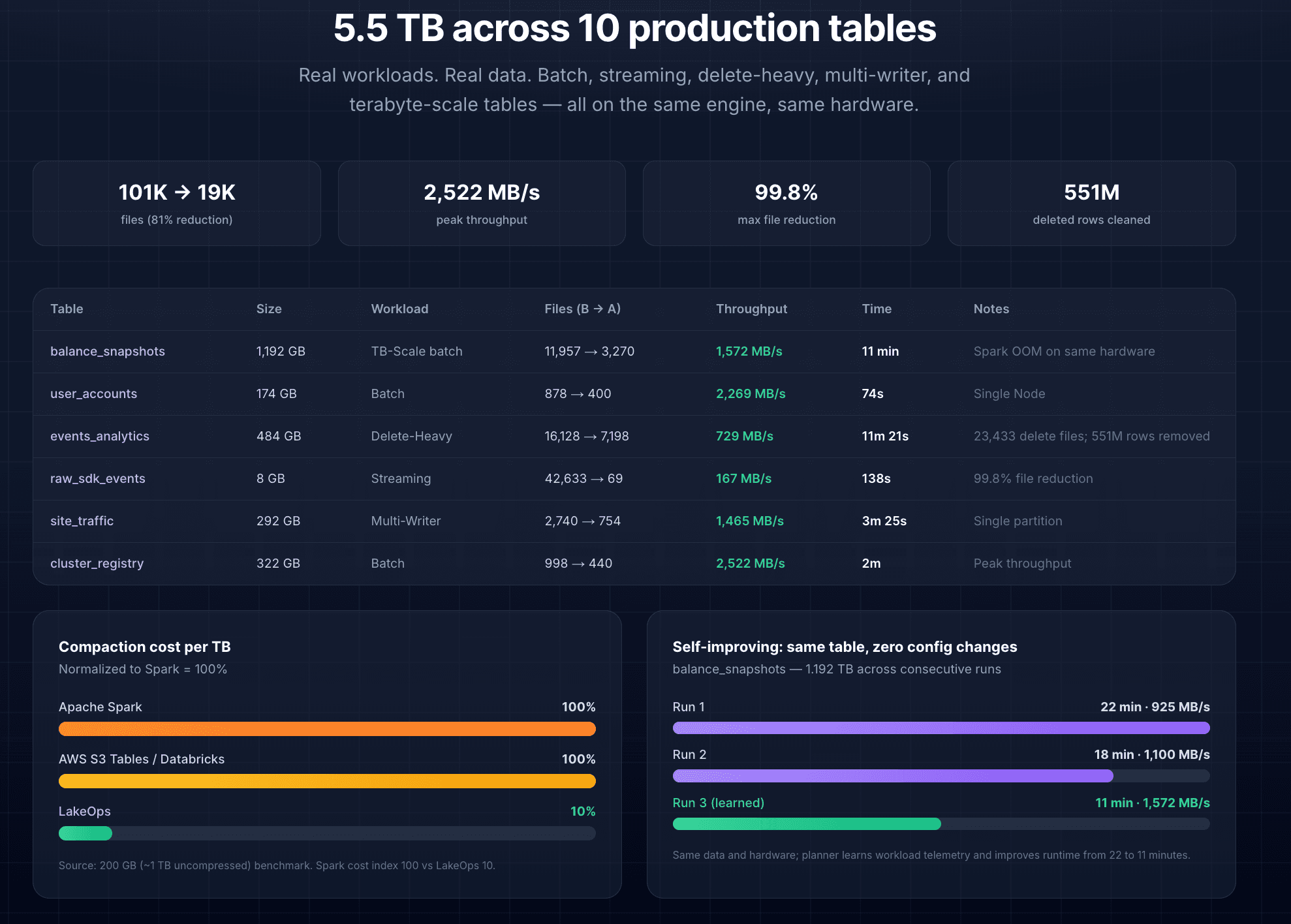

The compaction engine matters as much as the strategy. LakeOps runs compaction on a purpose-built Rust engine with Apache DataFusion — no JVM overhead, no garbage collection pauses, no executor provisioning. It commits atomically via Iceberg optimistic concurrency control and retries affected partitions on conflict. No data loss, no corruption, no blocked writers. Tables that cause Spark to OOM (a 1.192 TB table, for example) are handled without special configuration. Production benchmarks show binpack completing in 221 seconds versus 1,612 for Spark — and the engine improves across runs on the same table as it learns workload patterns.

Snapshot lifecycle management

Every write operation in Iceberg creates a new snapshot. Without active expiration, snapshots accumulate indefinitely — each one retaining references to data files that cannot be garbage-collected. At thousands of snapshots on a single table, the metadata tree becomes so deep that query planning alone takes longer than the scan.

Autonomous snapshot management runs retention on a configurable schedule, respecting both a time window (typically 7–30 days) and a minimum snapshot count (5–10). The system is conflict-aware — it will never expire a snapshot that an active reader depends on, and it coordinates safely around concurrent writers.

The impact at scale is dramatic. A single expiration run on a table with 23,183 snapshots removed 2,928 snapshots and 5,819 files, reclaiming 263 MB of manifest data in under 4 minutes. On another table, 22,034 snapshots and 675,510 files were expired, reclaiming 179 GB of storage in a single pass. The storage savings are immediate and the metadata reduction improves query planning performance across every engine.

Manifest and metadata optimization

Iceberg's manifest files are the index layer that tells query engines which data files belong to each snapshot. After many compaction and append cycles, manifests fragment — a table might have 200 manifest files where 30 would suffice. Every time an engine plans a query, it opens every manifest and reads its column statistics. At 200+ manifests, query planning overhead dominates execution time.

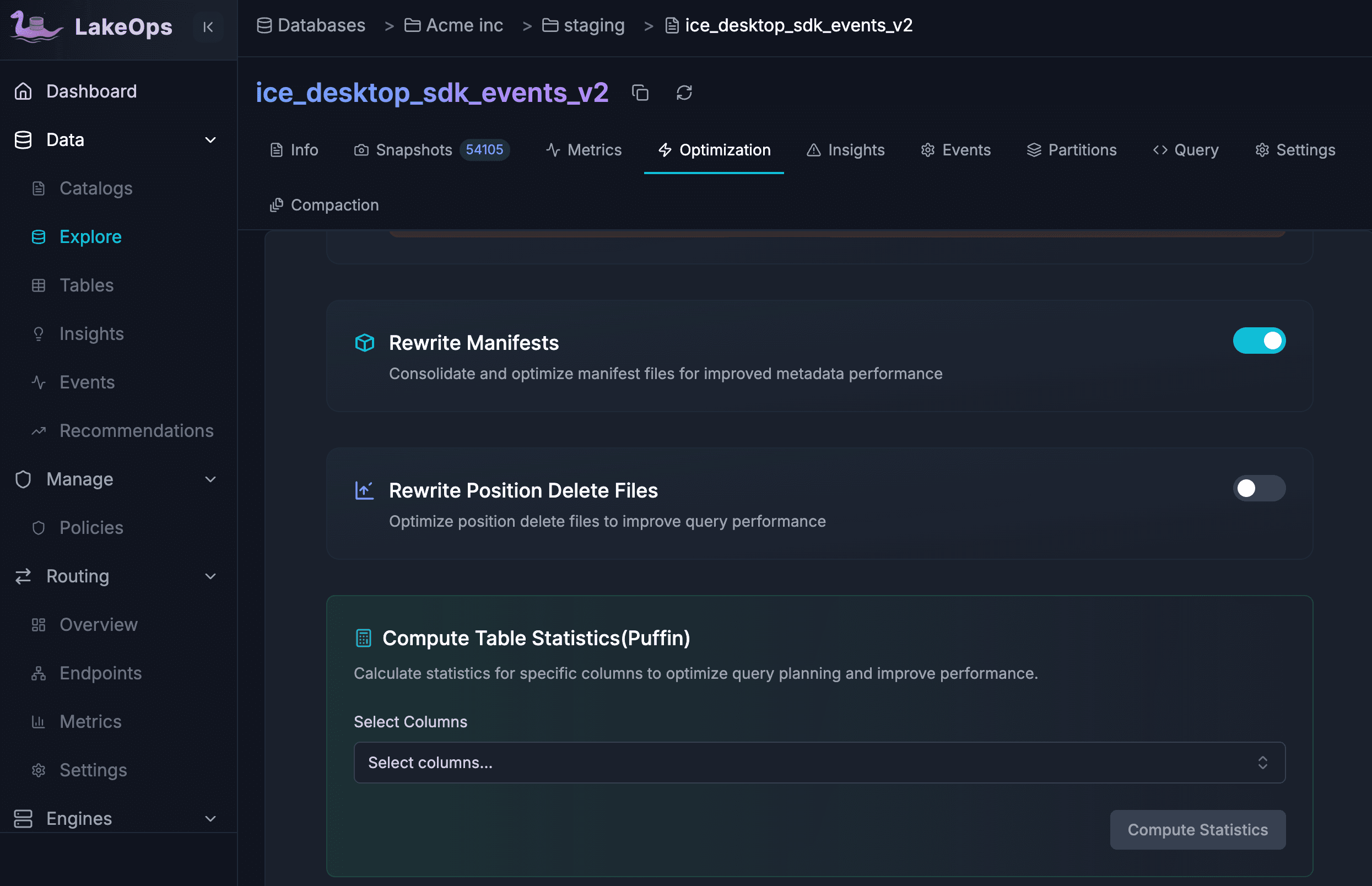

Three operations address this. Rewrite Manifests consolidates fragmented manifests into fewer, larger ones in a single atomic commit — no blocking of concurrent reads or writes. Rewrite Position Deletes merges the delete files generated by merge-on-read operations, reducing read amplification on tables with frequent updates. Compute Statistics (Puffin) generates column-level statistics (distinct values, min/max, null ratios) that enable engines to skip row groups more aggressively.

In Auto mode, LakeOps triggers manifest rewrites when metadata drift exceeds configurable thresholds — for example, when manifest count per snapshot passes 50. The default schedule runs daily at 4 AM, after compaction completes, ensuring manifests reflect the final file set.

Orphan file detection and cleanup

Orphan files are data objects on S3, GCS, or ADLS that no live Iceberg snapshot references. They accumulate from aborted writes, failed Spark jobs, interrupted compaction runs, and incomplete table drops. In a typical production lake, orphan files account for a significant portion of the storage bill — one scan found approximately 200 TB of dead data across 324 tables, roughly 1.8 million orphan files costing $4,000 per month.

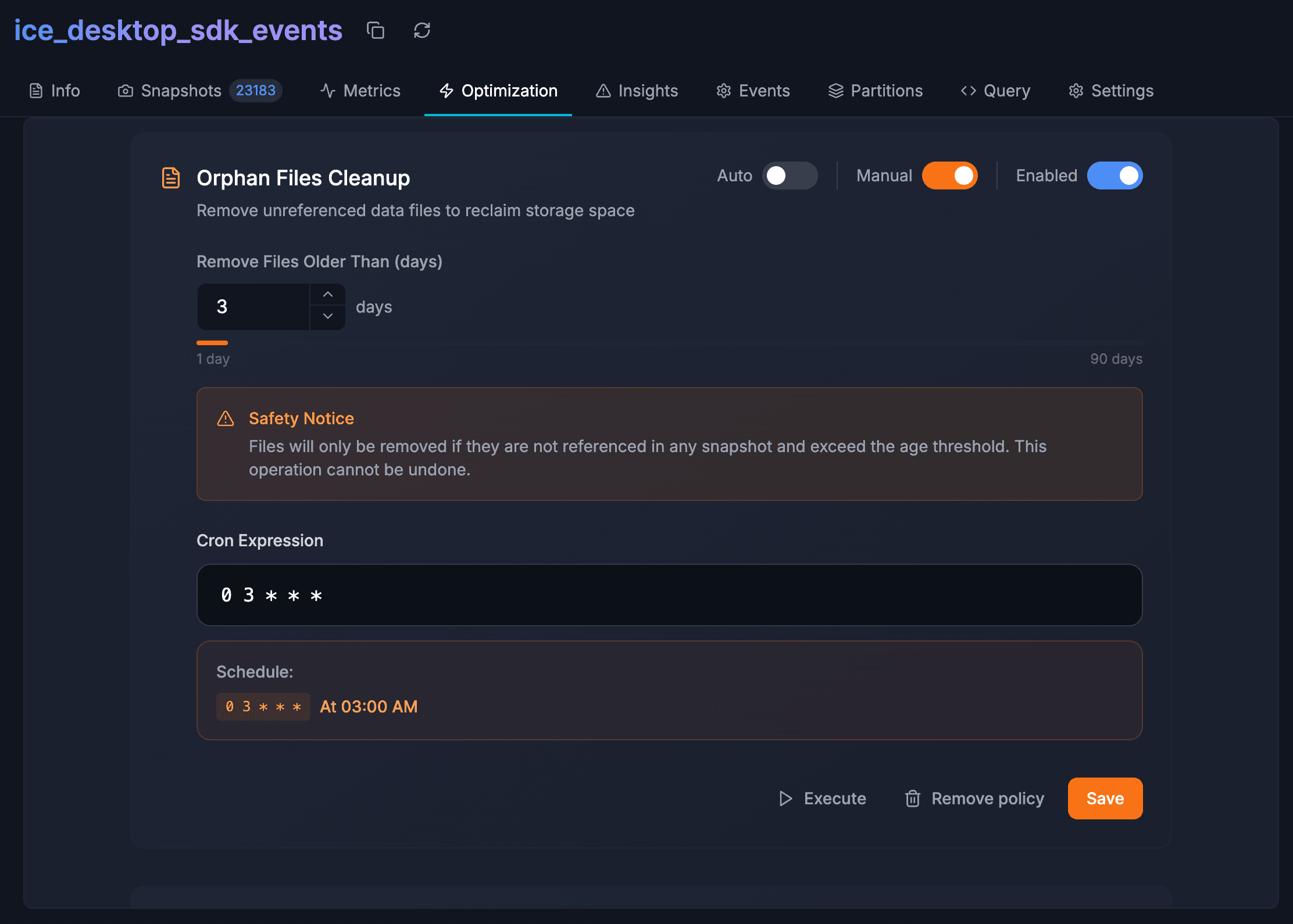

The challenge is safety. A naive implementation that compares storage against metadata can accidentally delete files from in-flight jobs. Autonomous orphan cleanup uses a policy-based approach: an age threshold (default 7 days, configurable 1–90) ensures only files unreferenced for at least that long are candidates for removal. The recommended threshold is at least twice the duration of your longest-running write job.

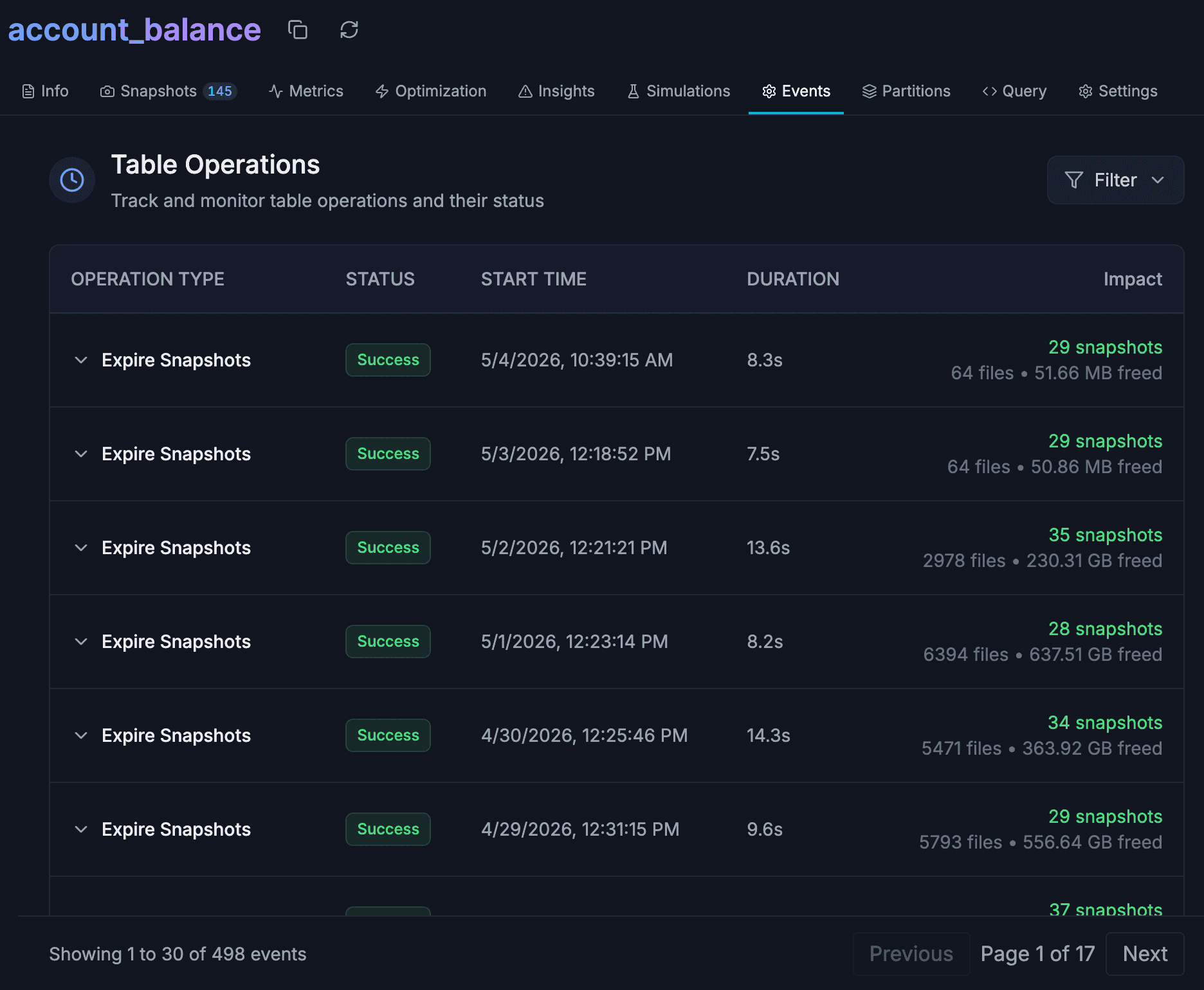

Cleanup schedules are coordinated after snapshot expiration by design — since expiration itself produces newly unreferenced files. A single fleet sweep removed 59,831 files (74.8 GB) from one table in 13 minutes and 13.6 GB from another in about a minute. Every operation logs count and size reclaimed in Events for full auditability.

Layout simulations: preview before production

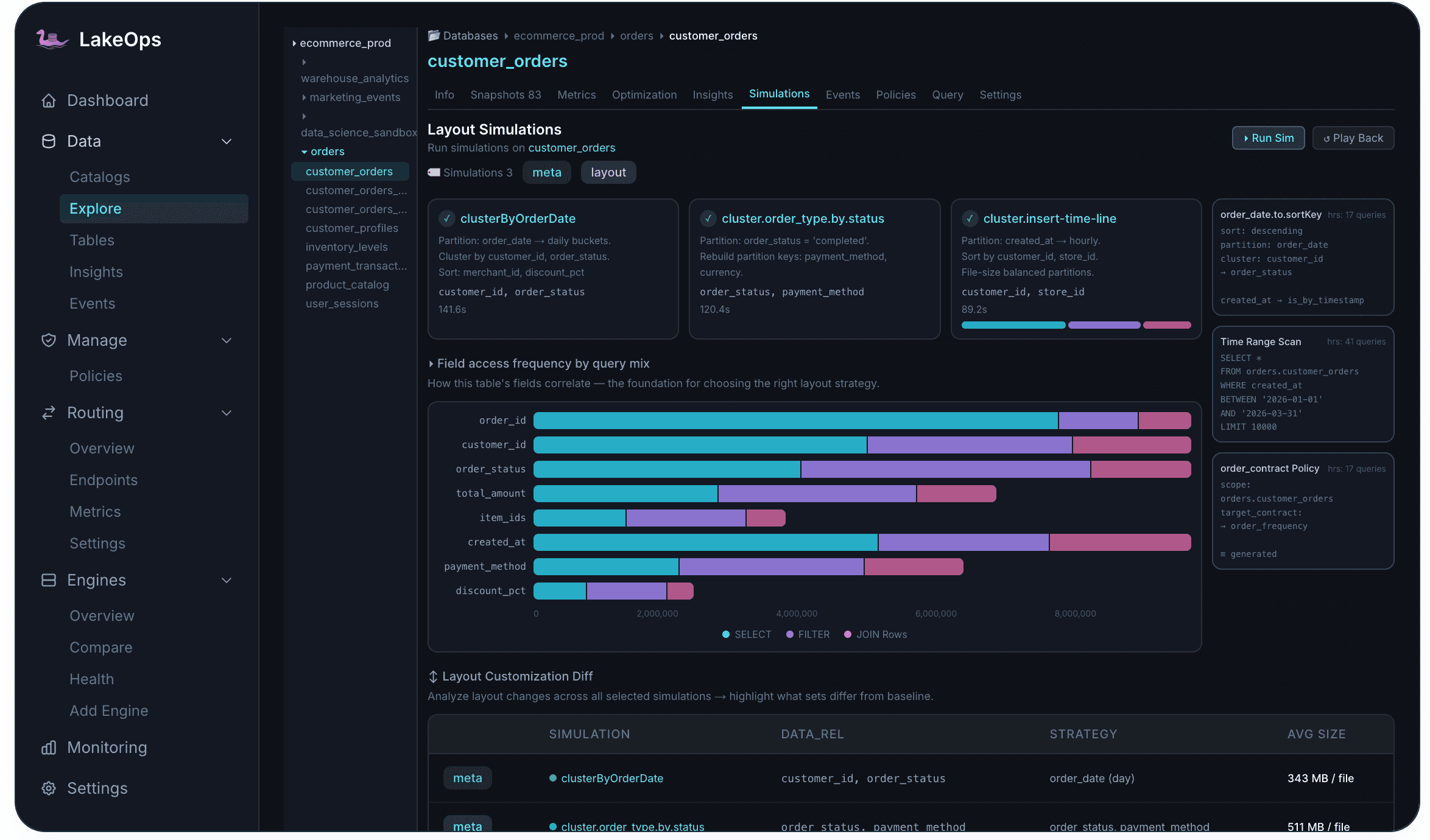

Choosing the wrong sort order can hurt performance instead of helping it. A sort optimized for analytics might degrade ETL. A partition strategy that works today might not match tomorrow's query patterns.

Layout simulations solve this by running on a real Iceberg branch created from the current snapshot. The proposed layout is applied on the branch — producing real file counts, sizes, and execution measurements — and the branch is discarded afterward with no permanent side effects. No data is committed to the main table.

The system continuously tracks field access frequency per column — how often each appears in SELECT, FILTER, and JOIN operations. This feeds directly into simulation configuration, showing which sort columns would produce the best skipping effectiveness for the observed workload.

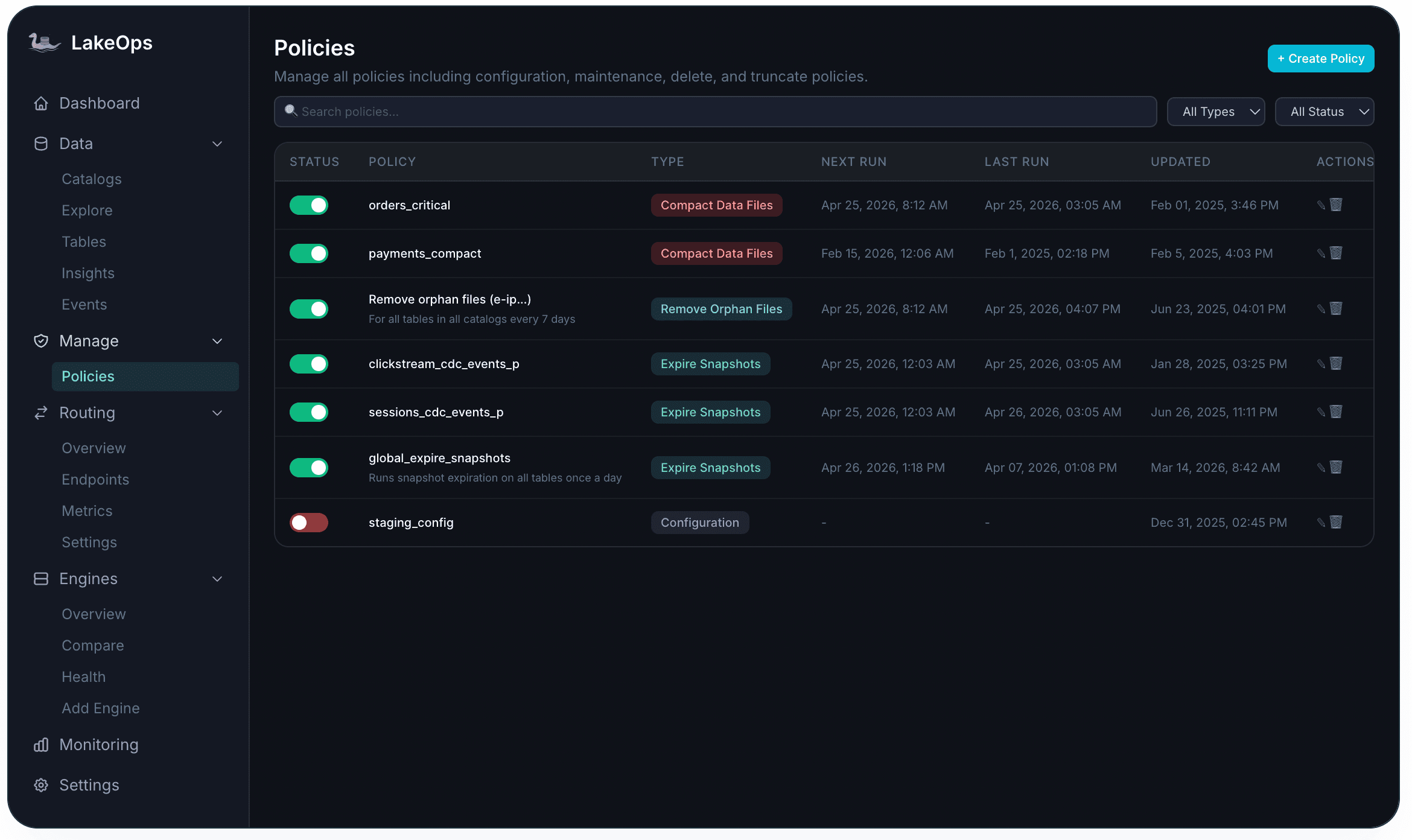

Policies: governance at fleet scale

Per-table configuration works for ten tables. At hundreds of tables across multiple catalogs and namespaces, you need policies.

A policy defines an operation type (compact, expire snapshots, rewrite manifests, remove orphans, or configuration governance), a scope (catalog, namespace, or table), a schedule (cron), and operation-specific parameters. Policies follow a specificity hierarchy — table-level overrides namespace-level, which overrides catalog-level. This lets you set organization-wide defaults and make targeted exceptions where specific tables have unusual requirements.

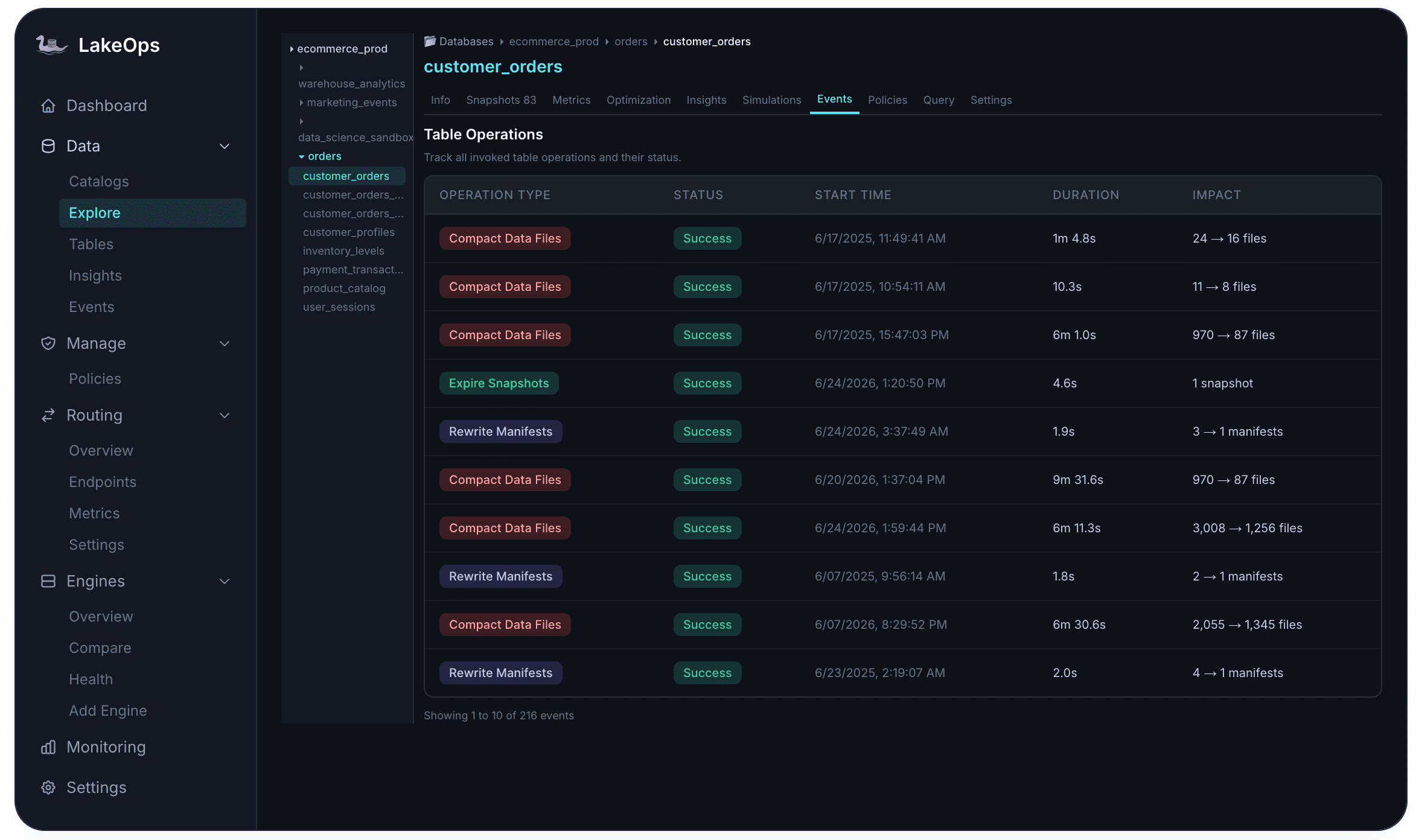

Scheduling best practices are codified: expiration runs hourly, orphan cleanup daily after expiration, compaction during off-peak hours, manifest rewrites after compaction. Heavy operations are staggered to avoid resource contention. Every policy execution is logged in Events with full before/after metrics — file counts, storage reclaimed, duration, and success/failure status.

Observability: connecting operations to cost

Autonomous maintenance without observability is a black box. The dashboard provides fleet-level visibility: total operations run, average query acceleration (pre vs post optimization), estimated cost savings, CPU and storage reduction as a percentage of baseline, and total data optimized.

At the table level, the Insights tab surfaces problems at four severity levels before users notice them — CRITICAL for severe fragmentation, HIGH for excessive manifests or snapshots, WARNING for partition skew, and LOW for early small-file accumulation. The Events tab provides a complete audit trail per table — every compaction, snapshot expiration, orphan removal, and manifest rewrite with duration, impact, and status.

Cross-system telemetry combines object storage metrics (file counts, sizes, access patterns), engine query metrics (latency, scan volumes, CPU), Iceberg metadata (snapshots, manifests, partitions), and optimization pipeline throughput (compaction queue depth, scheduling). This unified signal feeds every automation decision and every dashboard metric.

Continuous improvement

The final characteristic of autonomous maintenance is self-improvement. The system learns from its own execution history.

Compaction performance improves across runs on the same table as the engine learns workload access patterns and data distribution — in production, the same 1.2 TB table went from 22 minutes to 18 minutes to 11 minutes across three consecutive runs with no configuration changes. Sort orders adapt as query patterns evolve — if analysts start filtering on a new column, the system detects the shift in telemetry and adjusts the layout strategy. Scheduling intervals tighten or relax based on observed ingestion rates and degradation velocity.

This is what separates a control plane from a scheduling system. A cron job runs the same operation with the same parameters at the same interval regardless of what has changed. An autonomous system observes, decides, acts, measures, and adjusts — continuously, across every table, without engineering intervention.

The operational outcome

The practical outcome for data platform teams is the elimination of maintenance toil. No more debugging compaction failures at 2 AM. No more manually triaging which tables need attention. No more maintaining a growing library of Airflow DAGs. No more discovering that a streaming table accumulated 40,000 small files because nobody noticed the compaction job stopped working three weeks ago.

Tables stay healthy as a baseline. Queries run faster because data is properly sized and sorted. Storage costs drop because dead data is continuously removed. Compute costs drop because engines scan less data on every read. And engineering time shifts from reactive maintenance to proactive data platform development.

LakeOps connects to your existing catalogs and object storage in about ten minutes — no agents, no data movement, no pipeline changes. Supported catalogs include AWS Glue, REST catalogs (Polaris, Gravitino, Nessie, Lakekeeper), and S3 Tables. The system discovers your tables, classifies health, and begins autonomous maintenance according to the policies you define.