From closed platforms to managed open lakehouses

For the better part of a decade, the default answer to enterprise data infrastructure was a single platform: Snowflake for analytics-heavy organizations, Databricks for ML-forward ones, or both for companies large enough to afford the overlap. These platforms earned their position. They abstracted away enormous complexity, delivered strong performance, and let data teams focus on queries and models instead of infrastructure plumbing. But everything — storage, compute, optimization, governance — lived inside one vendor's boundary.

The alternative was the data lake. Organizations started dumping everything into S3 or HDFS (structured, semi-structured, unstructured) with Hadoop and Spark for compute. The promise was unlimited flexibility and no lock-in. The reality was data swamps: stale files, broken schemas, no ACID guarantees, and engineering teams spending more time debugging pipelines than analyzing data.

The data lakehouse fixed the reliability gap. Apache Iceberg (along with Delta Lake and Hudi) added warehouse-grade guarantees — ACID transactions, schema evolution, time travel, snapshot isolation, hidden partitioning — directly on top of object storage. For the first time, you could have the flexibility of a lake with the reliability of a warehouse, without copying data into a proprietary system. The data stayed on cheap, durable object storage while the metadata layer made it trustworthy.

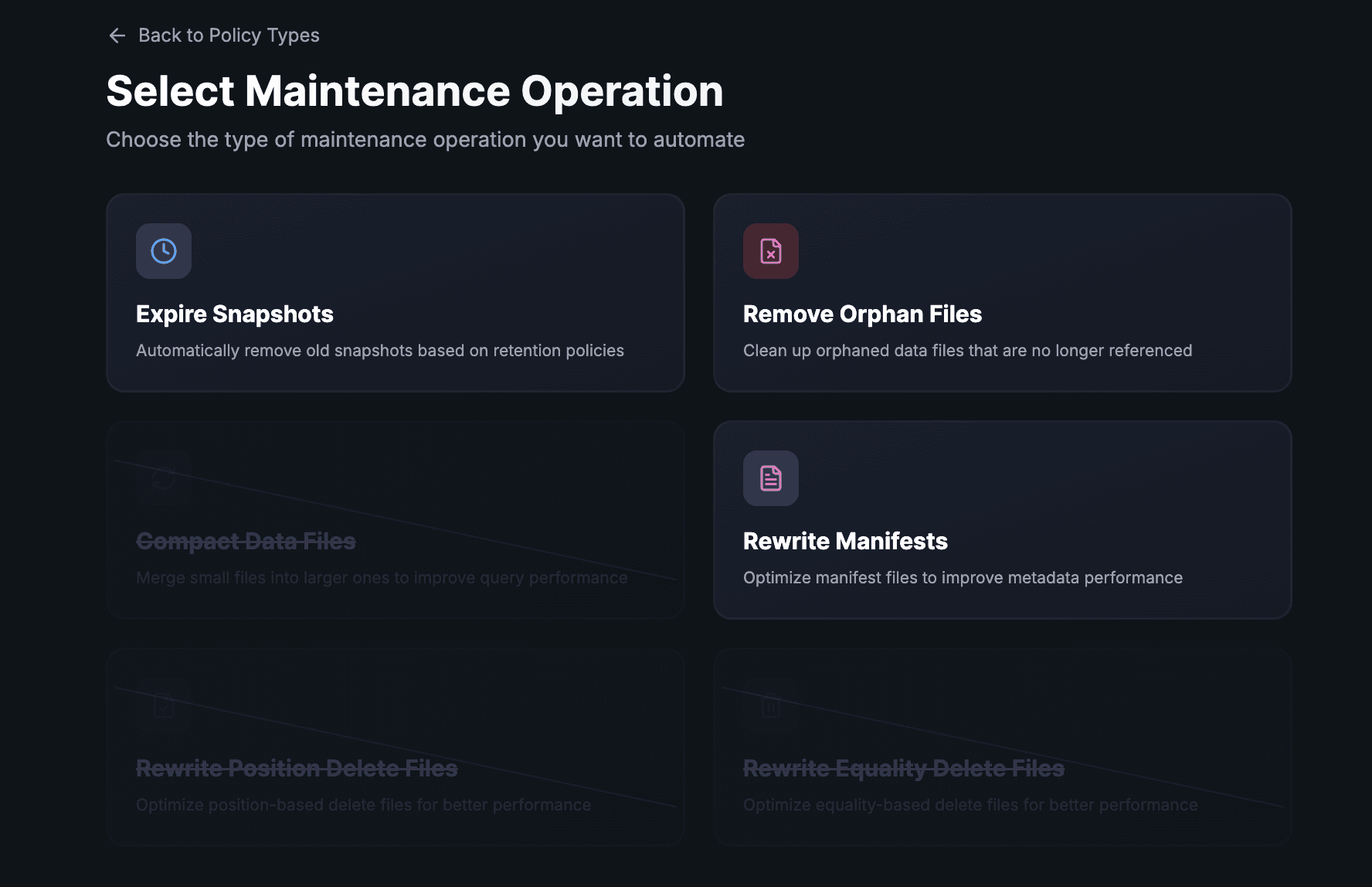

But the lakehouse left two problems unsolved. First, most implementations still lived inside a single vendor's ecosystem — Databricks ran Delta Lake on its runtime, Snowflake ran Iceberg inside its managed service. The format was open, but the operational stack was closed. Second, nobody owned the maintenance. Iceberg gives you powerful primitives — compaction, snapshot expiration, manifest rewrites, orphan cleanup — and then leaves you responsible for running them at scale, across engines, continuously, without breaking production reads. At 50 tables, scripts and cron jobs work. At 500 tables across multiple catalogs and engines, the manual approach collapses — and teams drift back toward managed platforms just to get someone else to handle the operations.

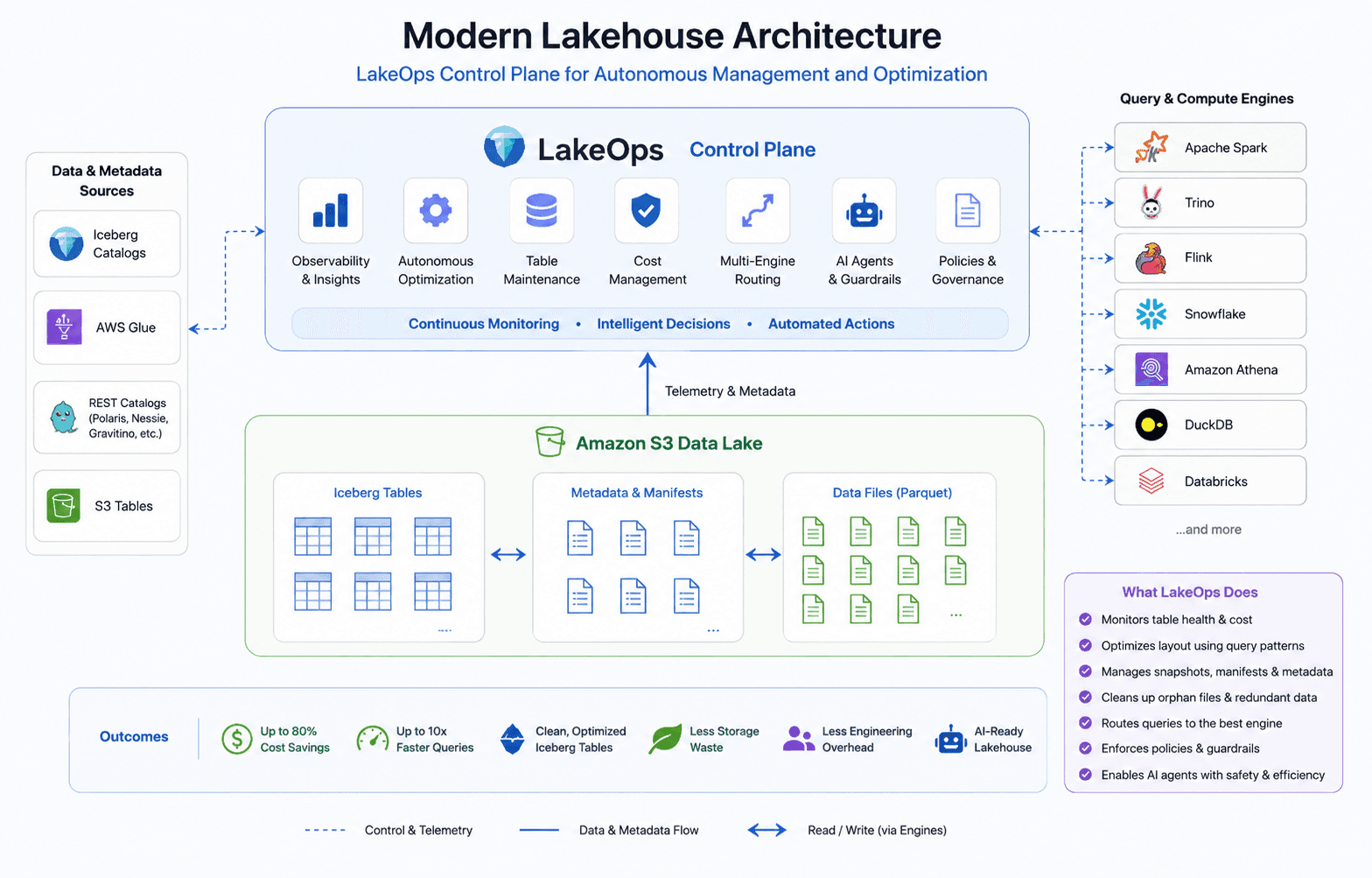

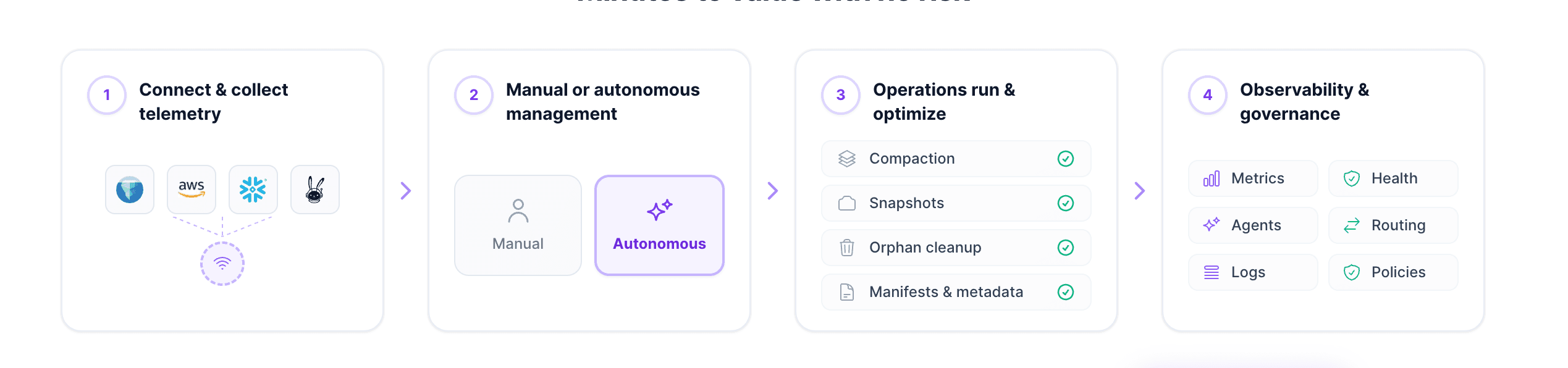

An autonomous control plane is what turns a lakehouse into a managed open data platform. Storage is commodity infrastructure you own. Iceberg is the contract every engine speaks. Engines are chosen per workload. Catalogs like Polaris, Gravitino, Nessie, and Lakekeeper provide the metadata registry. And a control plane, LakeOps, connects to your existing catalogs and storage and continuously handles the operational work (compaction, snapshot lifecycle, orphan cleanup, manifest optimization, observability, policies, multi-engine routing, and agentic AI readiness) without replacing anything in your stack. It adds the operational intelligence that open-source components do not provide on their own. Setup takes about ten minutes and requires no code or infrastructure changes.

This is the architecture that data platforms are already being built on in 2026. The rest of this article explains why, how the transition is happening, and what it takes to make it work in production.

The walled-garden model and its costs

Snowflake and Databricks built their businesses on a compelling premise: ingest your data into our system, and we will handle the rest. Storage, compute, optimization, governance, and query execution all lived inside one boundary. For teams without deep infrastructure expertise, this was transformative.

The trade-off was lock-in. Snowflake-managed tables live in Snowflake's storage layer; moving large volumes out means export steps whose cost rises with data age and dependent objects. Databricks reduced friction by keeping data in your cloud storage (often as Delta Lake), but Unity Catalog and the broader product surface still create strong ecosystem gravity. Both platforms charge for compute using proprietary credit systems — Snowflake Credits and Databricks DBUs — that are difficult to compare directly with raw cloud infrastructure costs. As data volumes grow, the gap between commodity object storage and metered proprietary compute becomes impossible to ignore.

But the deeper problem is architectural, not financial. When storage and compute are coupled — or when governance is tied to a single vendor's catalog — every new workload, every new team, and every new use case adds dependency on that platform. The platform becomes not just a tool but a constraint on how your data architecture can evolve.

Why the shift is happening now

The lakehouse proved that open storage could be reliable. Four forces are now converging to make the next step — turning the lakehouse into a fully managed, open data platform — not just viable but urgent.

Apache Iceberg matured. Iceberg solved the fundamental reliability gap that kept data lakes inferior to data warehouses. ACID transactions, schema evolution, hidden partitioning, time travel, and snapshot isolation — these are warehouse-grade features running on object storage. In the independent **2025 State of the Apache Iceberg Ecosystem** survey (fielded January 2026, published on DataLakehouseHub), 96.4% of respondents reported using Apache Spark with Iceberg, 60.7% OSS Trino, 32.1% Apache Flink, and 28.6% DuckDB — evidence that Iceberg functions as a shared substrate across engines, not a single-vendor runtime.

The Iceberg REST Catalog API standardized metadata access. Snowflake and Databricks have both shipped Iceberg REST catalog integrations (alongside other catalog options), enabling federation patterns where external engines and services can interoperate on Iceberg metadata using the same REST contract. In practice, teams can keep Iceberg files on S3 (or compatible object stores), register tables in REST-aware catalogs such as Apache Polaris, Apache Gravitino, Project Nessie, or Lakekeeper (among others), and query through engines that implement the contract (including Trino, Spark, DuckDB, Athena, Snowflake, and Databricks), subject to each engine's supported features and authentication model.

Economics forced the conversation. The price difference between commodity object storage and metered proprietary compute widens with data volume, and at petabyte scale it becomes a budget line that is hard to defend. Finance and platform teams increasingly ask whether specific query tiers can run on open engines against the same Iceberg files without paying premium credits for every scan.

The urgent need for agentic AI readiness. AI agents are becoming primary consumers of enterprise data — issuing SQL iteratively inside tool-use loops, generating unpredictable query shapes, and requiring sub-second latency from tables designed for batch workloads. This creates a new infrastructure contract: schema-discovery APIs, query guardrails that enforce cost ceilings at machine speed, well-compacted files for low-latency reads, and stable routing endpoints agents can call without per-session configuration. Closed platforms were not architected for this pattern — their concurrency models and governance assume a human on the other side. Open data platforms allow organizations to insert purpose-built agent middleware between the agent and the data without waiting for a single vendor's roadmap.
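To make the idea of a query guardrail concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the function name, the `Verdict` type, the keyword list, and the scan-size estimate passed in by the caller are all hypothetical, and a prefix check like this is not a substitute for a real SQL parser.

```python
import re
from dataclasses import dataclass

# Hypothetical guardrail: the keyword list and limits are illustrative only.
WRITE_PREFIX = re.compile(
    r"^\s*(insert|update|delete|merge|drop|alter|create|truncate)\b",
    re.IGNORECASE,
)


@dataclass
class Verdict:
    allowed: bool
    reason: str


def check_agent_query(sql: str, estimated_scan_bytes: int, max_scan_bytes: int) -> Verdict:
    """Block write statements and any query whose estimated scan exceeds the ceiling."""
    if WRITE_PREFIX.match(sql):
        return Verdict(False, "read-only: write statements are blocked")
    if estimated_scan_bytes > max_scan_bytes:
        return Verdict(False, "cost ceiling exceeded")
    return Verdict(True, "ok")
```

The check runs before the query reaches any engine, which is the point of placing middleware between the agent and the data: the decision takes microseconds and does not depend on a human reviewing the statement.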

The architecture: storage as bedrock, engines as plugins

The open data platform inverts the traditional model. Instead of choosing a platform and conforming your data to it, you start with your data and plug in the capabilities you need.

Storage is the foundation. Data sits on Amazon S3, Google Cloud Storage, Azure ADLS, or on-premises object storage — in your account, under your control. The storage layer is durable, cheap, and universally accessible. It does not belong to any compute engine.

Iceberg is the contract. Apache Iceberg defines how data is organized, versioned, and accessed. Its metadata layer (table metadata files, manifest lists, and manifest files) tracks every data file and provides the ACID guarantees and schema management that engines need. Any engine that speaks Iceberg can read and write these tables without coordinating directly with any other engine; concurrent writers are reconciled through atomic commits at the catalog.

Engines are chosen per workload. Trino for interactive analytics. Spark for heavy ETL and batch processing. DuckDB for lightweight local queries. Flink for stream processing. Snowflake for BI workloads where its optimizer excels. Databricks for ML workflows and notebook-driven exploration. Each engine connects to the same tables, reads the same metadata, and operates independently.

Catalogs provide the registry. REST-compliant catalogs like Polaris, Gravitino, Nessie, and Lakekeeper serve as the metadata registry — the single source of truth for what tables exist, where they are stored, and how they are partitioned. Engines register against the catalog, discover tables, and coordinate writes through Iceberg's optimistic concurrency.

This architecture gives organizations something the walled-garden model struggles to match: the ability to adopt new engines without migrating the underlying files, to retire engines without rewriting the table format, and to benchmark alternative compute against the same Iceberg datasets. Compared with proprietary warehouse-native storage alone, this materially lowers switching friction.
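The optimistic concurrency that lets independent engines write to the same table can be sketched as a compare-and-swap on the table's current metadata version. The code below is a toy model, not the Iceberg or REST catalog API: `ToyCatalog`, `CommitConflict`, and `write_with_retry` are hypothetical names, and a real commit swaps a pointer to a metadata file rather than an integer.

```python
import threading


class CommitConflict(Exception):
    """Raised when the table version moved since the writer read it."""


class ToyCatalog:
    """Hypothetical in-memory stand-in for a catalog; not a real Iceberg API."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._current: dict[str, int] = {}  # table name -> current metadata version

    def current(self, table: str) -> int:
        return self._current.get(table, 0)

    def commit(self, table: str, expected: int, new: int) -> None:
        # Atomic compare-and-swap: succeed only if nobody committed in between.
        with self._lock:
            if self._current.get(table, 0) != expected:
                raise CommitConflict(f"{table}: expected v{expected}")
            self._current[table] = new


def write_with_retry(catalog: ToyCatalog, table: str, max_attempts: int = 3) -> int:
    """Re-read the latest version and retry when another writer won the race."""
    for _ in range(max_attempts):
        base = catalog.current(table)
        try:
            catalog.commit(table, expected=base, new=base + 1)
            return base + 1
        except CommitConflict:
            continue
    raise CommitConflict(f"{table}: gave up after {max_attempts} attempts")
```

No engine holds a lock on the table while it works. Each writer prepares its change against the version it read, and the catalog rejects the commit if that version is no longer current.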

Where Databricks and Snowflake fit

The open data platform does not eliminate Databricks and Snowflake. It reframes them as components rather than platforms — powerful engines within a broader ecosystem, chosen when their strengths justify their cost.

Snowflake remains compelling for BI-heavy workloads where its query optimizer, caching layer, and concurrency handling outperform open alternatives. Its commitment to bidirectional Iceberg federation through the REST Catalog endpoint means it can participate in an open architecture without requiring data to live in Snowflake-proprietary storage.

Databricks brings differentiated value for ML and AI workloads where the integration between notebooks, MLflow, and Spark-native feature engineering is genuinely hard to replicate. Full Iceberg support through Unity Catalog means Databricks workloads can coexist with open engine stacks on the same data.

Both vendors have recognized the direction. Snowflake published "Open by Design" as a company commitment. Databricks announced full Iceberg support and extended Delta Sharing to serve data in Iceberg format. These are acknowledgements that the future is multi-engine — and the vendors who participate retain relevance. But when Snowflake and Databricks become engines in a broader architecture, the question becomes: who manages the lakehouse itself?

The hard part: running a lakehouse without a managed service

The architectural elegance of decoupled storage and compute comes with an operational reality that most vendor marketing omits: when no single platform owns the full stack, nobody owns the maintenance either. In a closed platform, compaction, snapshot management, storage optimization, and query tuning happen inside the vendor's managed service. You pay for it in your credit consumption, but it happens. In an open data platform, these responsibilities fall on your data platform team — and this is where most migration plans underestimate the effort.

Observability across the stack. When queries hit Trino, Spark, and Snowflake against the same Iceberg tables, where do you look when latency degrades? Each engine has its own metrics surface, its own logging format, and its own definition of what constitutes a slow query. Cross-engine observability — understanding table health, query patterns, and resource consumption in one view — does not exist out of the box. Without it, you discover problems after users complain, not before.

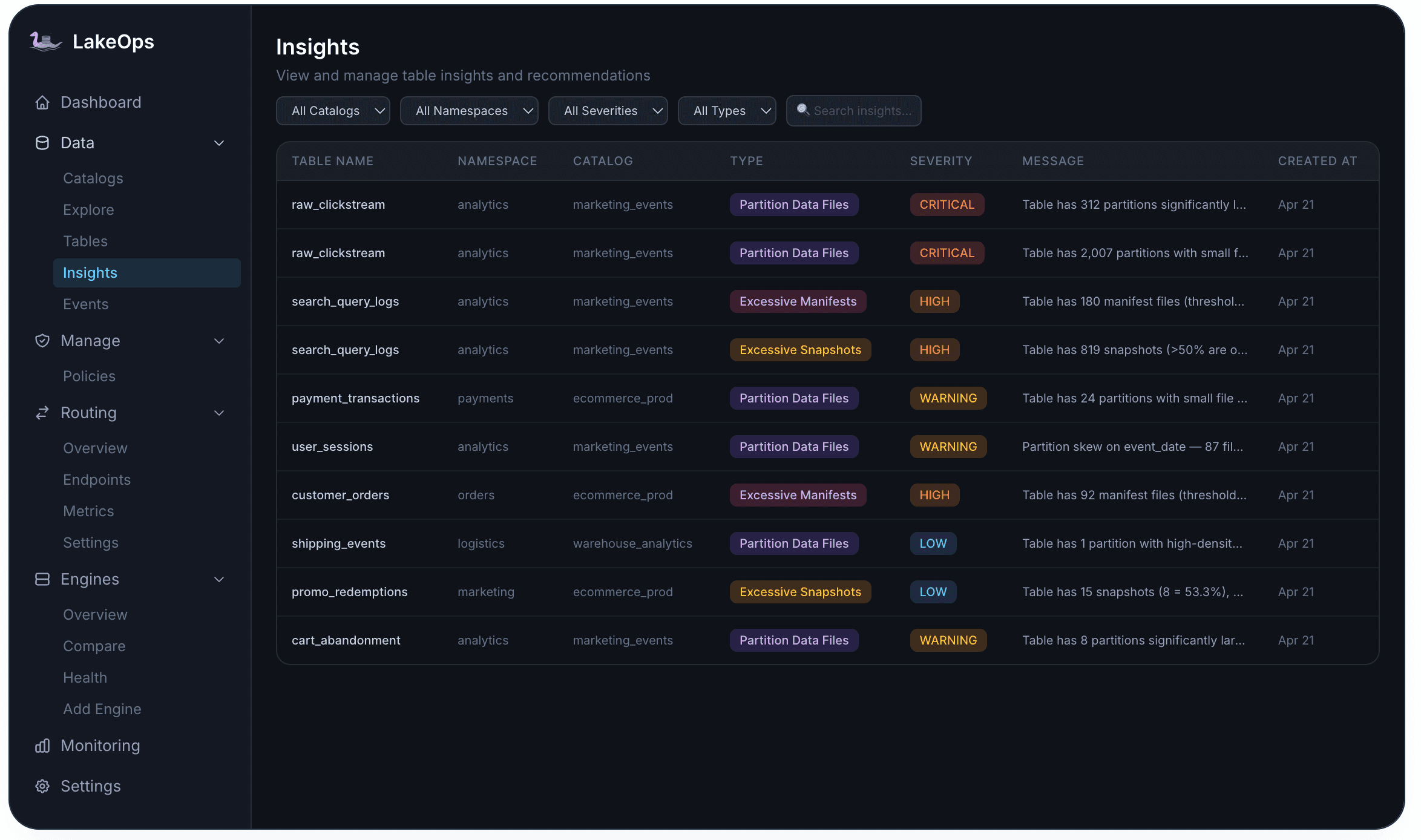

Table maintenance at scale. Iceberg tables degrade without continuous maintenance. Streaming pipelines create thousands of small files per day. Snapshots accumulate indefinitely if no one configures expiration. Orphan files from failed writes consume storage without serving any query. Manifests fragment until query planning takes longer than the scan itself. The degradation is predictable and well-documented — and it compounds from multiple directions simultaneously. A query that returned in 2 seconds last quarter takes 15 once small files and fragmented manifests pile up.

Governance without a single control point. In a Snowflake-only world, access control lives in Snowflake. In a multi-engine open platform, you need governance that spans engines — row-level security, column masking, audit logging, and policy enforcement that works consistently whether the query comes from Trino, Spark, or a Python notebook. Achieving this with disparate engine-native controls is fragile and error-prone.

Agentic AI readiness. The agent workload pattern described earlier lands hardest on poorly maintained tables: on uncompacted data with fragmented manifests, iterative agent queries hit severe latency penalties. Agents also need schema discovery, query guardrails, cost controls, and well-maintained table layouts, none of which most lake deployments have standardized.

Every one of these problems has a manual solution that works at small scale: write a compaction script, build a monitoring dashboard, configure access controls per engine. But manual approaches are reactive — you discover degradation after the damage is done. They are engine-blind — a compaction tuned for Spark does not account for Trino query patterns. And they do not scale — the engineering time grows linearly with table count while the team does not.
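As a rough illustration of what a hand-built monitoring script might compute for the degradation patterns above, the sketch below derives a few health signals from basic table statistics. Everything here is an assumption made for illustration: the `TableStats` fields, the 32 MB small-file threshold, and the choice of signals are hypothetical and not taken from Iceberg or any specific tool.

```python
from dataclasses import dataclass

SMALL_FILE_BYTES = 32 * 1024 * 1024  # illustrative threshold, not an Iceberg default


@dataclass
class TableStats:
    file_sizes: list[int]  # sizes of live data files, in bytes
    snapshot_count: int
    manifest_count: int
    orphan_bytes: int      # bytes under the table path that no snapshot references


def health_signals(stats: TableStats) -> dict[str, float]:
    """Turn raw counts into ratios that can be compared across tables."""
    total_files = len(stats.file_sizes)
    small_files = sum(1 for size in stats.file_sizes if size < SMALL_FILE_BYTES)
    live_bytes = sum(stats.file_sizes)
    all_bytes = live_bytes + stats.orphan_bytes
    return {
        "small_file_ratio": small_files / total_files if total_files else 0.0,
        "files_per_manifest": (
            total_files / stats.manifest_count if stats.manifest_count else 0.0
        ),
        "snapshot_count": float(stats.snapshot_count),
        "orphan_ratio": stats.orphan_bytes / all_bytes if all_bytes else 0.0,
    }
```

A script like this is easy to write for one table. The scaling problem described above comes from collecting the inputs for hundreds of tables, across catalogs, often enough to act before users notice.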

Why an autonomous control plane is the missing layer

The challenges above share a common thread: they are continuous, they compound, and they do not scale with human effort. This is the operational gap that the lakehouse evolution left unresolved.

The closed-platform era masked this problem because Snowflake and Databricks handled maintenance inside their stack. The data lake era did not care because nobody expected reliability. The lakehouse era gave you open, reliable storage — but exposed the operational gap fully: you have the freedom to compose your ideal architecture, but you also inherit the responsibility for running it. And the complexity grows combinatorially — more tables multiplied by more engines multiplied by more consumers multiplied by more policies.

What is needed is not a better script or a smarter cron job. It is an architectural layer: a control plane that sits between your storage, catalogs, and engines — observing the state of every table, understanding cross-engine query patterns, and applying the right maintenance at the right time. A layer that does not replace anything in your stack, but adds the operational intelligence that open-source components do not provide on their own.

Without this layer, the operational burden eventually pushes teams back toward managed platforms — trading architectural freedom for someone else owning the maintenance, and re-entering the lock-in cycle that the whole migration was designed to escape.

How a control plane turns a lakehouse into a managed open platform

The lakehouse has all the right building blocks — open storage, open format, composable engines, standardized catalogs. What it lacks is an operational layer that ties them together and keeps everything healthy at scale. A control plane fills that gap: it connects to your existing catalogs and storage, observes the state of every table across every engine, and continuously runs the maintenance operations that turn an unmanaged lakehouse into a managed open data platform — without requiring code changes, data movement, or pipeline modifications.

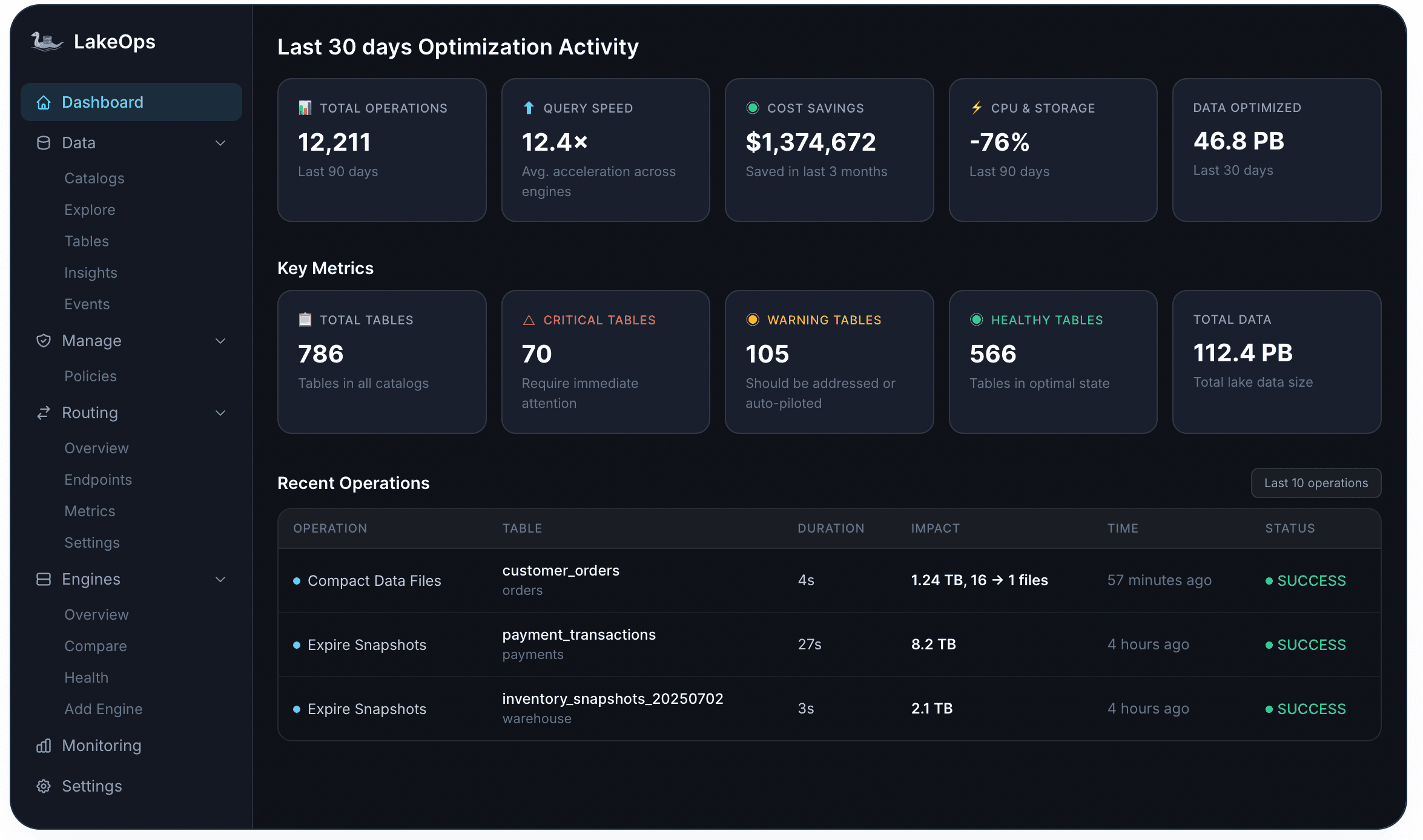

LakeOps is this control plane. It connects to AWS Glue, REST catalogs (Polaris, Gravitino, Nessie, Lakekeeper), and S3 Tables in roughly ten minutes. From that point, it provides seven capabilities that together make the lakehouse as simple to operate as a managed service — without the lock-in.

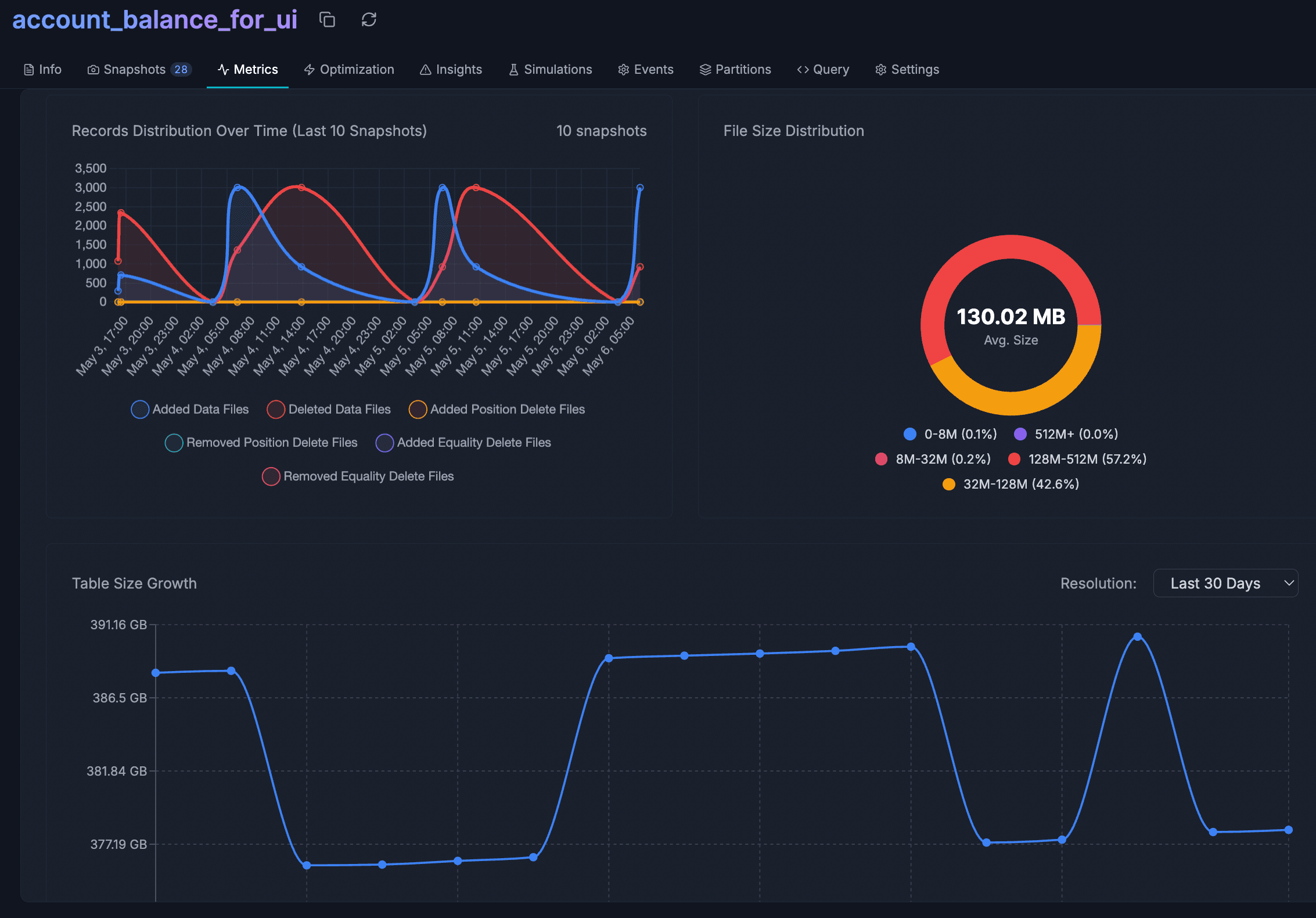

1. Lake-wide observability. You cannot automate what you cannot measure. The control plane unifies storage metrics, query performance, and Iceberg metadata into a single view — across all engines, all catalogs, and every table. Every table gets a health score based on file structure, manifest fragmentation, snapshot depth, and orphan accumulation. An insights engine surfaces problems at four severity levels — Critical, High, Warning, Low — before users notice them. This observability layer is the foundation: it feeds every autonomous decision downstream.

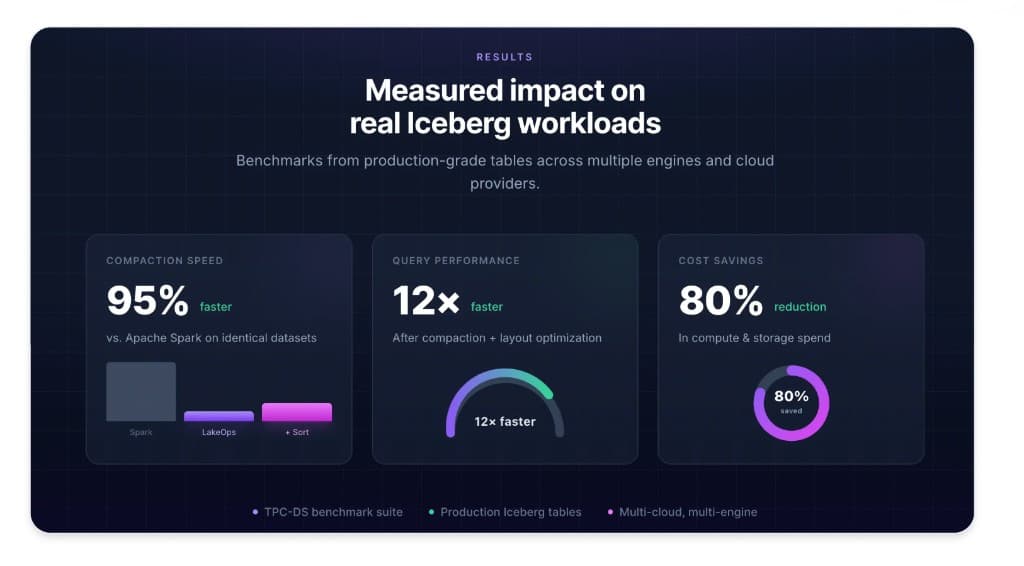

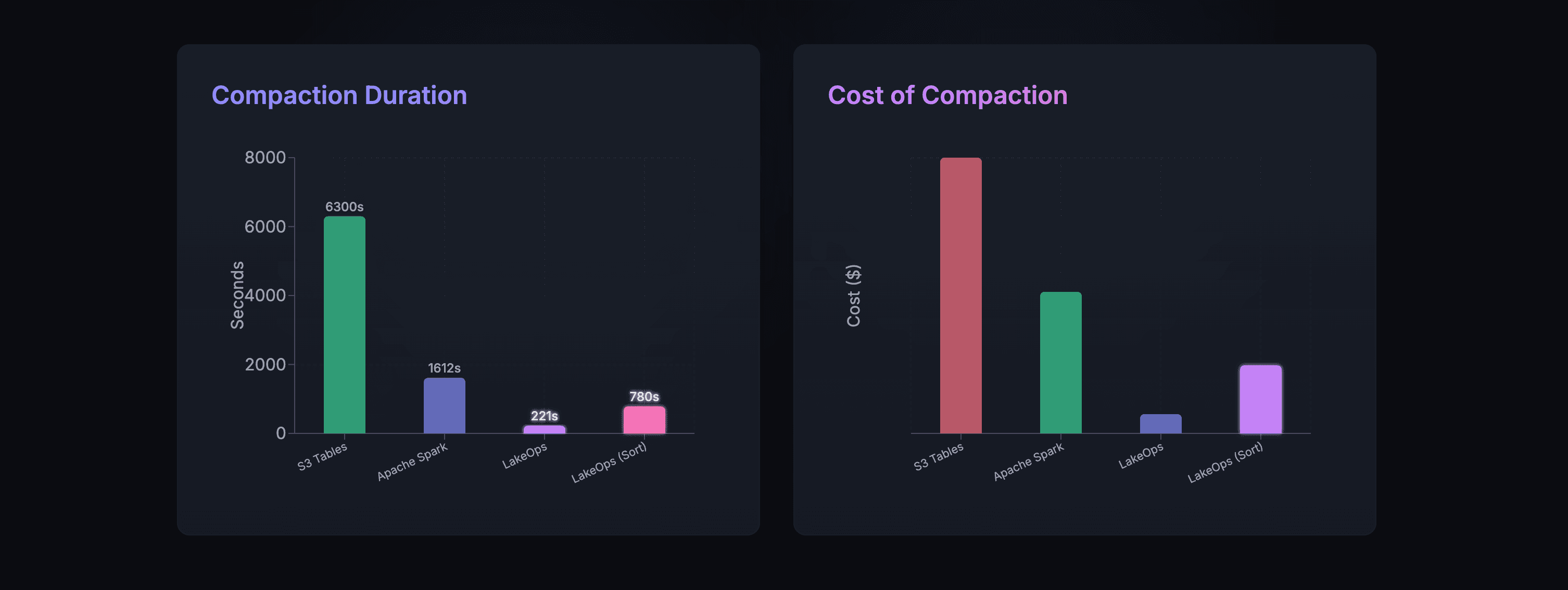

2. Query-aware compaction. Compaction is the highest-impact maintenance operation — and how you compact matters far more than whether you compact at all. Most tools treat it as a file-sizing exercise: merge small files into bigger ones. That reduces file count but leaves data physically unsorted, so every query still scans far more bytes than necessary. The control plane takes a different approach: it observes actual query patterns — which columns appear in WHERE clauses, which partitions are accessed most frequently — and physically reorganizes data files around real usage. Engines can then skip entire row groups using min/max statistics. The result is less data scanned per query, less CPU per read, across every engine. The compaction engine is built in Rust with Apache DataFusion — no JVM overhead, no idle cluster costs — and it continuously adapts sort order as query patterns shift, so the gains compound over time.

3. Snapshot lifecycle management. Every Iceberg write creates a new snapshot. Unbounded accumulation means table metadata grows with every commit, making query planning progressively more expensive, and data files referenced only by old snapshots cannot be reclaimed until those snapshots are expired. The control plane manages the full lifecycle: configurable retention policies, conflict-aware expiration that never removes a snapshot an active reader depends on, and tagging and branching for controlled rollback.

4. Manifest and metadata optimization. Iceberg's manifest files are the index layer between snapshots and data files. After many append and compaction cycles, they fragment — a table might carry hundreds of manifests where a few dozen would suffice. The control plane automates manifest consolidation, position delete optimization, and Puffin statistics computation, reducing planning overhead and improving data skipping across Trino, Spark, Flink, and other engines.

5. Orphan file cleanup. Orphan files — data objects that no live snapshot references — accumulate from aborted writes, failed jobs, and interrupted compaction runs. They serve no analytical purpose but cost money on every storage bill. The control plane removes them safely using age-based thresholds, coordinated with snapshot expiration so that newly unreferenced files from expiration are captured in the same sweep.

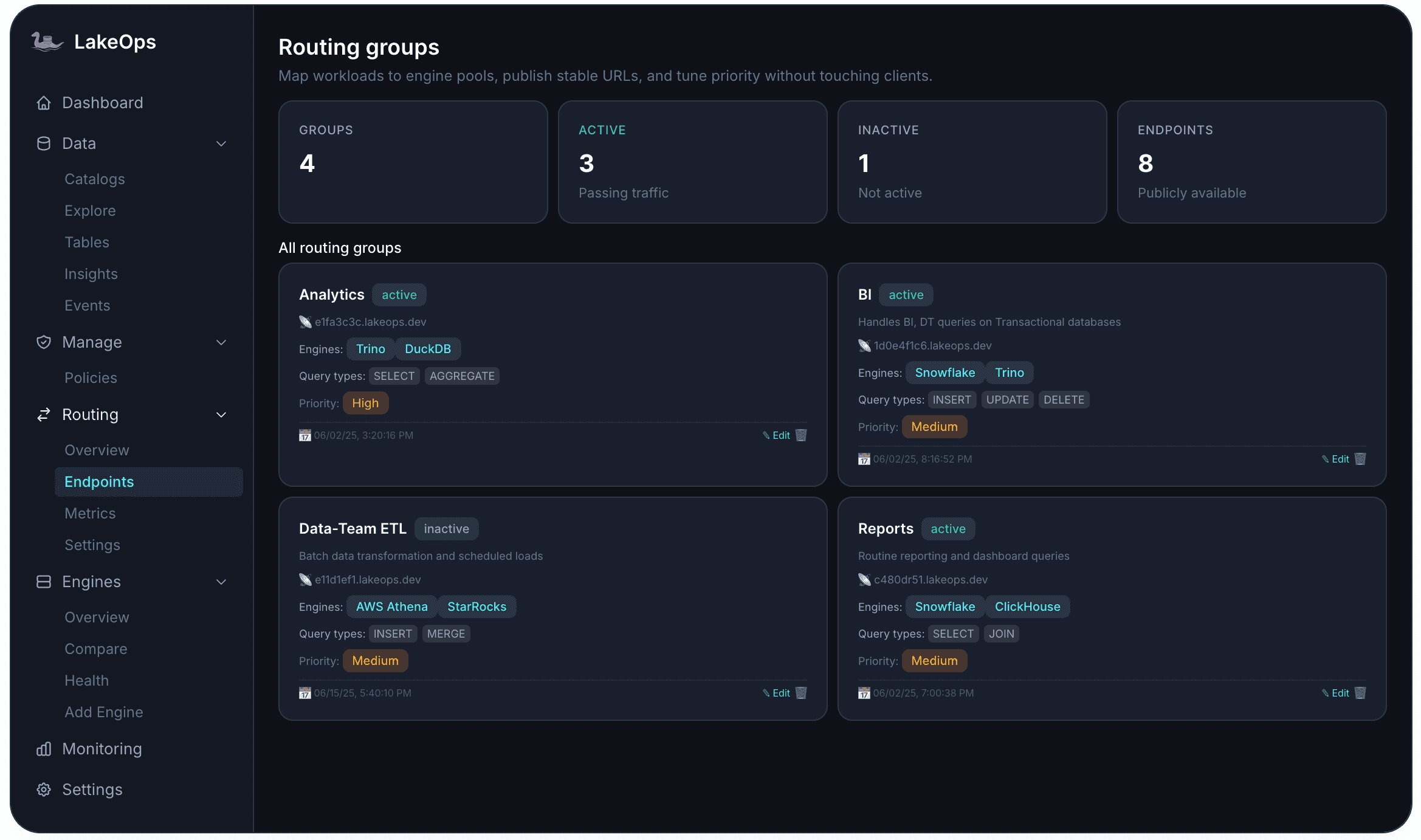

6. Multi-engine query routing. Production lakehouses use multiple engines for different workloads. Without a routing layer, each team picks the engine they know — regardless of cost or latency. The control plane routes queries across Trino, Spark, Snowflake, DuckDB, and more, optimized per workload for cost, latency, or throughput. Stable endpoint URLs give every consumer — human or AI agent — a single connection point that inherits the full policy and governance stack.

7. Agentic AI enablement. AI agents are becoming first-class data consumers — issuing SQL iteratively, generating unpredictable query shapes, and requiring sub-second latency from tables designed for batch traffic. The control plane provides an agent-native MCP interface with schema discovery, layered guardrails (read-only enforcement, cost estimation, PII masking), and cost-aware routing. Because compaction continuously reshapes file layout based on actual query patterns — including agent queries — the lake self-optimizes as AI adoption scales.
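The data-skipping effect behind query-aware compaction (capability 2 above) can be shown with a small simulation. This is a sketch of the general min/max pruning technique, not the LakeOps implementation: the "files" are Python lists, and `chunk` and `files_scanned` are hypothetical helpers. It compares merging rows by size alone against sorting on the filtered column before writing.

```python
import random


def chunk(rows: list[int], size: int) -> list[dict]:
    """Split rows into fixed-size 'files' and record each file's min/max."""
    files = []
    for start in range(0, len(rows), size):
        part = rows[start:start + size]
        files.append({"min": min(part), "max": max(part), "rows": part})
    return files


def files_scanned(files: list[dict], lo: int, hi: int) -> int:
    """Count files whose [min, max] range overlaps the predicate lo <= x <= hi."""
    return sum(1 for f in files if f["max"] >= lo and f["min"] <= hi)


random.seed(0)
values = [random.randrange(1_000_000) for _ in range(10_000)]

unsorted_files = chunk(values, 1_000)        # merged by size only
sorted_files = chunk(sorted(values), 1_000)  # clustered on the filter column

unsorted_hits = files_scanned(unsorted_files, 450_000, 460_000)
sorted_hits = files_scanned(sorted_files, 450_000, 460_000)
print(f"size-only compaction scans {unsorted_hits} of {len(unsorted_files)} files")
print(f"sorted compaction scans {sorted_hits} of {len(sorted_files)} files")
```

With randomly ordered rows, every file's min/max range spans almost the whole value domain, so a selective predicate cannot rule any file out. After sorting, each file covers a narrow range and most files are skipped without being opened.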

Why sequencing and coordination matter

The value of a control plane is not just that it runs maintenance operations — it is that it runs them in the right order, at the right time, aware of what every other operation has done.

When maintenance jobs run independently as disconnected scripts (a Spark compaction cron here, an Airflow expiration DAG there), teams inevitably repeat work, miss opportunities, or create conflicts. A compaction job merges files that are about to be garbage-collected by an expiration run. An orphan cleanup misses files that were just dereferenced by a concurrent expiration. A manifest rewrite runs against stale data because compaction has not finished yet.

The control plane eliminates this by coordinating operations as a pipeline: snapshot expiration runs first — removing old table versions and freeing the metadata tree. Orphan cleanup runs after expiration — catching the newly unreferenced files that expiration just produced. Compaction runs on clean, current data — so it never wastes cycles on files that are about to be deleted. Manifest optimization runs after compaction — so it operates on the final, compacted file set. Each operation's output becomes the next one's clean input.
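The ordering described above can be sketched against a toy table model. This is an illustration only: `ToyTable` and the four step functions are hypothetical, age thresholds and concurrency are left out, and real snapshot expiration and orphan removal work on metadata and object listings rather than Python sets.

```python
from dataclasses import dataclass, field


@dataclass
class ToyTable:
    """Hypothetical table state: each snapshot maps to the files it references."""
    snapshots: dict[int, set[str]]                  # snapshot id -> referenced files
    storage: set[str] = field(default_factory=set)  # every object under the table path
    log: list[str] = field(default_factory=list)


def expire_snapshots(table: ToyTable, keep_last: int = 1) -> None:
    """Drop all but the newest keep_last snapshots."""
    ids = sorted(table.snapshots)
    for snapshot_id in ids[:max(len(ids) - keep_last, 0)]:
        del table.snapshots[snapshot_id]
    table.log.append("expire")


def remove_orphans(table: ToyTable) -> None:
    """Delete stored objects that no remaining snapshot references."""
    live = set().union(*table.snapshots.values())
    table.storage &= live
    table.log.append("orphans")


def compact(table: ToyTable) -> None:
    """Commit a new snapshot that replaces the current files with one merged file."""
    new_id = max(table.snapshots) + 1
    merged = f"compacted-{new_id}.parquet"
    table.snapshots[new_id] = {merged}
    table.storage.add(merged)
    table.log.append("compact")


def rewrite_manifests(table: ToyTable) -> None:
    """Placeholder: manifests would be consolidated against the compacted layout."""
    table.log.append("manifests")


# Order matters: each step runs on the state the previous step left behind.
PIPELINE = [expire_snapshots, remove_orphans, compact, rewrite_manifests]


def run_maintenance(table: ToyTable) -> None:
    for step in PIPELINE:
        step(table)
```

Running orphan cleanup before expiration in this model would leave behind the files that expiration dereferences. Running compaction first would spend work on a state that is about to change. The previous snapshot's files remain in storage after compaction because that snapshot is still retained; they become reclaimable on a later cycle.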

This sequencing produces compound results that no collection of independent scripts can match. Storage costs drop because expired snapshots, orphan files, and fragmented small files are removed in order. Compute costs drop because query-aware compaction means engines scan dramatically less data on every read. Metadata overhead drops because manifests are consolidated against the final layout. And lake-wide policies codify all of this — retention windows, compaction thresholds, file size targets, scheduling cadences — so the entire pipeline runs autonomously across every table in every catalog, with scope resolution from catalog-wide baselines down to table-level overrides.
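Scope resolution of this kind can be sketched as layered dictionary merging, where the most specific scope wins. The policy keys, the intermediate namespace level, and the `resolve_policy` function are assumptions made for illustration and do not describe an actual LakeOps configuration format.

```python
def resolve_policy(
    table_id: str,
    catalog_policy: dict,
    namespace_policies: dict[str, dict],
    table_policies: dict[str, dict],
) -> dict:
    """Merge policies from broadest to narrowest scope; later layers override."""
    # Assumes identifiers of the form "namespace.table" (hypothetical convention).
    namespace = table_id.rsplit(".", 1)[0]
    resolved = dict(catalog_policy)                        # catalog-wide baseline
    resolved.update(namespace_policies.get(namespace, {})) # namespace overrides
    resolved.update(table_policies.get(table_id, {}))      # table-level overrides
    return resolved


catalog_policy = {"snapshot_retention_days": 7, "target_file_size_mb": 512}
namespace_policies = {"sales": {"snapshot_retention_days": 30}}
table_policies = {"sales.orders": {"target_file_size_mb": 256}}

orders = resolve_policy("sales.orders", catalog_policy, namespace_policies, table_policies)
people = resolve_policy("hr.people", catalog_policy, namespace_policies, table_policies)
```

Here `orders` inherits the 30-day retention from its namespace and takes its own 256 MB file target, while `people` falls back to the catalog baseline for both settings. Most tables need no table-level entry at all, which is what keeps the policy set manageable as table counts grow.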

The result is a lakehouse that continuously self-optimizes: fewer files, less stale data, leaner metadata, faster planning, less compute per query, and less engineering time. Not from one optimization, but from the compound effect of every operation running in coordination.

The future is managed, open, and autonomous

This is not a theoretical trajectory. Iceberg is the de facto open table format. REST catalogs are the de facto metadata interface. Multi-engine architectures are standard in production. Snowflake and Databricks are becoming components in a broader open ecosystem — powerful engines chosen when their specific strengths justify their cost.

What has been missing is the operational layer. The teams that tried to run open lakehouses manually (with cron jobs, custom Spark compaction clusters, and per-engine monitoring dashboards) are the ones now adopting a control plane, because the operational burden scales faster than any team can hire for. The control plane is what turns an open lakehouse from an architectural ideal into a managed platform that works in production.

The format question is settled. The vendor question is settling. The operational question — who keeps hundreds of tables healthy across multiple engines, enforces governance lake-wide, and prepares infrastructure for AI agents — is the one that determines whether your lakehouse becomes a managed open data platform or drifts back toward vendor lock-in. That is exactly what LakeOps was built for.