How data lakes become data swamps

Every data lake starts with the same pitch: dump everything into object storage, process it with whatever engine fits the workload, and stop paying warehouse markups. The early returns are convincing — storage costs plummet, data variety explodes, and teams feel liberated from rigid schemas.

Then the lake turns into a swamp.

It happens gradually. A streaming pipeline writes thousands of tiny Parquet files per hour into partitions nobody documented. Failed Spark jobs leave orphan files that no catalog tracks but S3 charges for every month. Schema changes break downstream consumers silently because there is no enforcement layer. Engineers who once analyzed data now spend their days debugging file layouts, chasing partition skew, and writing one-off compaction scripts that work on one table and break on the next.

The root cause is structural. Traditional data lakes store raw files on object storage — Parquet on S3, GCS, or ADLS — organized by naming conventions rather than contracts. There is no transactional layer, no schema enforcement, and no metadata agreement between the systems writing data and the systems reading it. The result is a set of compounding problems that only get worse with scale:

No ACID guarantees. Concurrent writes produce corrupted or inconsistent state. A pipeline writing to a partition while an analyst reads from it may return partial results, duplicate rows, or errors — with no mechanism to detect or prevent it.

No schema enforcement. When a producer changes a column type or drops a field, downstream consumers break silently, and the breakage is often discovered only hours or days later.

No partition management. Partition schemes are baked into directory paths. Changing a partitioning strategy means rewriting the entire dataset — so teams live with suboptimal layouts indefinitely, accepting steadily degrading query performance.

No lifecycle management. Files accumulate indefinitely. Old versions, failed writes, orphaned outputs — nothing is automatically cleaned up. Storage bills grow regardless of whether the data serves any analytical purpose.

No unified observability. Each engine (Spark, Trino, Athena) has its own metrics surface. There is no cross-engine view of table health, query patterns, or resource consumption. Problems surface only when users complain.

At small scale, these problems are manageable through convention and scripts. At production scale — hundreds of tables, multiple catalogs, several engines, dozens of consuming teams — the manual approach collapses. The lake is not a lake anymore. It is a swamp.

How Apache Iceberg drains the swamp

Apache Iceberg solves the reliability gap that kept data lakes inferior to data warehouses. It inserts a metadata layer between storage and compute — a contract that every engine can read and write against, without coordination between them. For a deeper dive into the format and its capabilities, see the Iceberg maintenance documentation.

ACID transactions make every write atomic. Concurrent readers never see partial state. Multiple engines can write to the same table safely through optimistic concurrency control.
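
To make the optimistic concurrency idea concrete, here is a minimal sketch in plain Python. It is not Iceberg's implementation: `ToyCatalog`, `CommitConflict`, and `append_with_retry` are hypothetical names, and the catalog is reduced to a version counter guarded by a compare-and-swap check. Each writer reads the current version, prepares its change, and commits only if nobody else committed in the meantime.

```python
import threading
from dataclasses import dataclass, field


class CommitConflict(Exception):
    """Raised when the table changed between read and commit."""


@dataclass
class ToyCatalog:
    # Hypothetical stand-in for a catalog's atomic pointer to table metadata.
    version: int = 0
    files: tuple = ()
    _lock: threading.Lock = field(default_factory=threading.Lock, repr=False)

    def load(self):
        """Return the version and file list a writer bases its work on."""
        return self.version, self.files

    def commit(self, expected_version, new_files):
        """Compare-and-swap: succeed only if no one committed since load()."""
        with self._lock:
            if self.version != expected_version:
                raise CommitConflict(
                    f"expected v{expected_version}, found v{self.version}"
                )
            self.version += 1
            self.files = new_files
            return self.version


def append_with_retry(catalog, new_file, max_attempts=3):
    """Re-read and retry when another writer won the race."""
    for _ in range(max_attempts):
        version, files = catalog.load()
        try:
            return catalog.commit(version, files + (new_file,))
        except CommitConflict:
            continue
    raise CommitConflict("gave up after retries")
```

A writer holding a stale version gets a `CommitConflict` instead of silently overwriting someone else's work, and readers only ever see a fully committed file list.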

Schema evolution handles structural changes without rewriting data. Add columns, rename them, widen types — consumers see the updated schema immediately, and existing data remains readable.

Hidden partitioning decouples the physical layout from the query interface. Iceberg derives partition values from column expressions (year, month, day, bucket, truncate) without requiring users to specify partition columns in queries. Partition evolution — changing the scheme — does not require rewriting data.
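
As a rough illustration of how partition values can be derived from column values, the sketch below implements the year, month, day, and truncate transforms in plain Python, following the definitions in the Iceberg table spec as we understand them (time-based transforms count units since 1970-01-01). The function names are ours, and the bucket transform is left out because it depends on a specific hash function.

```python
from datetime import date

EPOCH = date(1970, 1, 1)


def year_transform(d: date) -> int:
    """Years since 1970."""
    return d.year - 1970


def month_transform(d: date) -> int:
    """Months since 1970-01."""
    return (d.year - 1970) * 12 + (d.month - 1)


def day_transform(d: date) -> int:
    """Days since 1970-01-01."""
    return (d - EPOCH).days


def truncate_transform(width: int, value):
    """Strings keep their first `width` characters; integers round down
    to a multiple of `width` (Python's % is already non-negative)."""
    if isinstance(value, str):
        return value[:width]
    return value - (value % width)


# A writer stores only the column value; the partition value is derived.
event_date = date(2024, 3, 15)
partition = {
    "event_month": month_transform(event_date),
    "region_prefix": truncate_transform(2, "eu-west-1"),
}
```

Because the table metadata records which transform produced each partition field, a filter on the source column can be translated into a partition filter by the engine, which is why users never need to name partition columns in their queries.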

Time travel and snapshot isolation let you query any previous state of the table. Every write creates an immutable snapshot. Readers are isolated from concurrent writers. Rollback is a metadata operation, not a data recovery project.
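
The snapshot model can be sketched with a toy table in Python. This is an illustration under simplifying assumptions, not Iceberg's metadata layout: `ToyTable` and `Snapshot` are hypothetical, and a snapshot is reduced to an immutable set of file names. The point is that time travel is a lookup in the snapshot log and rollback only moves a pointer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    timestamp_ms: int
    files: frozenset


class ToyTable:
    def __init__(self):
        self.snapshots = []      # append-only log of immutable snapshots
        self.current_id = None   # pointer to the live snapshot

    def _by_id(self, snapshot_id):
        for snap in self.snapshots:
            if snap.snapshot_id == snapshot_id:
                return snap
        raise KeyError(snapshot_id)

    def current(self):
        return self._by_id(self.current_id)

    def commit(self, timestamp_ms, added=(), removed=()):
        """Every write produces a new snapshot; old ones are never edited."""
        base = self.current().files if self.current_id is not None else frozenset()
        files = (base - frozenset(removed)) | frozenset(added)
        snap = Snapshot(len(self.snapshots) + 1, timestamp_ms, files)
        self.snapshots.append(snap)
        self.current_id = snap.snapshot_id
        return snap

    def as_of(self, timestamp_ms):
        """Time travel: the latest snapshot committed at or before the time."""
        candidates = [s for s in self.snapshots if s.timestamp_ms <= timestamp_ms]
        if not candidates:
            raise ValueError("no snapshot at or before that time")
        return max(candidates, key=lambda s: s.timestamp_ms)

    def rollback_to(self, snapshot_id):
        """Rollback moves the pointer; no data files are rewritten."""
        self._by_id(snapshot_id)  # validate that the snapshot exists
        self.current_id = snapshot_id
```

Note that rollback leaves the snapshot log intact, which is also why old snapshots keep their files alive until something expires them.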

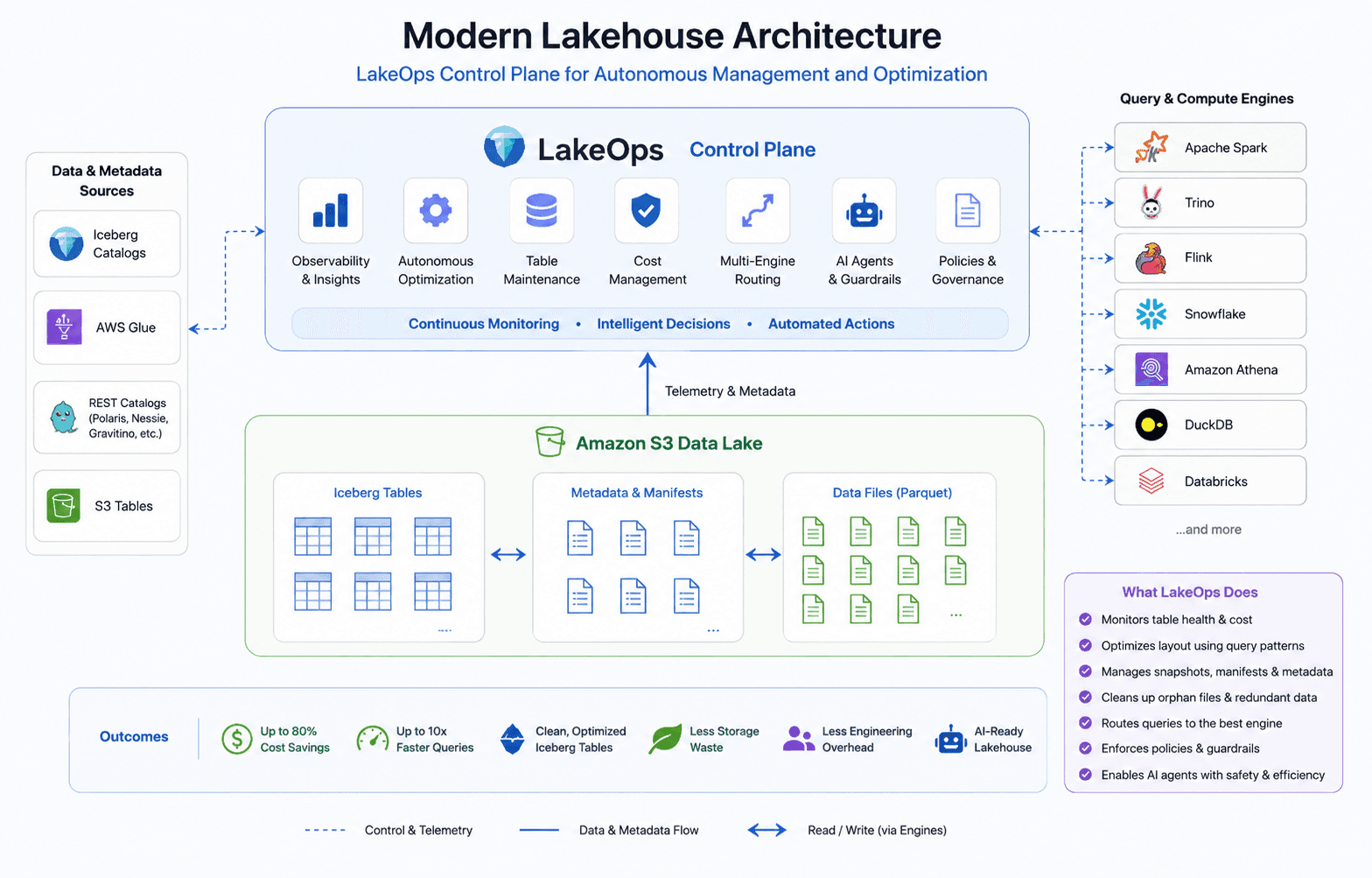

Engine independence means Spark, Trino, Snowflake, Athena, DuckDB, Flink, Databricks, and more all read and write the same Iceberg tables through a shared catalog — AWS Glue, REST catalogs (Polaris, Gravitino, Nessie, Lakekeeper), or S3 Tables. Different teams use different engines for different workloads, all against the same data.

Iceberg turns a data swamp into a reliable data lakehouse. Your data stays on commodity object storage — in your account, under your control — while the metadata layer provides the guarantees that were previously available only inside proprietary warehouses. For more on the shift from closed platforms to open lakehouses, see From Databricks and Snowflake to an Open Data Platform.

The gap Iceberg does not close

Iceberg gives you the primitives. It does not run them for you.

The format provides compaction APIs, snapshot expiration APIs, manifest rewrite APIs, and orphan file detection. But calling those APIs at the right time, in the right order, with the right parameters, across hundreds of tables and multiple catalogs — that responsibility falls entirely on your data platform team. At production scale, this operational burden is where most lakehouse implementations stall.

Compaction is necessary but not automatic. Streaming pipelines create thousands of small files per partition. Without continuous compaction, query engines open hundreds of tiny files instead of a few optimally sized ones, and because files are unsorted relative to actual query patterns, every read scans far more data than necessary. A query that returned in two seconds last quarter takes fifteen once small files pile up.
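
The file-sizing part of compaction is essentially a bin-packing problem. The sketch below is a simplified, hypothetical planner in Python: `plan_compaction` groups files below a size threshold into batches that each approach a target output size. The 512 MB target and 64 MB threshold are illustrative values, and a real planner would also respect partition boundaries and delete files.

```python
def plan_compaction(file_sizes_mb, target_mb=512, small_mb=64):
    """Group small files into batches of roughly `target_mb` each.

    Returns a list of groups; each group is a list of input file sizes
    that would be rewritten into one output file.
    """
    small = sorted((s for s in file_sizes_mb if s < small_mb), reverse=True)
    groups, current, current_size = [], [], 0
    for size in small:
        # Close the batch once adding this file would overshoot the target.
        if current and current_size + size > target_mb:
            if len(current) > 1:
                groups.append(current)
            current, current_size = [], 0
        current.append(size)
        current_size += size
    # Rewriting a single file on its own gains nothing, so skip such groups.
    if len(current) > 1:
        groups.append(current)
    return groups


# One healthy 600 MB file plus 100 streaming files of 8 MB each.
sizes = [600] + [8] * 100
plan = plan_compaction(sizes)
files_after = (len(sizes) - sum(len(g) for g in plan)) + len(plan)
```

In this example the plan rewrites the 100 small files into two outputs and leaves the large file untouched, so a reader opens three files instead of 101.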

Snapshots accumulate without bounds. Every write creates a new snapshot. Without configured expiration, table metadata grows with every commit, making query planning progressively more expensive. Snapshots that are no longer needed but have not been expired keep their data files referenced, so that storage cannot be reclaimed and the bill keeps inflating.

Orphan files cost money silently. Aborted writes, failed jobs, and interrupted compaction runs leave data objects on storage that no live snapshot references. Object storage charges per byte regardless. In production lakes, orphan files routinely account for a significant share of the storage bill.

Manifests fragment over time. After many append and compaction cycles, a table might carry hundreds of manifest files where a few dozen would suffice. Query planning has to open every manifest it cannot prune from the manifest list, so at 200+ manifests, planning overhead can dominate execution time.

At fifty tables, you can manage this with scripts and cron jobs. At five hundred tables across multiple catalogs and engines, the scripts become the problem — brittle, uncoordinated, and blind to the interactions between operations. Engineering time spent maintaining the maintenance layer grows linearly with table count. The team does not.

The control plane: the missing layer

The data swamp was caused by the absence of an operational layer. Iceberg added reliability but not operations. The missing piece is not a better script or a smarter cron job — it is an architectural layer: a control plane that sits between your storage, catalogs, and engines, observing the state of every table, understanding cross-engine query patterns, and applying the right maintenance at the right time.

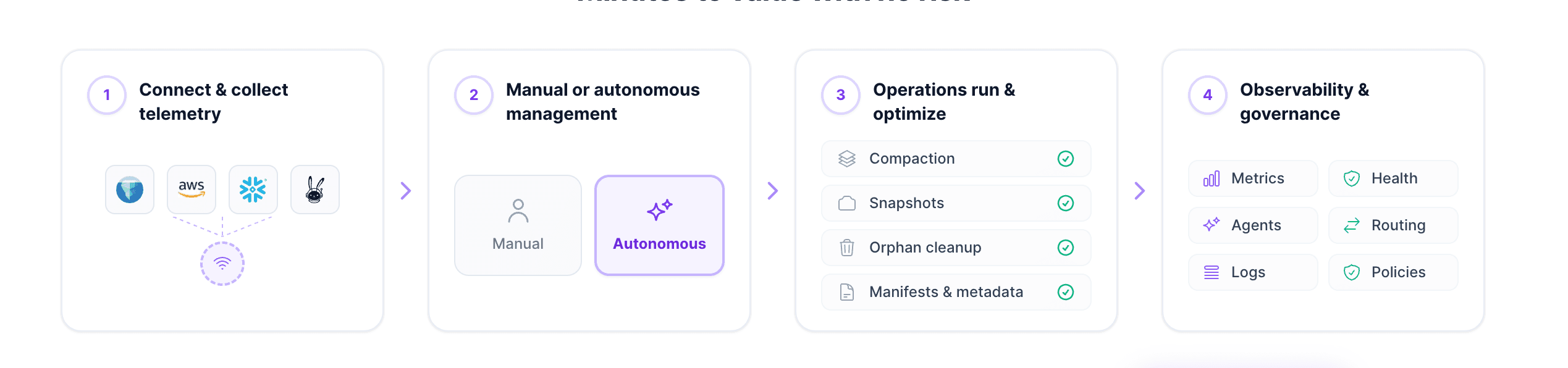

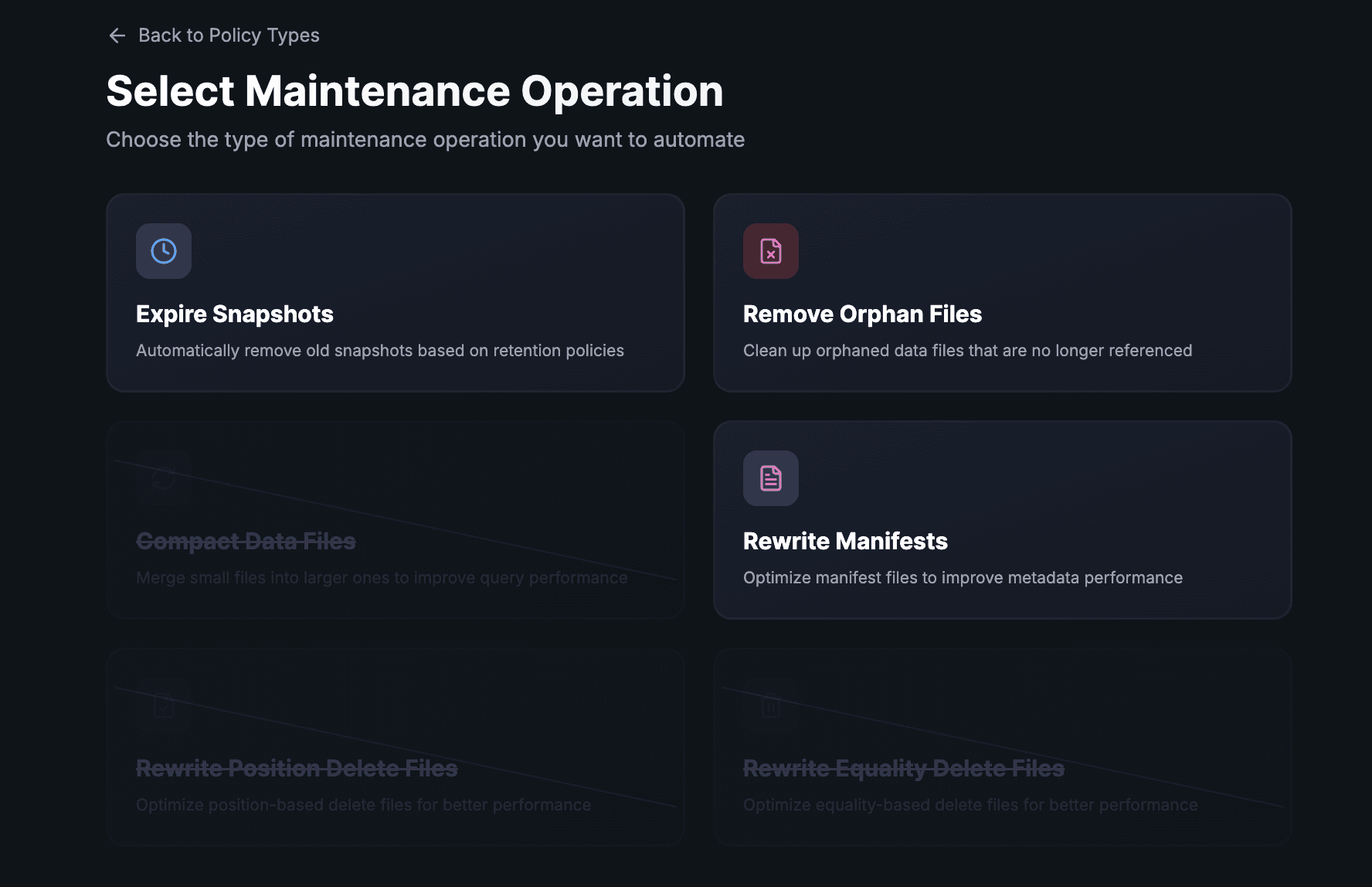

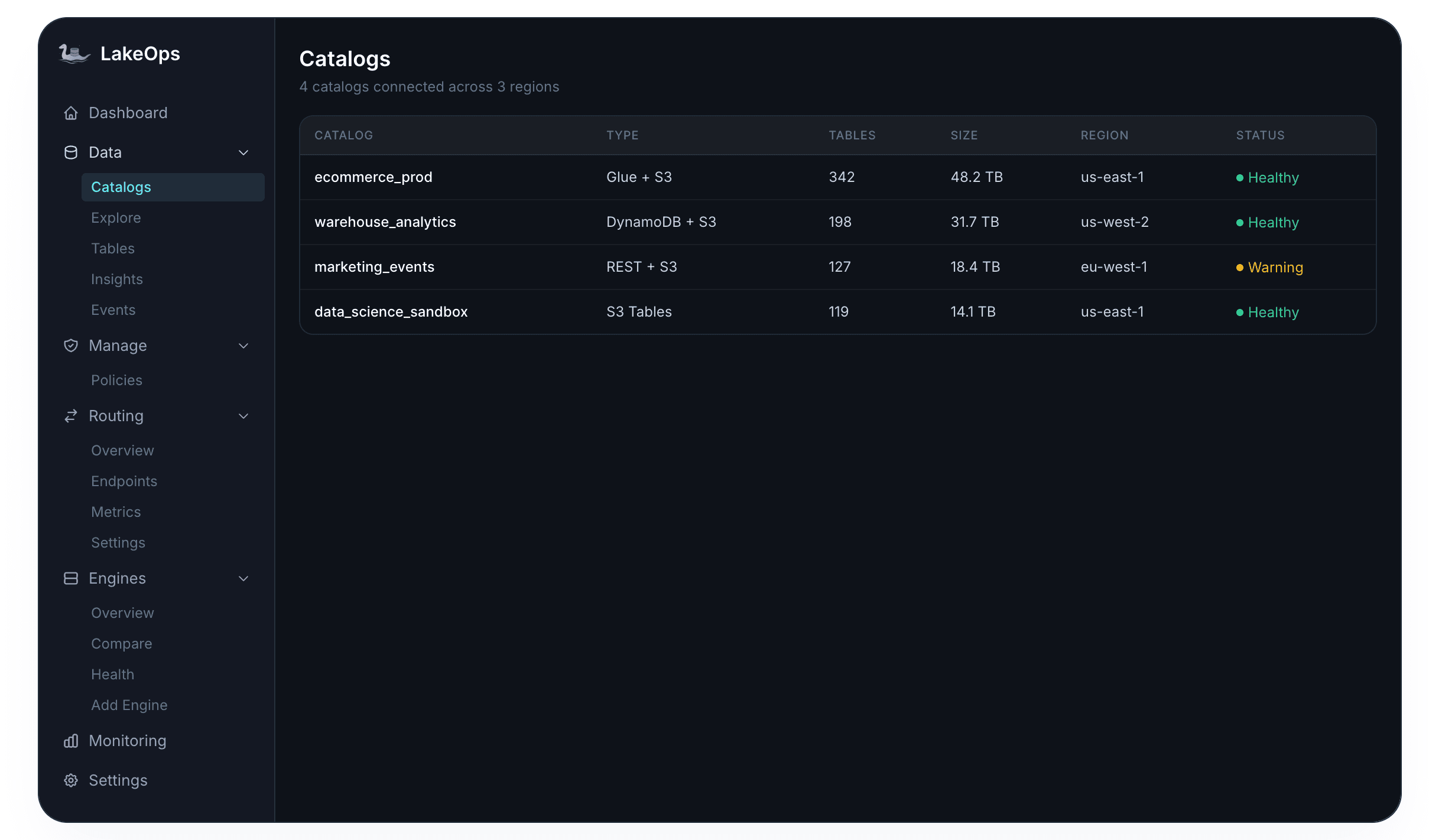

LakeOps is this control plane. It connects to your existing catalogs and object storage in roughly ten minutes — no data movement, no pipeline changes. From that point, it continuously handles everything the format leaves to you. For a detailed walkthrough of every component, see the Managed Iceberg Lakehouse: A Practical Guide.

The sections below walk through each component of what the control plane provides — from observability through compaction, snapshot lifecycle, manifests, orphan cleanup, policies, multi-engine routing, to AI readiness — and how each one contributes to a lakehouse that self-optimizes continuously.

1. Lake-wide observability

The first step out of any swamp is visibility. When three engines query the same Iceberg tables, each engine produces its own metrics in its own format — and none of them tell you about the underlying file structure. Storage metrics live in a cloud console. Query latency lives in engine dashboards. Iceberg metadata — manifest counts, snapshot depth, orphan accumulation — lives nowhere accessible without shell commands. Platform teams typically discover degradation only after users open a ticket.

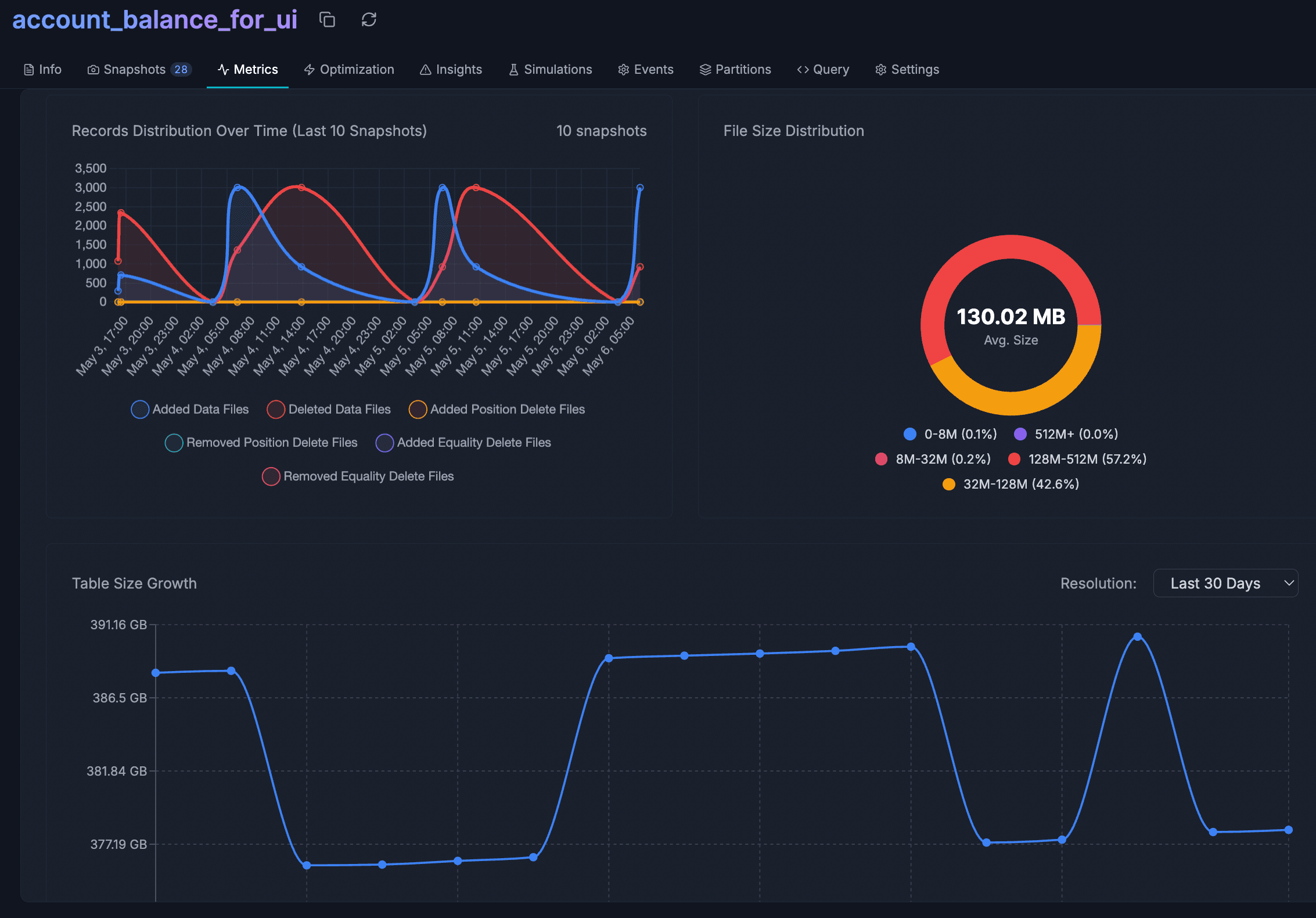

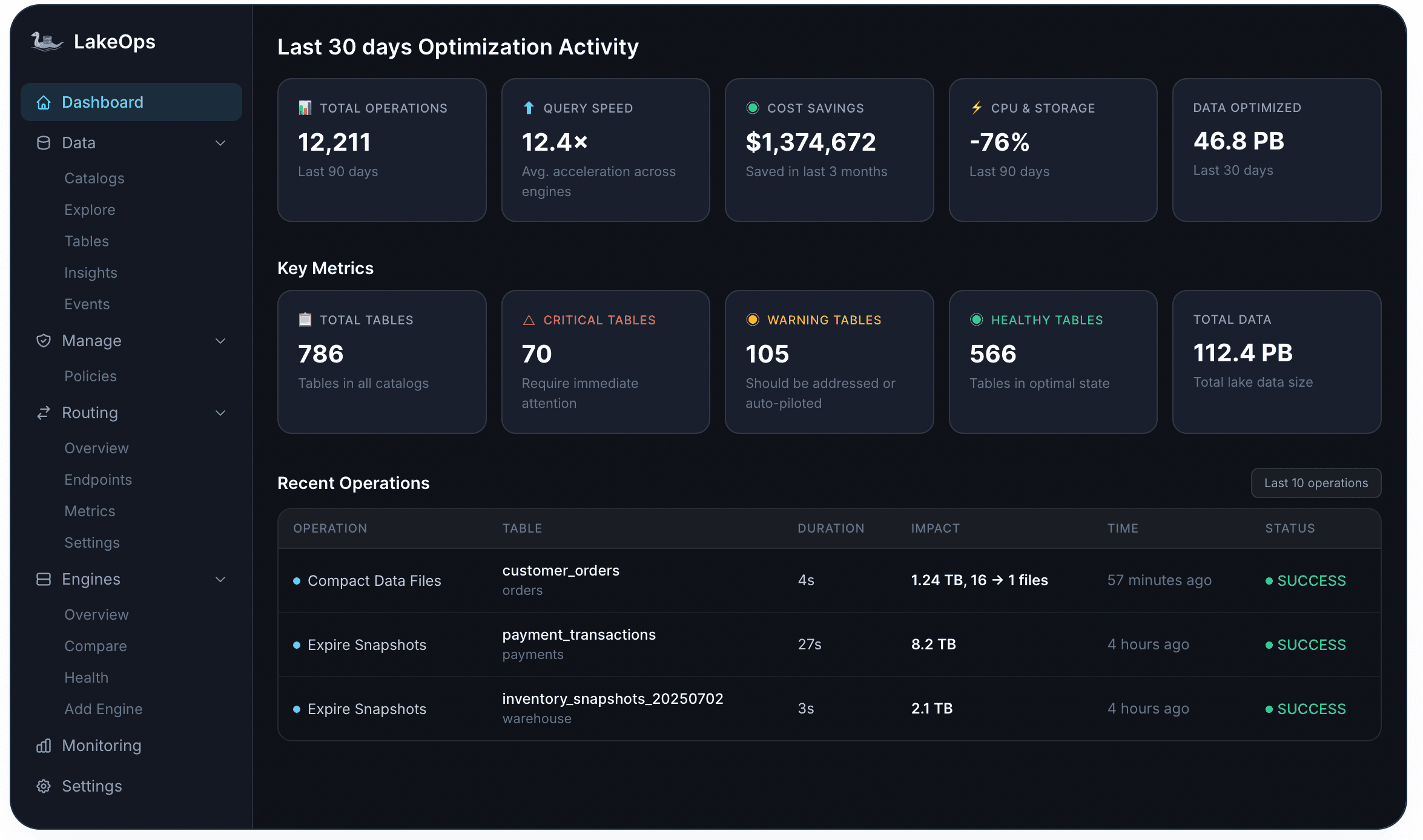

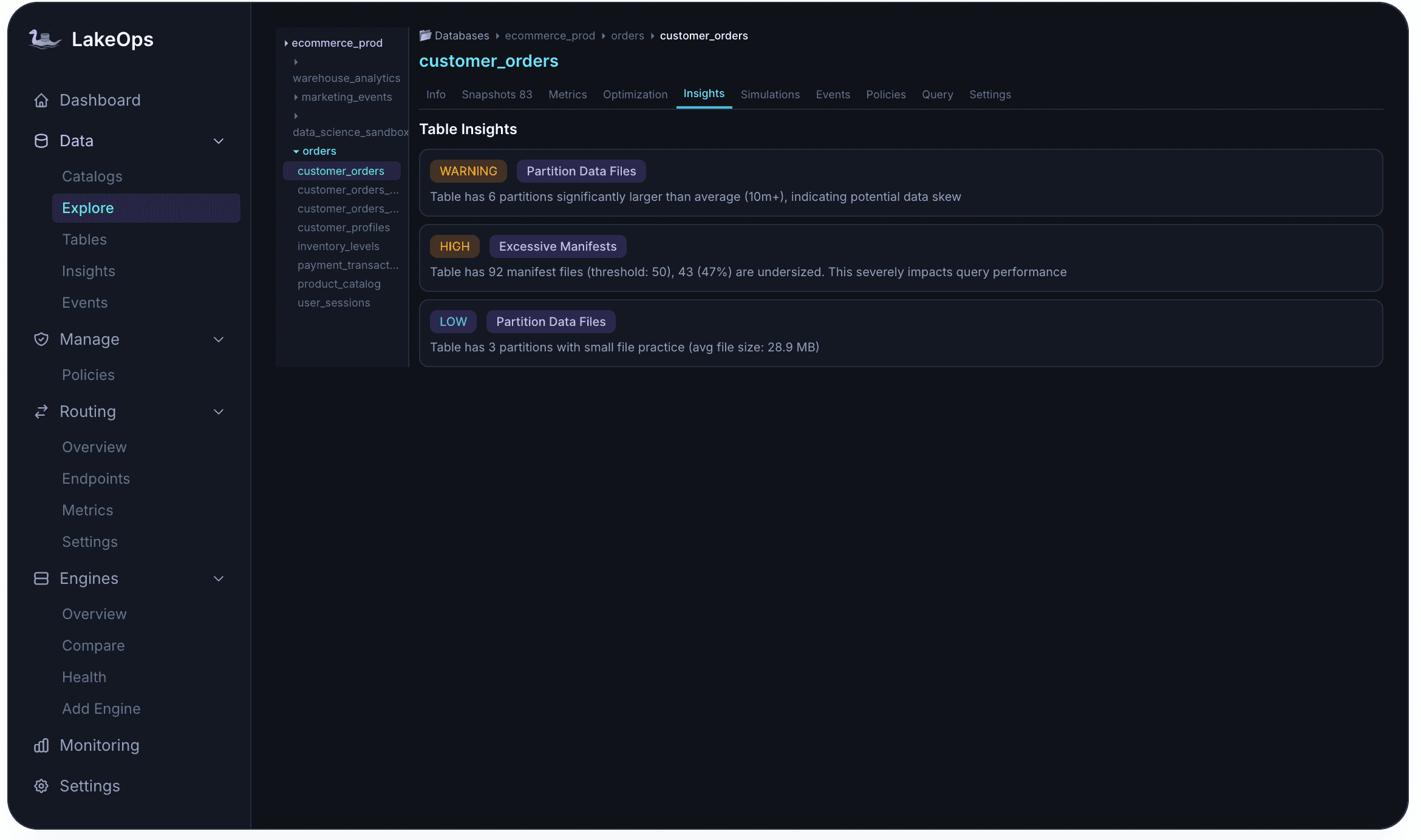

A control plane consolidates all of these signals into a single surface. At the lake level, the dashboard shows aggregate health: how many tables are in good shape, how many need attention, what the optimization activity looks like over the last 30 days, and what the cost and performance impact has been. Every table is scored and classified — Critical, Warning, or Healthy — based on structural indicators like small file density, manifest count, snapshot backlog, and orphan volume.
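
A health score of this kind can be approximated with a simple threshold classifier. The Python sketch below is only an illustration: the `TableStats` fields mirror the structural indicators named above, but the threshold values and the `classify` function are assumptions, not LakeOps defaults or its actual scoring logic.

```python
from dataclasses import dataclass


@dataclass
class TableStats:
    name: str
    small_file_ratio: float  # share of data files below the target size
    manifest_count: int
    snapshot_count: int
    orphan_gb: float


# Illustrative (warning, critical) thresholds per indicator; assumptions only.
THRESHOLDS = {
    "small_file_ratio": (0.30, 0.70),
    "manifest_count": (50, 200),
    "snapshot_count": (500, 5000),
    "orphan_gb": (10.0, 1000.0),
}


def classify(stats: TableStats) -> str:
    """Return the worst status across all indicators."""
    status = "Healthy"
    for metric, (warning, critical) in THRESHOLDS.items():
        value = getattr(stats, metric)
        if value >= critical:
            return "Critical"
        if value >= warning:
            status = "Warning"
    return status


fleet = [
    TableStats("events", 0.82, 240, 6100, 35.0),
    TableStats("orders", 0.35, 20, 120, 0.5),
    TableStats("customers", 0.05, 8, 40, 0.0),
]
report = {t.name: classify(t) for t in fleet}
```

Taking the worst indicator rather than an average keeps one severe problem, such as a runaway manifest count, from being hidden by otherwise healthy numbers.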

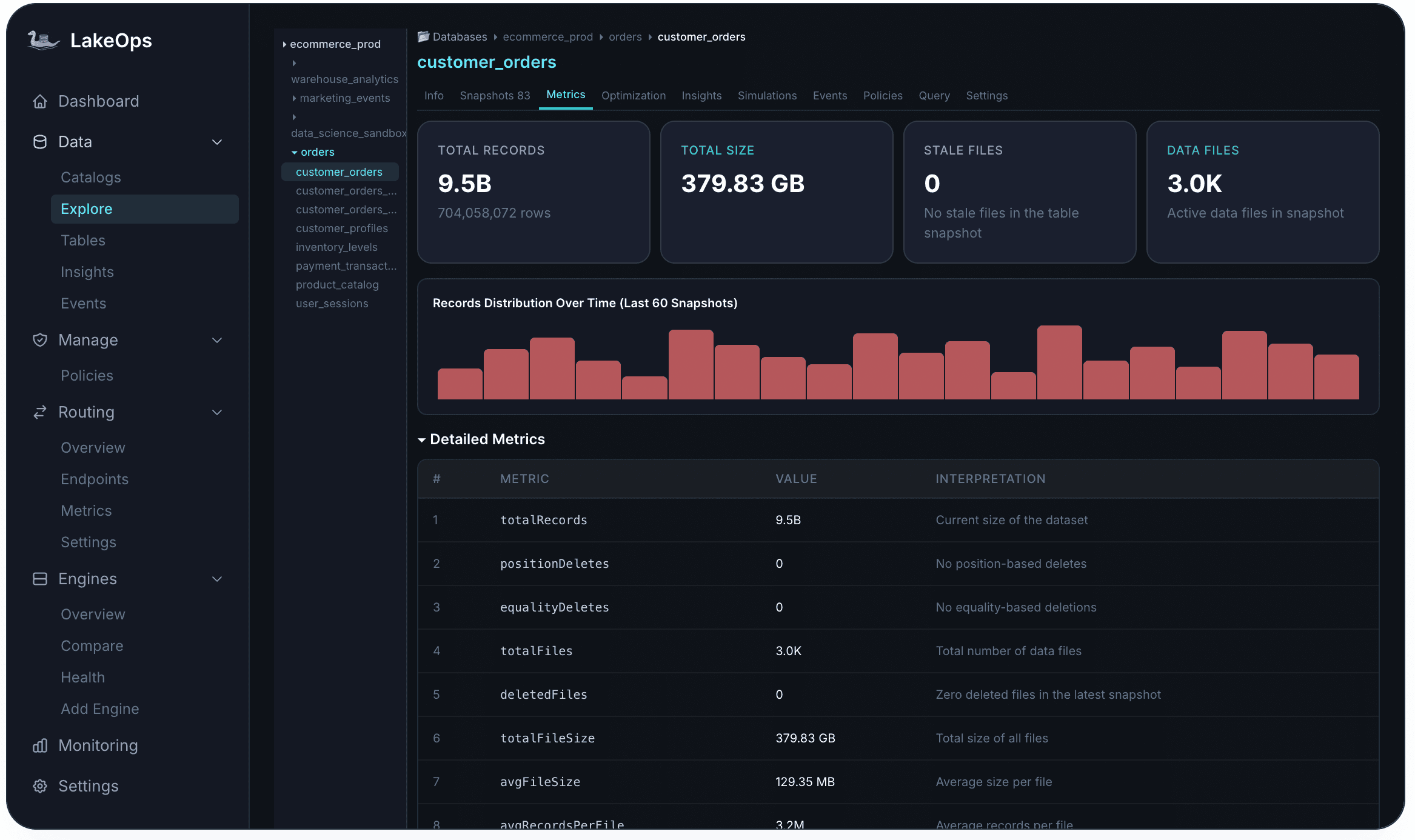

Drilling into any individual table reveals the structural detail: record counts, total size, active file count, average file size relative to the optimal range, and how data distribution has shifted across recent snapshots. Delete files, stale data, and partition imbalances are visible immediately — not after a manual Spark session.

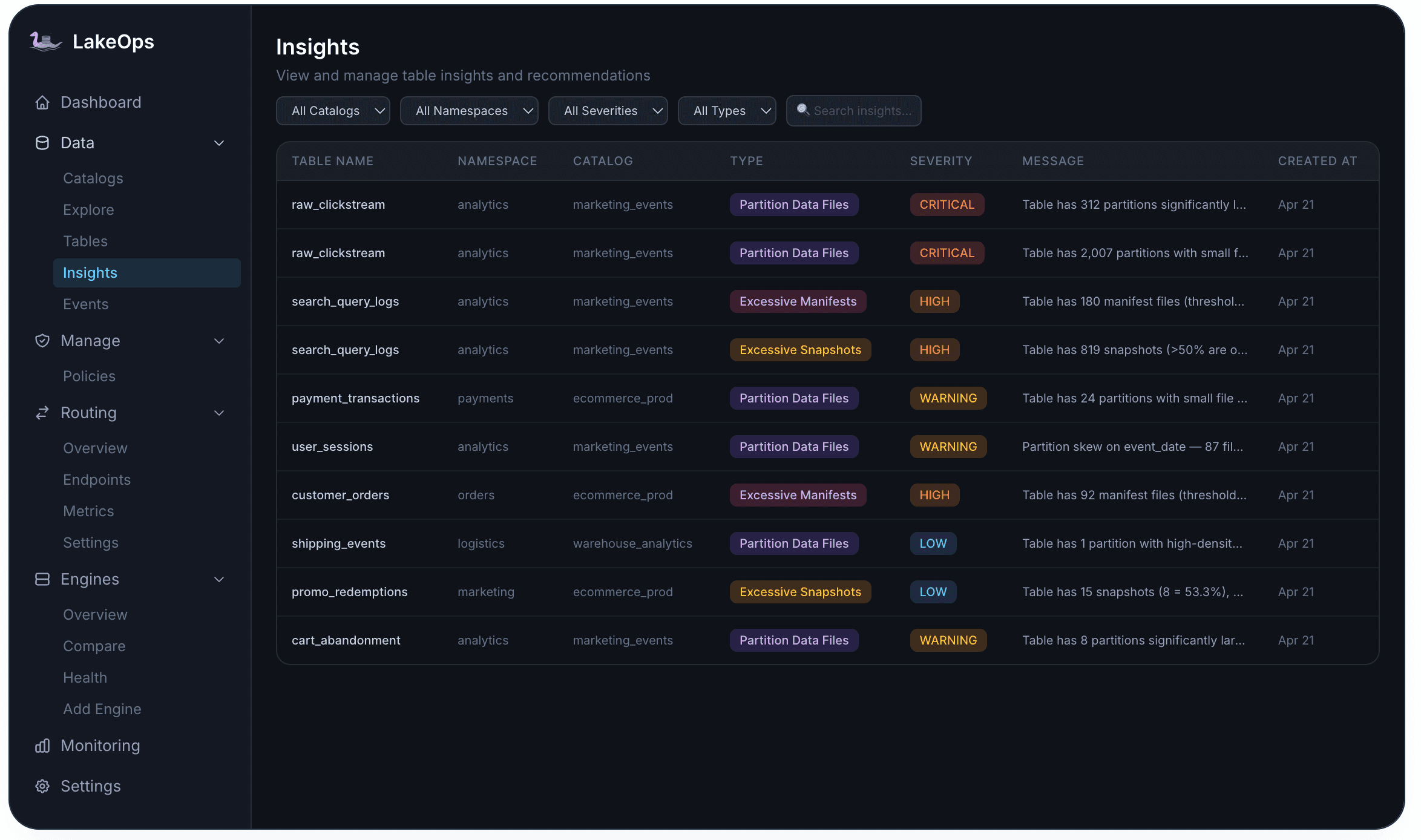

Proactive alerting completes the picture. An insights engine continuously evaluates every table against configurable thresholds and raises issues at four severity levels — from low-priority small-file warnings to critical partition failures. Each alert links directly to the affected table and the recommended fix. This health-scoring loop is what makes everything downstream — compaction, expiration, cleanup — autonomous rather than reactive.

2. Query-aware compaction

Compaction has the single largest impact on query performance and cost in an Iceberg lakehouse. But the approach matters more than the act itself.

Standard compaction merges small files into larger ones — a file-sizing exercise that reduces file count but leaves data physically unordered. Every query still scans most of the table because there is no alignment between file layout and actual access patterns.

The control plane goes further. It collects telemetry from real queries (filter predicates, join keys, partition access frequency) and uses that data to determine the optimal physical sort order for each table. If most queries filter on `created_at` and `region`, the compaction engine rewrites files sorted on those columns. Downstream, every engine benefits from min/max statistics that allow entire files and row groups to be skipped. For selective queries, scan volumes can drop by orders of magnitude, and the CPU savings multiply across every query hitting those tables.
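
Both halves of this idea can be sketched in a few lines of Python. The first function picks sort columns by counting how often each column appears in filter predicates; the second pair shows why sorting matters, by writing the same values into files unsorted and sorted and counting how many files a range filter must open based on per-file min/max. All names and the query-log shape are hypothetical, and the real engine presumably weighs more signals than filter frequency.

```python
from collections import Counter


def pick_sort_columns(query_log, max_columns=2):
    """Rank columns by how many queries filter on them."""
    counts = Counter()
    for query in query_log:
        counts.update(set(query["filters"]))
    # Sort by frequency, then by name so ties resolve deterministically.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [column for column, _ in ranked[:max_columns]]


def write_files(values, rows_per_file):
    """Split values into files and keep each file's (min, max) statistics."""
    chunks = [values[i:i + rows_per_file] for i in range(0, len(values), rows_per_file)]
    return [(min(chunk), max(chunk)) for chunk in chunks]


def files_to_scan(file_stats, lo, hi):
    """A file can be skipped when its min/max range misses the filter range."""
    return sum(1 for fmin, fmax in file_stats if fmax >= lo and fmin <= hi)


query_log = [
    {"filters": ["created_at", "region"]},
    {"filters": ["created_at"]},
    {"filters": ["region", "status"]},
    {"filters": ["created_at", "region"]},
]
sort_columns = pick_sort_columns(query_log)

# 100 distinct values arriving in scrambled order, 10 rows per file.
arrival_order = [(i * 37) % 100 for i in range(100)]
unsorted_scan = files_to_scan(write_files(arrival_order, 10), 40, 45)
sorted_scan = files_to_scan(write_files(sorted(arrival_order), 10), 40, 45)
```

With scrambled input every file's min/max range spans nearly the whole domain, so no file can be skipped; after sorting, the same filter touches a single file.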

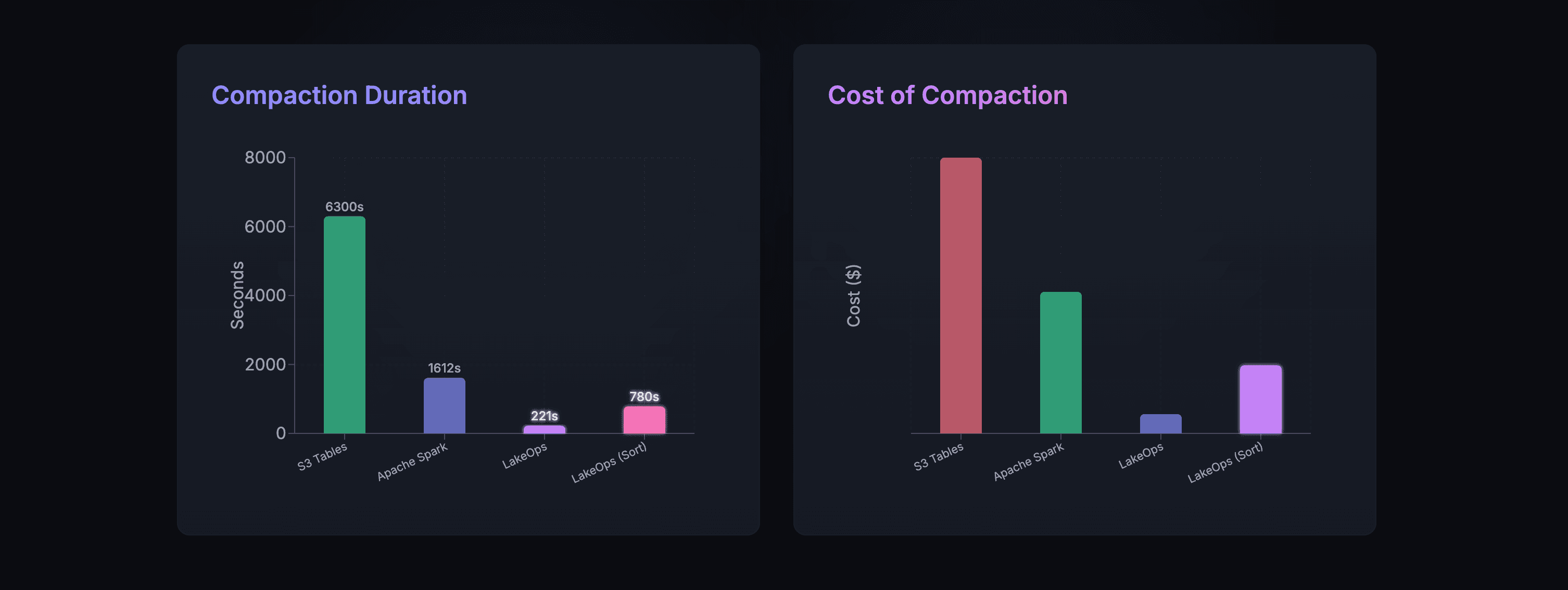

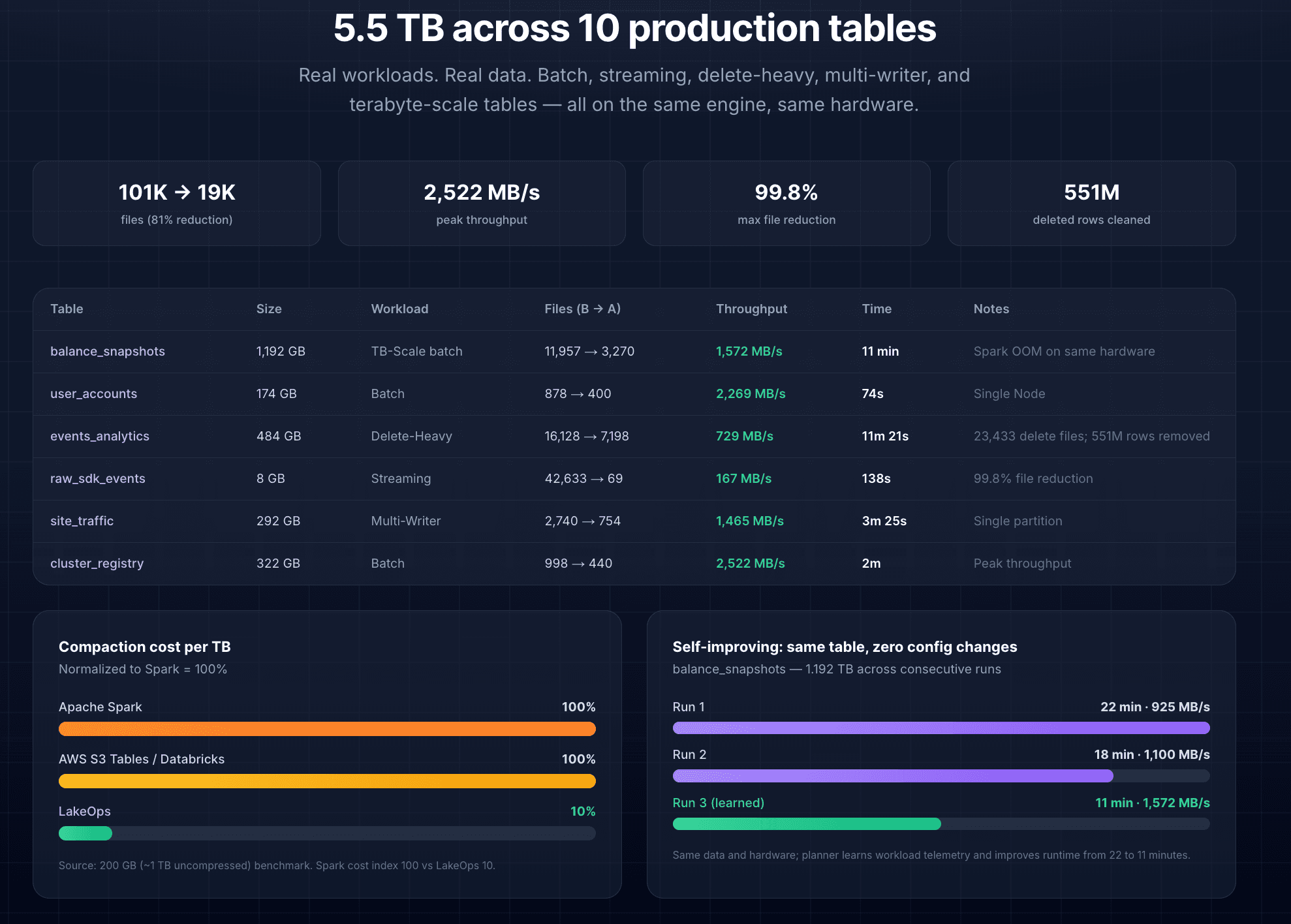

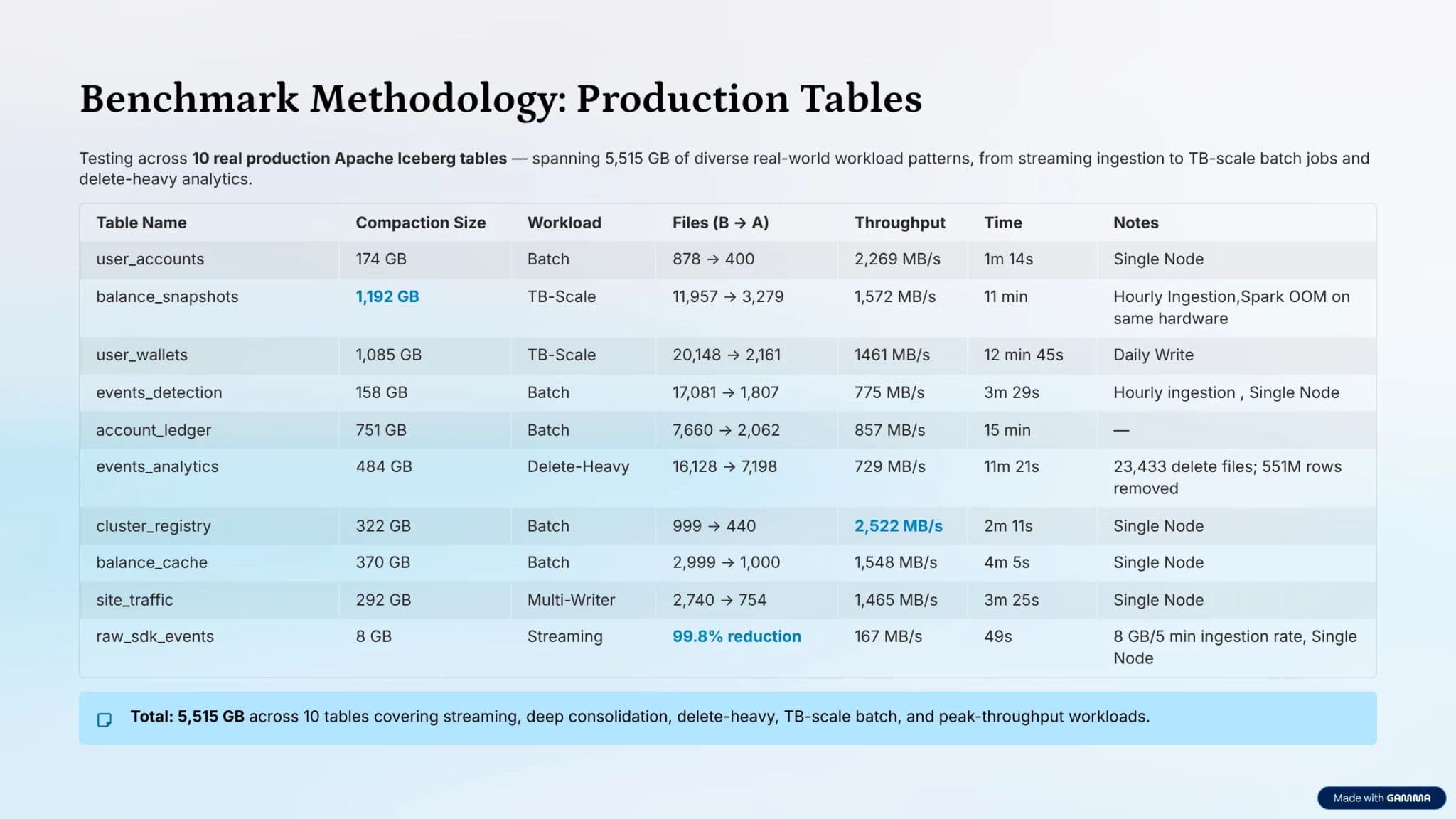

The compaction engine is written in Rust on top of Apache DataFusion, replacing JVM-based approaches entirely. There is no garbage collection overhead, no executor startup time, and no idle cluster to pay for between runs. In production benchmarks across 10 tables totaling 5.5 TB, file counts dropped by 81%, throughput peaked above 2,500 MB/s, and one streaming table saw a 99.8% reduction in file count.

Compaction commits are atomic — Iceberg's optimistic concurrency model ensures no read or write is blocked during the rewrite. And because the engine re-evaluates sort order as workload patterns evolve, the layout stays aligned with real usage rather than drifting over time. For a deep dive into compaction strategies, see Autonomous Iceberg Table Maintenance.

3. Snapshot lifecycle management

Iceberg snapshots are what make time travel and consistent reads possible: every write produces a new immutable version of the table. The problem is that without active management, the snapshot chain grows indefinitely. Table metadata grows, query planners spend more time navigating it than executing the scan, and data files referenced only by snapshots that should have been expired remain on storage, inflating the bill for no analytical benefit.

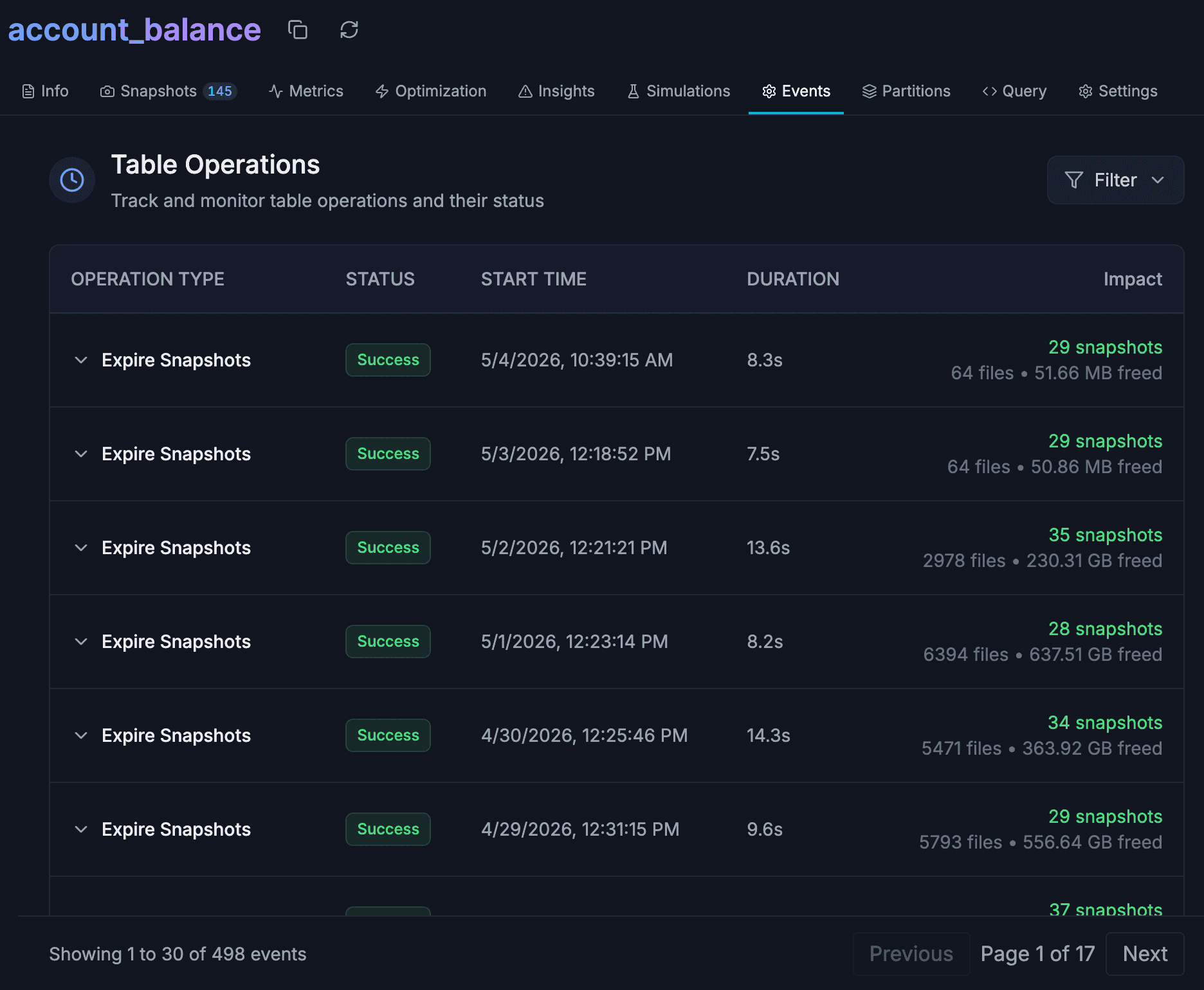

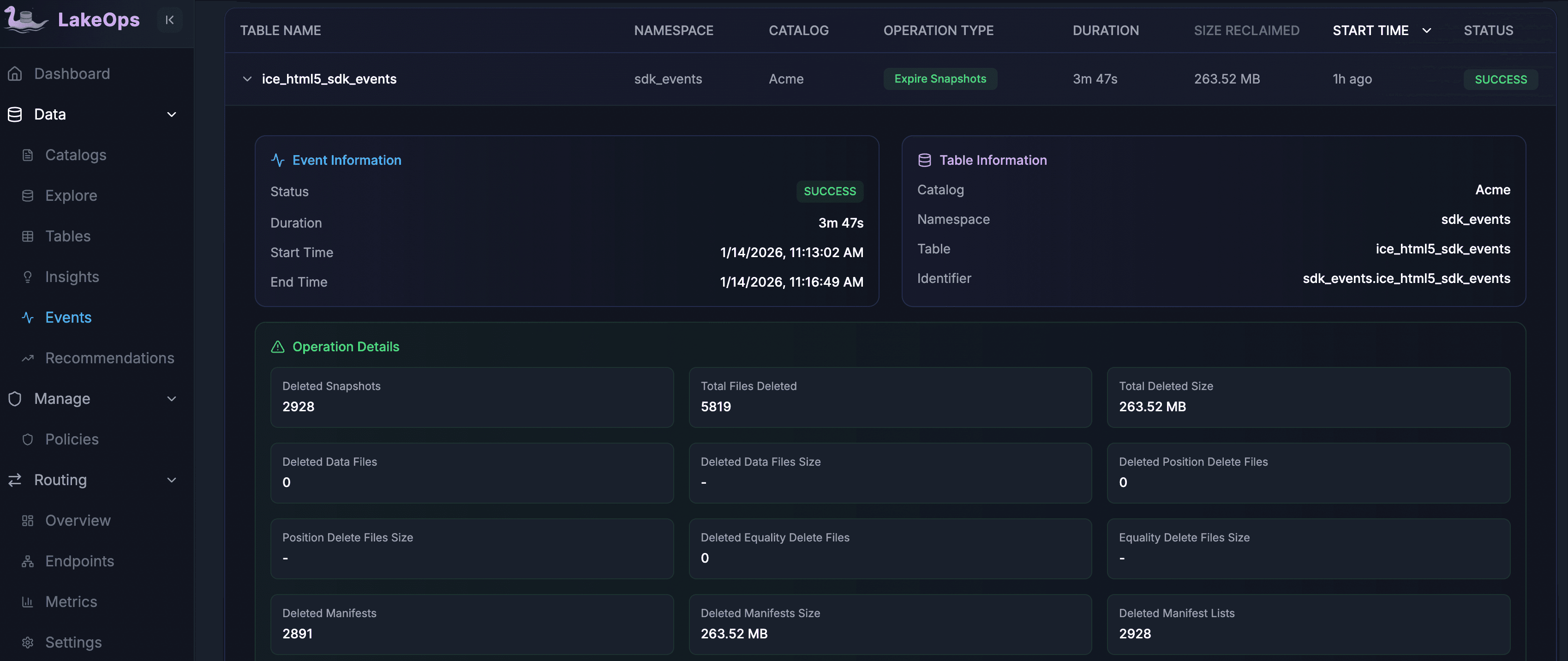

The control plane introduces full lifecycle control. Every snapshot is visible with its timestamp, operation type, and downstream references. Retention policies define how long snapshots should live and how many to preserve for time-travel capability, and they run on configurable schedules. The system is concurrency-safe: it tracks active readers and will not expire a snapshot that any open query depends on. Tags and branches provide controlled rollback without requiring a restore-from-backup workflow.
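
A retention policy of this shape boils down to a small selection rule. The Python sketch below is a simplified illustration: `snapshots_to_expire` is a hypothetical function, snapshots are plain dictionaries, and `protected_ids` stands in for anything that must not be expired, such as tagged snapshots, branch heads, or snapshots an open query still reads. The age and minimum-count parameters are similar in spirit to Iceberg's `history.expire.max-snapshot-age-ms` and `history.expire.min-snapshots-to-keep` table properties.

```python
def snapshots_to_expire(snapshots, now_ms, max_age_ms, min_to_keep,
                        protected_ids=frozenset()):
    """Select snapshot ids that are old enough and safe to expire.

    A snapshot is kept if it is among the `min_to_keep` most recent,
    younger than `max_age_ms`, or listed in `protected_ids`.
    """
    newest_first = sorted(snapshots, key=lambda s: s["timestamp_ms"], reverse=True)
    always_keep = {s["id"] for s in newest_first[:min_to_keep]}
    expire = []
    for snap in newest_first:
        too_old = now_ms - snap["timestamp_ms"] > max_age_ms
        if too_old and snap["id"] not in always_keep and snap["id"] not in protected_ids:
            expire.append(snap["id"])
    return expire


history = [
    {"id": 1, "timestamp_ms": 0},    # tagged for an audit, must survive
    {"id": 2, "timestamp_ms": 10},
    {"id": 3, "timestamp_ms": 20},
    {"id": 4, "timestamp_ms": 30},
    {"id": 5, "timestamp_ms": 40},
]
expired = snapshots_to_expire(
    history, now_ms=50, max_age_ms=25, min_to_keep=2, protected_ids={1}
)
```

Here snapshots 2 and 3 are selected: they are past the age limit, outside the two most recent, and unprotected, while snapshot 1 survives because it is explicitly protected.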

In practice, the storage reclaimed by snapshot expiration is substantial and recurring. Production tables regularly accumulate thousands of snapshots between expiration runs. A single pass can free hundreds of gigabytes by releasing the data files that only those snapshots referenced. Because writes are continuous, the reclamation is also continuous: not a one-time cleanup but a permanent reduction in baseline cost.

4. Manifest and metadata optimization

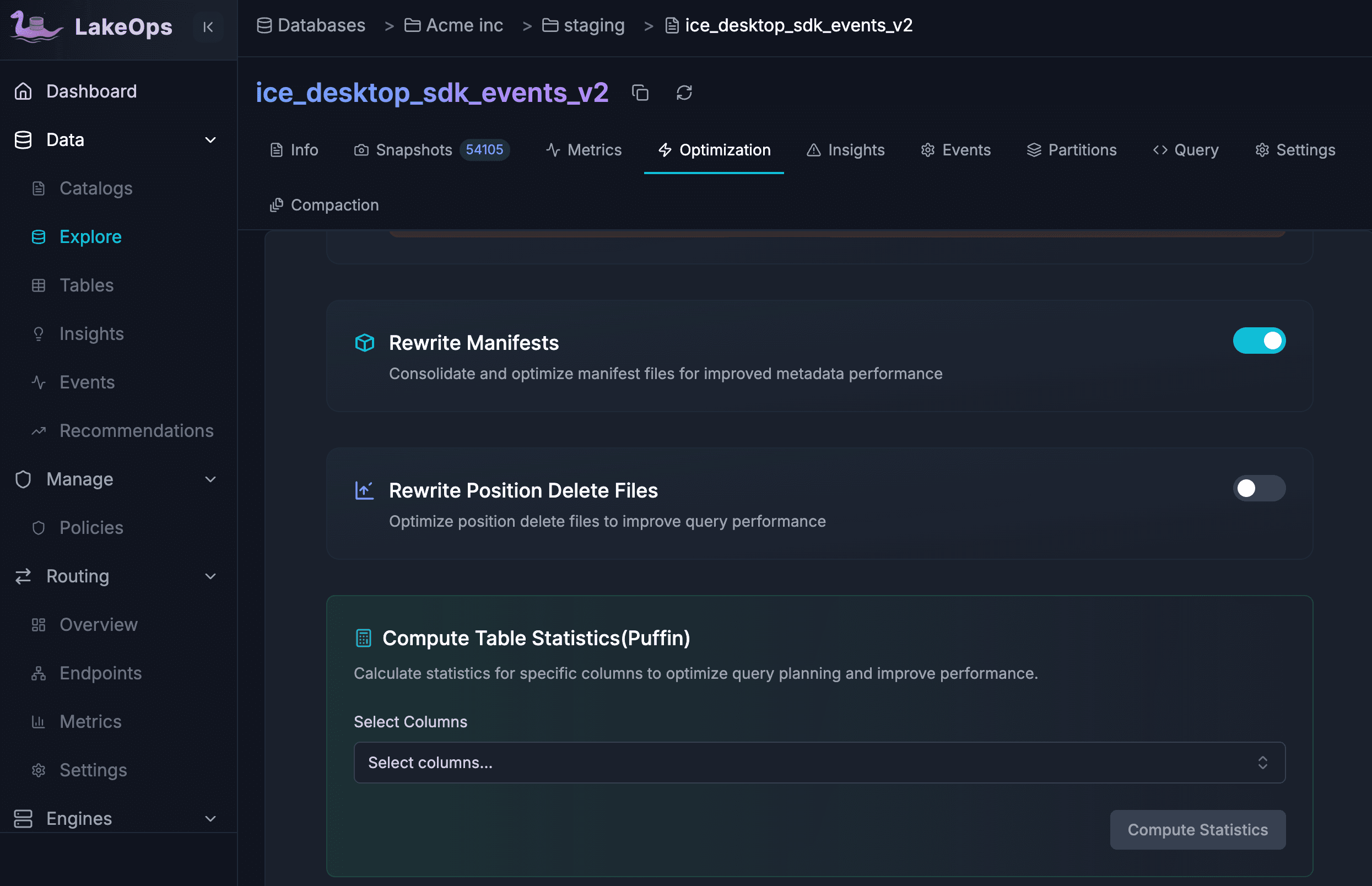

Manifests are Iceberg's index layer: each one maps a subset of data files and carries the column-level statistics that engines use for partition pruning and data skipping. The problem is that manifests accumulate. After many append and compaction cycles, a table that should have 30 manifests might carry 200. A query planner must open every manifest it cannot rule out from the manifest list to decide which data files to scan, and at high manifest counts, the planning phase can take longer than the scan itself.

The control plane addresses this with three automated operations. Manifest rewrites consolidate fragmented manifest files into fewer, denser ones — cutting planning time by an order of magnitude on large tables. Delete file consolidation merges the small position-delete files that merge-on-read operations leave behind, directly accelerating reads on tables with frequent row-level updates. Column statistics generation (via Puffin) produces granular min/max, null-count, and distinct-value statistics so engines can prune row groups more aggressively during scans.

Fragmentation is detected automatically. When manifest count crosses a configurable limit (default: 50), a HIGH-severity alert fires. In autonomous mode, the system triggers a rewrite without waiting for human intervention — keeping metadata lean as the table evolves.

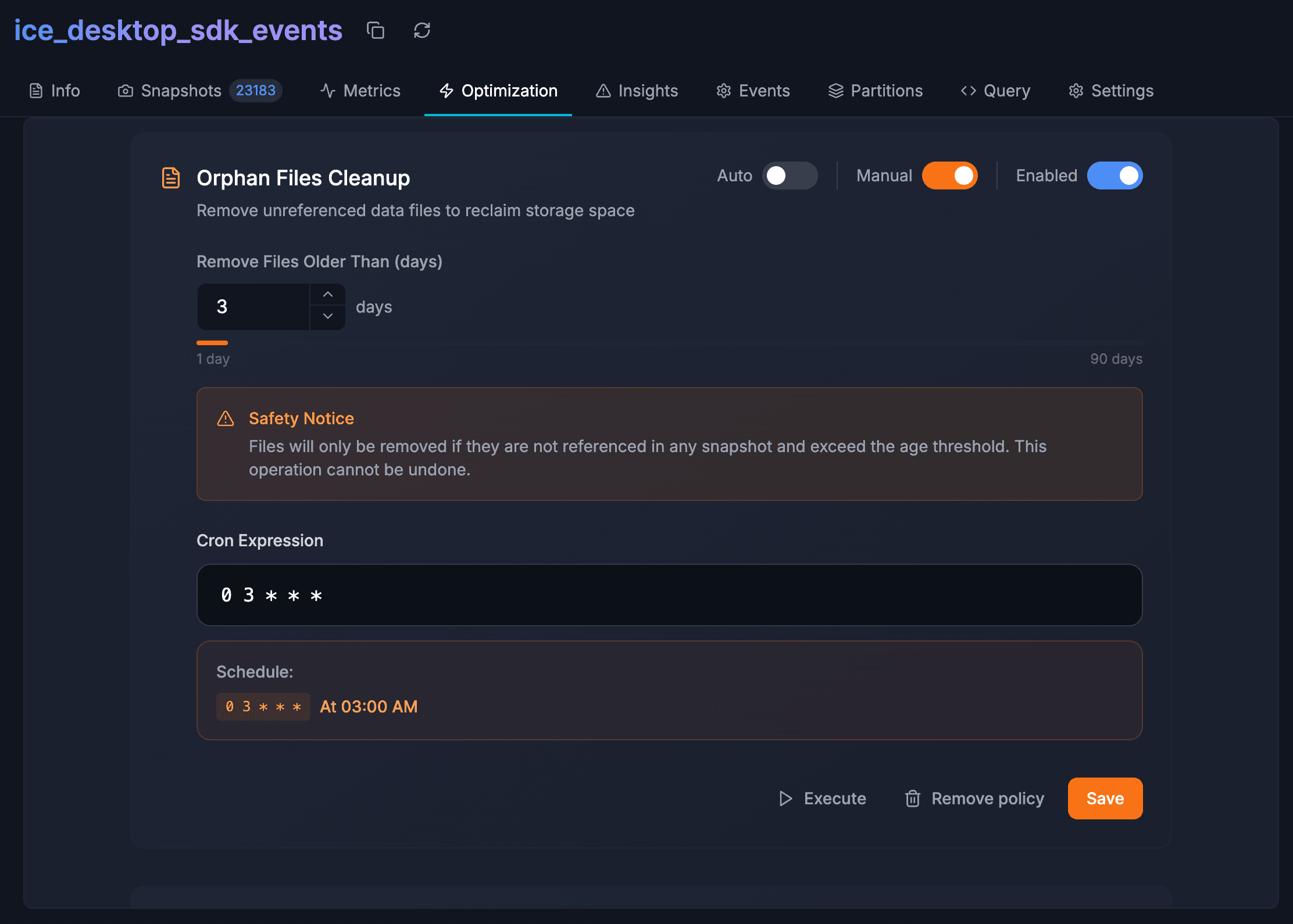

5. Orphan file cleanup

Every failed job, aborted write, and interrupted compaction leaves behind data files on object storage that no Iceberg snapshot points to. These orphan files are invisible to query engines but fully visible to the cloud billing system. Over months of production activity, they accumulate to surprising volumes — one customer discovered roughly 200 TB of unreferenced data across their lake, costing thousands of dollars per month in pure waste.

Removing orphans safely is harder than it sounds. A cleanup script that compares storage listings against current metadata can accidentally delete files belonging to in-progress writes. The control plane solves this by enforcing a configurable age buffer — only files that have been unreferenced for a minimum period (e.g., 7 days) are candidates. Cleanup runs are scheduled after snapshot expiration so that files newly dereferenced by the expiration pass are included in the same sweep.
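
The age-buffer rule can be sketched as a set difference with a time cutoff. In the Python example below, `find_orphan_candidates` is a hypothetical helper; it uses each object's last-modified time as a proxy for how long the file has been unreferenced, which is an assumption on our part, and the paths are made up for illustration.

```python
from datetime import datetime, timedelta


def find_orphan_candidates(storage_listing, referenced_paths, now,
                           min_age=timedelta(days=7)):
    """Return paths that no snapshot references and that are older than the buffer.

    storage_listing: dict of path -> last-modified datetime from object storage.
    referenced_paths: set of paths reachable from any live snapshot.
    """
    cutoff = now - min_age
    return sorted(
        path
        for path, last_modified in storage_listing.items()
        if path not in referenced_paths and last_modified < cutoff
    )


now = datetime(2025, 1, 31)
listing = {
    "data/part-0001.parquet": datetime(2025, 1, 1),   # referenced, keep
    "data/part-0002.parquet": datetime(2025, 1, 2),   # unreferenced and old
    "data/part-0003.parquet": datetime(2025, 1, 30),  # unreferenced but recent
}
live = {"data/part-0001.parquet"}
candidates = find_orphan_candidates(listing, live, now)
```

The recent file is left alone even though nothing references it yet, because it may belong to a write that has not committed; only the old unreferenced file becomes a deletion candidate.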

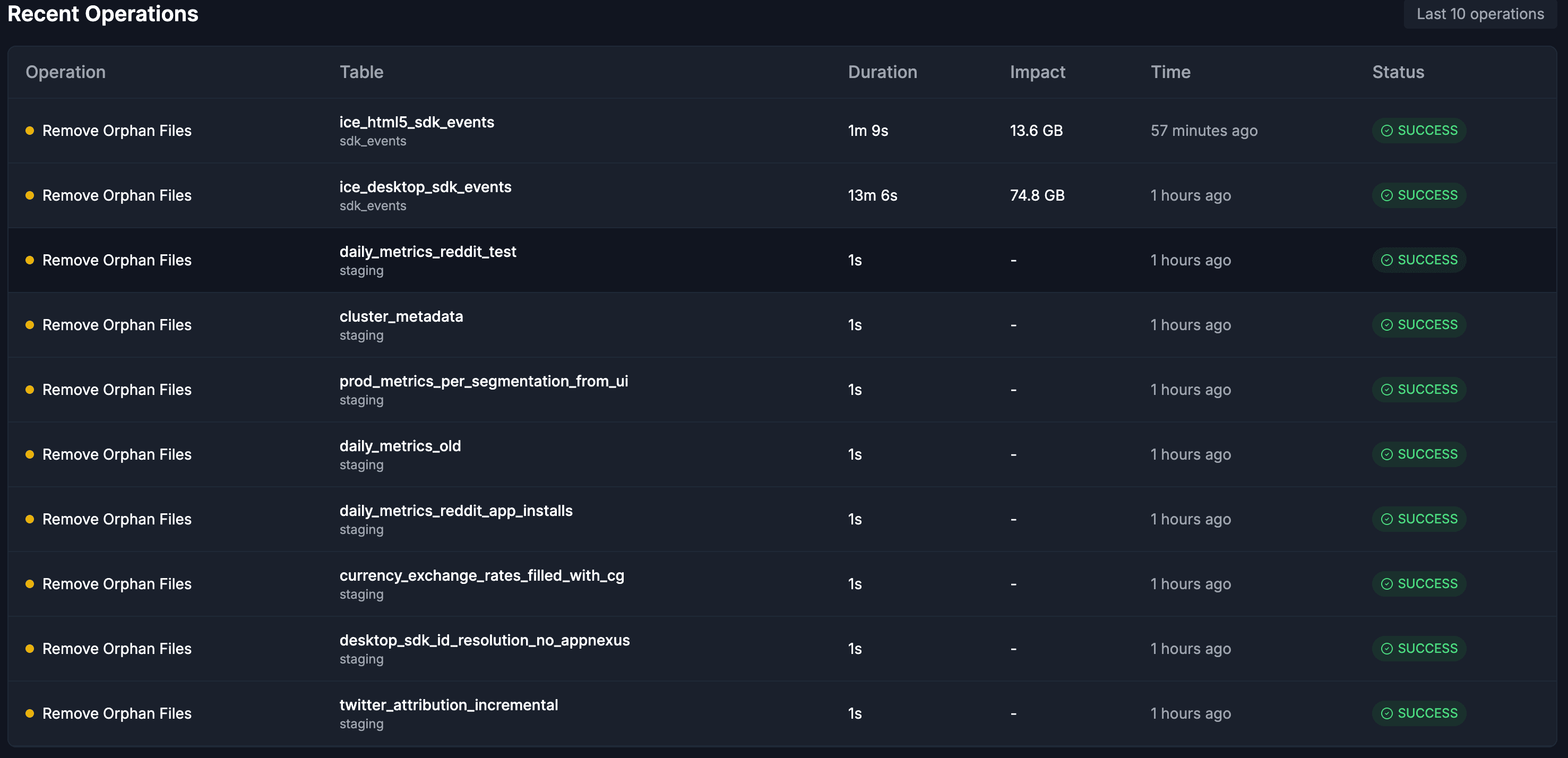

Across a typical fleet cleanup, the control plane processes hundreds of tables in minutes. Large tables with tens of thousands of orphaned files are cleaned in a single pass, while smaller tables complete in under a second. Every operation is logged with its file count, storage reclaimed, and duration.

6. Organization-wide policies and governance

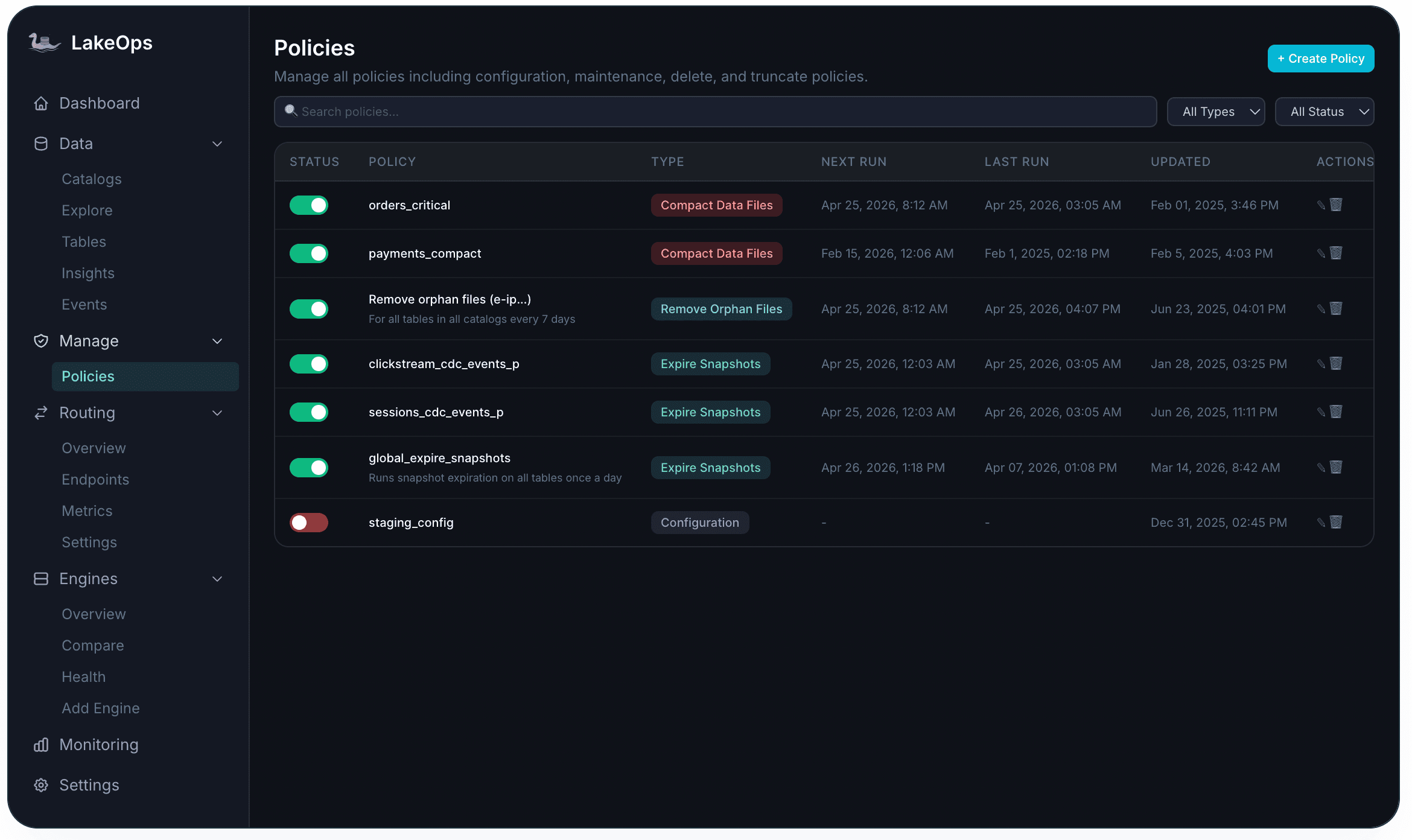

Optimizing one table at a time does not scale. When a lake has hundreds of tables spread across multiple catalogs, with different teams expecting different retention windows and compaction cadences, the maintenance rules need to be declared once and enforced everywhere.

The control plane provides a policy engine that supports six maintenance operation types — from snapshot expiration and orphan removal to compaction, manifest rewrites, and delete file optimization. A separate configuration policy type governs how new tables should be structured and managed by default. Policies are created through a wizard, scheduled with cron expressions, and visible on a single dashboard with status, last run, and next run for every active rule.

Policies follow a specificity hierarchy: a rule set at the table level takes precedence over a namespace default, which takes precedence over a catalog-wide baseline. The scheduler is aware of concurrent writers and will not start a maintenance operation that could conflict with an active pipeline. Every execution is logged with full auditability — what ran, when, what changed, and what it cost. For more on how these policies translate into measurable savings, see Apache Iceberg Cost Optimization.
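
The specificity hierarchy amounts to a most-specific-match lookup. The Python sketch below assumes policies are keyed by `(catalog, namespace, table)` tuples with `None` as a wildcard; that keying scheme, the `resolve_policy` function, and the example policy fields are illustrative assumptions rather than the product's actual data model.

```python
def resolve_policy(policies, catalog, namespace, table):
    """Return the most specific policy that applies to a table.

    Lookup order: table-level rule, then namespace default, then
    catalog-wide baseline. Returns None when nothing matches.
    """
    scopes = (
        (catalog, namespace, table),
        (catalog, namespace, None),
        (catalog, None, None),
    )
    for scope in scopes:
        if scope in policies:
            return policies[scope]
    return None


policies = {
    ("glue", None, None): {"expire_after_days": 7},
    ("glue", "finance", None): {"expire_after_days": 30},
    ("glue", "finance", "ledger"): {"expire_after_days": 365},
}
ledger = resolve_policy(policies, "glue", "finance", "ledger")
invoices = resolve_policy(policies, "glue", "finance", "invoices")
clicks = resolve_policy(policies, "glue", "web", "clicks")
```

With this lookup, a team can declare one catalog-wide baseline and override it only where a namespace or a single table genuinely needs different retention.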

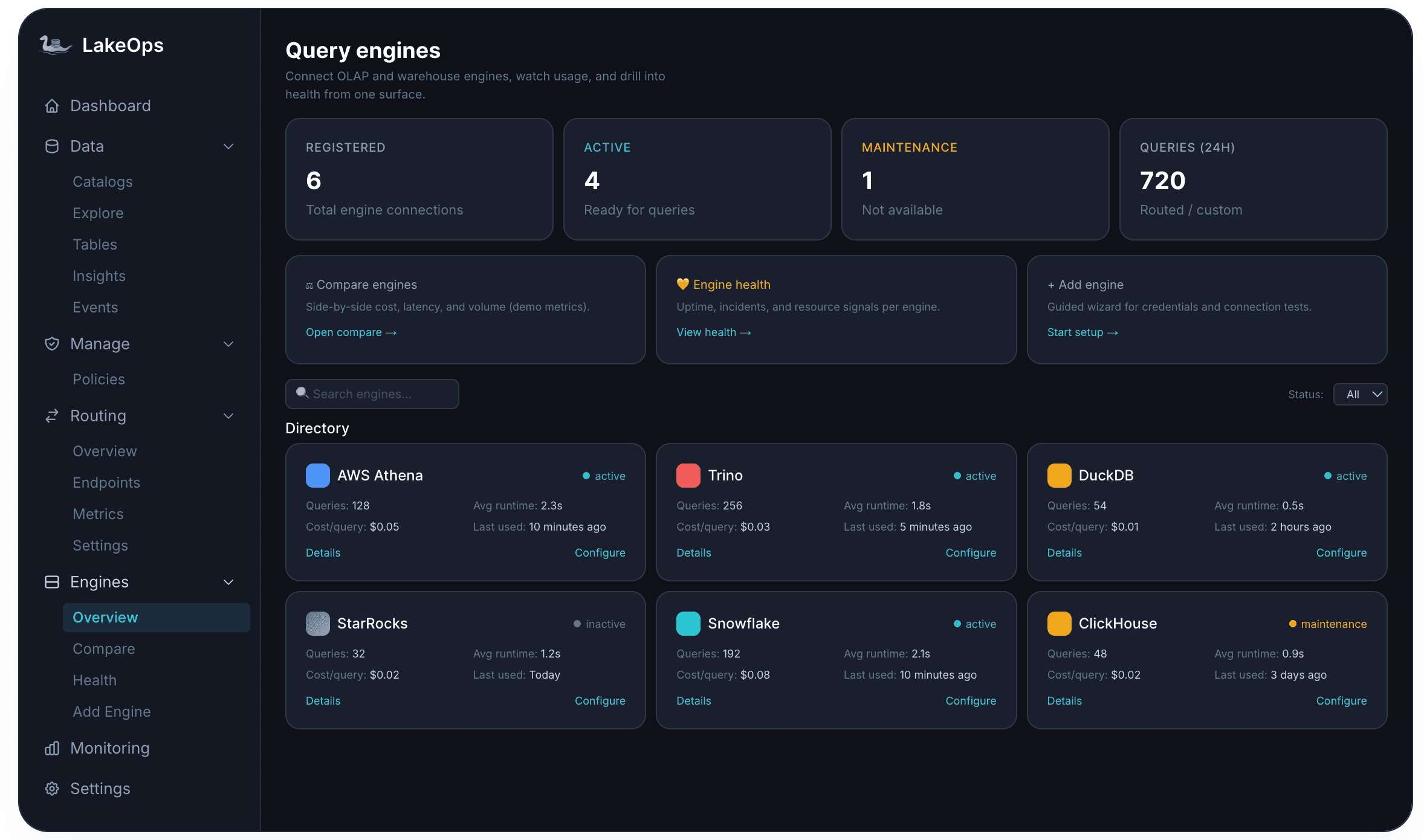

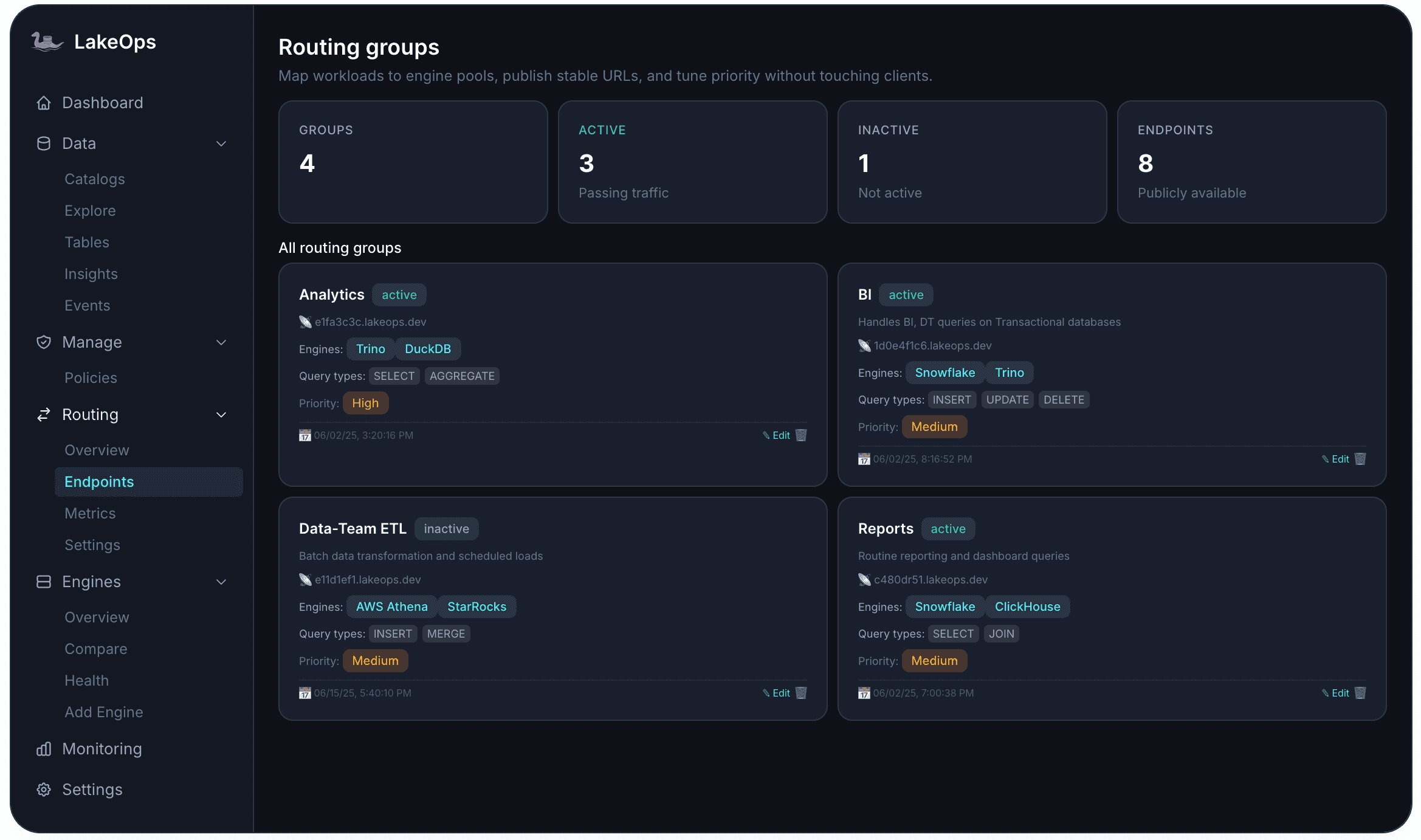

7. Multi-engine query routing

Most production lakehouses run several engines simultaneously — fast OLAP engines for dashboards, heavyweight engines for ETL, lightweight engines for ad-hoc exploration. The problem is that without a routing layer, engine selection is left to the user. Teams default to whichever engine they know, regardless of whether it is the cheapest or fastest option for that query shape. A simple scan that costs a fraction of a cent on DuckDB runs on Snowflake at full credit price because nobody configured an alternative.

The control plane introduces a unified query routing layer that evaluates each incoming query against engine cost, latency history, current load, and table health — then routes it to the best available engine automatically. Three strategies are available: Cost-optimized (cheapest engine that meets latency requirements), Latency-optimized (fastest response regardless of cost), and Throughput-balanced (distributes load across available capacity).
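
The three strategies can be sketched as different selection keys over the same engine pool. In the Python example below, the `Engine` fields, the `route` function, and all numbers are hypothetical; a real router would also take table health and query shape into account, as described above.

```python
from dataclasses import dataclass


@dataclass
class Engine:
    name: str
    cost_per_query_usd: float
    p95_latency_ms: float
    load: float  # 0.0 idle .. 1.0 saturated


def route(engines, strategy, max_latency_ms=None):
    """Pick an engine according to the routing strategy."""
    if strategy == "cost":
        # Cheapest engine that still meets the latency requirement.
        pool = [e for e in engines
                if max_latency_ms is None or e.p95_latency_ms <= max_latency_ms]
        if not pool:
            raise LookupError("no engine meets the latency requirement")
        return min(pool, key=lambda e: e.cost_per_query_usd)
    if strategy == "latency":
        # Fastest response regardless of cost.
        return min(engines, key=lambda e: e.p95_latency_ms)
    if strategy == "throughput":
        # Send work to the least loaded engine.
        return min(engines, key=lambda e: e.load)
    raise ValueError(f"unknown strategy: {strategy}")


pool = [
    Engine("embedded", 0.001, 900, 0.2),
    Engine("warehouse", 0.05, 150, 0.7),
    Engine("cluster", 0.02, 400, 0.1),
]
```

For example, `route(pool, "cost", max_latency_ms=500)` picks `cluster`, because the cheapest engine misses the latency bound, while `route(pool, "latency")` picks `warehouse`.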

Each routing group exposes a stable endpoint URL that applications and agents connect to directly. The endpoint defines which engines are in the pool, which query types it accepts, and at what priority. A dashboard analytics group might route SELECT and AGGREGATE traffic to low-latency engines, while a batch ETL group routes INSERT and MERGE to cost-efficient compute. Every endpoint inherits the governance and policy stack — no separate configuration needed.

8. Agentic AI readiness

AI agents interact with data differently than humans. They issue SQL in rapid iterative loops, generate query shapes that no dashboard would produce, and expect sub-second responses from tables that were sized for nightly batch runs. Without infrastructure that accounts for this pattern, agent queries hit uncompacted tables, scan excessive data, generate unpredictable costs, and potentially expose sensitive columns to LLM context windows.

The control plane provides a purpose-built agent interface using the Model Context Protocol (MCP), with schema-aware tooling, async query execution, and wire compatibility across PostgreSQL, MySQL, and Arrow Flight. Safety is enforced through layered guardrails: read-only mode prevents DDL/DML, cost-estimation gates reject queries exceeding scan thresholds, PII masking hashes or excludes sensitive columns before results reach the model, and human-approval gates pause high-stakes operations for review.
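
Layered guardrails of this kind can be pictured as a chain of checks that a query must pass before it runs, plus a masking step on the way out. The Python sketch below is deliberately naive and entirely hypothetical: `check_query` inspects only the first SQL keyword (a real gate would parse the statement), the scan estimate is passed in rather than computed, and `mask_row` hashes sensitive values with SHA-256.

```python
import hashlib

READ_ONLY_KEYWORDS = {"select", "explain"}


def check_query(sql, estimated_scan_bytes, max_scan_bytes):
    """Return (allowed, reason) after the read-only and cost gates."""
    words = sql.strip().split(None, 1)
    first = words[0].lower() if words else ""
    if first not in READ_ONLY_KEYWORDS:
        return False, "read-only mode: only SELECT and EXPLAIN are allowed"
    if estimated_scan_bytes > max_scan_bytes:
        return False, "cost gate: estimated scan exceeds the threshold"
    return True, "ok"


def mask_row(row, pii_columns):
    """Replace sensitive values with a short hash before they reach the model."""
    return {
        column: hashlib.sha256(str(value).encode("utf-8")).hexdigest()[:12]
        if column in pii_columns else value
        for column, value in row.items()
    }


allowed, reason = check_query("SELECT * FROM orders", 5_000, 1_000_000)
masked = mask_row({"id": 1, "email": "ada@example.com"}, {"email"})
```

Hashing rather than dropping the column keeps joins and group-bys on the masked value possible, at the cost of leaking equality; excluding the column entirely is the stricter option.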

The architecture creates a feedback loop: the compaction engine sees agent query telemetry alongside human query telemetry, and adjusts table layouts accordingly. As agent usage grows, the tables they access most are compacted first, with sort orders aligned to the predicates agents actually use. The lake adapts to AI workloads automatically rather than requiring manual tuning for each new agent deployment.

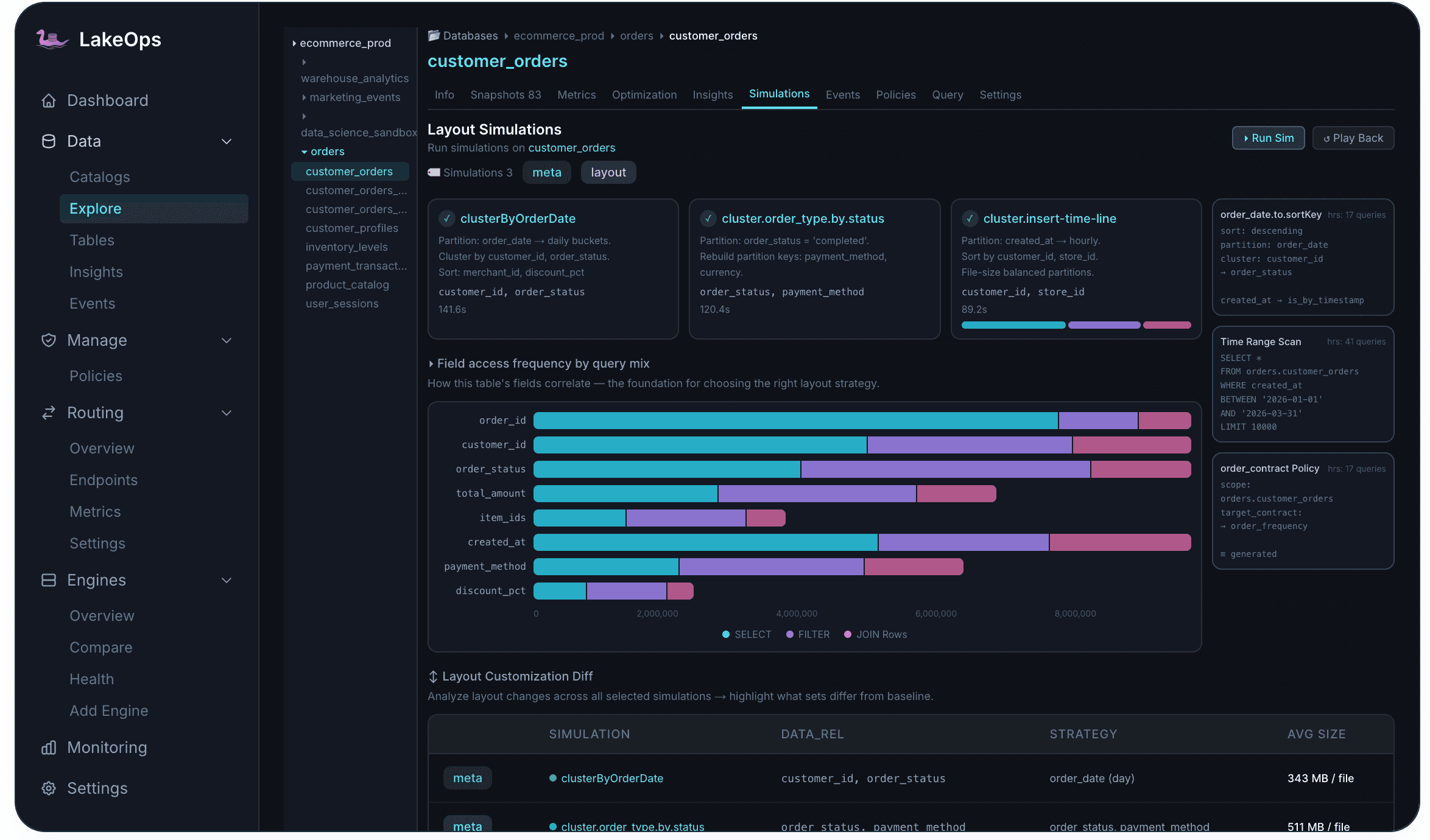

9. Layout simulations: test before you commit

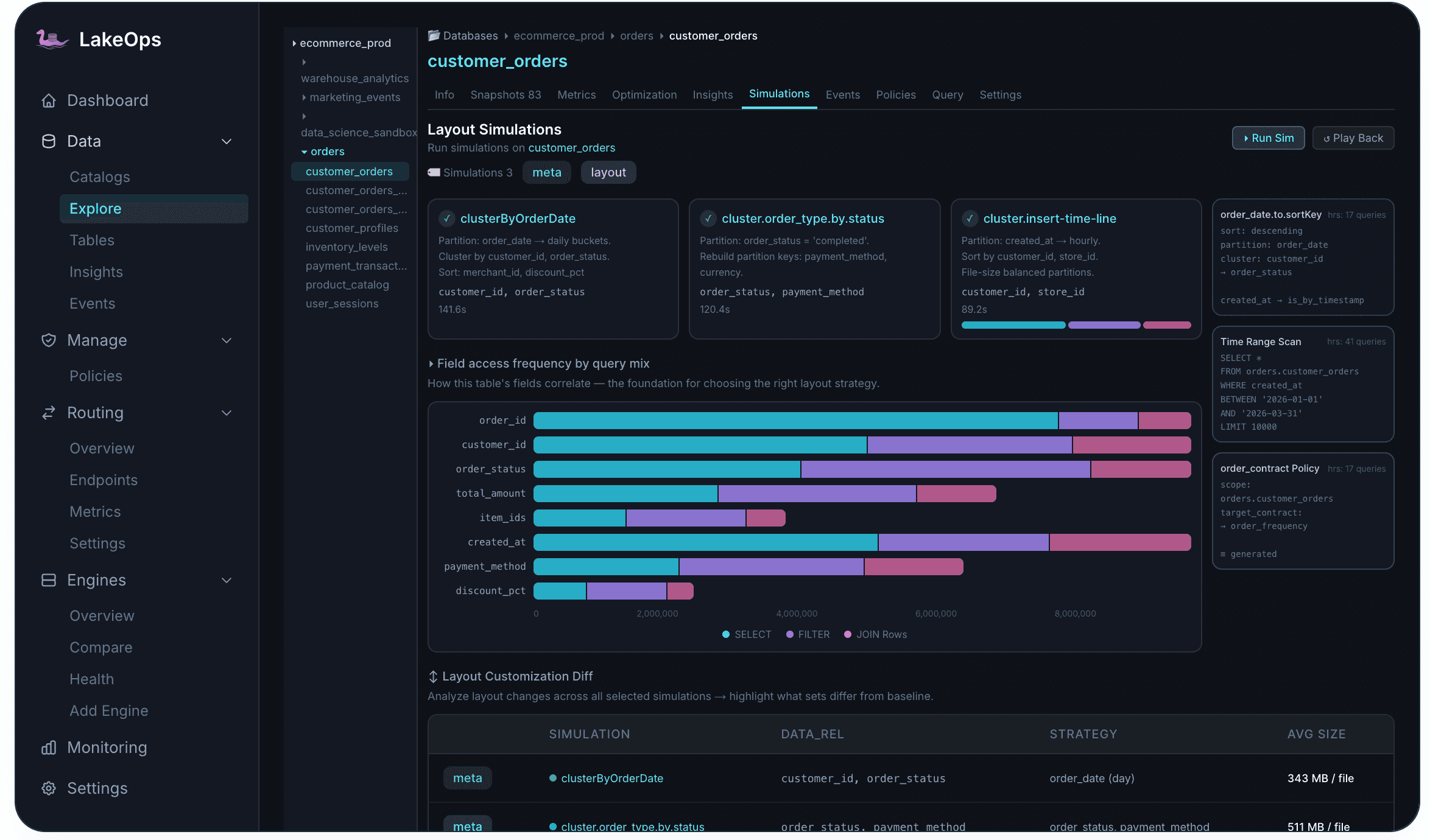

Choosing the wrong sort order or partitioning scheme can make things worse, not better. The control plane provides layout simulations: a safe, branch-based testing environment that evaluates layout changes before applying them to production.

Simulations run on a real Iceberg branch created from the latest snapshot — layout changes are applied, query patterns are replayed, and results are compared against the current baseline. The branch is discarded afterward; no production data is modified. The Simulations tab shows field access frequency by query mix — how often each column appears in SELECT, FILTER, and JOIN operations — so you can evaluate exactly how each approach changes data distribution before committing to a potentially expensive rewrite.

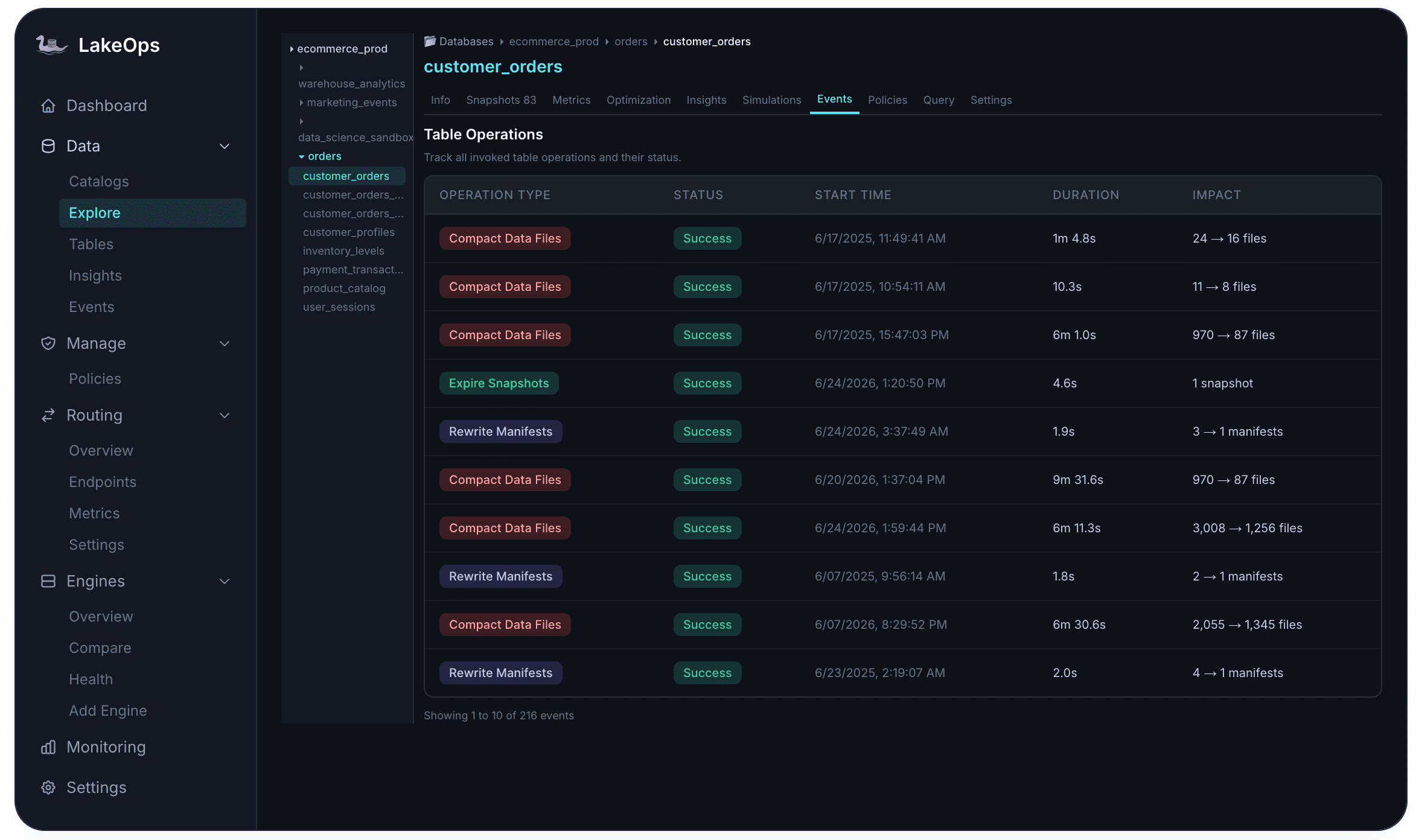

Why the order of operations matters

Running maintenance is necessary. Running it in the right sequence is what makes it efficient.

When operations run independently (a cron-scheduled compaction here, an Airflow-triggered expiration there), they interfere with each other in subtle ways. Compaction rewrites files that expiration is about to dereference. Orphan cleanup runs before expiration finishes and misses the files that expiration frees afterward. Manifest rewrites target a file layout that compaction is still changing. The net result: wasted compute, incomplete cleanup, and a maintenance layer that consumes engineering time rather than saving it.

The control plane eliminates this by running operations as a coordinated pipeline. Expiration goes first, trimming the snapshot tree and marking data files for release. Orphan cleanup follows, sweeping up the files that expiration just unreferenced. Compaction then runs against the clean, current dataset — never merging files that are about to be deleted. Finally, manifest optimization consolidates the metadata layer against the final compacted layout. The output of each stage feeds directly into the next.
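
The ordering described above can be sketched as a fixed stage sequence that the runner enforces no matter how the operations were requested. In the Python example below, the stage names loosely follow Iceberg's Spark maintenance procedures, while `run_maintenance` and the stage callables are hypothetical placeholders for the real work.

```python
STAGE_ORDER = (
    "expire_snapshots",     # trim the snapshot tree first
    "remove_orphan_files",  # sweep files that expiration dereferenced
    "rewrite_data_files",   # compact only data that is still live
    "rewrite_manifests",    # consolidate metadata for the final layout
)


def run_maintenance(table, stages, requested=None):
    """Run the requested stages in pipeline order and return what ran.

    stages: dict mapping stage name -> callable taking the table.
    requested: iterable of stage names; defaults to the full pipeline.
    """
    requested = set(requested) if requested is not None else set(STAGE_ORDER)
    unknown = requested - set(STAGE_ORDER)
    if unknown:
        raise ValueError(f"unknown stages: {sorted(unknown)}")
    executed = []
    for name in STAGE_ORDER:
        if name in requested:
            stages[name](table)
            executed.append(name)
    return executed


# Placeholder stages that only record their invocation.
calls = []
stages = {name: (lambda table, n=name: calls.append((n, table))) for name in STAGE_ORDER}
ran = run_maintenance(
    "analytics.events", stages,
    requested=["rewrite_manifests", "expire_snapshots", "rewrite_data_files"],
)
```

Even though the caller listed manifest rewrites first, the runner executes expiration, then compaction, then manifest rewrites, so each stage sees the output of the one before it.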

The cumulative effect is larger than any single optimization. Storage costs fall because dead data is removed in order. Compute costs fall because every engine reads less data after layout-aligned compaction. Metadata overhead falls because manifests are lean and current. The lake does not just get cleaned up; it stays clean, continuously, without manual intervention.

Every operation is tracked in the Events tab with full auditability — operation type, status, start time, duration, and per-operation impact (files consolidated, storage reclaimed, snapshots expired). This audit trail makes it straightforward to demonstrate the value of autonomous maintenance to stakeholders.

Getting connected

None of this requires a migration. LakeOps plugs into the catalogs and storage you already run — the setup process takes about ten minutes and does not touch your data, your pipelines, or your infrastructure. The control plane reads metadata and telemetry; your data never leaves your account.

Catalog support covers AWS Glue, DynamoDB-backed catalogs, REST-compliant catalogs (Polaris, Gravitino, Nessie, Lakekeeper), and S3 Tables. After connecting, the platform discovers every table, scores its health, and either starts autonomous optimization or waits for you to approve operations manually — your choice. For a full walkthrough of every capability end to end, see the Managed Iceberg in 2026 deep dive.

The swamp was always an operations problem

The data swamp was never about bad technology choices. It was about the absence of an operational layer. Hadoop-era lakes lacked reliability. Iceberg solved that. But reliability without operations is a lakehouse that degrades slowly back toward a swamp.

A control plane closes the loop. The data stays on your storage. Engines are chosen per workload. Catalogs provide the registry. And the control plane handles everything else — observability, compaction, snapshot lifecycle, manifest health, orphan cleanup, policies, routing, and AI readiness — turning open-format reliability into a managed, self-optimizing platform without the lock-in of a closed ecosystem.

That is the path from swamp to modern lakehouse. Not a migration. Not a new platform. An operational layer that makes everything you already have work the way it should. That is what LakeOps was built for.